From Writing Code to Prompting It

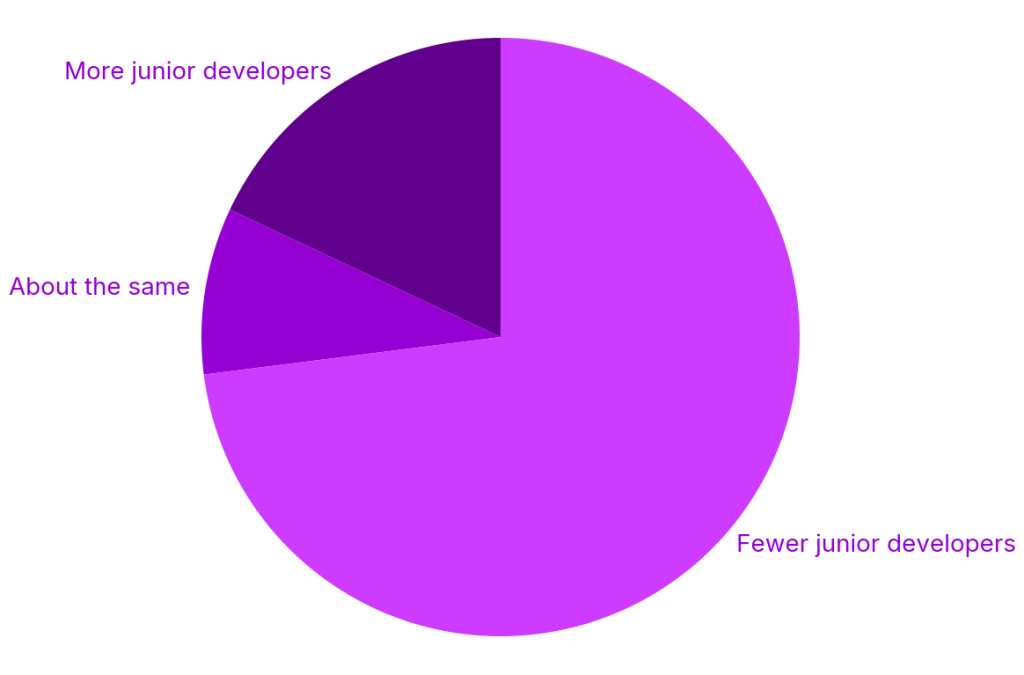

AI code generation tools have turned programming into a prompt-and-verify workflow. Developers can now ship features by describing intent to an AI assistant, which produces compilable, test-passing code in minutes. Industry research cited by Octopus Deploy shows juniors completing tasks up to 55% faster with AI assistance, while many organizations cut back on junior hiring in favor of “seniors with AI.” This shift decouples code output from developer understanding: the person shipping a change has often never walked through the logic line by line. Seasoned engineers can draw on years of architectural context to validate AI-generated code. For newer developers, the speed gain hides a dangerous gap. They are learning how to orchestrate tools rather than how to reason about data structures, concurrency, or failure modes, and the absence of those skills shows only when something breaks in production.

The New Code Review Crisis: Clean Diffs, Shallow Understanding

Engineering leaders are increasingly worried that code review challenges are no longer about style or tests, but about comprehension. Reviewers encounter careful, well-structured patches—sometimes generated largely by AI—that hide subtle bugs, such as timing issues that only appear when events align at precisely the wrong moment. When questions arise, some juniors cannot explain why the code behaves as it does because they did not truly author the solution. Leaders describe a new kind of “expert beginner”: fast, conscientious developers whose pull requests look solid but whose mental model of the system is thin. The oversight gap shows up in reviews, where seniors must interrogate not just the diff, but the human’s understanding of it. Teams are discovering that the bottleneck has moved from writing code to validating that both the code and the author’s reasoning are robust enough for complex systems.

Developers Warn of Cognitive Decline and AI Fatigue

Beyond management concerns, developers themselves say AI-assisted programming is affecting how they think. On technical forums, many describe a creeping sense of de-skilling, feeling their “brain is rotting” as they reach for AI for every problem instead of working through solutions manually. Some report that AI-generated code is often flawed or awkwardly structured, turning simple tasks into tedious cycles of suggesting, inspecting, and patching model output. Others, under pressure from leadership, are told to use AI agents for broad codebase changes they cannot feasibly review in full, raising fears of accumulating hidden technical debt. The experience is cognitively disorienting: engineers remain responsible for outcomes but increasingly act as intermediaries between product requirements and opaque machine-generated implementations. This erodes confidence, weakens debugging muscles, and makes it harder to maintain the deep familiarity with codebases that used to be a core part of senior craftsmanship.

Rising AI Adoption Meets Persistent Distrust

Even highly experienced developers are adopting AI coding tools, though many remain wary of their reliability. The Standard C++ Foundation’s latest survey reports that nearly 40% of respondents frequently use AI for writing code, with increased use for test generation and debugging as well. Yet a substantial share still rarely or never uses AI, citing incorrect output, lack of trust, data privacy concerns, and cost. Respondents note that AI often struggles with large projects and complex build systems—the exact contexts where mistakes are hardest to detect. This combination of rising usage and low trust creates a precarious dynamic: teams lean on AI to move faster, but know they must double-check everything. In practice, that often means senior engineers spend more time auditing AI suggestions, while less experienced developers lack the intuition to spot when an apparently clean solution is subtly wrong.

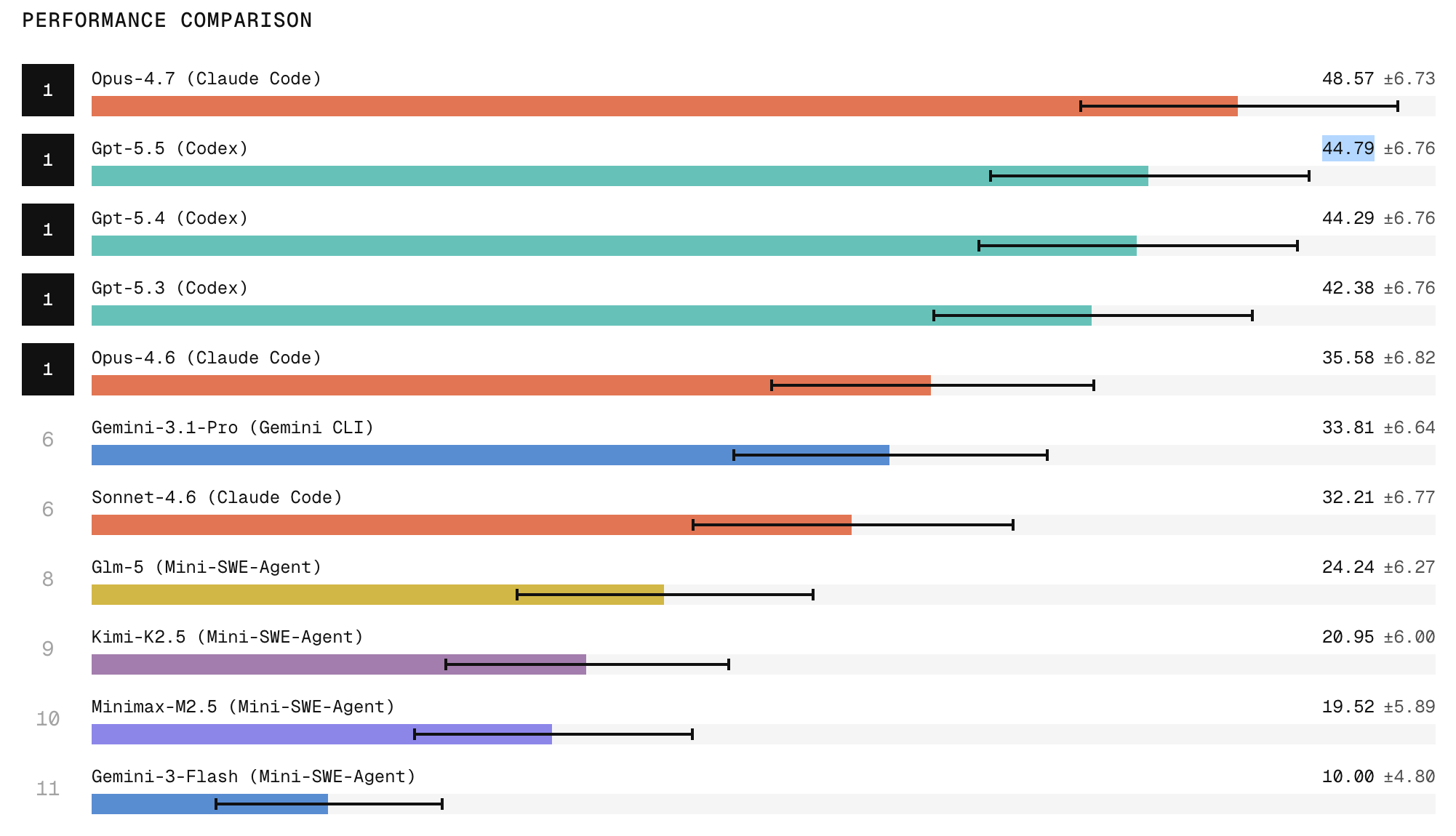

Benchmarks Reward AI Agents—But Not Human Skill Growth

Vendors are racing to prove that AI agents can handle serious engineering work, but their metrics rarely consider the human side. Scale Labs’ SWE Atlas Refactoring Leaderboard, for example, evaluates whether agents can restructure production-style codebases, preserve behavior, and clean up artifacts across many files. Top models like Claude Code with Opus 4.7 and ChatGPT 5.5 lead on decomposing monoliths, extracting shared modules, and improving interfaces. These benchmarks show AI can perform complex refactors at scale, often touching twice as many lines and far more files than traditional coding tasks. What they do not measure is how developers working alongside these agents build—or lose—core skills like architectural reasoning and debugging. Engineering leaders are starting to respond by pairing AI usage with explicit learning goals, more rigorous code reviews, and policies that require humans to be able to explain any change they ship in their own words.