Physical AI Factory: From Demos to Live Industrial Workflows

Physical AI is no longer confined to stage demos. At a Siemens electronics factory in Erlangen, the HMND 01 humanoid robot from Humanoid, built on the NVIDIA physical AI stack, already performs autonomous logistics tasks on the live production floor. The deployment shows the robot navigating around workers and machines while tying into Siemens’ existing automation systems, moving physical AI from simulation into day-to-day operations. At Hannover Messe, Siemens extended this narrative with a flexible shoe line where AI agents execute decisions rather than merely recommend them, underscoring a move from advisory analytics to operational autonomy. For manufacturers, this marks a quiet but profound transition: robots that perceive, decide and act in real time are becoming core infrastructure rather than experimental add-ons, redefining what a modern physical AI factory looks like.

Humanoid Robots in Warehouses: ERP Becomes a Control System

In warehouse operations, humanoid robots are starting to plug directly into enterprise backbones. At Vodafone Procure & Connect’s facility in Duisburg, a pilot by Accenture and SAP linked a humanoid robot to SAP Extended Warehouse Management (SAP EWM). The robot received inspection tasks from SAP, then autonomously roamed the aisles to spot misplaced or damaged products, unsafe pallet stacking, unused storage capacity and hazards such as obstacles or misaligned pallets. Findings and recommendations flowed back into SAP in real time, turning EWM from a passive system of record into an active system of operational control. Accenture’s Robot Brain and digital twin training provided the physical AI intelligence, while SAP’s Joule AI execution fabric grounded robot actions in business logic and auditable data. Pilots like this one foreshadow new automation governance models, in which ERP orchestrates both people and embodied AI as a single, integrated workforce.
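The task-and-findings loop described above can be sketched in a few lines of Python. This is only an illustration of the pattern, not the pilot's implementation: `WarehouseSystem` is an in-memory stand-in rather than any SAP EWM interface, and every name here (`InspectionTask`, `Finding`, `FindingType`, `run_inspection_round`) and every recommendation string is a hypothetical chosen for the example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional


class FindingType(Enum):
    # Categories taken from the issues the article says the robot looked for.
    MISPLACED_PRODUCT = "misplaced_product"
    DAMAGED_PRODUCT = "damaged_product"
    UNSAFE_STACKING = "unsafe_stacking"
    UNUSED_CAPACITY = "unused_capacity"
    OBSTACLE = "obstacle"


# Hypothetical recommendation text; a real system would derive this from business rules.
RECOMMENDATIONS: Dict[FindingType, str] = {
    FindingType.MISPLACED_PRODUCT: "Create a relocation task",
    FindingType.DAMAGED_PRODUCT: "Block stock and request quality check",
    FindingType.UNSAFE_STACKING: "Restack pallet before next pick",
    FindingType.UNUSED_CAPACITY: "Mark bin as available for putaway",
    FindingType.OBSTACLE: "Clear aisle and notify shift lead",
}


@dataclass(frozen=True)
class InspectionTask:
    task_id: str
    aisle: str


@dataclass(frozen=True)
class Finding:
    task_id: str
    aisle: str
    kind: FindingType
    recommendation: str


@dataclass
class WarehouseSystem:
    """In-memory stand-in for the warehouse management system (hypothetical)."""

    pending: List[InspectionTask] = field(default_factory=list)
    findings: List[Finding] = field(default_factory=list)

    def next_task(self) -> Optional[InspectionTask]:
        # Hand out tasks in the order they were queued.
        return self.pending.pop(0) if self.pending else None

    def report(self, finding: Finding) -> None:
        # Findings are written back so the system of record stays current.
        self.findings.append(finding)


def run_inspection_round(
    wms: WarehouseSystem, observations: Dict[str, List[FindingType]]
) -> int:
    """Pull every pending task, 'inspect' its aisle, and report findings.

    `observations` stands in for the robot's perception output per aisle.
    Returns the number of tasks completed.
    """
    completed = 0
    while (task := wms.next_task()) is not None:
        for kind in observations.get(task.aisle, []):
            wms.report(Finding(task.task_id, task.aisle, kind, RECOMMENDATIONS[kind]))
        completed += 1
    return completed


if __name__ == "__main__":
    wms = WarehouseSystem(pending=[InspectionTask("T1", "A1"), InspectionTask("T2", "A2")])
    seen = {"A1": [FindingType.OBSTACLE], "A2": [FindingType.UNSAFE_STACKING]}
    run_inspection_round(wms, seen)
    for f in wms.findings:
        print(f.task_id, f.aisle, f.kind.value, "->", f.recommendation)
```

The point of the sketch is the direction of data flow: tasks originate in the business system, and every observation returns to it as a structured, auditable record instead of staying on the robot.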

Industrial AI Edge Computing: The New Control Layer for Embodied Systems

Scaling embodied AI is forcing manufacturers to rethink industrial AI edge computing. High-bandwidth sensor streams, multi-camera vision and on-robot generative models demand low-latency, power-efficient compute close to the factory floor. NVIDIA’s Jetson and IGX platforms, with deeply tuned memory optimizations, are being positioned as the edge “nervous system” for physical AI agents, with the goal of running multi-billion-parameter models within strict power and thermal envelopes without latency spikes or failures. At Hannover Messe, NVIDIA and partners demonstrated how accelerated edge and cloud infrastructure underpin digital twins, autonomous agents and humanoid robotics in live factory environments, while Deutsche Telekom’s Industrial AI Cloud keeps sensitive telemetry and design data within compliant boundaries. Together, these deployments sketch a reference stack: optimized edge silicon and software for real-time control, coupled to cloud-scale simulation and orchestration layers that coordinate robot fleets and continuously retrain models on operational data.
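To see why memory optimization matters for multi-billion-parameter models at the edge, a back-of-the-envelope calculation helps. The sketch below estimates weight storage only, as parameter count times bits per parameter. The 7-billion-parameter model, the 8 GB device budget and the 20% headroom are illustrative assumptions, not figures from NVIDIA or from any deployment mentioned here, and the estimate ignores activations, caches and runtime overhead.

```python
def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


def fits_budget(
    num_params: int,
    bits_per_param: int,
    budget_gb: float,
    headroom: float = 0.2,
) -> bool:
    """Check whether weights fit after reserving a fraction of memory.

    `headroom` is an assumed reserve for activations and the runtime;
    the right value depends on the model and the software stack.
    """
    usable_gb = budget_gb * (1.0 - headroom)
    return weight_memory_gb(num_params, bits_per_param) <= usable_gb


if __name__ == "__main__":
    params = 7_000_000_000  # illustrative model size
    budget = 8.0            # illustrative device memory in GB
    for bits in (16, 8, 4):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_budget(params, bits, budget) else "does not fit"
        print(f"{bits:>2}-bit weights: {size:.1f} GB, {verdict} in {budget:.0f} GB")
```

Under these assumptions, halving the precision halves the weight footprint, which is one reason lower-precision formats feature so heavily in edge deployments.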

DEEPX–Hyundai: Building the ‘Edge Brain’ for Next-Gen Robots

At the silicon level, DEEPX and Hyundai Motor Group’s Robotics LAB are co-architecting a next-generation Physical AI compute platform for robotics. Centered on DEEPX’s DX-M2 Physical GenAI chip, the collaboration targets ultra-low-power AI semiconductor designs capable of running large-scale generative models directly on robots. The focus is on Vision-Language-Action (VLA) models and Vision-Language Models (VLMs), which enable robots to perceive via cameras, understand natural language and act autonomously in factories or logistics hubs. Beyond chips, the effort spans hardware systems, a Physical AI software stack and application libraries, effectively defining a robotics “edge brain” tailored for continuous, on-device inference. With projections that the Physical AI semiconductor market could reach roughly USD 123 billion (approx. RM566 billion) by 2030, and with robotics and humanoids as primary demand drivers, the DEEPX and Hyundai collaboration underlines how specialized silicon is becoming foundational to the emerging physical AI factory stack.

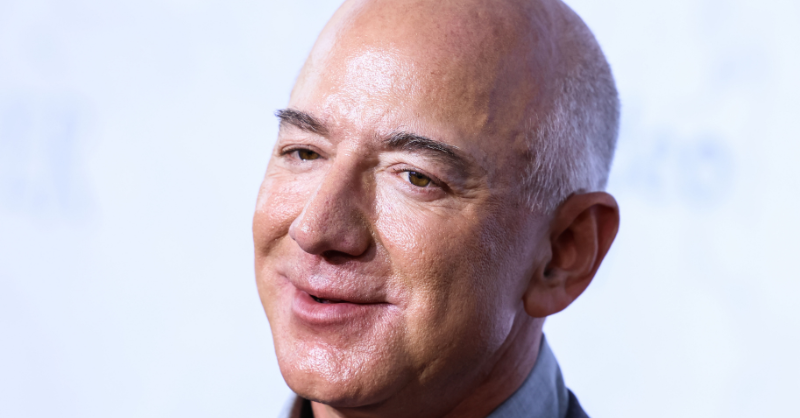

Capital and Cloud: Project Prometheus and the New Factory Stack

Large amounts of capital are now flowing into physical AI aimed explicitly at industrial use cases. Jeff Bezos’ Project Prometheus is close to securing a USD 10 billion (approx. RM46 billion) round, led by investors including JPMorgan and BlackRock, which would bring total funding to more than USD 16 billion (approx. RM73 billion). Prometheus trains models on real-world experimental data, robotics interactions and engineering workflows, with an emphasis on aerospace, automotive and advanced manufacturing. It also aims to buy up industrial businesses to feed operational data back into its models, effectively treating factories themselves as training assets. In parallel, NVIDIA and Google Cloud are co-engineering AI-optimized infrastructure for agentic and physical AI, from Blackwell-based GPUs to the Gemini Enterprise Agent Platform. Together, these moves hint at a standard stack: specialized AI silicon at the edge, industrial AI platforms in the cloud, and humanoid or mobile robots woven directly into MES and ERP systems.