From Code to Conversation: The Rise of Natural Language Programming

For decades, building software or robots meant mastering specialized programming languages, complex tools, and arcane workflows. Natural language programming inverts that model. Instead of writing code line by line, users describe goals and behaviors in plain English, and an AI agent translates those instructions into working software. This shift underpins a new wave of AI agent development, in which autonomous agents can be designed, tested, and deployed through conversation rather than in a code editor. The result is no-code agent control: people with domain knowledge but no engineering background can create assistants, tools, and robotic behaviors by stating what they want. As AI models become better at understanding context, chaining tools, and correcting their own output, plain English coding stops being a novelty and becomes a foundational interface for intelligent systems across apps, websites, and physical devices.

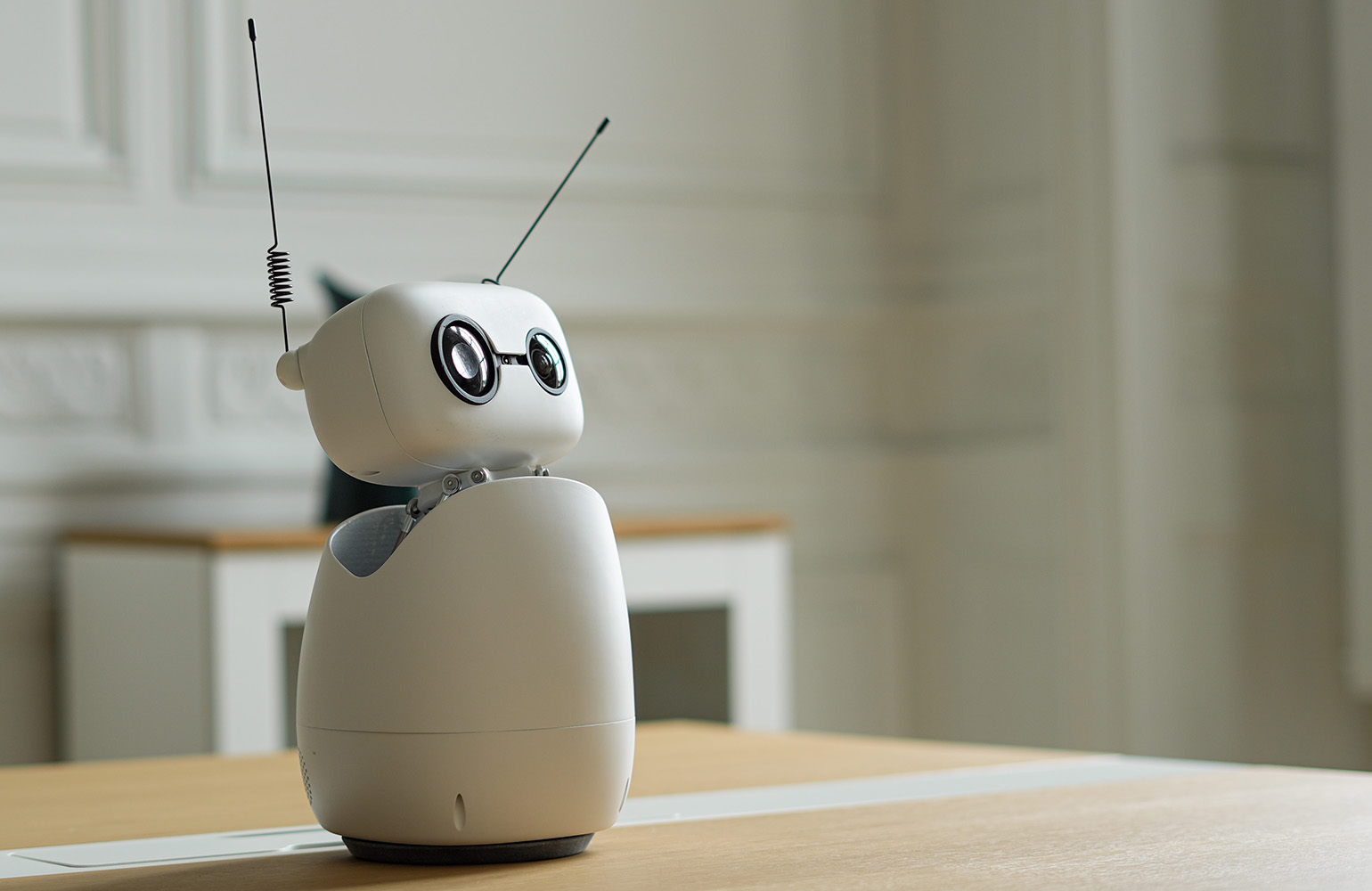

Hugging Face’s Reachy Mini: Robots You Program by Talking

Hugging Face’s agentic toolkit for the Reachy Mini robot shows how far natural language programming has come. Users don’t write a single line of code. They describe the behavior they want, such as a voice-controlled facilitator, a language tutor, or a playful chess partner, and an AI agent writes, tests, and ships the code directly to the robot. Work that once required robotics and software engineering expertise is handed to the agent, turning AI agent development into a guided conversation.

The Reachy Mini app ecosystem reinforces this shift. Apps live on the Hugging Face Hub, where anyone can duplicate an existing project, ask the agent to tweak it in plain English, and publish a customized version with one click. A browser-based simulator lets people experiment without owning the hardware, which makes no-code agent control accessible to educators, hobbyists, and professionals who want a useful desktop robot, not a new career in robotics.

Meta’s Hatch Agent: Natural Language Control for Multimodal Tasks

Meta’s upcoming Hatch agent extends plain English coding into rich, multimodal experiences. Positioned as a mainstream autonomous assistant, Hatch is being designed to handle image and video generation, shopping flows, learning sessions, and research tasks through natural language commands. Instead of juggling separate apps and settings, users could describe what they want (a personalized shopping journey, a study plan, or a content exploration workflow) and let the agent orchestrate the tools required.

A key differentiator is social grounding: Hatch is expected to integrate deeply with platforms like Instagram and Facebook, turning feed exploration, creator discovery, and shopping research into agent-driven workflows. By framing complex behaviors in everyday language, Meta aims to remove the barrier of programming knowledge and put AI agent development in the hands of people who may never open a code editor but who know exactly what outcomes they want from their digital experiences.

Democratizing AI Agents Across Hardware and Everyday Use Cases

Natural language interfaces are collapsing the distance between ideas and implementation across both software and hardware. On the desktop, Reachy Mini demonstrates how open-source robots can be retasked in minutes: new apps for facilitation, education, games, or productivity emerge from simple descriptions, not intricate codebases. Online, Meta’s Hatch aims to embed agentic behavior directly inside social feeds and shopping journeys, meeting users where they already spend their time.

This convergence signals a broader democratization of AI agent creation. Domain experts, teachers, creatives, and entrepreneurs can design agents tailored to their workflows by describing the desired behaviors in plain English, while AI handles the underlying engineering. As more platforms adopt no-code agent control and shareable app ecosystems, AI agents stop being niche tools for developers and become everyday collaborators that live on desks, in browsers, and inside social apps, built by the people who actually use them.