What OpenAI’s New Realtime Voice Models Actually Do

OpenAI has launched three realtime voice models through its Realtime API, each targeting a specific part of live audio workflows. GPT-Realtime-2 is the reasoning engine for spoken conversations, bringing GPT-5-class intelligence to voice interactions. It can track context across long calls, handle interruptions, respond to corrections, and call external tools in parallel while continuing to talk. GPT-Realtime-Translate handles live translation, converting speech from more than 70 input languages into 13 output languages while keeping pace with the speaker. GPT-Realtime-Whisper completes the stack with streaming transcription, turning speech into text continuously as users talk. By splitting reasoning, translation, and transcription into separate models, OpenAI lets developers tune latency, accuracy, and cost per task instead of forcing one monolithic model to handle every voice workload.

GPT-Realtime-2: GPT-5-Class Reasoning for Voice Agents

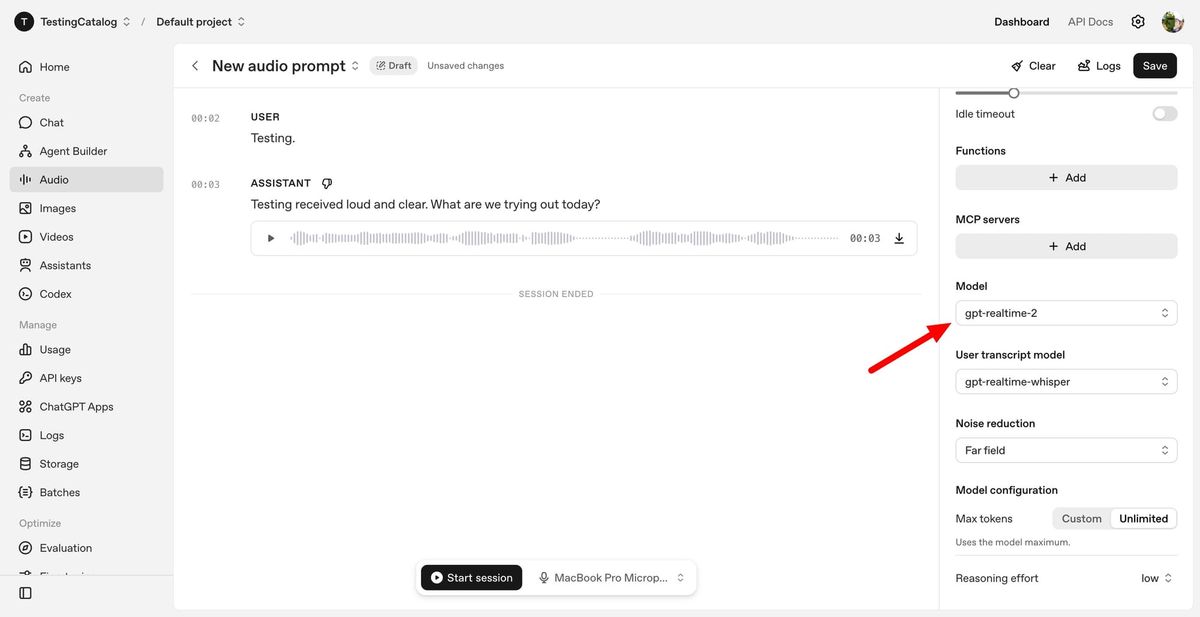

GPT-Realtime-2 is the flagship model for voice app development, designed to make assistants feel less like rigid IVR menus and more like natural conversational partners. It supports short spoken preambles such as “let me check that” while it calls tools in the background, so users aren’t left in silence during processing. The model also improves failure handling: when a task cannot be completed, it gives a clear verbal cue instead of dropping the conversation. A 128K token context window, up from 32K in earlier models, helps it maintain longer, more coherent sessions, even across tool hops and topic changes. Developers can dial reasoning effort from minimal to xhigh, choosing low-latency responses for simple queries or deeper analysis for complex tasks like troubleshooting or multi-step planning, all while keeping the conversation flowing in real time.
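To make the reasoning-effort dial concrete, here is a minimal sketch of how an app might pick a level per turn. It does not call any OpenAI API. The `Turn` type, the `choose_effort` heuristic, and the intermediate labels `low`, `medium`, and `high` are assumptions for illustration; the article names only the endpoints `minimal` and `xhigh`.

```python
from dataclasses import dataclass

# Assumed ordering: the article names only "minimal" and "xhigh";
# the labels in between are hypothetical placeholders.
EFFORT_LEVELS = ["minimal", "low", "medium", "high", "xhigh"]


@dataclass
class Turn:
    needs_tools: bool  # the turn is expected to call external tools
    steps: int         # rough estimate of reasoning steps required


def choose_effort(turn: Turn) -> str:
    """Pick an effort label for one turn (illustrative heuristic only)."""
    if turn.steps <= 1 and not turn.needs_tools:
        return "minimal"  # simple query: favor low latency
    if turn.steps <= 3:
        return "medium"   # some lookup or short plan
    return "xhigh"        # troubleshooting or multi-step planning


print(choose_effort(Turn(needs_tools=False, steps=1)))
print(choose_effort(Turn(needs_tools=True, steps=5)))
```

In practice the thresholds would be tuned against measured response times rather than fixed up front.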

GPT-Realtime-Translate: Live Translation AI for Multilingual Conversations

GPT-Realtime-Translate is tailored for live multilingual scenarios where latency and comprehension both matter. It listens and translates speech from over 70 languages into 13 output languages, aiming to keep up with speakers even when they talk quickly or shift topics mid-sentence. The model is built to handle regional accents, domain-specific terms, and context switches so translated responses stay accurate and meaningful. Developers can use it to power customer support lines that assist callers in their native language, cross-border sales calls where both parties hear translated speech, or educational tools that let teachers lecture in one language while students receive another. GPT-Realtime-Translate can also provide real-time transcriptions alongside translated audio, enabling hybrid experiences like bilingual captions for live events, creator streams, or media localization workflows without stitching together separate translation and transcription systems.

GPT-Realtime-Whisper: Streaming Transcription for Live Speech-to-Text

GPT-Realtime-Whisper brings streaming transcription to the Realtime API, turning spoken audio into text as words are being said rather than after the fact. This low-latency, continuous speech-to-text capability unlocks live captions, meeting notes, and voice-driven workflows that respond instantly to what users say. In customer service, it can generate transcripts while an agent or AI assistant is still speaking, powering searchable logs and compliance monitoring without separate batch processing. For accessibility, developers can build apps that display real-time captions for people who are deaf or hard of hearing, whether in classrooms, events, or everyday conversations. Because transcription is handled by a dedicated model, teams can combine GPT-Realtime-Whisper with GPT-Realtime-2 or GPT-Realtime-Translate, routing audio to the right model without overburdening a single system that must reason, transcribe, and translate all at once.
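The routing idea can be sketched as a small lookup from task to model. This is an illustration under stated assumptions, not the Realtime API itself: the `Task` enum and `route` function are hypothetical, and the model strings are the display names used in this article, which may differ from the actual API identifiers.

```python
from enum import Enum


class Task(Enum):
    CONVERSE = "converse"
    TRANSLATE = "translate"
    TRANSCRIBE = "transcribe"


# Placeholder labels copied from the article; real model ids may differ.
MODEL_FOR_TASK = {
    Task.CONVERSE: "GPT-Realtime-2",
    Task.TRANSLATE: "GPT-Realtime-Translate",
    Task.TRANSCRIBE: "GPT-Realtime-Whisper",
}


def route(tasks: set[Task]) -> list[str]:
    """Return the models one audio stream should be sent to, in a stable order."""
    return [MODEL_FOR_TASK[t] for t in Task if t in tasks]


# A support call that needs both an agent and a live transcript.
print(route({Task.TRANSCRIBE, Task.CONVERSE}))
```

Keeping the mapping in one table means a call that needs only captions never pays for a reasoning model.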

Why Low Latency Matters for Voice App Development

Low latency is critical for any voice app that aims to feel natural instead of frustrating. Humans expect near-instant back-and-forth in conversation; delays longer than a second or two make systems feel slow, brittle, or untrustworthy. OpenAI’s split voice stack is designed so each task (reasoning, live translation, or streaming transcription) can be tuned for responsiveness. For simple tasks, developers can keep GPT-Realtime-2 at lower reasoning levels to respond quickly, while reserving higher settings for complex requests where deeper analysis is worth the extra delay. GPT-Realtime-Translate and GPT-Realtime-Whisper are optimized to keep pace with speakers, reducing lag in translated speech and live captions. Together, these models make it feasible to build assistants that talk, translate, and transcribe continuously, improving user experience in customer service, accessibility tools, and global communication platforms.
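One way to keep latency honest during development is to time each turn against a budget. The sketch below is illustrative only: `BUDGET_SECONDS` is an assumed threshold taken from the "second or two" figure above, and `fake_reply` is a hypothetical stand-in for a real model call.

```python
import time

BUDGET_SECONDS = 1.0  # assumed threshold, not an official figure


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def within_budget(elapsed: float, budget: float = BUDGET_SECONDS) -> bool:
    """True when a turn finished inside the latency budget."""
    return elapsed <= budget


def fake_reply(text: str) -> str:
    """Hypothetical stand-in for a model call; sleeps briefly to simulate work."""
    time.sleep(0.05)
    return text.upper()


reply, elapsed = timed(fake_reply, "hello")
print(reply, f"{elapsed:.3f}s", within_budget(elapsed))
```

Logging this per turn makes it easier to see when a higher reasoning setting starts pushing responses past what feels conversational.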