From Chatbot to Android AI Assistant

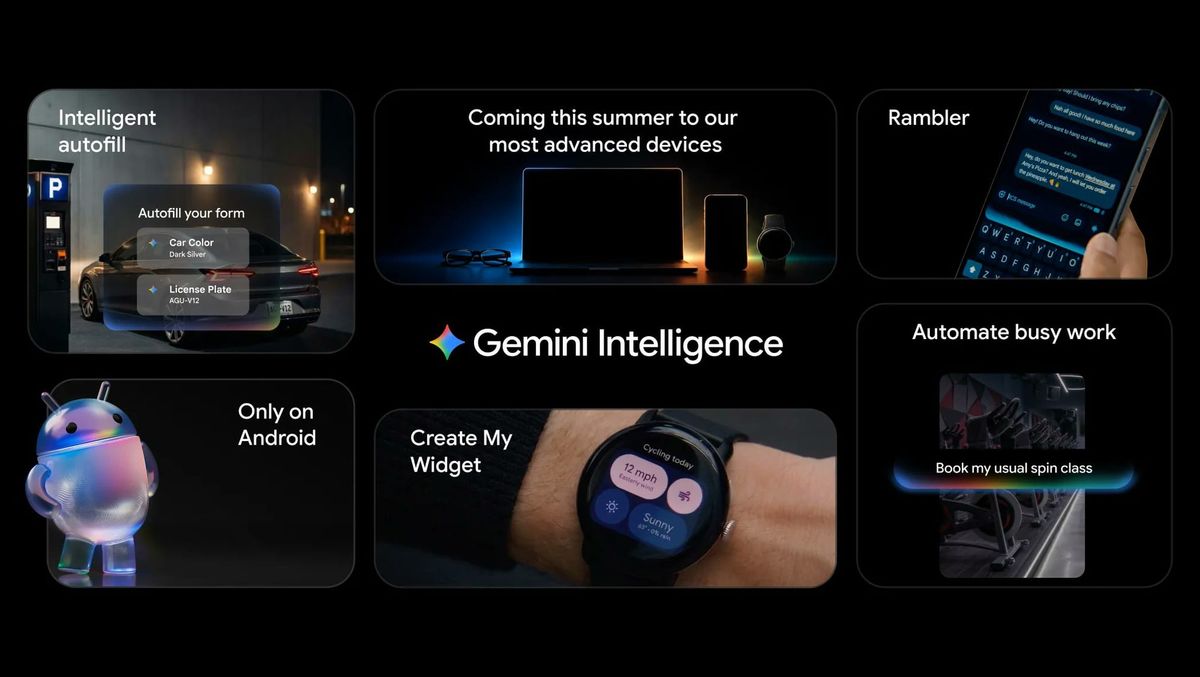

Gemini Intelligence is Google’s strongest attempt yet to make Gemini feel like a true Android AI assistant instead of just another chatbot. Where the original Gemini mostly answered questions, Gemini Intelligence is built for agentic operation: understanding what you want done and then taking actions across apps and the web on your behalf. Google is reframing Android as a system where Gemini can read context (who you are, what’s on your screen, and which apps you’re using) and then suggest tasks or execute them once you approve. This shift aligns with Google’s broader Personal Intelligence work, which, after you opt in, lets Gemini reference data from Gmail, Photos, YouTube, Search, and more. The result is a platform where Gemini Intelligence features on Android can automate real-world workflows, from reservations and shopping to communication, while still requiring an explicit user command or confirmation for sensitive steps.

Cross-App AI Task Automation and Chrome Integration

The core of Gemini Intelligence is AI task automation that spans multiple apps and the web. On supported Android devices, you can long-press the power button and ask Gemini to build a grocery cart from a written list, reorder a favorite meal, book a ride, or even find tours based on a photo of a brochure. Gemini chains these steps across food, grocery, and rideshare apps, surfacing progress through live notifications and leaving the final confirmation to you. Beyond apps, Gemini in Chrome, built on Gemini 3.1, is coming to select Android phones. It can summarize pages, answer questions about what you are viewing, or run auto browse routines such as reserving parking or updating an order. This deeper Chrome integration extends Gemini Intelligence on Android by combining in-page understanding, Google app links, and visual creation tools, all under a controlled, opt-in automation layer.
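The chain-then-confirm flow described above can be pictured with a small sketch. This is only an illustration of the general pattern (run routine steps, report progress, pause before anything sensitive); it is not Google's implementation, and the names `Step`, `run_workflow`, `confirm`, and `notify` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    """One unit of work in a hypothetical multi-app task."""
    description: str
    sensitive: bool = False  # e.g. placing an order or paying


def run_workflow(
    steps: list[Step],
    confirm: Callable[[Step], bool],
    notify: Callable[[str], None],
) -> list[str]:
    """Run steps in order, asking for approval before any sensitive step.

    Returns the descriptions of the steps that were completed.
    """
    completed: list[str] = []
    for step in steps:
        # Sensitive steps only proceed if the user explicitly approves.
        if step.sensitive and not confirm(step):
            notify(f"Stopped before: {step.description}")
            break
        notify(f"Done: {step.description}")
        completed.append(step.description)
    return completed


if __name__ == "__main__":
    grocery_task = [
        Step("Read the written grocery list"),
        Step("Add items to the cart"),
        Step("Place the order", sensitive=True),
    ]
    # Here the user declines the final step, so the order is never placed.
    run_workflow(grocery_task, confirm=lambda step: False, notify=print)
```

In this sketch the assistant can prepare the cart on its own, but the order itself only goes through if the `confirm` callback returns `True`, which mirrors the article's point that the final confirmation stays with the user.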

Circle Anything: The New Overlay and On-Screen Context

Gemini’s upgraded overlay makes it feel much closer to Circle to Search, but fused directly into the Android AI assistant experience. When you invoke Gemini, you now see a prompt inviting you to “Circle anything or ask about this screen.” You can circle, highlight, or select text, photos, and other elements, then immediately ask Gemini for explanations, comparisons, or follow-up actions based on that exact context. The selected region includes resize handles so you can fine-tune what Gemini should pay attention to, and tapping the captured image still opens annotation tools for additional markup. A new Screen content shortcut in Gemini’s plus menu also lets you attach whatever is currently displayed without drawing. This overlay upgrade turns every app, webpage, or document into a launchpad for questions and tasks, tightening the link between what you see and what Gemini Intelligence can automate or clarify.

Daily Brief, Gboard Rambler, and Create My Widget

Gemini Intelligence is also about polishing everyday experiences, not just heavy-duty automation. A Gemini daily brief pulls together Gmail and Calendar into a streamlined, visually refined overview of your day, turning emails and events into a concise agenda. Gboard’s new Rambler mode tackles messy dictation, smoothing repeated phrases, mid-sentence corrections, and even multilingual speech into clean, readable text before you hit send. For personalization, Create My Widget lets you describe the widget you want, such as a rain-and-wind-focused weather tile or a meal prep dashboard, and Gemini generates the code for Android or Wear OS. Under the hood, Gemini Intelligence decides whether to use on-device Gemini Nano or cloud models based on the complexity of the request. Together, these features show Google’s intent to make Gemini a practical, ever-present assistant that quietly refines how you type, plan, and glance at information on your phone.

Rollout: Which Phones Get Gemini Intelligence First?

Gemini Intelligence will debut on recent Galaxy and Pixel phones starting this summer, before expanding to more Android devices later in the year. Google is also planning a broader ecosystem rollout, bringing Gemini Intelligence to Android-powered watches, cars, glasses, and laptops so the same AI task automation can follow you beyond your phone. Gemini in Chrome for Android will initially arrive on select devices running Android 12 or higher, with at least 4 GB of RAM and English (US) as the system language. Certain advanced auto browse features will be available first to Gemini AI Pro and AI Ultra subscribers on supported phones. All of this is opt-in, with Gemini Intelligence designed to operate only with apps you explicitly allow and tasks you directly trigger. The strategy underscores Google’s push to make Gemini the default, context-aware assistant woven throughout the Android experience.
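For readers who want to check a phone against the stated Gemini in Chrome requirements, the three published criteria can be expressed as a short sketch. The `Device` type and `unmet_requirements` function are hypothetical and exist only for illustration. Because the rollout targets "select devices", passing these checks is best read as necessary rather than sufficient.

```python
from dataclasses import dataclass

# Android 12 corresponds to API level 31.
MIN_API_LEVEL = 31
MIN_RAM_GB = 4
REQUIRED_LOCALE = "en-US"


@dataclass(frozen=True)
class Device:
    """Hypothetical device description; not a real Android or Google type."""
    api_level: int
    ram_gb: float
    system_locale: str


def unmet_requirements(device: Device) -> list[str]:
    """Return the stated requirements this device fails (empty if none)."""
    reasons: list[str] = []
    if device.api_level < MIN_API_LEVEL:
        reasons.append("needs Android 12 or higher")
    if device.ram_gb < MIN_RAM_GB:
        reasons.append("needs at least 4 GB of RAM")
    if device.system_locale != REQUIRED_LOCALE:
        reasons.append("system language must be English (US)")
    return reasons


if __name__ == "__main__":
    phone = Device(api_level=33, ram_gb=3, system_locale="en-US")
    for reason in unmet_requirements(phone):
        print(reason)
```

Returning the list of unmet requirements, rather than a single yes or no, makes it clear which criterion is blocking a given device.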