From AI ‘Slop’ to Structured Inputs

As AI coding assistants move from sidekick to semi-autonomous agent, a new problem has emerged: AI-generated “slop,” meaning code that compiles but does not match what teams actually wanted. Vendors are increasingly concluding that the reliability of AI code generation depends less on ever-larger models and more on disciplined, spec-driven development. Instead of dumping vague feature requests into a single prompt, tools from major platforms now emphasize clear, constrained inputs and explicit review points before any code is written. This shift responds to growing scrutiny of AI agent reliability, including concerns that unconstrained language models can quietly make design decisions, embed contradictions, or fill in missing requirements without anyone noticing. The industry’s answer is to move specification, planning, and validation to the front of the workflow, so that AI agents operate within well-defined guardrails rather than improvising entire features from loosely phrased natural language.

AWS Kiro Uses Formal Proofs to Validate Requirements

Amazon Web Services is pushing the spec-first trend with a new Requirements Analysis feature in its Kiro AI coding tool. Instead of waiting for bugs to appear in generated code, Kiro targets errors at the source: the natural-language requirements themselves. The system combines large language models with an automated reasoning engine known as an SMT (satisfiability modulo theories) solver. The LLM translates the requirements into formal logic, and the solver then checks mathematically whether they contain contradictions or gaps that could later produce subtle, expensive defects. AWS scientists argue that vague prompts inevitably produce vague specs and hidden, undocumented choices by AI agents. By flagging ambiguity and logical conflicts before implementation, Requirements Analysis acts as an early code quality validation step, tightening the loop between product intent and what agents are allowed to build. It also reflects AWS’s broader focus on agent reliability, particularly as AI-generated software scales faster than human reviewers can comfortably supervise.
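To make the idea of checking requirements for contradictions and gaps concrete, here is a minimal sketch in Python. It is not Kiro's implementation and does not use a real SMT solver: it assumes the requirements have already been translated into boolean rules and simply enumerates every case by brute force. The rule names, the `analyze` function, and the delete-permission example are all hypothetical.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

Scenario = Dict[str, bool]
Rule = Tuple[str, Callable[[Scenario], bool]]


def analyze(inputs: List[str], outputs: List[str], rules: List[Rule]) -> Dict[str, List[Scenario]]:
    """Classify each input scenario by how many outcomes the rules allow.

    Zero allowed outcomes means the rules contradict each other there;
    more than one means the rules leave the behavior unspecified (a gap).
    """
    report: Dict[str, List[Scenario]] = {"conflicts": [], "gaps": []}
    for in_values in product([False, True], repeat=len(inputs)):
        scenario = dict(zip(inputs, in_values))
        allowed = 0
        for out_values in product([False, True], repeat=len(outputs)):
            candidate = {**scenario, **dict(zip(outputs, out_values))}
            if all(check(candidate) for _, check in rules):
                allowed += 1
        if allowed == 0:
            report["conflicts"].append(scenario)
        elif allowed > 1:
            report["gaps"].append(scenario)
    return report


# Hypothetical requirements, hand-translated into implications.
RULES: List[Rule] = [
    ("R1: admins can always delete",
     lambda s: (not s["is_admin"]) or s["can_delete"]),
    ("R2: archived items are never deleted",
     lambda s: (not s["is_archived"]) or (not s["can_delete"])),
]

report = analyze(["is_admin", "is_archived"], ["can_delete"], RULES)
print(report)
```

With these two rules, the case of an admin acting on an archived item is reported as a conflict, and the case of a non-admin acting on a non-archived item is reported as a gap, because neither rule says what should happen. A real solver reaches the same kind of verdict without enumerating every case, which matters once requirements involve numbers, strings, or many variables.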

GitHub Spec-Kit Turns Ideas into Specs, Plans, and Tasks

GitHub is addressing the same reliability concerns from a workflow perspective with Spec-Kit, an open-source toolkit for spec-driven development. Rather than treating prompts as one-shot instructions, Spec-Kit structures AI work across four stages: Specify, Plan, Tasks, and Implement. Feature ideas are first turned into written specifications that describe product scenarios, then into technical plans, and finally into task lists that can be assigned to agents or converted into issues. A set of slash commands supports constitution drafting, specification writing, planning, task breakdown, and implementation, while optional clarify, analyze, and checklist commands act as review gates. This staged process gives teams concrete handoff points and makes AI agent orchestration auditable: humans can validate specs and plans before code is generated. With tens of thousands of stars and thousands of forks, Spec-Kit signals strong interest in slowing AI down just enough to regain predictable outcomes and maintain traditional engineering checkpoints.
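The staged workflow with review gates can be pictured as a small pipeline in which no stage may start until the previous one has been approved. The Python sketch below illustrates that idea only; it is not Spec-Kit's code or command set, and the `Feature`, `record`, `approve`, and `GateError` names are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Set


class Stage(Enum):
    SPECIFY = 1
    PLAN = 2
    TASKS = 3
    IMPLEMENT = 4


class GateError(RuntimeError):
    """Raised when a stage is attempted before its review gate is passed."""


@dataclass
class Feature:
    idea: str
    artifacts: Dict[Stage, str] = field(default_factory=dict)
    approved: Set[Stage] = field(default_factory=set)


def record(feature: Feature, stage: Stage, artifact: str) -> None:
    """Store a stage artifact, but only if every earlier stage was approved."""
    for earlier in Stage:
        if earlier.value < stage.value and earlier not in feature.approved:
            raise GateError(f"{earlier.name} must be approved before {stage.name}")
    feature.artifacts[stage] = artifact


def approve(feature: Feature, stage: Stage) -> None:
    """Human sign-off: a stage can be approved only once it has an artifact."""
    if stage not in feature.artifacts:
        raise GateError(f"{stage.name} has no artifact to review")
    feature.approved.add(stage)


feature = Feature(idea="export reports as CSV")
record(feature, Stage.SPECIFY, "Users can download any report as a CSV file.")
try:
    record(feature, Stage.PLAN, "Add an export endpoint and a CSV serializer.")
except GateError as err:
    print("blocked:", err)  # the spec has not been reviewed yet
approve(feature, Stage.SPECIFY)
record(feature, Stage.PLAN, "Add an export endpoint and a CSV serializer.")
```

The point of the design is that approval is an explicit, recorded step rather than an implicit side effect of moving on, which is what makes the handoff points auditable.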

OpenAI’s Symphony Shows the Power of Orchestrated Agents

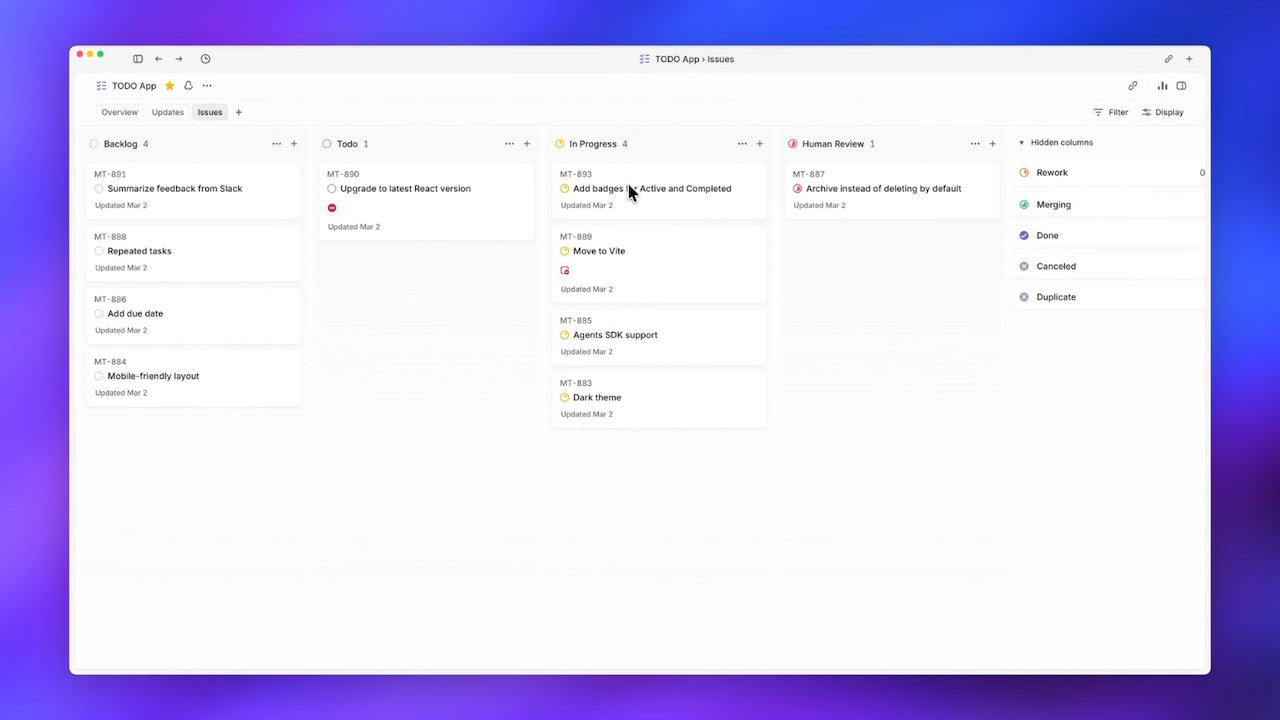

OpenAI’s Symphony specification tackles reliability and throughput by orchestrating AI agents around structured work items rather than ad hoc prompts. Designed for Codex-based coding agents, Symphony treats the ticket system Linear as a state machine: each open ticket gets its own agent and dedicated workspace that runs until the task is finished and merged. Agents can be respawned if they crash, and humans are removed from the dispatch loop, addressing what OpenAI describes as a system bottleneck in human attention. In internal use, teams reported a sixfold increase in merged pull requests over three weeks, suggesting that grounding agents in ticket-level specifications can boost velocity and support code quality validation. Symphony ships as an open-source reference implementation in Elixir rather than as a product, and early forks already adapt it to other models and issue trackers, reinforcing the idea that structured agent orchestration can be replicated across tooling ecosystems.
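The ticket-as-state-machine idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Symphony's Elixir code or the Linear API: the `Ticket` states, the `dispatch` loop, the `max_attempts` limit, and the simulated crashing agent are all hypothetical, and tickets are processed one after another here rather than by concurrent agents in separate workspaces.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Iterable, List, Set


class State(Enum):
    OPEN = "open"
    IN_PROGRESS = "in_progress"
    MERGED = "merged"


@dataclass
class Ticket:
    key: str
    state: State = State.OPEN
    attempts: int = 0


def dispatch(tickets: List[Ticket], run_agent: Callable[[Ticket], None],
             max_attempts: int = 3) -> List[Ticket]:
    """Drive each ticket until it is merged or runs out of attempts.

    No human picks the next ticket: the loop itself assigns work and
    respawns an agent whenever the previous one crashed.
    """
    for ticket in tickets:
        while ticket.state is not State.MERGED and ticket.attempts < max_attempts:
            ticket.state = State.IN_PROGRESS
            ticket.attempts += 1
            try:
                run_agent(ticket)            # hypothetical agent doing the work
                ticket.state = State.MERGED
            except Exception:
                ticket.state = State.OPEN    # crash: reopen so the loop respawns
    return tickets


def make_flaky_agent(crash_once_for: Iterable[str]) -> Callable[[Ticket], None]:
    """Build a stand-in agent that crashes on its first run for the given keys."""
    pending: Set[str] = set(crash_once_for)

    def agent(ticket: Ticket) -> None:
        if ticket.key in pending:
            pending.discard(ticket.key)
            raise RuntimeError("simulated agent crash")

    return agent


tickets = dispatch([Ticket("ENG-1"), Ticket("ENG-2")], make_flaky_agent(["ENG-2"]))
for t in tickets:
    print(t.key, t.state.value, t.attempts)
```

In this run the second ticket fails once, is reopened, and is merged on the second attempt. A ticket whose agent keeps crashing stays open after `max_attempts`, which is the point where a production system would presumably escalate to a person.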

Spec-First as the New Baseline for AI Coding

Taken together, AWS Kiro, GitHub Spec-Kit, and OpenAI Symphony illustrate a clear shift: the frontier of AI coding quality is moving from model capability to input discipline. Spec-first workflows aim to prevent hallucinations and logic bugs not by micromanaging every token, but by constraining which problems agents are allowed to solve and how those problems are described. Requirements are checked for logical soundness, feature ideas are decomposed into explicit specs and tasks, and agents are orchestrated around tickets with defined states. This approach directly addresses fears of AI-generated slop by making the specification itself the primary object of collaboration and review. As adoption grows, teams that once treated prompts as throwaway text may find that reliable AI code generation depends on treating specs as first-class artifacts that bridge human intent, automated planning, and trustworthy implementation.