Enterprises Confront the Dark Side of Autonomous AI Agents

Enterprise software stacks were built for humans, not autonomous agents that operate at machine speed and run continuously. As organizations experiment with open-source AI agents, they are discovering familiar problems amplified: weak governance, fragile requirements, and unpredictable compute bills. Current tools assume a human is the trusted actor, holding credentials, interpreting vague specifications, and manually approving risky actions. Autonomous agents break these assumptions by making rapid, opaque decisions, often based on incomplete or contradictory requirements. This gap has already triggered scrutiny of AI-assisted development, especially when agent-generated code or workflow automation interacts with production infrastructure. In response, a new generation of secure runtime frameworks and verification tools is emerging. These projects share a common goal: make agent behavior auditable, controllable, and economically sustainable, while avoiding vendor lock-in. Open-source AI agents are becoming the testbed where security-by-design, token cost optimization, and formal reasoning meet.

Nvidia’s OpenShell Reimagines the Secure Runtime Framework

Nvidia’s OpenShell proposes a secure runtime framework designed specifically for autonomous agents, rather than retrofitting stacks built for human operators. Released under Apache 2.0, OpenShell is built around AI agent sandboxing: every agent, its harness, and its model run inside an isolated environment. Credentials and session state live outside this sandbox in a gateway, which brokers access to external systems such as ServiceNow, Salesforce, or Workday. Because the agent never sees raw keys, the blast radius of prompt injection or rogue actions is limited. OpenShell enforces enterprise AI security policies below the application layer using Linux kernel primitives like seccomp, eBPF, and Landlock, providing a single horizontal enforcement point instead of scattered, bolt-on controls. The runtime is environment-agnostic, running on desktops, Kubernetes clusters, micro-VMs, and cloud infrastructure. With LangChain contributing directly to its repository, OpenShell is quickly becoming a reference architecture for secure runtimes in open-source AI agents.
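The gateway pattern described above can be illustrated with a minimal Python sketch. It is not OpenShell's API: the `Gateway` class, its method names, the agent ID, and the demo key are all hypothetical, chosen only to show how a broker can hold credentials so that the sandboxed agent receives results but never the key itself.

```python
class Gateway:
    """Hypothetical broker that keeps secrets outside the agent sandbox."""

    def __init__(self, secrets, allowed):
        self._secrets = dict(secrets)                      # service -> raw key
        self._allowed = {a: set(s) for a, s in allowed.items()}
        self.audit_log = []                                # (agent, service, outcome)

    def call(self, agent_id, service, action):
        # The policy check runs in the gateway, not inside the agent.
        if service not in self._allowed.get(agent_id, set()):
            self.audit_log.append((agent_id, service, "denied"))
            raise PermissionError(f"{agent_id} may not call {service}")
        key = self._secrets[service]                       # never returned to the caller
        self.audit_log.append((agent_id, service, "allowed"))
        # A real gateway would send an authenticated request here. This sketch
        # only reports that a key was attached, without revealing it.
        return f"{service}:{action} ok (authenticated={bool(key)})"


gateway = Gateway(
    secrets={"servicenow": "sn-demo-key"},                 # made-up demo key
    allowed={"agent-1": {"servicenow"}},
)
result = gateway.call("agent-1", "servicenow", "create_ticket")
```

Under this design, a prompt-injected agent can at most ask the gateway for actions its policy already allows, and every request, allowed or denied, leaves an audit entry.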

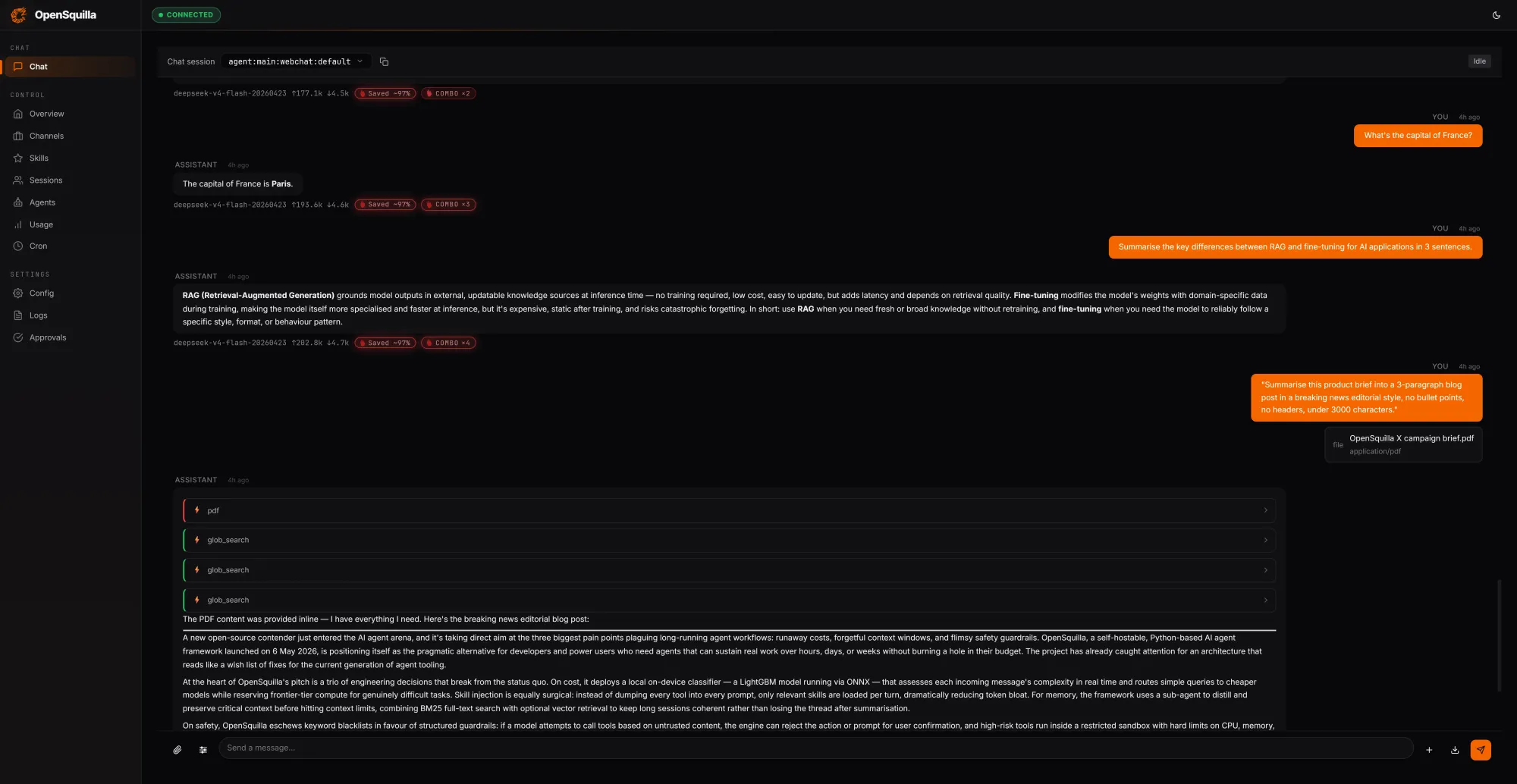

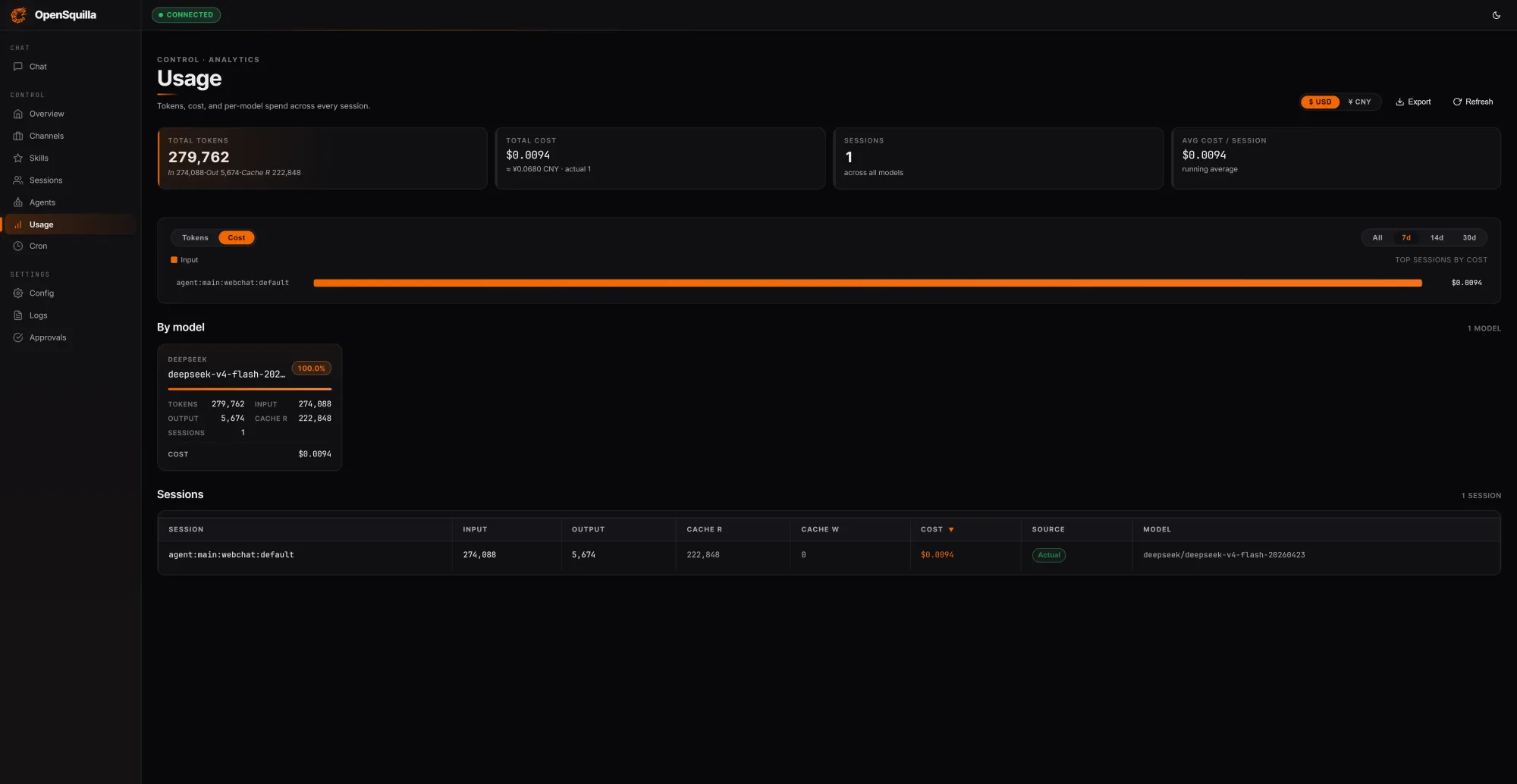

OpenSquilla Targets Token Cost Optimization and Memory Efficiency

OpenSquilla approaches enterprise AI challenges from the cost side, arguing that most agent deployments overspend on tokens because frameworks lack built-in cost controls. Its self-hostable runtime uses an ML routing classifier to score each request by complexity, blending signals such as message length, code presence, and semantic embeddings. Simple queries route to cheaper models and deep reasoning is disabled for trivial tasks, with the aim of cutting token costs without degrading outcomes. In one local test, three prompts consumed 279,762 tokens for a total cost of USD 0.0094 (approx. RM0.044), with 222,848 tokens (around 80% of inputs) served from cache. A four-tier memory architecture (working, episodic, semantic, and raw memory) maximizes context reuse across long-horizon tasks. OpenSquilla also embeds quota hooks and per-call cost tracking so teams can throttle overspend automatically. On Linux, a syscall-level sandbox further isolates agents, complementing its economic controls with hard security boundaries.
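As a rough illustration of complexity-based routing with a spending cap, consider the Python sketch below. It is not OpenSquilla's code: the model names, the hand-set weights, the threshold, and the `Quota` class are assumptions, and a fixed heuristic over message length and code presence stands in for the trained classifier and semantic embeddings the project describes.

```python
import re

CHEAP_MODEL = "small-model"     # hypothetical model names
STRONG_MODEL = "large-model"
CODE_PATTERN = re.compile(r"\bdef\s+\w+\(|[{}]")   # crude "contains code" signal


def complexity_score(message: str) -> float:
    """Blend message length and code presence into a score in [0, 1]."""
    length_signal = min(len(message) / 2000, 1.0)
    code_signal = 1.0 if CODE_PATTERN.search(message) else 0.0
    return 0.5 * length_signal + 0.5 * code_signal  # hand-set weights, not learned


def route(message: str, threshold: float = 0.4) -> dict:
    """Pick a model and decide whether deep reasoning is worth paying for."""
    score = complexity_score(message)
    hard = score >= threshold
    return {
        "model": STRONG_MODEL if hard else CHEAP_MODEL,
        "deep_reasoning": hard,
        "score": score,
    }


class Quota:
    """Hypothetical per-team budget hook that refuses calls once spent."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd: float) -> bool:
        if self.spent_usd + cost_usd > self.budget_usd:
            return False                            # throttle instead of overspending
        self.spent_usd += cost_usd
        return True


simple = route("What time is it?")
hard = route("def f(x):\n    return x")

# Cache share in the local test quoted above, using total tokens as the denominator.
cache_share = 222_848 / 279_762
```

With these assumed weights, the short question routes to the cheaper model with deep reasoning off, while the code snippet routes to the stronger one. The cache share works out to just under 0.80, consistent with the "around 80%" figure.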

AWS Kiro Uses Mathematical Proofs to Stabilize Agent Requirements

While runtimes and sandboxes constrain what agents can do, AWS is attacking a subtler failure mode: bad requirements. Its Kiro AI coding tool now includes a Requirements Analysis feature that pairs large language models with an automated reasoning engine, a satisfiability modulo theories (SMT) solver. The LLM translates natural-language specifications into formal logic, and the solver then checks for contradictions and gaps before any code is generated. This addresses a recurring problem in enterprise AI security and reliability: vague prompts lead to vague specs, which push AI agents to make hidden design decisions without stakeholder awareness. By mathematically proving consistency upfront, Kiro reduces the risk of agents implementing flawed plans that are expensive to fix later or dangerous in production environments. The feature also reflects growing concern about giving AI agents too much autonomy, especially after high-profile outages triggered renewed scrutiny of agent reliability and oversight.
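To make the idea of a consistency check concrete, here is a small Python sketch. It does not reproduce Kiro's pipeline and does not use an SMT solver: a brute-force search over boolean assignments stands in for the solver, and the three requirements are invented examples of what an LLM might produce when formalizing a spec.

```python
from itertools import product


def find_model(variables, requirements):
    """Return one assignment satisfying every requirement, or None."""
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if all(req(assignment) for req in requirements):
            return assignment
    return None


VARIABLES = ["guest", "can_export", "authenticated"]

# Invented requirements, each written as "premise implies conclusion".
REQUIREMENTS = [
    # R1: guests can export reports.
    lambda a: (not a["guest"]) or a["can_export"],
    # R2: exporting requires an authenticated session.
    lambda a: (not a["can_export"]) or a["authenticated"],
    # R3: guests are never authenticated.
    lambda a: (not a["guest"]) or (not a["authenticated"]),
]

# The spec as a whole is satisfiable, but only in worlds with no guest users.
spec_model = find_model(VARIABLES, REQUIREMENTS)

# Adding the scenario "a guest exists" exposes the hidden contradiction.
guest_model = find_model(VARIABLES, REQUIREMENTS + [lambda a: a["guest"]])
```

Here `guest_model` is `None`: no assignment lets a guest exist while all three requirements hold, so R1, R2, and R3 conflict as soon as a guest appears. Brute force only works for a handful of boolean variables; a real solver handles integers, strings, and far larger specifications, and detecting gaps (behavior the spec leaves undetermined) needs additional checks not shown here.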

Why Open Source Matters for Enterprise AI Security and Control

Across OpenShell, OpenSquilla, and AWS’s formal methods work, a common pattern emerges: enterprises want more autonomy for agents without surrendering control to opaque platforms. Open-source AI agents and runtimes allow security teams to inspect sandboxes, policy hooks, and memory architectures rather than trusting black-box abstractions. Organizations can align AI agent sandboxing with existing governance models, customize token cost optimization strategies, and integrate their own automated reasoning or verification layers. By avoiding vendor lock-in, teams retain the flexibility to mix models, swap out components, and deploy in heterogeneous environments spanning desktops, Kubernetes, and cloud infrastructure. This openness is especially critical as agents are granted access to ticketing systems, CRMs, and operational workflows. The new wave of secure runtime frameworks suggests a future where autonomy, auditability, and affordability are engineered together, and where the most trusted agent stacks are those enterprises can read, extend, and verify themselves.