From Dictation to Dynamic Voice Interfaces

Real-time voice AI is evolving from simple dictation into a full interface for applications and workflows. OpenAI’s latest audio API release brings three specialized models (GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper) designed to power live voice agents, live translation, and low-latency transcription inside products. Instead of treating speech as a one-way input that gets converted into text, these systems listen, reason, and respond continuously. Voice becomes an operational layer over apps: users speak naturally while the AI executes tasks, maintains context over long sessions, and responds in real time. Meanwhile, Thinking Machines’ new Interaction Voice Models push interactivity further, treating conversation and perception as continuous streams rather than turn-based exchanges. Together, these launches signal that voice app development is entering a phase where speech is not just captured but actively orchestrates complex, multi-step interactions.

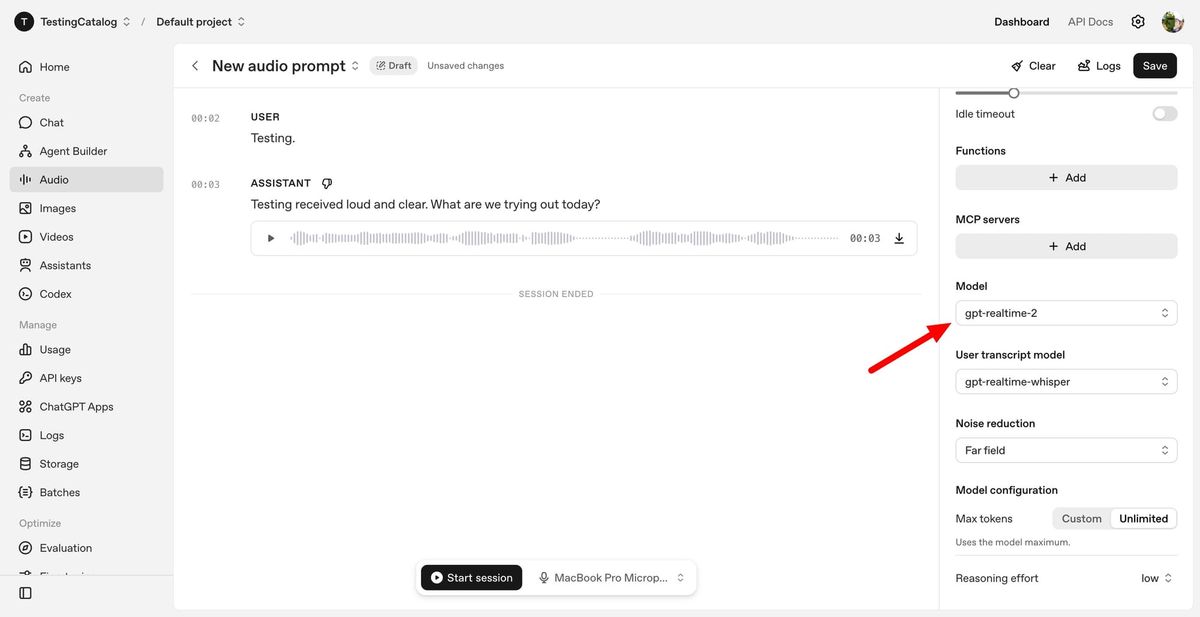

OpenAI’s GPT-Realtime-2: Voice with Live Reasoning and Tools

GPT-Realtime-2 is OpenAI’s flagship real-time voice model, built with GPT-5-class reasoning to handle richer spoken interactions. It supports a 128K-token context window, allowing voice agents to track long conversations, histories, and workflows without losing the thread. Crucially, it can issue parallel tool calls, so a voice assistant can say “let me check that” while querying external systems in the background. Developers can tune reasoning effort from minimal to xhigh, trading latency for depth of analysis to suit the use case. The model is designed to tolerate interruptions, corrections, and topic shifts, addressing one of the biggest weaknesses of legacy voice systems, which fall apart when conversations become messy. GPT-Realtime-Whisper complements this with streaming, low-latency transcription for captions, meeting notes, and voice-driven workflows, while GPT-Realtime-Translate focuses on multilingual voice-to-voice experiences. All three are exposed through a unified audio API.
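The parallel tool-call pattern can be sketched in plain Python. This is a minimal illustration of the concurrency idea only, not OpenAI's API: the tool functions `lookup_order` and `check_inventory`, their return values, and the `handle_turn` helper are all hypothetical, and the sleeps stand in for network latency.

```python
import asyncio

# Hypothetical backend tools; the sleeps stand in for network latency.
async def lookup_order(order_id: str) -> dict:
    await asyncio.sleep(0.05)
    return {"order_id": order_id, "status": "shipped"}

async def check_inventory(sku: str) -> dict:
    await asyncio.sleep(0.05)
    return {"sku": sku, "in_stock": 3}

async def handle_turn(order_id: str, sku: str) -> list:
    """Return the utterances the agent would speak, in order."""
    spoken = []
    # Schedule both tool calls together instead of awaiting them one by one.
    pending = asyncio.gather(lookup_order(order_id), check_inventory(sku))
    # The filler phrase is queued before any tool result has arrived.
    spoken.append("Let me check that.")
    order, stock = await pending
    spoken.append(
        f"Order {order['order_id']} is {order['status']}; "
        f"{stock['in_stock']} units of {stock['sku']} are in stock."
    )
    return spoken

if __name__ == "__main__":
    for line in asyncio.run(handle_turn("A123", "SKU-9")):
        print(line)
```

Because the two calls run concurrently, the wait is roughly that of the slower call rather than the sum of both, which is what keeps the spoken reply from stalling.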

Live Translation and Multilingual Voice Workflows

OpenAI’s GPT-Realtime-Translate is built for live translation, where speech must be understood and re-expressed in another language with minimal lag. It accepts speech input in over 70 languages and produces spoken output in 13, while tracking context shifts, regional accents, and domain-specific jargon. That makes it suitable for customer support, cross-border sales calls, education platforms, events, and media localization. In parallel, GPT-Realtime-Whisper provides low-latency transcription that keeps up with speakers in real time, powering live captions, searchable meeting archives, and voice-to-action workflows where spoken instructions map immediately to software operations. OpenAI frames these capabilities within three emerging patterns: voice-to-action, where speech triggers tasks; systems-to-voice, where software speaks proactively based on live data; and voice-to-voice, where real-time translation bridges participants. For developers, this expands voice app development from simple transcription tools to multilingual, reasoning-capable agents embedded across business processes.
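As a rough illustration of the voice-to-action pattern, the sketch below maps a finished transcript to an application handler. Everything in it is an assumption made for the example: the trigger phrases, the `create_ticket` and `schedule_meeting` handlers, and the keyword matching itself. A real agent would more likely let the model choose a tool than match substrings.

```python
from typing import Callable, Dict, Optional

# Hypothetical actions an app might expose to voice control.
def create_ticket(text: str) -> str:
    return f"ticket created: {text}"

def schedule_meeting(text: str) -> str:
    return f"meeting scheduled: {text}"

# Trigger phrase -> handler. Keyword matching keeps the sketch self-contained.
ACTIONS: Dict[str, Callable[[str], str]] = {
    "open a ticket": create_ticket,
    "schedule a meeting": schedule_meeting,
}

def dispatch(transcript: str) -> Optional[str]:
    """Run the first action whose trigger phrase appears in the transcript."""
    lowered = transcript.lower()
    for phrase, handler in ACTIONS.items():
        if phrase in lowered:
            return handler(transcript)
    return None  # No trigger matched; the utterance is treated as plain speech.
```

Calling `dispatch("Please open a ticket for the login bug")` runs the hypothetical `create_ticket` handler, while an utterance with no trigger phrase returns `None`.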

Thinking Machines’ 0.4-Second Interaction Models

Thinking Machines, founded by former OpenAI CTO Mira Murati, is attacking a different bottleneck: interaction bandwidth between humans and AI. Its Interaction Voice Models, led by TML-Interaction-Small, respond in about 0.40 seconds and process audio, video, and text simultaneously. Instead of waiting for a user to finish speaking, the system operates in continuous 200-millisecond chunks, listening, reasoning, and talking at the same time. One internal process manages conversational flow while another tackles complex background tasks, mirroring how people can think ahead while speaking. Demos show the AI counting exercise reps from video, delivering real-time translation, and even noticing posture changes, all while holding a conversation. This approach aims to remove the stop-start pattern typical of current voice assistants and make AI feel more like a live collaborator than a question-and-answer box, especially in scenarios that blend vision, speech, and real-time collaboration.
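To make the 200-millisecond figure concrete, the sketch below slices an audio buffer into fixed-duration chunks. The 16 kHz sample rate is an assumption chosen for illustration (the article does not state the audio format the model uses), and `samples_per_chunk` and `iter_chunks` are hypothetical helper names.

```python
from typing import Iterator, List

SAMPLE_RATE_HZ = 16_000  # Assumed sample rate; not stated in the article.
CHUNK_MS = 200           # Chunk duration described in the article.

def samples_per_chunk(sample_rate_hz: int = SAMPLE_RATE_HZ,
                      chunk_ms: int = CHUNK_MS) -> int:
    """Number of audio samples covered by one chunk."""
    return sample_rate_hz * chunk_ms // 1000

def iter_chunks(samples: List[int], size: int) -> Iterator[List[int]]:
    """Yield consecutive slices of `size` samples; the last may be shorter."""
    for start in range(0, len(samples), size):
        yield samples[start:start + size]

if __name__ == "__main__":
    one_second = [0] * SAMPLE_RATE_HZ  # One second of silence.
    size = samples_per_chunk()
    chunks = list(iter_chunks(one_second, size))
    print(size, len(chunks))
```

Under that assumed sample rate, each chunk holds 3,200 samples and one second of audio yields five chunks, so the model gets five chances per second to update what it hears and says.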

What This Means for the Next Wave of Voice Apps

Taken together, OpenAI’s real-time audio models and Thinking Machines’ Interaction Voice Models mark a shift toward voice as a primary interface, not a bolt-on feature. Developers now have building blocks for voice experiences that combine reasoning, live translation, and low-latency transcription in a single stack. A customer support agent can listen in one language, respond in another, call backend tools in parallel, and keep a running transcript, all in real time. Productivity apps can overlay conversational AI that tracks meetings, executes commands, and explains what it is doing as it goes. As phones, in-car systems, and desktops expand support for conversational interfaces, these audio APIs are poised to become standard components in voice app development. The next generation of real-time voice AI will be judged less by how human it sounds and more by how much real work it can handle while the conversation is still unfolding.