Production Reality: GPT-5.5 Pricing Jumps 49–92% Over GPT-5.4

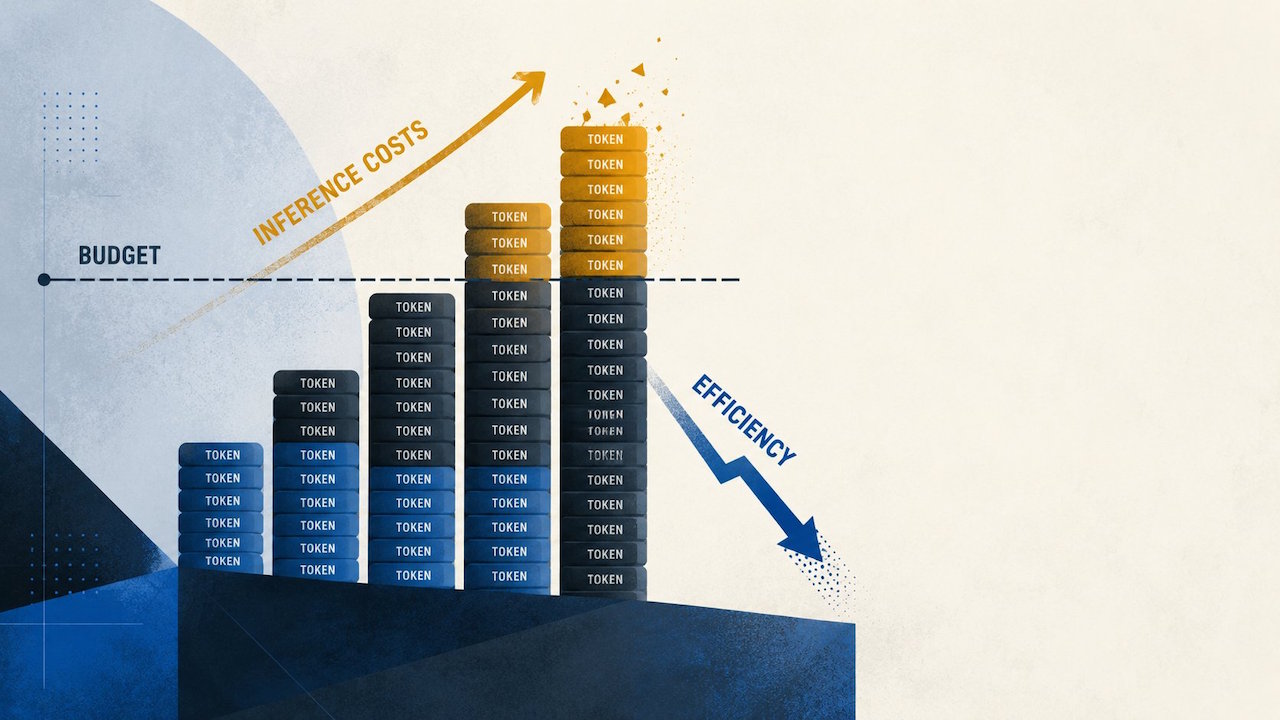

OpenRouter’s April 2026 analysis reveals a stark cost shift for teams moving from GPT-5.4 to GPT-5.5. Once customers migrated live traffic, effective costs climbed between 49% and 92%, depending on the prompt band. The increase reflects not only higher list prices but also how the model behaves under real production workloads. Short and mid-length prompts, where most enterprise assistants and agents actually operate, saw the steepest jumps. GPT-5.5 did produce fewer tokens for very long prompts, but those savings did not offset the higher bills in the more common prompt ranges. For buyers, the key takeaway is that moving to GPT-5.5 can nearly double your token bill if your usage resembles typical retrieval assistants, coding copilots, or customer-support bots. Budget owners can no longer rely on OpenAI’s headline efficiency claims alone; they must examine actual usage logs and token traces before committing.

Why OpenAI Efficiency Claims Do Not Automatically Cut Your Bill

OpenAI positions GPT-5.5 as more token-efficient than GPT-5.4, with comparable per-token latency, arguing that shorter responses should soften the impact of higher list prices. OpenRouter’s data complicates that narrative. For prompts above 10,000 tokens, GPT-5.5 did produce 19–34% fewer completion tokens, but in the 2,000–10,000-token band, median completion length grew 52%. Even prompts under 2,000 tokens showed a 7% rise in median output length. Because many enterprise flows consist of short prompts, repeated tool calls, and follow-up questions, these longer completions add up. The result: for prompts under 2,000 tokens, the average cost per million tokens rose from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43.10). For 50,000–128,000-token prompts, it climbed from USD 0.74 (approx. RM3.40) to USD 1.10 (approx. RM5.10). Efficiency in isolated benchmarks does not guarantee savings once real users and workflows enter the loop.
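As a sanity check, the two band averages quoted above reproduce the two ends of the headline 49–92% range. The minimal Python sketch below uses only those two figures; the other prompt bands are not quoted here, so they are not computed.

```python
# Average cost per million tokens (USD) reported for each prompt band,
# stored as (GPT-5.4, GPT-5.5). Only the two bands quoted in the text are included.
REPORTED = {
    "under 2,000 tokens": (4.89, 9.37),
    "50,000-128,000 tokens": (0.74, 1.10),
}


def pct_increase(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


for band, (gpt54, gpt55) in REPORTED.items():
    print(f"{band}: {pct_increase(gpt54, gpt55):+.1f}%")
```

This prints increases of about 91.6% and 48.6%, which round to the 92% and 49% figures in the headline.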

Recalculating ROI and Budget: From Benchmarks to Billing Reality

For enterprise buyers, GPT-5.5 forces a shift from theoretical planning to billing reality. Before launch, finance teams may approve deployments by comparing list prices: GPT-5.4 at USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69.00) per million output tokens, versus GPT-5.5 at USD 5 (approx. RM23.00) and USD 30 (approx. RM138.00), respectively. In other words, GPT-5.5 doubles both rates. GPT-5.5 Pro climbs to USD 30 (approx. RM138.00) for input and USD 180 (approx. RM828.00) for output. But OpenRouter’s analysis shows that production costs can outpace these assumptions once prompt drift, retries, and longer mid-range completions kick in, and it is platform teams and product owners who inherit the ongoing token bill. To protect ROI, organizations should run constrained pilots on real traffic, track completion lengths by prompt band, and model worst-case usage scenarios. Without that discipline, the move to GPT-5.5 can quietly turn into a recurring inference-budget overrun.
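To make "track completion lengths by prompt band" concrete, here is a minimal Python sketch, assuming you can export per-request usage logs with prompt and completion token counts. It applies the list prices quoted above and ignores cached-input discounts and any other billing adjustments. The model labels (`gpt-5.4`, `gpt-5.5`, `gpt-5.5-pro`), the `UsageRecord` type, and the pilot records are hypothetical, and the token counts are made-up illustrative numbers, not measurements.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median

# List prices in USD per million tokens, as quoted in the text.
# Keys are illustrative labels, not necessarily exact API model identifiers.
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gpt-5.5-pro": {"input": 30.00, "output": 180.00},
}


@dataclass
class UsageRecord:
    """One request from a (hypothetical) usage log."""
    model: str
    prompt_tokens: int
    completion_tokens: int


def request_cost(model: str, prompt_tokens: int, completion_tokens: int) -> float:
    """Estimated USD cost of a single request at list price."""
    p = PRICES[model]
    return (prompt_tokens * p["input"] + completion_tokens * p["output"]) / 1_000_000


def prompt_band(prompt_tokens: int) -> str:
    """Bucket a request into the prompt bands discussed in this article."""
    if prompt_tokens < 2_000:
        return "<2k"
    if prompt_tokens <= 10_000:
        return "2k-10k"
    return ">10k"


def summarize(records: list[UsageRecord]) -> dict[tuple[str, str], dict[str, float]]:
    """Request count, median completion length, and list-price cost per (model, band)."""
    groups: dict[tuple[str, str], list[UsageRecord]] = defaultdict(list)
    for r in records:
        groups[(r.model, prompt_band(r.prompt_tokens))].append(r)
    return {
        key: {
            "requests": len(rs),
            "median_completion": median(r.completion_tokens for r in rs),
            "cost_usd": sum(
                request_cost(r.model, r.prompt_tokens, r.completion_tokens) for r in rs
            ),
        }
        for key, rs in groups.items()
    }


# Made-up pilot traffic: the same prompts replayed against both models.
pilot = [
    UsageRecord("gpt-5.4", 1_500, 400),
    UsageRecord("gpt-5.5", 1_500, 430),
    UsageRecord("gpt-5.4", 6_000, 500),
    UsageRecord("gpt-5.5", 6_000, 760),
]
for (model, band), stats in sorted(summarize(pilot).items()):
    print(model, band, stats)
```

Comparing the two models' rows for the same band shows whether longer completions are compounding the doubled list price. For a worst-case scenario, scale `completion_tokens` upward before calling `request_cost`.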

Cost-to-Performance: Deciding Where GPT-5.5 Actually Belongs

As AI deployments scale, the cost-to-performance ratio becomes the critical decision lens. GPT-5.5 may be justified for long-context tasks, where its shorter outputs and advanced capabilities matter more than its higher pricing. In these cases, routing GPT-5.5 to premium user tiers or specialized long-context jobs can contain costs while preserving value. For everyday workloads such as retrieval-based assistants, coding copilots, workflow agents, and customer-support bots, the longer mid-range completions can make GPT-5.5 a costlier default than GPT-5.4. Teams should run routing experiments: reserve GPT-5.5 for prompts above a chosen token threshold, or switch models dynamically based on context-window and latency requirements. Rival options such as Claude Opus 4.7 show the same thing: headline rates alone are misleading. Tokenization differences, response length, and workload shape will ultimately decide which model delivers the best balance between performance and sustainable cost.
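A routing experiment of the kind described above could start from something like the Python sketch below. It is a hypothetical illustration, not a recommended policy: the model labels, the 10,000-token threshold (borrowed from the band where OpenRouter saw GPT-5.5 produce shorter completions), the `premium_tier` flag, and the hash-based 10% treatment split are all assumptions to tune against your own pilot data. Latency and context-window checks are left out.

```python
import hashlib
from dataclasses import dataclass

DEFAULT_MODEL = "gpt-5.4"  # hypothetical label for the cheaper default
PREMIUM_MODEL = "gpt-5.5"  # hypothetical label for the premium model
LONG_PROMPT_THRESHOLD = 10_000  # tokens; assumption, tune from pilot data


@dataclass
class Request:
    user_id: str
    prompt_tokens: int
    premium_tier: bool = False


def experiment_arm(user_id: str, treatment_fraction: float) -> str:
    """Deterministically assign a user to 'treatment' or 'control'."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return "treatment" if bucket < treatment_fraction * 10_000 else "control"


def choose_model(
    req: Request,
    treatment_fraction: float = 0.1,
    threshold: int = LONG_PROMPT_THRESHOLD,
) -> str:
    # Premium users get GPT-5.5 regardless of the experiment.
    if req.premium_tier:
        return PREMIUM_MODEL
    # Control arm stays on the current default so costs can be compared.
    if experiment_arm(req.user_id, treatment_fraction) == "control":
        return DEFAULT_MODEL
    # Treatment arm: only long prompts are routed to GPT-5.5.
    return PREMIUM_MODEL if req.prompt_tokens > threshold else DEFAULT_MODEL


for req in [
    Request("alice", 1_200),
    Request("bob", 40_000),
    Request("carol", 800, premium_tier=True),
]:
    print(req.user_id, choose_model(req))
```

Hashing the user ID keeps each user in the same arm across requests, so per-arm costs pulled from usage logs can be compared directly.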