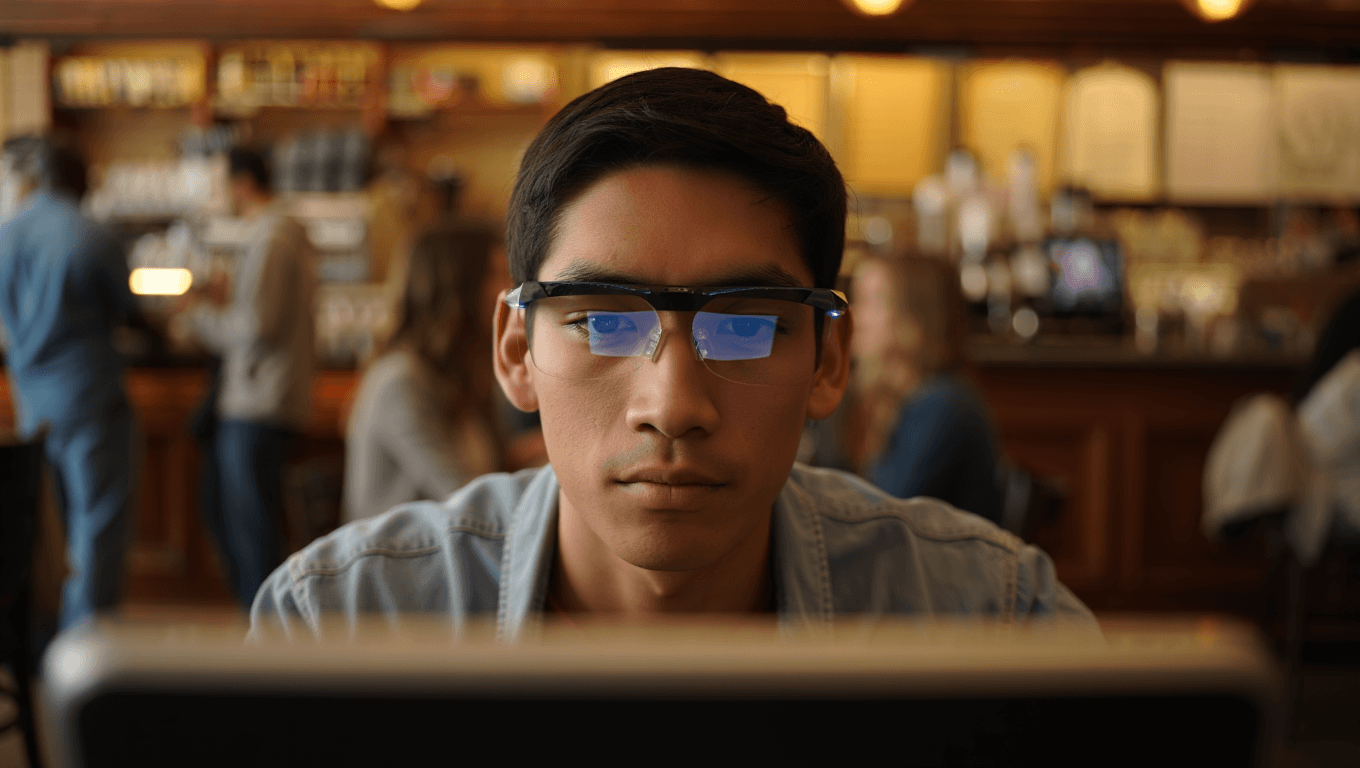

Why Live-Captioning Smart Glasses Matter More Than Apps

Live captioning smart glasses are finally moving from novelty to practical AI hearing assistance. Instead of making you look down at a phone, they project subtitles inside your field of view, so you can keep eye contact and still read body language. That makes a big difference in crowded rooms or noisy cafés, where lip-reading and traditional hearing aids can struggle. Recent coverage from tech reviewers highlights five new real-time subtitle glasses that promise to handle overlapping voices, background music, and echo-prone halls better than earlier generations. These accessibility smart glasses are no longer just about fitness metrics or entertainment notifications; they are designed to make group conversations more inclusive and spontaneous. For anyone who relies heavily on captions, whether because of hearing loss, auditory processing challenges, or simply loud environments, the key question is not whether these devices exist, but which models stay readable and accurate when the world around you gets loud.

Captify and Even: Strong Caption-First Options Without Heavy Subscriptions

Among today’s real-time subtitle glasses, Captify and Even stand out as caption-first designs. Recent reviews highlight Captify for delivering readable subtitles in noisy settings and for prioritising transcription accuracy over complex extras. It is positioned as an option that avoids heavy ongoing subscription commitments, which appeals to buyers who want predictable costs and straightforward AI hearing assistance. Even, meanwhile, surprised reviewers by including every feature out of the box with no subscription at all, so you can focus on how well the captions track conversation instead of managing plans or minutes. The trade-off with Even’s G2 is that it relies heavily on an internet connection and offers almost no offline features, so you will want reliable connectivity if you move between venues. For many users, however, that compromise is worthwhile in exchange for its power, simplicity, and value-focused model.

XRAI, Leion, and AirCaps: Subscriptions, Offline Modes, and Heavy Frames

Other accessibility smart glasses take a different path, balancing subscription tiers, offline modes, and hardware trade-offs. Leion’s Hey 2 is a price leader in this space and keeps even its prescription lenses relatively affordable at USD 90–299 (approx. RM414–RM1,375). Its app offers captioning, translation, two-way “free talk,” and a teleprompter, with nine base languages and more unlocked via Pro minutes. Those Pro features are sold by usage time, from USD 10 (approx. RM46) for 120 minutes up to USD 200 (approx. RM920) for 6,000 minutes, so you must actively manage your consumption. XRAI uses similar hardware and offers a large language list, selling Pro both by the month and by the minute, while also including a rudimentary offline mode that nonetheless works better than most. AirCaps opts for a simpler one-button interface, an optional Pro subscription at USD 20 (approx. RM92) per month, and a lens-holder system that requires separate prescription inserts; its frames, however, are noticeably bulkier on the face.
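To make the per-minute pricing easier to compare, here is a rough Python sketch that works out what Leion's quoted Pro bundles cost per minute, and how many minutes a flat USD 20 monthly fee (the AirCaps Pro price) would buy at those rates. The prices come from the figures above; the exchange rate of 4.6 is inferred from the article's own conversions (USD 10 ≈ RM46), and the function and variable names are illustrative only. The flat-fee comparison is a back-of-envelope reference point, since the two Pro tiers do not necessarily cover the same features.

```python
# Prices and bundle sizes as quoted in the article (USD).
LEION_PRO_BUNDLES = {120: 10.0, 6000: 200.0}  # minutes -> price
FLAT_MONTHLY_USD = 20.0                       # AirCaps Pro, per month

# Assumption: rate inferred from the article's conversions (USD 10 ~ RM46).
USD_TO_MYR = 4.6


def cost_per_minute(price_usd: float, minutes: int) -> float:
    """Price of one captioning minute within a prepaid bundle."""
    return price_usd / minutes


def break_even_minutes(flat_usd: float, price_usd: float, minutes: int) -> float:
    """Minutes per month at which a flat fee costs the same as the bundle rate."""
    return flat_usd * minutes / price_usd


for minutes, price in LEION_PRO_BUNDLES.items():
    rate = cost_per_minute(price, minutes)
    even = break_even_minutes(FLAT_MONTHLY_USD, price, minutes)
    print(
        f"{minutes}-minute bundle: USD {rate:.3f}/min "
        f"(approx. RM{rate * USD_TO_MYR:.2f}/min); "
        f"a USD {FLAT_MONTHLY_USD:.0f} flat fee breaks even at {even:.0f} min/month"
    )
```

On these figures the smallest bundle works out to about USD 0.083 per minute and the largest to about USD 0.033 per minute, so a USD 20 flat fee matches the per-minute price at roughly 240 and 600 minutes of use per month respectively.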

Meta Ray‑Ban, Clip-Ons, and Chinese AI Glasses: When Captions Are Only One Feature

Not every pair of live captioning smart glasses is built around accessibility first. Meta’s Ray‑Ban collaboration, for example, folds live-caption and translation features into a broader smart-glass experience focused on mainstream AR and style. If you prefer a familiar eyewear brand and a single app ecosystem, this hybrid approach can be appealing, though captioning has to share the device with many other functions. Xreal- and Aura-style clip-ons go the opposite way: they deliver captions via lightweight displays paired with a phone app. These solutions can undercut full-frame smart glasses on cost and weight, but you may notice occasional latency because all processing flows through your handset. Meanwhile, makers such as Rokid showcase aggressive AR captioning demos and rich virtual-screen features. Their glasses can look powerful and comparatively affordable, yet buyers should examine long-term support and data practices carefully. Across these options, captions are just one part of a larger AR toolkit, so accessibility may not always be the primary design priority.

How to Choose: Matching Glasses to Your Real-World Soundscapes

Choosing the right pair of accessibility smart glasses starts with where you struggle most to follow speech. For constant conversations in busy offices, classrooms, or restaurants, caption-first models like Captify and Even put accuracy and readability at the center, often with fewer distracting extras. If you move in and out of areas with weak connectivity, XRAI or AirCaps may appeal thanks to more capable offline modes, though both layer in subscription decisions and, in AirCaps’ case, heavier hardware. Users who want fashion-forward frames or multipurpose AR tools might prefer Meta Ray‑Ban or clip-on displays, accepting some trade-offs around latency or feature complexity. Whatever you choose, smart glasses fundamentally change the feel of live captioning compared with phone apps, because subtitles sit where the conversation actually happens: on the people in front of you. That shift can turn previously exhausting social situations into more organic, less stressful interactions.