AI Code Generation Outpaces Human Understanding

Generative AI has reshaped how software is written, but it has not reshaped how humans understand it. Tools that promise large productivity gains now let developers generate entire features from prompts, with juniors reportedly completing tasks up to 55% faster using AI assistance. Yet understanding code still depends on slow, hard-won expertise. Senior engineers can usually anchor AI suggestions in years of architectural context. For less experienced developers, the problem is the gap between their skill at generating code with AI and their genuine comprehension of it. They may ship clean, well-formatted changes that pass tests while being unable to explain why the code works or why it fails in edge cases. The result is a growing disconnect between output and insight: AI coding assistants dramatically increase the quantity of code produced, but they do far less to develop the reasoning muscles required for reliable debugging.

The Quiet Decline of Developer Debugging Abilities

Programmers at multiple companies describe a creeping sense that their hands-on skills are deteriorating. Many say they now “vibe code”: prompting AI until a solution appears, rather than systematically reasoning through a problem. Some compare the experience to forgetting phone numbers after smartphones became ubiquitous, because the brain offloads what it no longer routinely practices. Developers report that even when they recognize a bug, they struggle to trace through AI-generated logic they never truly internalized. Others feel burnt out by the mental load of coaxing AI systems instead of engaging in traditional problem-solving. What was once the core of the craft (stepping through stack traces, isolating conditions, and refining hypotheses) is increasingly replaced by prompt tweaking. Over time, this shift from active debugging to passive supervision erodes foundational skills that previously came from years of deliberate practice on smaller, hand-written codebases.

Code Review Quality Issues and the New ‘Expert Beginner’

The consequences of this skills erosion are surfacing most clearly in code reviews. Teams report situations where tests pass, reviews look clean, and yet subtle bugs, such as timing issues triggered only in rare conditions, slip into production. The juniors submitting these changes often cannot articulate why the implemented approach is right or wrong, because the AI wrote it. This dynamic echoes the “expert beginner” concept: developers who appear competent but lack depth. Today’s version is rooted not in arrogance but in over-dependence on AI. They move fast, ship frequently, and produce tidy pull requests, but their debugging intuition remains underdeveloped. That lowers the quality of code review and adds to the burden on senior engineers, who must now audit not only the human developer’s intent but also the opaque decisions of AI tools. The oversight gap widens when seniors themselves have not integrated AI into their everyday work and struggle to recognize emerging patterns in AI-produced code.
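To make the "tests pass, yet a timing bug ships" scenario concrete, here is a minimal Python sketch. It is an illustration only, not drawn from any team quoted here: the `Inventory` class, its method names, and the `pause` hook are all hypothetical. The racy method checks stock and then updates it in two separate steps, so a sequential test passes, while an interleaving forced with a barrier lets two callers reserve the same last item.

```python
import threading


class Inventory:
    """Hypothetical stock counter; all names here are illustrative assumptions."""

    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_racy(self, pause=lambda: None) -> bool:
        # Check-then-act: the check and the update are separate steps.
        if self.stock > 0:
            pause()  # stands in for any delay between the check and the update
            self.stock -= 1
            return True
        return False

    def reserve_locked(self) -> bool:
        # Holding the lock makes the check and the update a single atomic step.
        with self._lock:
            if self.stock > 0:
                self.stock -= 1
                return True
            return False


def run_two_threads(reserve) -> list:
    """Call `reserve` from two threads and collect both return values."""
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(reserve()))
        for _ in range(2)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


# The sequential test a reviewer is likely to see: it passes.
inv = Inventory(stock=1)
assert inv.reserve_racy() is True
assert inv.reserve_racy() is False

# Force the rare interleaving: both threads finish the check before either updates.
barrier = threading.Barrier(2)
racy = Inventory(stock=1)
racy_results = run_two_threads(lambda: racy.reserve_racy(pause=barrier.wait))

# With the lock, only one of the two concurrent callers can succeed.
locked = Inventory(stock=1)
locked_results = run_two_threads(locked.reserve_locked)
```

In this sketch both racy callers report success for a single item, which is the kind of defect a clean diff and a green test run will not reveal unless the reviewer can reason about what happens between the check and the update.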

Technical Debt Accumulation in the Age of Vibe Coding

The most alarming outcome of this shift is technical debt accumulating at a scale that is hard to track. Developers describe shipping large, AI-generated changes that no one fully understands, under pressure from management to demonstrate AI usage. Some teams skip thorough audits because the volume of generated code makes deep inspection impractical. Others concede they simply lack the debugging confidence to untangle what the AI produced. That debt (performance regressions, security gaps, brittle abstractions) often remains invisible until the next major refactor or incident. When future engineers revisit these codebases, they may discover sprawling logic that was never truly designed, only assembled through prompts. As AI coding assistants become part of day-to-day workflows, this vibe-coded history risks becoming a latent liability, where fixing bugs or adding features takes longer precisely because foundational design and debugging decisions were never consciously made by humans.

What Engineering Leaders Must Do to Protect the Craft

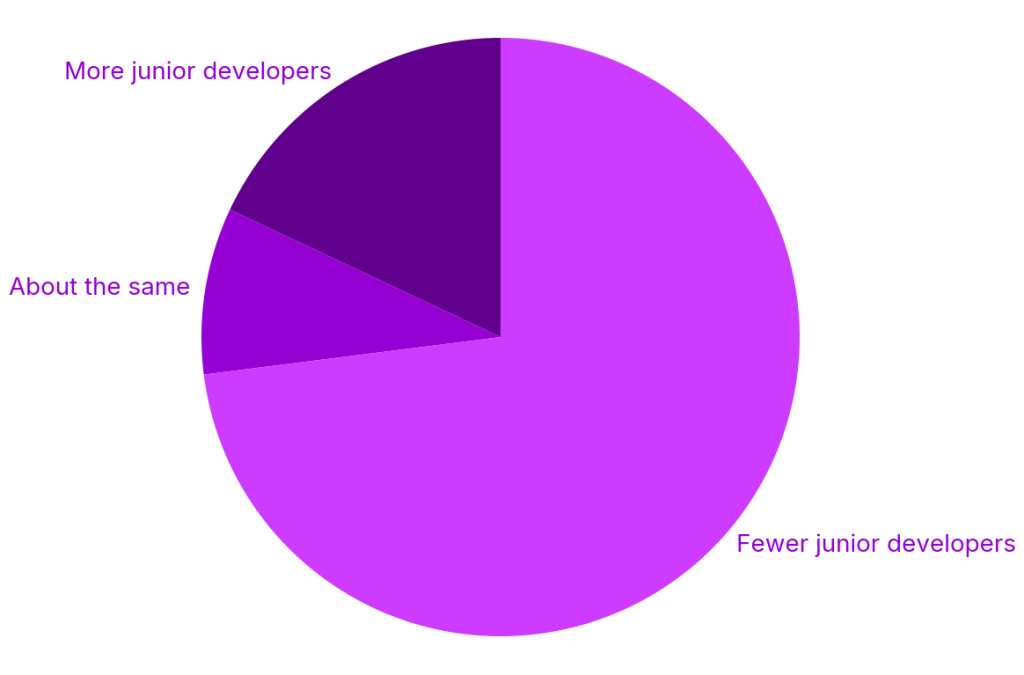

Engineering leaders now face a strategic choice: treat AI purely as a productivity lever, or deliberately use it to strengthen, not weaken, the craft. That starts by redefining expectations. Code produced with AI still needs human reasoning applied to it: teams should formalize standards for when and how AI output must be read, explained, and tested. Leaders can require developers to justify critical sections in their own words during reviews, reinforcing understanding rather than passive acceptance. They can also protect the talent pipeline by resisting the temptation to replace junior cohorts entirely with “seniors plus AI,” recognizing that debugging abilities mature through exposure to real problems. Training programs should explicitly teach how to audit AI-generated code, not just how to prompt for it. The goal is a new equilibrium where skill at generating code with AI coexists with robust human debugging ability, ensuring that speed does not come at the expense of long-term code health.