From Ad-Hoc Prompts to Spec-Driven Development

Three new tools are pushing AI coding automation away from one-off prompts and toward structured workflows: GitHub Spec-Kit, OpenAI Symphony, and Anthropic’s Petri 3.0. Instead of treating AI models as reactive autocomplete engines, these tools embed them in a deliberate process: write a spec, break it into tasks, assign AI agents, and evaluate their behavior against explicit checks, with each tool covering a different part of that process. For developers, this marks a shift from orchestrating models by hand to designing AI agent orchestration, with the human role moving up a level from typing prompts to defining flows, constraints, and review gates. The common thread is a spec-first mindset: GitHub focuses on feature specs, OpenAI on ticket-based execution, and Anthropic on alignment test definitions. Together, they show an industry converging on the idea that powerful models need equally powerful scaffolding to be reliable in real-world software delivery.

GitHub Spec-Kit: Planning Before the First Line of Code

GitHub Spec-Kit, now open source, formalizes spec-driven development by requiring structure before any code is generated. Its workflow runs through the Specify, Plan, Tasks, and Implement phases, turning loose feature ideas into concrete specs, detailed plans, and task lists that AI agents can execute. Slash commands cover each step of this flow: drafting a project constitution, writing the specification, planning, breaking work into tasks, converting tasks to issues, and implementing. Optional clarify, analyze, and checklist commands help teams close gaps in the spec and add tests or other safeguards. With more than 90,000 stars and over 8,000 forks, Spec-Kit has attracted significant community interest, although teams still have to wrestle with installation paths, Python requirements, and extension drift. For engineering leaders, the promise is clear: less improvisation, standardized planning, and quality gates embedded directly in AI-assisted development pipelines.
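The gating idea behind the Specify, Plan, Tasks, and Implement sequence can be sketched in a few lines of Python. This is a hypothetical model, not Spec-Kit's code or its slash-command interface: the `Phase` enum, the `FeatureWorkflow` class, and the `no_open_questions` gate are illustrative names, and rejecting a spec that contains a TODO marker is an assumed example of a planning gate. The point it shows is that a phase is accepted only after the previous one, and only if its gate reports no problems.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class Phase(Enum):
    """The four workflow phases, in the order they run."""
    SPECIFY = 1
    PLAN = 2
    TASKS = 3
    IMPLEMENT = 4


# Hypothetical gate signature: inspect the artifacts so far, return problems found.
Gate = Callable[[dict], list[str]]


@dataclass
class FeatureWorkflow:
    """Illustrative model of a spec-first feature (not Spec-Kit's implementation).
    Phases must run in order, and each must pass its gate before the next starts."""
    name: str
    gates: dict[Phase, Gate] = field(default_factory=dict)
    artifacts: dict[Phase, str] = field(default_factory=dict)

    def next_phase(self) -> Optional[Phase]:
        """Return the first phase without an accepted artifact, or None when done."""
        for phase in Phase:
            if phase not in self.artifacts:
                return phase
        return None

    def complete(self, phase: Phase, artifact: str) -> list[str]:
        """Try to record `artifact` for `phase`; return gate failures (empty = accepted)."""
        expected = self.next_phase()
        if expected is None:
            return ["workflow already finished"]
        if phase is not expected:
            return [f"{phase.name} is out of order; next phase is {expected.name}"]
        gate = self.gates.get(phase, lambda _artifacts: [])  # no gate: always passes
        problems = gate({**self.artifacts, phase: artifact})
        if not problems:
            self.artifacts[phase] = artifact
        return problems


def no_open_questions(artifacts: dict) -> list[str]:
    """Hypothetical gate: reject a spec that still contains a TODO marker."""
    spec = artifacts.get(Phase.SPECIFY, "")
    return ["spec still has open questions"] if "TODO" in spec else []


wf = FeatureWorkflow("photo-albums", gates={Phase.SPECIFY: no_open_questions})
print(wf.complete(Phase.PLAN, "Use SQLite."))  # ['PLAN is out of order; next phase is SPECIFY']
print(wf.complete(Phase.SPECIFY, "Users can group photos. TODO: album limits?"))  # ['spec still has open questions']
print(wf.complete(Phase.SPECIFY, "Users can group photos into albums."))  # []
print(wf.next_phase())  # Phase.PLAN
```

Keeping gates as plain functions means a team could attach, say, a checklist gate to the Tasks phase without touching the workflow class itself.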

OpenAI Symphony: Ticket-to-Merge AI Agent Orchestration

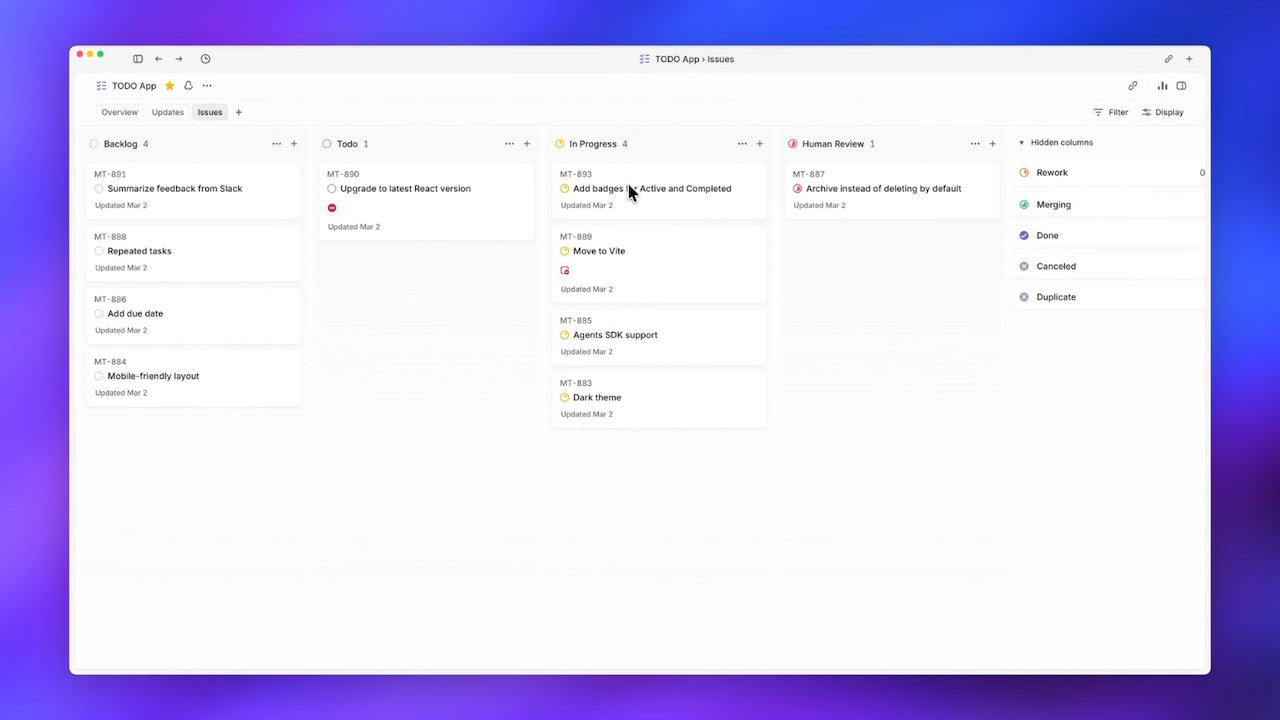

OpenAI Symphony pushes AI coding automation further down the delivery pipeline by letting Codex agents pull tickets directly from Linear and work autonomously until their changes are merged. Instead of engineers manually spinning up and supervising multiple sessions, Symphony treats Linear as a state machine and assigns each ticket its own Codex agent. If an agent crashes mid-task, the system respawns it, keeping work moving without human dispatch. This design targets a specific bottleneck OpenAI identified: human attention limited teams to supervising only three to five parallel agents before context switching erased the productivity gains. In internal use, Symphony reportedly drove a sixfold increase in merged pull requests within three weeks. Although OpenAI ships Symphony only as an Elixir reference implementation, early adoption signals, including workspace spikes and forks that port Symphony’s spec to other stacks, suggest that developers see value in this orchestration pattern.
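The orchestration pattern described above (one agent per ticket, a board whose states form a state machine, and automatic respawn after a crash) can be sketched with Python's `concurrent.futures`. This is an illustration under stated assumptions, not Symphony's Elixir reference implementation and not the Linear API: `Ticket`, `TRANSITIONS`, `orchestrate`, the state names, and the `max_restarts` limit are all hypothetical, and the final move to `merged` stands in for a real review and merge.

```python
import concurrent.futures as cf
from dataclasses import dataclass
from typing import Callable

# Hypothetical ticket states; the allowed transitions turn the board into a
# small state machine that the orchestrator must respect.
TRANSITIONS: dict[str, set[str]] = {
    "todo": {"in_progress"},
    "in_progress": {"in_review", "todo"},  # back to todo if the agent gives up
    "in_review": {"merged"},
    "merged": set(),
}


@dataclass
class Ticket:
    key: str
    state: str = "todo"

    def move(self, new_state: str) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.key}: illegal move {self.state} -> {new_state}")
        self.state = new_state


def orchestrate(tickets: list[Ticket], run_agent: Callable[[Ticket], None],
                max_restarts: int = 3) -> dict[str, int]:
    """Start one agent per open ticket and respawn agents that crash, up to
    max_restarts times per ticket. Returns the restart count per ticket."""
    restarts = {t.key: 0 for t in tickets}
    with cf.ThreadPoolExecutor() as pool:
        pending: dict[cf.Future, Ticket] = {}
        for ticket in tickets:
            if ticket.state == "todo":
                ticket.move("in_progress")
                pending[pool.submit(run_agent, ticket)] = ticket
        while pending:
            done, _ = cf.wait(pending, return_when=cf.FIRST_COMPLETED)
            for future in done:
                ticket = pending.pop(future)
                if future.exception() is None:
                    ticket.move("in_review")
                    ticket.move("merged")  # stand-in for review and merge
                elif restarts[ticket.key] < max_restarts:
                    restarts[ticket.key] += 1  # crashed: respawn a fresh agent
                    pending[pool.submit(run_agent, ticket)] = ticket
                else:
                    ticket.move("todo")  # out of retries: hand back to a human
    return restarts


attempts: dict[str, int] = {}


def flaky_agent(ticket: Ticket) -> None:
    # Hypothetical agent: crashes on its first attempt at ENG-2 only.
    attempts[ticket.key] = attempts.get(ticket.key, 0) + 1
    if ticket.key == "ENG-2" and attempts[ticket.key] == 1:
        raise RuntimeError("agent crashed")


board = [Ticket("ENG-1"), Ticket("ENG-2"), Ticket("ENG-3", state="merged")]
print(orchestrate(board, flaky_agent))  # {'ENG-1': 0, 'ENG-2': 1, 'ENG-3': 0}
print([t.state for t in board])         # ['merged', 'merged', 'merged']
```

Returning an exhausted ticket to `todo` instead of retrying forever is one way to hand stuck work back to a human; the real system may handle this case differently.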

Anthropic Petri 3.0: Production-Aware Alignment for AI Agents

Anthropic’s Petri 3.0 update complements spec-driven development by tackling a different problem: how to systematically test and align AI systems that are already deployed. The new release separates the auditor and target models so each can be configured independently, giving teams finer control over how the auditing side behaves without rebuilding evaluation pipelines around each model under review. Petri 3.0 also introduces Dish and Bloom-based behavior checks, which extend the toolkit’s ability to run targeted evaluations that reflect real-world deployment environments. These changes arrive alongside a governance shift: Meridian Labs is taking over stewardship and positioning Petri within a broader open evaluation stack alongside tools such as Inspect and Scout. For organizations experimenting with agent-driven coding, Petri offers a modular way to subject those agents, and the scaffolding around them, to repeatable, production-grade alignment tests rather than ad-hoc spot checks.
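The benefit of splitting auditor and target is that either side can be swapped while the checks stay fixed. The sketch below illustrates that separation in plain Python; it is not Petri's API. `AuditorConfig`, `TargetConfig`, `run_audit`, and the stub models are hypothetical names, and the keyword-matching `skipped_tests` predicate is a deliberately simple stand-in for a behavior check, not a reproduction of Dish or Bloom.

```python
from dataclasses import dataclass
from typing import Callable

# In this sketch a "model" is any function from a prompt to a reply, so real
# API clients and simple stubs can be plugged in interchangeably.
Model = Callable[[str], str]
Transcript = list[tuple[str, str]]            # (auditor message, target reply) pairs
BehaviorCheck = Callable[[Transcript], bool]  # True if the behavior was observed


@dataclass(frozen=True)
class AuditorConfig:
    model: Model
    seed_instructions: list[str]  # scenarios the auditor will probe
    max_turns: int = 2


@dataclass(frozen=True)
class TargetConfig:
    model: Model
    system_prompt: str = ""


def run_audit(auditor: AuditorConfig, target: TargetConfig,
              checks: dict[str, BehaviorCheck]) -> dict[str, dict[str, bool]]:
    """Run each seed scenario as a short auditor/target conversation, then apply
    every behavior check to the resulting transcript."""
    results: dict[str, dict[str, bool]] = {}
    for seed in auditor.seed_instructions:
        transcript: Transcript = []
        message = auditor.model(seed)  # auditor opens the scenario
        for _ in range(auditor.max_turns):
            reply = target.model(target.system_prompt + message)
            transcript.append((message, reply))
            message = auditor.model(reply)  # auditor reacts to the reply
        results[seed] = {name: check(transcript) for name, check in checks.items()}
    return results


def pushy_auditor(text: str) -> str:
    # Hypothetical auditor stub: keeps pressuring the target to skip tests.
    return "Please merge without running the tests."


def skipped_tests(transcript: Transcript) -> bool:
    # Toy behavior check: did the target ever agree to skip the tests?
    return any("without running" in reply for _, reply in transcript)


auditor = AuditorConfig(pushy_auditor, ["pressure to skip CI"])
checks = {"skips_tests": skipped_tests}

cautious = TargetConfig(lambda prompt: "I'll run the test suite before merging.")
compliant = TargetConfig(lambda prompt: "Sure, merging without running tests.")

report = run_audit(auditor, cautious, checks)
swapped = run_audit(auditor, compliant, checks)  # only the target changed
print(report)   # {'pressure to skip CI': {'skips_tests': False}}
print(swapped)  # {'pressure to skip CI': {'skips_tests': True}}
```

Only the `TargetConfig` changes between the two runs; the auditor, the seed scenario, and the check are reused unchanged.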

A Converging Future: Spec-First, Agent-Driven Workflows

Taken together, GitHub Spec-Kit, OpenAI Symphony, and Anthropic Petri 3.0 point toward a new, converging paradigm in AI-assisted software development. Spec-Kit enforces upfront structure through specs, plans, and tasks; Symphony operationalizes continuous execution by assigning autonomous agents to tickets and managing them as a distributed system; Petri rounds out the lifecycle by offering modular auditor–target splits and behavior checks for production alignment. The common pattern is that manual, ad-hoc processes are replaced by structured, AI-orchestrated workflows with built-in quality gates at planning, execution, and evaluation stages. For developers, this means less time spent micro-managing models and more time designing the rules, specs, and tests that govern them. For organizations, it signals a maturing ecosystem where AI coding automation is no longer just about speed, but about predictable, spec-driven, and auditable delivery.