From Grammarly to ‘anti‑Grammarly’: The rise of flawed-by-design writing

In Malaysian offices and campuses, AI-generated emails, reports and assignments are quickly becoming routine. Tools like ChatGPT, Gemini and dedicated AI email assistants now draft clear, well-structured and almost perfectly grammatical messages in seconds, sparing users the old struggle of starting from a blank page. That polished quality is exactly what made Grammarly popular – but it is also what now makes people suspicious. When every line reads as if a professional editor has reviewed it, colleagues and lecturers may wonder whether a machine is doing the heavy lifting. This is where “AI humanizer tools” enter the picture. Instead of correcting your English, they deliberately roughen it up so it feels more like everyday human writing. The trend signals a shift: generating text is now easy; standing out as genuinely human – or at least looking that way – has become the new challenge.

Sinceerly and the new ‘anti‑polish’ approach to AI-generated emails

One of the most talked‑about examples is Sinceerly, a browser plugin created by Harvard Business School student Ben Horwitz and often described as an “anti‑Grammarly” tool. It takes AI-generated emails and intentionally adds small imperfections: a dropped letter, a slightly odd phrase or a minor inconsistency in tone. Instead of turning rough drafts into perfect English, it nudges polished text back toward the messy reality of how people actually type quick messages. The name itself – misspelling “sincerely” as “sinceerly” – makes the point: a little imperfection can feel more authentic than a flawless sign‑off. Sinceerly also offers several modes, ranging from mostly polished text with one or two mistakes to looser, more casual styles that read like a quick WhatsApp reply rather than a carefully edited memo.

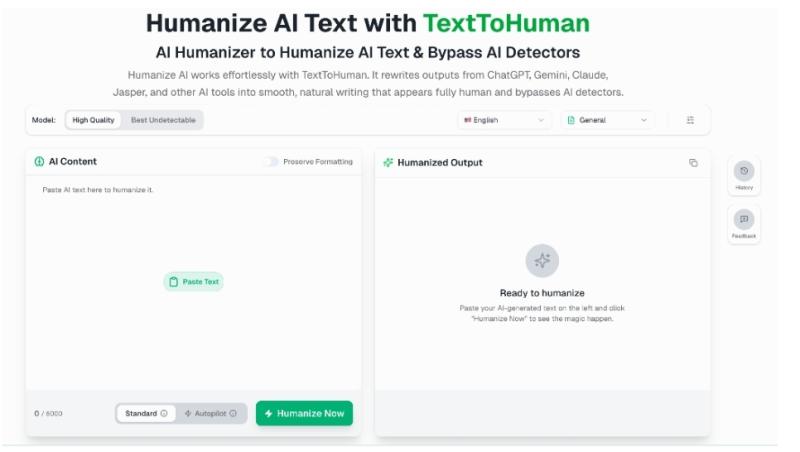

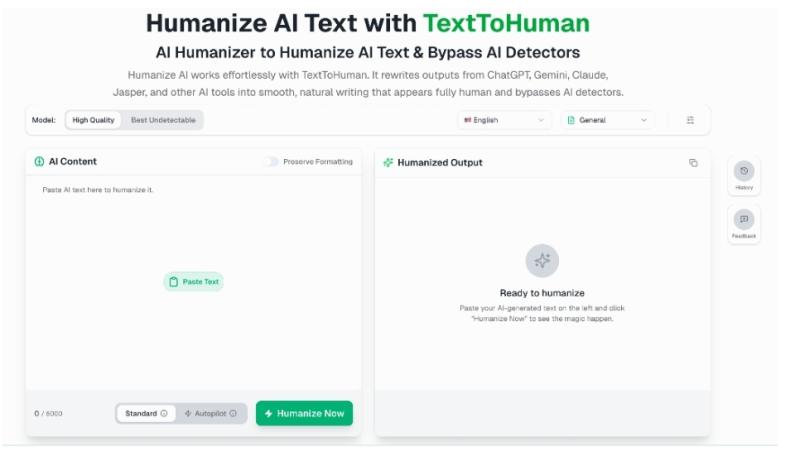

Beyond typos: TextToHuman and AI humanizer tools that chase human-like writing

Other AI humanizer tools focus less on typos and more on tone and rhythm. TextToHuman, for example, is marketed to students and professionals as a way to turn robotic AI drafts into smoother, more natural, human-like writing. Rather than sprinkling in obvious errors, it rewrites sentences to break up the predictable language patterns that many AI writing detection services look for. The platform emphasises readability and flow, aiming to make text sound like a person thinking through an idea rather than a system optimising for grammar. Users paste in AI-generated content, choose a mode and receive a rephrased, less uniform version, with alternative wordings for individual sentences if they want more control. This reflects a broader shift: users are no longer satisfied with generic AI output. They want purpose‑built tools that make their work sound like them, without triggering suspicion from supervisors, clients or lecturers who are wary of AI assistance.

Why ‘too perfect’ writing feels suspicious in schools and offices

Malaysian students and office workers know the feeling: you send a carefully crafted English email, only to be asked, “Did you use AI for this?” As AI systems produce flawless grammar and highly structured paragraphs on demand, polished writing is increasingly treated as a sign that a chatbot did the work. AI writing detection tools reinforce this anxiety by scoring texts as likely “AI-generated” based on patterns that can also appear in perfectly competent human writing. Some professionals worry that the years they spent improving their English are now undermined because their work “looks like AI.” For students, the stakes are higher: if AI writing detection software flags an assignment, they risk being accused of cheating even if they used AI only lightly, or not at all. This tension drives interest in AI humanizer tools that promise to hide the machine’s fingerprints by reintroducing small quirks and inconsistencies.

Ethics, privacy and practical tips for Malaysian users

For Malaysians, the big question is not just whether AI humanizer tools work, but when they are appropriate. Lightly humanising a draft for a casual work email or an internal note may be acceptable if you are open about using AI to save time. However, using these tools to disguise fully AI-written assignments, official reports or research papers crosses an ethical line and can violate university or company policies. Institutions may respond by tightening rules, updating honour codes or requiring evidence of the writing process, such as outlines and drafts. Privacy is another concern: pasting client data, financial details or confidential student information into third‑party tools can expose sensitive content. Before using any AI humanizer, check its data policy, leave out confidential information, and consider whether a simpler approach – editing the AI draft yourself to add your natural voice – might be safer, more ethical and ultimately more convincing.