Remy: From Chatbot to Always-On Gemini AI Agent

Google’s experimental Remy project shows how quickly Gemini is moving beyond static chat toward true AI agents. Described internally as a “24/7 personal agent,” Remy is being tested by employees inside a staff-only Gemini app, where it can monitor information relevant to each user, act on their behalf, and learn their preferences over time. Unlike simple prompt-and-response chat, Remy is meant to work across Google’s connected services (Gmail, Calendar, Docs, Drive, Tasks, and Photos) and even third-party apps such as WhatsApp and Spotify to handle multi-step, real-world workflows. That raises the stakes on control. Google is leaning on its Gemini Privacy Hub to let users inspect logs, delete activity, and restrict which data feeds personalization. Google’s research and Cloud guidance emphasize least-privilege access and auditable actions, reflecting how much trust is required once an autonomous agent is allowed to plan, decide, and execute actions that go beyond a single query.
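Least privilege and auditability are easier to picture in code. The sketch below is a minimal illustration that assumes a hypothetical `ScopedAgent` wrapper and made-up scope names such as `calendar.read`. It is not Google’s API and does not reflect how Remy or the Privacy Hub are actually built. The agent may only perform actions covered by scopes the user granted, and every attempt, allowed or refused, is written to a log the user can inspect, revoke permissions against, or clear.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    timestamp: str
    action: str
    scope: str
    allowed: bool


@dataclass
class ScopedAgent:
    """Hypothetical agent wrapper: it may only use scopes the user granted."""
    granted_scopes: set[str]
    audit_log: list[AuditEntry] = field(default_factory=list)

    def act(self, action: str, required_scope: str) -> bool:
        # Least privilege: refuse any action whose scope was not granted.
        allowed = required_scope in self.granted_scopes
        # Auditability: record every attempt, including refused ones.
        self.audit_log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            action=action,
            scope=required_scope,
            allowed=allowed,
        ))
        return allowed

    def revoke(self, scope: str) -> None:
        # The user can withdraw a permission at any time.
        self.granted_scopes.discard(scope)

    def clear_activity(self) -> None:
        # Loosely analogous to deleting activity from a privacy dashboard.
        self.audit_log.clear()


if __name__ == "__main__":
    # Scope names are invented for illustration, not real OAuth scopes.
    agent = ScopedAgent(granted_scopes={"calendar.read", "gmail.read"})
    agent.act("summarize today's meetings", "calendar.read")
    agent.act("send a reply to Alice", "gmail.send")  # refused: scope never granted
    for entry in agent.audit_log:
        print(entry.action, entry.scope, entry.allowed)
```

Logging refused attempts as well as successful ones is a deliberate choice here: a denied action is often the most useful signal when a user reviews what an agent tried to do.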

Seven Hidden Gemini Live Models and a New Thinking Variant

A hidden model selector discovered in the Google app points to seven new Gemini Live options, suggesting a rapid expansion of agentic models tailored to different tasks. They include several Audio-to-Audio models, a personalization-focused P13n variant, and a dedicated thinking variant labeled A2A_Rev25_RC2_Thinking. Early testing shows they behave noticeably differently: some access live location to report the weather while others do not, and the personalization model remembers user details and naturally brings them up later, improving conversational continuity. A model codenamed Capybara even identifies itself as “Gemini 3.1 Pro,” hinting at a more capable model than the Flash Live baseline. Because these models are activated through server-side flags, Google can roll them out or swap them in dynamically, for example during events like I/O, to showcase specialized live behaviors, from memory-rich conversations to deeper reasoning, without requiring users to install new software.
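Server-side flags are what make this kind of swap possible without an app update. As a rough sketch, assume a hypothetical flag payload: the field names `live_model_override` and `rollout_percent` and the `flash-live-baseline` label are invented for illustration, since Google’s actual flag format is not public. A client could hash each user ID into a stable bucket and route only a percentage of users to the override model.

```python
import hashlib

# Hypothetical server-side flag payload; field names are illustrative only.
SERVER_FLAGS = {
    "live_model_override": "A2A_Rev25_RC2_Thinking",
    "rollout_percent": 10,
}

BASELINE_MODEL = "flash-live-baseline"  # placeholder label, not a real model ID


def bucket(user_id: str, salt: str = "live_model_override") -> int:
    """Map a user ID to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def select_live_model(user_id: str, flags: dict) -> str:
    """Pick a model from flags fetched at runtime instead of baked into the app."""
    override = flags.get("live_model_override")
    percent = flags.get("rollout_percent", 0)
    # Users whose bucket falls below the rollout percentage get the override.
    if override and bucket(user_id) < percent:
        return override
    return BASELINE_MODEL


if __name__ == "__main__":
    # Raising rollout_percent to 100 on the server routes every user to the override.
    everyone = {**SERVER_FLAGS, "rollout_percent": 100}
    print(select_live_model("user-123", everyone))  # A2A_Rev25_RC2_Thinking
    # Removing the flag sends everyone back to the baseline.
    print(select_live_model("user-123", {}))  # flash-live-baseline
```

Because the bucket comes from a hash rather than a fresh random draw on each request, a given user keeps seeing the same model while the rollout percentage stays fixed, and raising the percentage on the server widens the audience without shipping new software.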

Thinking Models and Gemini Omni: Reasoning as a Platform Play

The emerging thinking variant and an early look at Gemini Omni hint at Google’s next frontier: reasoning-first systems that operate as platforms, not just chatbots. The A2A_Rev25_RC2_Thinking model is tuned for complex reasoning in live conversation, while Omni, an extension of Google’s Veo video-generation foundation, demonstrates multimodal reasoning by interpreting a prompt describing a professor writing a trigonometric proof on a chalkboard. In tests, Omni generated lifelike scenes but still showed typical generative glitches, and strict guardrails blocked certain benchmark prompts. Together, these systems underline Google’s belief that agentic AI will depend on models that can plan, infer, and adapt across modalities rather than merely answer questions. If Gemini’s thinking models can reliably handle long, messy tasks and ambiguous instructions, they will underpin autonomous capabilities that feel less like demos and more like infrastructure for real-world tools.

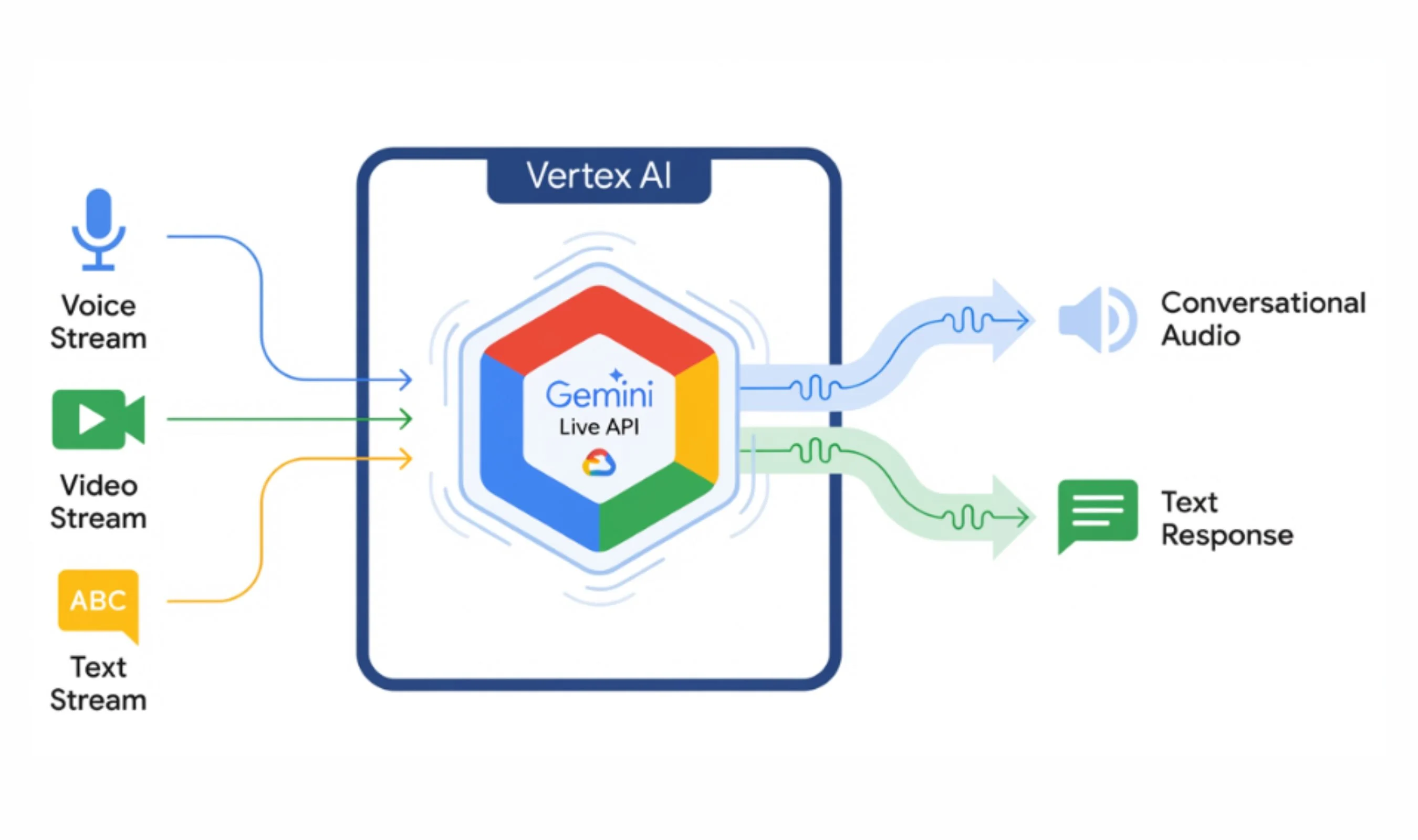

Gemini 3.1 Flash-Lite and the Enterprise Agent Platform

Behind the headline models, Google is investing in the less glamorous but crucial plumbing that agentic workflows need. Gemini 3.1 Flash-Lite is optimized for low-latency, high-volume processing, making it a candidate backbone for enterprise agents that must respond quickly while orchestrating tool calls and API chains at scale. On the cloud side, the Gemini Enterprise Agent Platform bundles identity, observability, orchestration, and security to help teams manage fleets of AI agents. It is aimed squarely at developers who judge models not by benchmark scores but by whether they cut down on cleanup work and hold up through messy, multi-step tasks. With Flash-Lite handling fast, cost-sensitive tasks and more capable agents like Remy or Capybara-class models tackling reasoning-heavy steps, Google is trying to build a layered stack that supports everything from lightweight automations to deeply integrated autonomous workflows across Workspace and custom business apps.
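One way to picture the layered stack is a router that sends short, well-specified work to a fast tier and escalates the rest. This is a minimal sketch under stated assumptions: the tier labels, the `Task` fields, and both thresholds are hypothetical placeholders, not Gemini model identifiers or documented platform behavior.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    # Placeholder tier labels, not real API model identifiers.
    FAST = "flash-lite-class"
    REASONING = "thinking-class"


@dataclass(frozen=True)
class Task:
    description: str
    steps: int              # estimated number of tool calls or sub-steps
    ambiguous: bool         # whether the instructions need interpretation
    latency_budget_ms: int  # how long the caller can wait for an answer


def route(task: Task, max_fast_steps: int = 2,
          reasoning_min_latency_ms: int = 2000) -> Tier:
    """Send short, well-specified work to the fast tier; escalate the rest."""
    needs_reasoning = task.ambiguous or task.steps > max_fast_steps
    # Escalate only if the caller can afford the slower reasoning tier.
    if needs_reasoning and task.latency_budget_ms >= reasoning_min_latency_ms:
        return Tier.REASONING
    return Tier.FAST


if __name__ == "__main__":
    tasks = [
        Task("tag an incoming support email", 1, False, 300),
        Task("plan a team offsite across calendars", 6, True, 10_000),
        Task("draft a quick reply to a vague request", 1, True, 500),
    ]
    for t in tasks:
        print(t.description, "->", route(t).value)
```

The latency check encodes a trade-off: when the caller cannot wait for the reasoning tier, this router falls back to the fast tier instead of blocking. A production system might instead queue the task or ask the user, which is why the thresholds are exposed as parameters.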

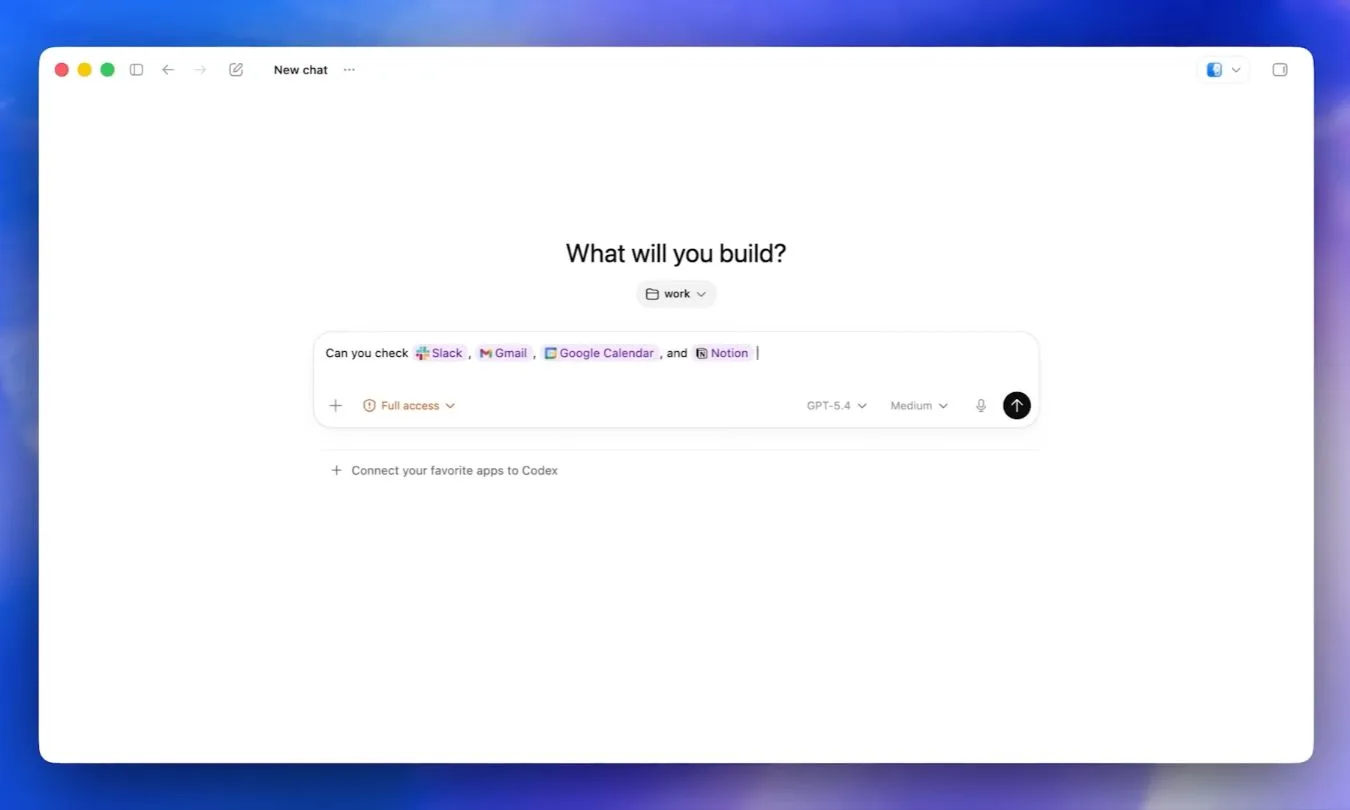

Competing with ChatGPT by Owning Agentic Workflows

As OpenAI pushes toward more autonomous ChatGPT experiences, Google is repositioning Gemini to compete not just on model quality but on workflow ownership. Developer-focused events highlight “agentic coding,” and Google knows coders will quickly expose any gap between keynote polish and production reality. The company’s strategy combines multiple tiers of Gemini models (Flash-Lite, Pro, thinking variants, and audio and video engines) into a coherent ecosystem of agents that can act, remember, and coordinate tools under clear governance. Success hinges on more than raw intelligence: agents must respect privacy controls, log their decisions, and operate with permissions scoped to each user’s risk tolerance. If Google can turn Remy-like agents and the new thinking models into dependable, auditable teammates rather than novelty chatbots, it could narrow the perceived capability gap with OpenAI and make Gemini the default platform for orchestrated, autonomous workflows.