From Power Users to the Mainstream: AI Agents Go Consumer

Until now, AI agents have largely lived in specialist tools and enterprise workflows, where power users chain models, APIs, and automation scripts together. That’s starting to change. A new wave of AI agents is being designed for consumer apps, with natural language as the primary interface rather than dashboards and developer consoles. Meta and Hugging Face are emerging as two of the clearest examples of this shift. Meta is weaving an autonomous agent directly into social platforms billions of people already use, while Hugging Face is turning a desktop robot into a no-code playground for agentic behavior. In both cases, autonomous agents powered by natural language are moving closer to everyday users and everyday contexts such as shopping, content creation, learning, and even group facilitation. That marks a transition from experimental, technical toys to tools that can quietly run in the background and advance personal goals.

Meta’s Hatch Agent: A Socially Grounded Companion Inside Your Feed

Meta’s upcoming Hatch agent is designed as a consumer-grade autonomous assistant that lives inside Meta’s existing apps rather than in a separate chatbot. Early code traces suggest a waitlist-based rollout and a wide task portfolio: image and video generation, shopping flows, learning sessions, research workloads, and groundwork for scheduled tasks and file generation. Unlike many productivity-focused agents, Hatch is expected to lean on Meta’s deep social graph, reaching into Instagram and Facebook to turn feed exploration, creator discovery, and shopping research into agent-driven workflows. It reflects Mark Zuckerberg’s vision of agents that work “day and night” toward user goals, quietly handling background tasks while users scroll. Internal testing is reportedly targeted for mid-year, using mock environments modeled after popular platforms to train the agent's tool use. For consumers, Hatch hints at AI agents that feel less like separate apps and more like native capabilities threaded through familiar social experiences.

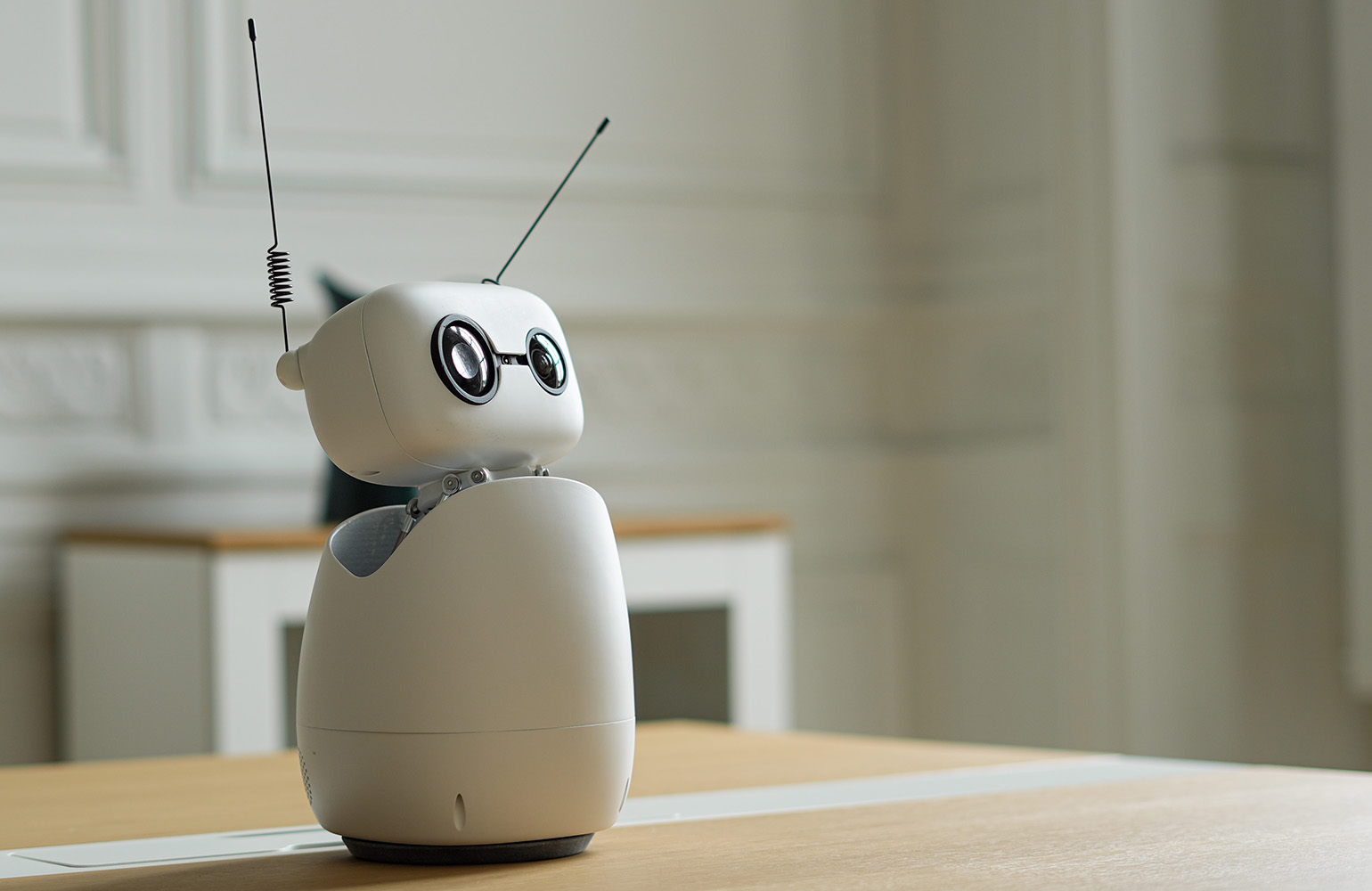

Hugging Face’s Agentic Toolkit: Describe the App, Let the Agent Code It

If Meta is embedding agents into social feeds, Hugging Face is embedding them into creation itself. Its agentic toolkit for the Reachy Mini desktop robot lets users build working apps in under an hour without writing code. Instead, users describe the desired behavior in plain English, and an AI agent writes, tests, and deploys the code to the robot. This collapses three historic barriers in robotics (expertise, expensive bespoke hardware, and lengthy integration work) into a simple web flow on the Hugging Face Hub. The impact is visible in early adopters like Joel Cohen, a non-developer who built a voice-controlled co-facilitator for CEO peer groups simply by describing what he wanted. Apps live in a searchable, forkable catalog, can be modified through natural language, and can even run in a browser-based simulator. It’s a concrete example of autonomous agents using natural language to translate human intent directly into functioning software.

Lowering the Barrier: Natural Language as the New API

Both Hatch and Hugging Face’s agentic toolkit highlight the same structural change: natural language is becoming the primary API for automation. Meta’s Hatch positions image generation, shopping, and research as conversational tasks that an agent can pursue continuously, while Hugging Face lets users specify complex robotic behaviors as simple instructions: wake on a voice cue, greet participants by name, summarize discussions, or react to a chess blunder. In each case, the user does not see code, APIs, or configuration files. The AI agent assumes responsibility for planning, tool selection, and integration. This lowers the barrier to AI agents in consumer apps, opening them to people who may never have used automation tools or scripting. As app stores for agents and agent-powered experiences grow, users can copy, remix, and adapt behaviors through plain language, turning automation into something as accessible as searching or texting.
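
To make the "planning and tool selection" step concrete, here is a deliberately tiny sketch in Python. It is not how Hatch or the Hugging Face toolkit works internally; neither company has published that detail here. The `Tool` class, the `plan` and `handle` functions, and the keyword lists are all hypothetical, and simple keyword matching stands in for the language model a real agent would use to interpret a request.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """A hypothetical capability the agent can invoke for the user."""
    name: str
    keywords: tuple[str, ...]
    run: Callable[[str], str]


# Illustrative tools only; these do not correspond to any real Meta or Hugging Face API.
TOOLS = [
    Tool("greet", ("greet", "welcome"), lambda req: "greeting participants by name"),
    Tool("summarize", ("summarize", "summary", "recap"), lambda req: "summarizing the discussion"),
    Tool("shop", ("buy", "shop", "price"), lambda req: "researching products"),
]


def plan(request: str) -> list[Tool]:
    """Select every tool whose keywords appear in the request, ordered by first mention."""
    text = request.lower()
    hits = []
    for tool in TOOLS:
        positions = [text.find(k) for k in tool.keywords if k in text]
        if positions:
            hits.append((min(positions), tool))
    # Sort on the position only, so Tool objects are never compared with each other.
    return [tool for _, tool in sorted(hits, key=lambda hit: hit[0])]


def handle(request: str) -> list[str]:
    """Turn a plain-language request into a list of executed steps."""
    steps = plan(request)
    if not steps:
        return ["no matching tool; ask the user to rephrase"]
    return [f"{tool.name}: {tool.run(request)}" for tool in steps]


if __name__ == "__main__":
    for line in handle("Greet everyone, then summarize the discussion"):
        print(line)
```

The point of the sketch is the shape of the interaction: the user supplies one sentence, and the agent decides which capabilities to call and in what order. Swapping the keyword matcher for a language model changes how well requests are understood, not what the user has to type.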

From Enterprise Automation to Everyday Agent Companions

Consumer-grade agents signal a broader shift in how AI automation is conceived and delivered. Enterprise systems have long used bots for research, customer support, and data processing, but these tools were framed as back-office utilities. Meta and Hugging Face are instead treating autonomous agents as companions that live where users already spend time—inside social feeds or on the desktop, visible and interactive. Meta’s roadmap even hints at agentic shopping that keeps users inside Instagram while researching and checking out products. Hugging Face’s Reachy Mini apps turn a small robot into a language tutor, anti-procrastination coach, or office receptionist. As these approaches mature, the line between “app” and “agent” will blur. Rather than downloading yet another tool, users will increasingly expect everyday services to quietly orchestrate multi-step tasks on their behalf, guided only by conversation and preference, not by technical skill.