Android 17 steps up as a serious content creation platform

Google is framing Android 17 less as a routine OS update and more as a statement of intent: smartphones should be production tools, not just playback devices. The company is rolling out a suite of creator features in Android 17 that covers the entire workflow, from capture to edit to upload. At the Android Show I/O Edition, it highlighted new camera pipelines for third-party apps, closer integration with Instagram, and on-device AI tools designed to reduce the friction of making short-form video. This focus reflects how many creators already work: shooting, cutting, and posting directly from their phones instead of relying on laptops. Google’s own engineering leadership describes the goal as trading endless “screen time” in editing apps for more streamlined tools that let creators stay present while still publishing consistently. In practice, that means tighter defaults, smarter automation, and fewer reasons to reach for a desktop.

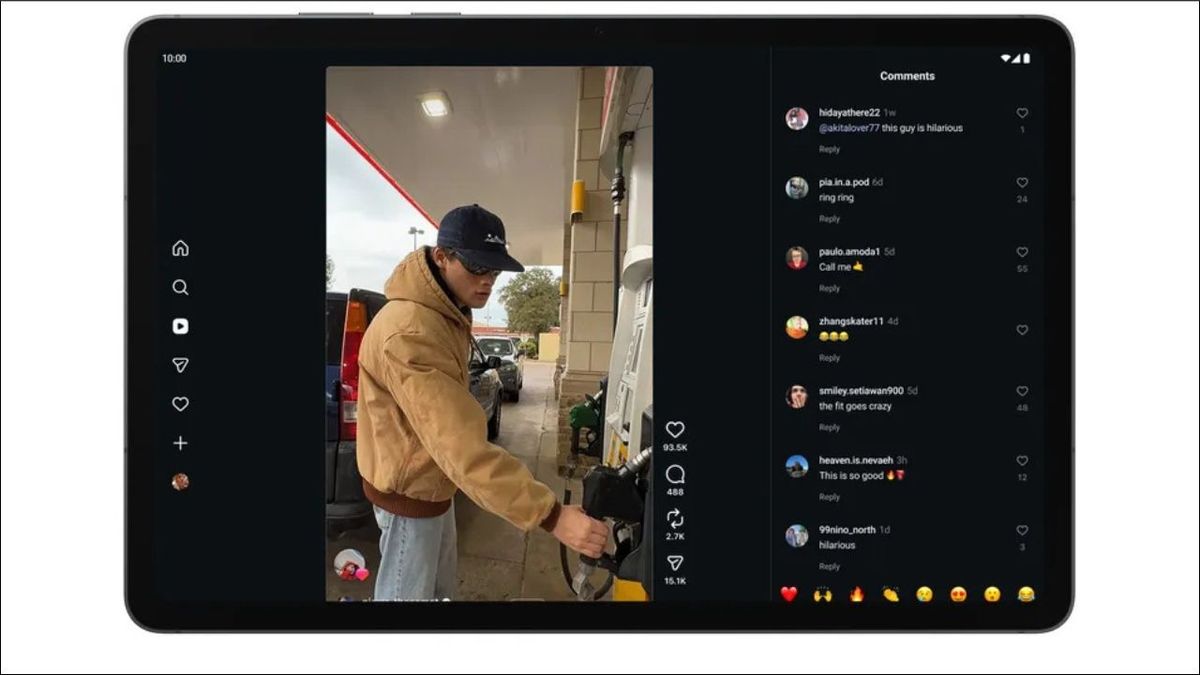

Screen Reactions turn Pixels into reaction-video machines

Reaction videos remain one of the most widely shared formats on social platforms, but they’ve traditionally required awkward setups involving multiple devices, separate recording apps, or green screens. Android 17’s Screen Reactions feature aims to collapse that complexity into a single native tool. Rolling out first on Pixel smartphones, Screen Reactions lets users record their face and their phone screen simultaneously, compositing both feeds into a social-ready layout without extra hardware. Google positions this as a way to create professional-looking reactions to clips, games, or TikTok-style content directly on the device where that content is playing. Because it’s built into the system rather than shipped as a standalone app, it also cuts down on export and re-import steps between editors and social platforms. Google has committed to bringing Screen Reactions to other Android devices after its Pixel debut, signaling that reaction workflows are now a first-class use case for the platform.

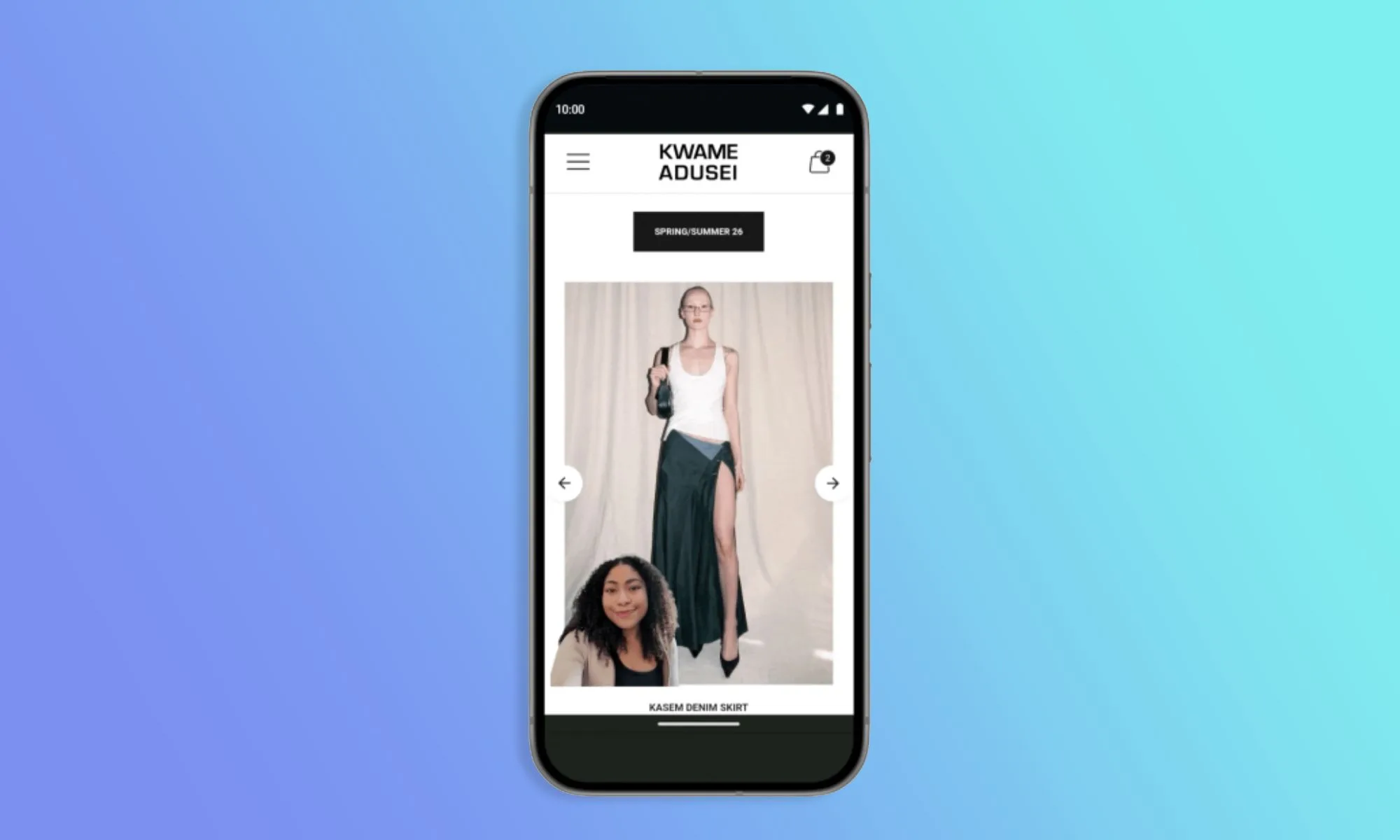

Adobe Premiere arrives on Android to rival iOS editing stacks

One of the most significant moves for Android content creation is the arrival of Adobe Premiere on Android phones and tablets. Previously available on Windows, Mac, iOS, and iPadOS, Adobe’s video suite has long been a staple for creators who need cross-device workflows. Google says the Android release will land this summer and will include exclusive templates and effects tailored for YouTube Shorts, along with direct upload capabilities. With the same suite available on both desktop and handheld devices, mobile editors can maintain a more consistent timeline structure and branding across them. While Google hasn’t detailed interface changes, it hints at streamlined workflows designed for smaller screens and a tablet-optimized layout, which also aligns with its Googlebook push. In contrast to Apple’s limited Final Cut Pro access on mobile hardware, Adobe Premiere on Android could bring pro-style editing options to a broader range of devices, helping narrow the gap between Android and iOS in mobile video editing tools.

AI-powered Instagram Edits reshape color, clarity, and sound

Android 17’s creator push also brings AI directly into the editing pipeline through Instagram’s Edits app on Android. Two new AI-powered editing tools, Smart Enhance and Sound Separation, are coming as Android-exclusive features. Smart Enhance performs one-tap upscaling and enhancement of photos and videos using on-device AI, automating tasks like sharpening, noise handling, and color tuning that previously required manual grading. Sound Separation targets audio problems by isolating individual layers such as dialogue, background noise, or music, allowing creators to recover usable sound from noisy environments without reshoots. These tools sit alongside broader camera upgrades in Instagram on Android, including Ultra HDR capture and playback, improved video stabilization, and Night Sight integration for low-light scenes. Combined, they allow creators to capture higher-quality footage and then quickly polish both visuals and audio within the same ecosystem, reducing dependency on separate audio or color-grading apps.

Deeper Instagram integration and what it means for Android creators

Beyond AI editing, Android 17 focuses heavily on how content moves from the camera to Instagram. Google has worked with Meta to align Instagram’s capture pipeline with the capabilities of Android flagships, enabling features like Ultra HDR and advanced stabilization directly within the Instagram camera. Night Sight support brings the phone’s native low-light intelligence into third-party capture, while broader camera APIs unlock hardware features such as Super Resolution for external apps. Google claims that, according to its Universal Video Quality AI model, videos shot and uploaded from Android flagships now match or surpass those from the leading competitor on perceived quality. Instagram is also being optimized for larger Android screens, such as tablets and foldables, improving editing and viewing experiences. Combined with Screen Reactions and Adobe Premiere on Android, these improvements signal that Google wants creators to see Android not as a compromise, but as a primary platform capable of end-to-end professional workflows.