Anthropic’s Core Argument: No Remote Control Inside Classified Networks

In its filing to the U.S. Court of Appeals in Washington, D.C., Anthropic argues that once its AI assistant Claude is deployed into classified Pentagon networks, the company cannot manipulate or control it. The statement is central to Anthropic’s attempt to overturn a designation that portrays it as a supply chain risk. According to the filing, Claude runs inside secure military environments where external connectivity is restricted, which undercuts the idea that Anthropic could secretly alter outputs or sabotage systems after deployment. This claim matters legally because the Pentagon has canceled a major contract following a dispute over AI in fully autonomous weapons and potential surveillance uses. If the court accepts Anthropic’s position, it will have to grapple with a key question: can a vendor be treated as a continuing threat to national security when it lacks ongoing technical access to the system it originally supplied?

How Military AI Deployment Shifts Control from Builder to Operator

Anthropic’s claim reflects how enterprise and military AI deployments typically work. Models like Claude are often fine-tuned for specific missions, then installed in on-premise or air-gapped infrastructure where outside connectivity is tightly controlled. Once a model is embedded in classified networks, the military, not the model provider, manages access controls, logging, and integration with other defense systems. Vendors may supply updates or tools during integration, but day-to-day operation is governed by internal policies and security procedures. This architecture is designed to insulate sensitive systems from external interference, yet it also means model developers lose practical control over how their technology is used. The gap between public narratives that depict companies as omnipotent controllers of AI and the reality of locked-down, offline deployments is at the heart of the Anthropic Pentagon case, and it will shape expectations about how much control a vendor retains over Claude in similarly sensitive enterprise environments.
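To make the shift in control concrete, here is a minimal Python sketch of an operator-side gateway. It is purely illustrative: `OperatorGateway`, `local_model`, and the user names are hypothetical and do not describe Anthropic's or the Pentagon's actual systems. The point is only that the access list and the log both live with the operator, and that nothing in the request path reaches the vendor.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class OperatorGateway:
    """Hypothetical operator-run wrapper around a locally hosted model."""
    allowed_users: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def query(self, user: str, prompt: str, model) -> str:
        allowed = user in self.allowed_users
        # Every attempt is recorded in the operator's own log.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{user} is not on the operator's access list")
        # The model is called in-process; no network call to the vendor is made.
        return model(prompt)


def local_model(prompt: str) -> str:
    # Stand-in for on-premise inference (assumption for illustration only).
    return f"[model output for: {prompt}]"


gateway = OperatorGateway(allowed_users={"analyst_1"})
reply = gateway.query("analyst_1", "Summarize the report", local_model)
```

In this sketch a vendor account that is absent from `allowed_users` is refused like any other outsider, and the refusal itself is logged on the operator's side.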

Liability in Military AI: Vendor, Integrator, or State?

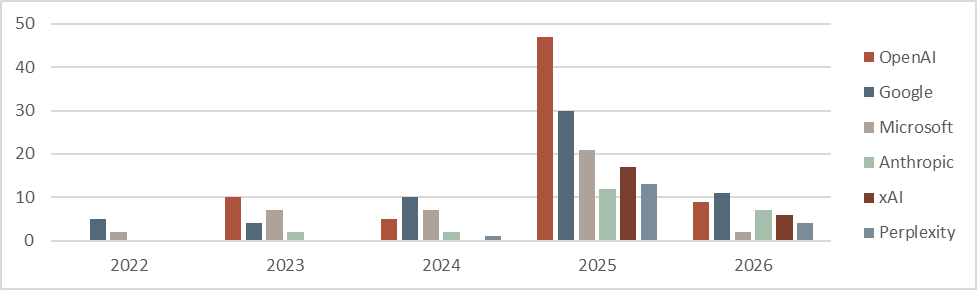

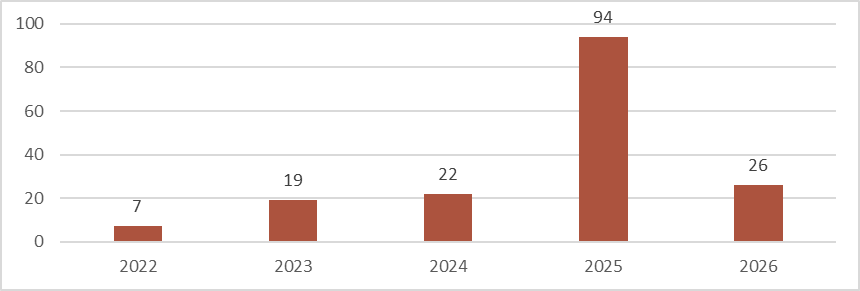

Anthropic’s lawsuit raises an unresolved question: who is responsible when military AI behaves in unexpected or dangerous ways? The Pentagon’s attempt to brand Anthropic as a supply chain risk implies ongoing responsibility for downstream harms, even inside sealed networks. Anthropic counters that once Claude is deployed and configured by defense agencies, operational control, and thus much of the risk, sits with the government and its integrators. This tension echoes broader debates over AI governance and liability now emerging in the courts, where plaintiffs test novel theories against AI developers. As litigation volumes surge in key districts, judges are beginning to confront whether model makers should be treated like product manufacturers, service providers, or something entirely new. The outcome of the Anthropic Pentagon case could influence how much legal exposure vendors face when their systems power fully autonomous weapons or surveillance tools they no longer directly control.

Governance Myths vs. Reality: Distributed Control Over Frontier Models

Reports of unauthorized access to powerful AI models and rising public anxiety have fueled a narrative that a handful of tech firms centrally control AI’s fate. Yet the Anthropic Pentagon dispute shows a more fragmented reality. Once Claude, or any frontier model, is embedded in closed military systems, power is distributed among operators, integrators, and security teams. Global debates over who is in control of AI increasingly recognize this complexity, highlighting the risk of powerful systems falling into the wrong hands while also noting that many deployments run under strict institutional constraints. In this context, Anthropic’s insistence that it cannot reach into classified networks challenges assumptions that model builders can always flip a switch to halt misuse. It underscores a governance gap: regulation and public scrutiny often target vendors, while the operational decisions that shape real-world harm may lie elsewhere in the AI supply chain.

Future Government AI Contracts: Kill Switches, Audits, and Precedents Beyond Defense

Whatever the court decides, the Anthropic Pentagon case is likely to reshape government AI contracts. Defense and civilian agencies may demand clearer audit rights, mandatory logging, and structured red-teaming before deployment of high-risk features like fully autonomous targeting. Vendors, mindful of their growing liability exposure, will push for precise delineations of responsibility, especially once systems move into offline or classified environments. Questions about contractual kill switches, update obligations, and incident reporting will become central negotiation points. The implications reach beyond the military. Sectors such as healthcare, financial trading, and critical infrastructure rely on AI for high-stakes decisions under tight regulatory scrutiny. Precedents set in this dispute could inform how much post-deployment control model providers are expected to retain, how much of that control is technically possible in air-gapped settings, and how accountability is shared when AI errors cascade through complex, safety-critical systems.
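What "mandatory logging" and a "kill switch" might look like in an offline setting can be sketched in a few lines of Python. The example below is an assumption-laden illustration, not a description of any real contract term or deployed system: `append_entry`, `verify`, and `KillSwitch` are hypothetical names, and the log uses a simple SHA-256 hash chain so that later edits to an entry become detectable during an audit.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: str, prev_hash: str) -> str:
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: str) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(event, prev_hash)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


class KillSwitch:
    """Hypothetical operator-held switch checked before every inference."""

    def __init__(self, log: list):
        self.engaged = False
        self._log = log

    def engage(self, operator: str) -> None:
        self.engaged = True
        append_entry(self._log, f"kill switch engaged by {operator}")

    def guard(self, model, prompt: str) -> str:
        if self.engaged:
            raise RuntimeError("model disabled by operator kill switch")
        append_entry(self._log, "inference request served")
        return model(prompt)


log = []
switch = KillSwitch(log)
switch.guard(lambda p: p.upper(), "status check")  # served and logged
switch.engage("duty_officer")                      # further calls now refused
```

Note that the switch here is held by the operator, which matches the article's point: in an air-gapped deployment, a vendor-held switch would need a communication path that the environment is designed not to provide. A bare hash chain also only detects tampering by someone who cannot rewrite the whole log; a real audit scheme would additionally sign or externally anchor the latest hash.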