Liquid Glass: A Design Revolution That Dominated the iOS 26 Conversation

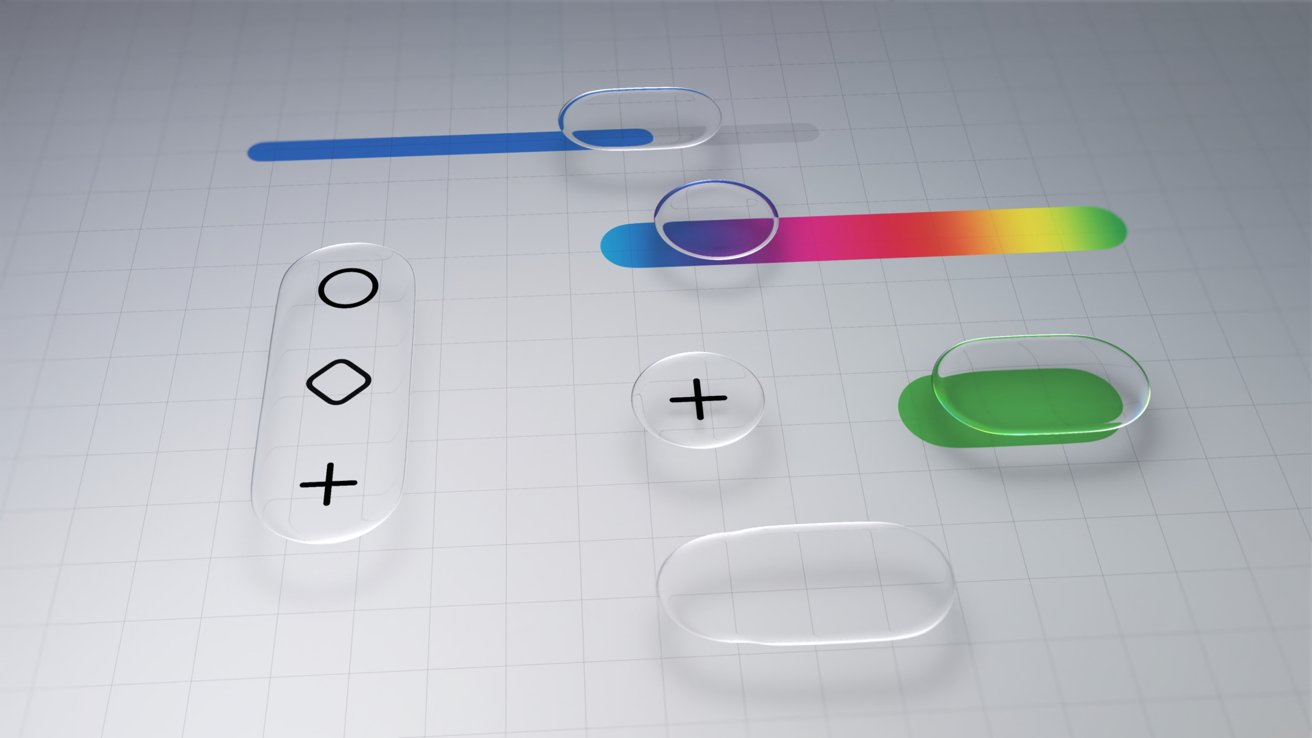

In most iOS 26 review discussions, the Liquid Glass design quickly became the headline act. The translucent, physics‑driven material coats the Home Screen, Lock Screen, popovers, and system controls with glossy edges, smoky overlays, and subtle warping effects as elements move and overlap. Powered by Apple Silicon, the material gives sliders a bubble‑like feel and makes interface elements appear to float above one another. Users noticed: transparent icons, tinted wallpapers, and the dramatic new Lock Screen clock made iOS feel visually fresh again. At the same time, Apple’s insistence that Liquid Glass is here to stay, with no option to disable it, fueled online controversy. That debate over aesthetics, however, overshadowed a more important question: whether iOS 26 meaningfully advanced Apple’s AI capabilities beyond surface‑level polish.

Customization Wins, But It Cannot Hide the AI Gap

Beyond Liquid Glass, iOS 26 delivered modest but welcome customization upgrades: more expressive Lock Screen layouts, transparent app icons, and tighter integration between Focus modes, wallpapers, and Home Screen setups. Many users embraced the ability to theme their devices, even though the process still involves too many menus and an awkward reliance on Photos for wallpaper management. Yet this focus on visual personalization highlights what is missing. Apple has hinted that Apple Intelligence could eventually drive iPhone customization, for example by automatically generating wallpapers or layouts that adapt to how people use their phones. One year later, that vision remains largely unrealized: iOS 26 looks new, but its underlying intelligence feels familiar. For a platform that increasingly competes on software, the absence of deeply integrated Apple Intelligence features is far more glaring than the lack of extra icon packs or higher Focus limits.

Apple Intelligence: A Promise of Transformative AI Still Waiting to Land

When Apple first framed Apple Intelligence as the next big leap for iOS AI capabilities, expectations were sky‑high. The company’s pitch implied smarter, context‑aware actions, proactive assistance, and system‑wide intelligence that would make the iPhone feel genuinely new. A year into iOS 26, that transformation has not arrived. Siri‑powered tools like Call Screening and Hold Assist offer practical improvements, but they feel incremental rather than revolutionary. There is no sense that Apple Intelligence has rewired the operating system in the way users were led to anticipate. Instead, the AI story of iOS 26 is one of ongoing delays and cautious rollouts, while competitors push aggressively into generative features. Apple’s slow, privacy‑focused approach may prove wise long term, but today it translates into a gap between the bold marketing narrative and the everyday experience of using an iPhone.

Missed Differentiation: iOS 26’s Real Shortcoming One Year Later

Looking back, the biggest missed opportunity in iOS 26 is not its design but its unrealized Apple Intelligence integration. Liquid Glass has already settled into the background as a new visual language, and most users either accept or enjoy it. What they still do not have is an iPhone that feels meaningfully smarter than it did before. Apple Intelligence has yet to deliver system‑wide suggestions that change habits, productivity tools that adapt to context, or personalization that goes beyond wallpapers and widgets. As a result, iOS 26 struggles to stand apart in a market where AI is the new battleground. Instead of becoming the release that redefined how the iPhone thinks, it became the one remembered for how the iPhone looks. Until Apple Intelligence moves from cautious promise to pervasive reality, user expectations for truly intelligent iOS features will remain unfulfilled.