From Simple Chatbots to Intelligent Voice Agents

OpenAI is expanding its API platform with three new real-time audio models aimed squarely at developers building voice-first products. The lineup, consisting of GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper, targets live voice agents, translation tools, and streaming transcription for applications that must react while people are still speaking. The models run through OpenAI’s Realtime API, which is designed for low-latency voice interactions, so exchanges feel more like human conversation than rigid command-and-response flows. Rather than focusing only on natural-sounding speech, OpenAI is emphasizing the models’ ability to carry out real tasks during live dialogue. This positions OpenAI’s real-time voice technology as an operational layer for apps and workflows: users talk naturally, systems reason in the background, and results arrive without breaking the conversational flow.

GPT-Realtime-2: GPT-5-Class Reasoning for Voice

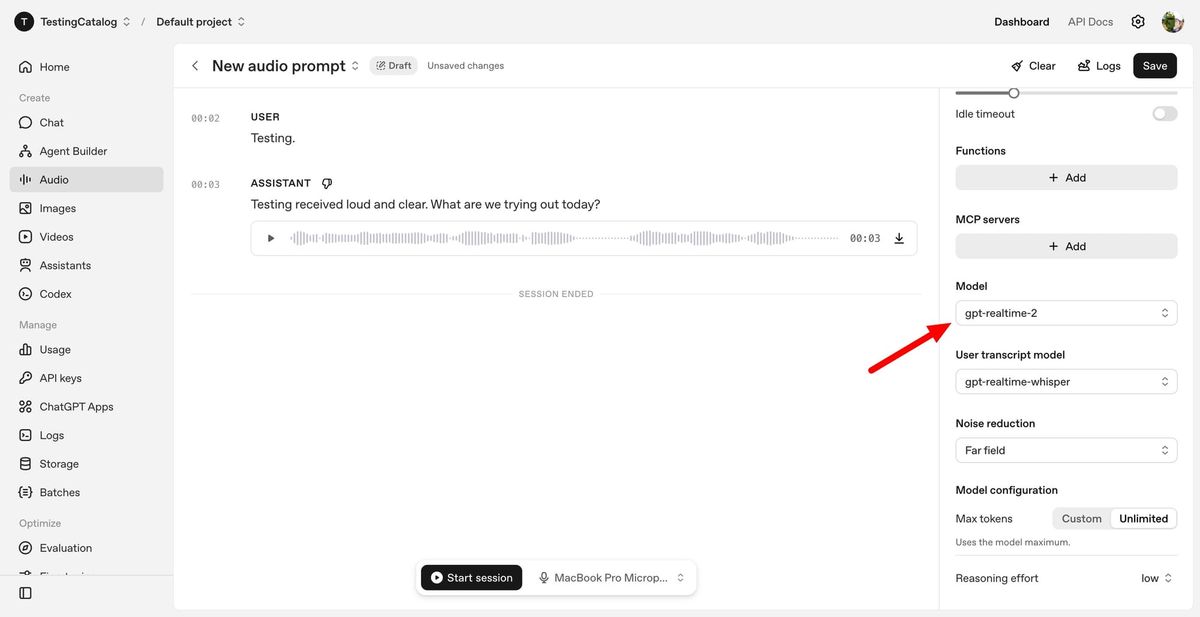

GPT-Realtime-2 is the flagship of OpenAI’s new voice models, bringing GPT-5-class reasoning to spoken interactions. Unlike earlier systems that were limited to simple question-and-answer exchanges, it is designed for dynamic, non-linear conversations in which users interrupt, change topics, or refine their requests. It supports parallel tool calls, and the agent can speak brief preambles such as “let me check that” while tasks execute in the background. The context window grows to 128K tokens, up from 32K in the previous generation, which helps the model stay coherent over longer sessions. Developers can set the reasoning effort anywhere from minimal to xhigh to trade latency against depth of analysis. According to OpenAI, GPT-Realtime-2 improves audio intelligence, instruction following, context handling, and recovery when something goes wrong, making it a stronger foundation for reliable voice agents.
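The preamble-plus-parallel-tools behavior can be illustrated at the application level. The Python sketch below is not the Realtime API’s interface; it is a hypothetical asyncio example in which `speak`, `check_order_status`, and `check_delivery_window` are invented stand-ins that only record events. It shows the pattern described above: two tool calls start concurrently, the agent speaks a short preamble while they run, and the final answer is composed once both finish.

```python
import asyncio

# Hypothetical stand-ins: in a real agent these would stream audio and call
# external services; here they only record events and sleep briefly.
async def speak(text: str, log: list[str]) -> None:
    log.append(f"speak: {text}")
    await asyncio.sleep(0)

async def check_order_status(order_id: str, log: list[str]) -> str:
    await asyncio.sleep(0.05)  # simulated network latency
    log.append(f"tool: order {order_id}")
    return "shipped"

async def check_delivery_window(zip_code: str, log: list[str]) -> str:
    await asyncio.sleep(0.05)  # simulated network latency
    log.append(f"tool: delivery {zip_code}")
    return "Tuesday"

async def handle_turn(order_id: str, zip_code: str, log: list[str]) -> str:
    # gather() schedules both tool calls as tasks right away,
    # so they run concurrently with the preamble below.
    tools = asyncio.gather(
        check_order_status(order_id, log),
        check_delivery_window(zip_code, log),
    )
    # Speak a short preamble while the tools are still running.
    await speak("Let me check that for you.", log)
    status, window = await tools
    answer = f"Your order has {status} and should arrive {window}."
    await speak(answer, log)
    return answer

if __name__ == "__main__":
    events: list[str] = []
    print(asyncio.run(handle_turn("A1", "94107", events)))
    print(events)
```

Running it leaves the preamble as the first log entry and the spoken answer as the last, with the two tool entries in between. Because the tools run concurrently, the turn waits roughly as long as the slowest tool rather than the sum of both, which is what lets the agent keep talking instead of going silent.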

GPT-Realtime-Translate: Live Multilingual Conversations at Scale

GPT-Realtime-Translate is aimed at developers building live translation experiences, where conversations must be translated as they happen rather than after the fact. The model accepts speech in more than 70 input languages and can translate into 13 output languages, supporting scenarios such as cross-border sales, customer service, education, events, and media localization. It is engineered to keep pace with natural speech, even when speakers shift topics, have regional accents, or use domain-specific terminology. Early evaluations by companies such as BolnaAI report lower word error rates and better task completion for languages including Hindi, Tamil, and Telugu, underscoring its potential for multilingual voice-to-voice communication. For enterprises, the model turns voice AI into an accessibility tool: callers, learners, or patients can speak in the language they are most comfortable with while still participating in global workflows and services.

GPT-Realtime-Whisper: Streaming Transcription for Live Workflows

GPT-Realtime-Whisper brings OpenAI’s streaming transcription to real-time scenarios where speech must be converted to text as people talk. The model delivers low-latency speech-to-text that can power live captions, meeting notes, classroom tools, broadcasts, customer support automation, healthcare documentation, recruiting, and sales calls. Instead of producing a transcript only after a conversation ends, it listens continuously and emits structured text alongside the ongoing dialogue. This enables new workflows such as instant search over active meetings, real-time compliance logging, and live coaching for service agents. Because it is available through the same Realtime API as the other two models, developers can chain transcription with higher-level reasoning or translation, building pipelines in which spoken input becomes text, is analyzed or acted upon, and is returned as voice or on-screen output within a single system.
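Chaining transcription with downstream processing can be sketched as a streaming pipeline of async generators. This is a minimal, hypothetical Python illustration, not OpenAI’s API: `transcribe` stands in for a streaming transcription source by replaying a fixed list of segments, and `analyze` applies a naive keyword check as a placeholder for real-time compliance logging. Each segment is captioned and checked as soon as it arrives, rather than after the whole conversation ends.

```python
import asyncio
from typing import AsyncIterator

# Hypothetical stand-in for a streaming transcription source: yields text
# segments one at a time instead of returning one transcript at the end.
async def transcribe(segments: list[str]) -> AsyncIterator[str]:
    for seg in segments:
        await asyncio.sleep(0)  # simulate audio arriving over time
        yield seg

# Analysis stage: tag each segment as it arrives. The naive keyword match
# is a placeholder for real compliance or coaching logic.
async def analyze(
    stream: AsyncIterator[str], keywords: set[str]
) -> AsyncIterator[tuple[str, bool]]:
    async for text in stream:
        words = {w.strip(".,!?").lower() for w in text.split()}
        yield text, bool(words & keywords)

async def run_pipeline(
    segments: list[str], keywords: set[str]
) -> tuple[list[str], list[str]]:
    captions: list[str] = []
    flagged: list[str] = []
    async for text, hit in analyze(transcribe(segments), keywords):
        captions.append(text)     # e.g. push to a live caption display
        if hit:
            flagged.append(text)  # e.g. write to a compliance log
    return captions, flagged

if __name__ == "__main__":
    segs = ["Hello, thanks for calling.", "I'd like a refund please.", "Goodbye."]
    print(asyncio.run(run_pipeline(segs, {"refund"})))
```

With the three sample segments and the keyword set `{"refund"}`, all three segments become captions and only the second is flagged. In a real system the `transcribe` stage would be replaced by the model’s transcript events, and the output stage by a caption display, a translation step, or speech synthesis.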

Voice as a Full-Fledged Interface for Business

Together, OpenAI’s new models illustrate a broader shift: voice is becoming a primary interface for applications and operations. OpenAI describes several emerging patterns: voice-to-action, where users issue natural spoken commands and the AI executes tasks behind the scenes; systems-to-voice, where software proactively speaks to users based on live data; and voice-to-voice, where multilingual communication happens without manual intervention. Companies like Zillow see GPT-Realtime-2 as a way to make complex voice interactions more intelligent and reliable, while telecom operators are exploring multilingual customer support that adapts to each caller’s preferred language. With low-latency architecture, larger context windows, and GPT-5-class reasoning, OpenAI’s real-time voice models are moving beyond novelty and becoming foundational tools for developers who need live translation, robust voice agents, and streaming transcription built into core business workflows.