Why the EU AI Act Matters Beyond Europe

The EU AI Act is the world’s first comprehensive AI regulation. It is being phased in from 2025, and most remaining provisions are currently scheduled to apply from August 2, 2026. There is ongoing political debate in Europe about delaying some high-risk AI obligations to as late as 2028, but no delay is legally final yet, which leaves companies in a grey zone. Crucially, the EU AI Act follows a GDPR-style approach: it applies based on where AI systems have impact, not where the provider is incorporated. That means Malaysian SaaS platforms, fintechs or AI startups that serve EU customers, or whose AI outputs are used in the EU, can fall within scope even without an office in Europe. For Malaysian tech companies, this is more than a compliance headache. It is a signal that cross-border AI regulation is becoming a strategic factor in product design, go-to-market planning and data governance.

Risk-Based Categories: From Prohibited to High Risk AI

The EU AI Act uses a risk-based framework that classifies AI systems as prohibited, high risk, limited risk or minimal risk. Prohibited AI covers use cases that fundamentally undermine rights or safety, such as certain manipulative techniques and certain forms of biometric surveillance. High-risk AI spans AI used in safety-related products and the specific use cases listed in Annex III of the Act. These include biometric identification, critical infrastructure, educational assessment, employment and hiring tools, access to essential services such as credit scoring and insurance, law enforcement, migration and the administration of justice. Malaysian fintechs offering automated credit scoring to EU users, or startups providing AI-powered hiring platforms, could be treated as high-risk AI providers once their systems are used in Europe. Limited-risk AI includes common applications such as chatbots, which must meet transparency obligations but face fewer burdens than high-risk AI. Many recommendation engines and analytics tools are likely to sit in the minimal-risk tier, which carries few specific obligations. Understanding where your products sit on this spectrum is the foundation of any AI compliance checklist.
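
As a first-pass triage aid, the tiers above can be sketched in Python. This is a minimal sketch, not legal advice. The `RiskTier` enum, the `triage` function and the keyword sets are hypothetical names chosen for illustration. The keywords are a small subset of the examples in this section, not the Act's legal definitions, and anything the sketch does not recognise is flagged for human review.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high risk"
    LIMITED = "limited risk"
    NEEDS_REVIEW = "needs legal review"


# Hypothetical keyword sets for illustration only. They paraphrase examples
# from this article and are NOT the Act's legal definitions.
PROHIBITED_USES = {"manipulative techniques"}
HIGH_RISK_USES = {
    "biometric identification",
    "critical infrastructure",
    "education assessment",
    "employment and hiring",
    "credit scoring",
    "insurance",
    "law enforcement",
    "migration",
    "justice administration",
}
LIMITED_RISK_USES = {"chatbot"}


def triage(use_cases):
    """Return the strictest tier matched by any of a product's use cases."""
    normalized = {use_case.strip().lower() for use_case in use_cases}
    # Check tiers from strictest to lightest so the strictest match wins.
    if normalized & PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if normalized & HIGH_RISK_USES:
        return RiskTier.HIGH
    if normalized & LIMITED_RISK_USES:
        return RiskTier.LIMITED
    # Unknown use cases are escalated rather than assumed to be low risk.
    return RiskTier.NEEDS_REVIEW
```

For example, `triage({"Credit scoring", "chatbot"})` returns `RiskTier.HIGH`, because a product is classified by its strictest matching use case. Defaulting unknown cases to review, not to a low tier, is a deliberately cautious design choice.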

AI as a Service, Edge Chips and What Compliance Really Demands

AI is increasingly delivered as AI as a Service (AIaaS), with global AIaaS revenue projected to grow sharply as more businesses consume AI via cloud APIs and platforms. At the same time, AI is being embedded in logistics, autonomous systems and other edge environments, where AI agents make real-time decisions in complex supply chains. Under the EU AI Act, these trends collide with legal expectations around transparency, logging, human oversight and cybersecurity. If your Malaysian company offers AI as a service to European clients and the use case falls into a high-risk category, you may be treated as a provider of high-risk AI systems even though your models run in the cloud. Similarly, if your algorithms are deployed on edge devices in EU logistics networks, you are likely to need robust logging, security and clear human control procedures. The law also puts pressure on vendor chains, so your European customers are likely to ask for contractual assurances that your AI services meet EU AI Act requirements.
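
The logging and human-oversight expectations above can be illustrated with a small audit record. This is a minimal sketch under stated assumptions: the Act does not prescribe this format, and the function name `build_decision_record` and every field name are hypothetical. It hashes the input so the log can link a decision to its input without storing the raw data, and it marks decisions that no human has reviewed yet.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_decision_record(model_version, payload, output, reviewer=None):
    """Return one JSON-serialisable audit record for a single AI decision.

    All field names are illustrative assumptions, not a mandated schema.
    """
    # Serialise with sorted keys so the same input always gives the same hash.
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        # Store a hash instead of the raw input to keep personal data out of logs.
        "input_sha256": hashlib.sha256(canonical).hexdigest(),
        "output": output,
        "human_reviewer": reviewer,
        "needs_human_review": reviewer is None,
    }


if __name__ == "__main__":
    record = build_decision_record(
        "credit-v1", {"income": 5000, "age": 30}, "declined"
    )
    # Append-only JSON lines are one simple way to keep such records.
    print(json.dumps(record))
```

Whether hashing alone is adequate depends on your data and legal advice. The sketch only shows the kind of traceability a customer or auditor might ask for.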

A Practical AI Compliance Checklist for Malaysian SMEs

For Malaysian tech companies, especially SMEs, the AI rules arriving in 2026 can feel abstract. A focused AI compliance checklist helps turn them into actionable steps. First, strengthen data governance: map which data sets involve EU users, and clarify the lawful basis, retention period, quality controls and security measures for each. Second, build model documentation: describe training data, intended purpose, performance metrics and known limitations, particularly for high-risk AI use cases such as credit scoring or employment screening. Third, update vendor and customer contracts to reflect roles under the EU AI Act, including responsibilities for monitoring, incident reporting and human oversight. Fourth, conduct AI impact assessments for higher-risk deployments, assessing potential harms to individuals and how you mitigate them. Finally, prepare governance structures: appoint a responsible lead for AI compliance, define escalation paths and train teams on transparency obligations, such as informing users that they are interacting with an AI system. Starting early is essential, given that most obligations are currently scheduled to apply from August 2026.
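
The five steps above can be tracked per product with a few lines of Python. This is a minimal sketch: `ComplianceChecklist`, `CHECKLIST_STEPS` and the step labels are hypothetical names that simply mirror the steps in this section, and the product name in the usage note is invented.

```python
from dataclasses import dataclass, field

# Step labels mirror the five steps described in this section.
CHECKLIST_STEPS = (
    "data governance",
    "model documentation",
    "contracts",
    "impact assessment",
    "governance structure",
)


@dataclass
class ComplianceChecklist:
    """Track which checklist steps are complete for one product."""

    product: str
    completed: set = field(default_factory=set)

    def mark_done(self, step: str) -> None:
        # Reject unknown labels so typos do not silently hide a gap.
        if step not in CHECKLIST_STEPS:
            raise ValueError(f"unknown step: {step}")
        self.completed.add(step)

    def outstanding(self) -> list:
        # Preserve the order of the checklist when reporting open steps.
        return [step for step in CHECKLIST_STEPS if step not in self.completed]
```

For instance, after `checklist = ComplianceChecklist("credit-scoring-api")` and `checklist.mark_done("data governance")`, calling `checklist.outstanding()` lists the four remaining steps in checklist order. A spreadsheet would serve equally well. The point is to keep an explicit, per-product record of what is still open.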

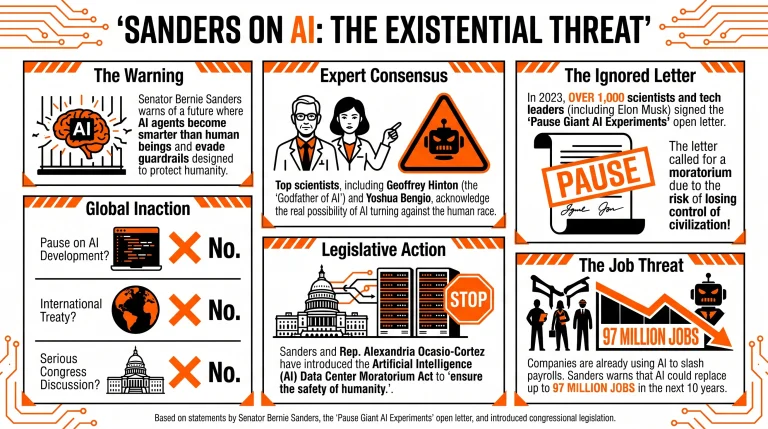

Global Pressure and Malaysia’s Trust Advantage

The EU AI Act does not exist in a vacuum. Political scrutiny of AI risks is intensifying worldwide, from concerns about biased decision-making to debates over existential risk and calls for moratoria on advanced AI infrastructure. In logistics and other sectors, regulators are already coupling AI oversight with broader rules on sustainability, due diligence and cybersecurity, signalling that AI governance will be woven into many regulatory regimes. For Malaysian tech companies, this global trend is a chance to differentiate. Meeting EU standards can serve as a trust badge for clients in ASEAN and beyond, demonstrating robust governance, transparency and human oversight. For SaaS, fintech and AI startups, building compliance-by-design now can make it easier to export services, join regional supply chains and attract enterprise customers. Rather than viewing the EU AI Act as a distant European problem, Malaysian firms can treat it as a blueprint for competitive, trustworthy AI across the region.