From Code to Conversation: The Rise of Natural Language Programming

For decades, working with autonomous systems meant wrestling with SDKs, specialized tooling, and domain-specific languages. That requirement is rapidly breaking down as AI agents that understand plain English give users a new way to specify what software should do. Instead of manually writing functions and integration logic, people can describe goals in everyday language and let an AI agent generate, test, and deploy the code. This is more than a convenience feature: it is a form of natural language programming that treats English as a primary interface for controlling autonomous agents. Developers still matter, but their role shifts from low-level implementation to high-level orchestration and review. Meanwhile, non-technical users can take part directly in no-code AI development, expressing workflows, behaviors, and constraints without translating their ideas into formal programming syntax.

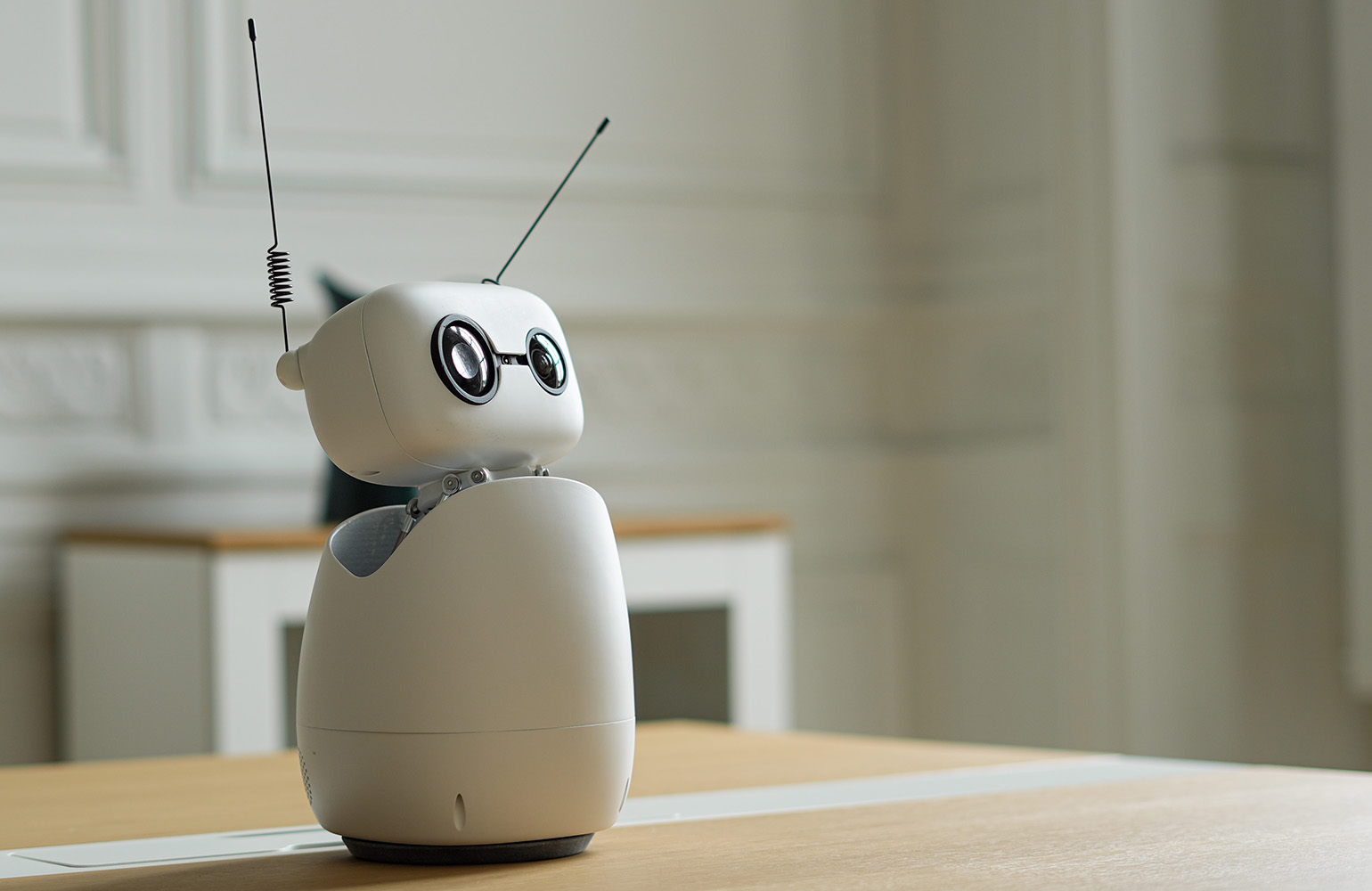

Hugging Face’s Reachy Mini: Building Robot Apps by Talking, Not Coding

Hugging Face’s new agentic toolkit for the Reachy Mini desktop robot shows how far this shift has progressed. Anyone can now build a working app for the open-source robot in under an hour without writing a single line of code: users simply describe the behavior they want in plain English, and an AI agent writes, tests, and ships the code to the robot. The company positions this as collapsing three historical barriers in robotics—expertise, expensive hardware, and lengthy integration work—by pairing a low-cost, open-source robot with a one-click web flow and autonomous agent control. On the Hugging Face Hub, more than 200 apps for Reachy Mini can be searched, forked, and installed with one click, or adapted by asking the agent to modify them. This turns robotics into a practical arena for no-code AI development, accessible to hobbyists, educators, and professionals alike.

A 78-Year-Old Facilitator Shows What No-Code AI Development Enables

The most striking proof of concept comes from Joel Cohen, a 78-year-old retired marketing executive with no robotics or developer background. After taking weeks to assemble his Reachy Mini Lite, he built a sophisticated app by describing what he needed in plain English. An AI agent handled all the implementation details, eliminating the need for an SDK or traditional coding skills. The result is a voice-controlled co-facilitator for the CEO peer groups he runs on Zoom. When he says, “Hey, Reachy,” the robot wakes up, listens, and responds with a distinct personality he calls his “VP of future thinking.” The system supports four facilitation modes, draws from a bank of more than 60 questions, and greets 29 participants by name; it can also hot-seat a member, challenge superficial answers, and summarize key themes. Cohen’s experience illustrates how plain-English AI agents can convert domain expertise, rather than programming ability, into working autonomous tools.

Meta’s Hatch and the Next Wave of Natural Language Agents

Beyond robotics, natural language programming is also reshaping how people create and research digital content. Meta’s Hatch agent, for example, demonstrates how plain English prompts can drive complex autonomous workflows across image creation and research tasks. Rather than issuing a series of low-level commands, users can assign open-ended objectives—such as exploring a visual concept, generating alternative designs, or gathering and synthesizing information on a topic—and let the agent decompose the request into steps, execute tools, and iterate on results. This represents a different flavor of autonomous agent control, focused on knowledge work and creativity instead of physical hardware. Yet the underlying pattern is the same: natural language becomes the control surface, and the agent becomes the executor. As tools like Hatch mature, they point toward productivity environments where “describe the job once, refine in conversation” replaces many manual, multi-application workflows.
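The decompose, execute, and iterate pattern described above can be illustrated with a toy sketch. This is not how Hatch is implemented; the tool names (`search`, `summarize`), the `Step` type, and the stub `plan` function are all hypothetical, and a real agent would presumably ask a language model to produce the plan rather than hard-coding it.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical tools: stand-ins for real search or image backends.
def search(topic: str) -> str:
    return f"notes on {topic}"


def summarize(text: str) -> str:
    return f"summary of {text}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search": search,
    "summarize": summarize,
}


@dataclass
class Step:
    tool: str
    argument: str  # the literal "$prev" means "use the previous step's output"


def plan(goal: str) -> List[Step]:
    """Stub planner (assumption): a real agent would generate this from the goal."""
    return [Step("search", goal), Step("summarize", "$prev")]


def run(goal: str) -> List[str]:
    """Execute each planned step in order, feeding outputs forward."""
    outputs: List[str] = []
    for step in plan(goal):
        use_prev = step.argument == "$prev" and outputs
        arg = outputs[-1] if use_prev else step.argument
        outputs.append(TOOLS[step.tool](arg))
    return outputs


if __name__ == "__main__":
    for line in run("desk robot designs"):
        print(line)
```

The user supplies only the goal string; the plan and the tool calls stay behind the natural language interface, which is the sense in which language acts as the control surface here.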

How Plain English AI Agents Are Changing the Developer’s Role

As plain-English interfaces to AI agents spread, they are reshaping both who can build agents and how professionals work with them. For non-programmers, the removal of technical barriers means they can directly design, deploy, and iterate on autonomous systems: a teacher can build a language tutor robot; a facilitator can craft a custom co-host; an office worker can assemble a desk robot that discourages distractions. For developers, this is less about replacement and more about elevation. They increasingly act as reviewers, system designers, and integrators, steering agents, defining guardrails, and ensuring reliability rather than hand-writing every line of code. Over time, we can expect agent platforms, app stores, and simulators (like Hugging Face’s browser-based environment for Reachy Mini) to become standard parts of the software lifecycle. Natural language programming is turning everyday conversation into a first-class interface for software creation and control.