From One-Size-Fits-All Chatbots to Layered Doctor AI Training

Hospitals are discovering that clinical AI does not succeed when a generic chatbot is simply dropped onto the ward. Instead, many are turning to layered doctor AI training that mirrors how clinicians learn medicine: step by step. The first layer focuses on large language models (LLMs) used as advanced text tools for drafting notes, visit summaries, and patient instructions. Here, doctors learn to treat AI like an assistant scribe, not an automated decision-maker, and to double‑check every assessment and plan before it enters the chart. The next layers introduce retrieval augmented generation, then medical AI agents that act inside specific workflows, and eventually agentic systems that coordinate multiple tools over time. By staging capabilities this way, hospitals help clinicians build mental models of what each layer can and cannot do, rather than asking them to trust a mysterious black box in one leap.

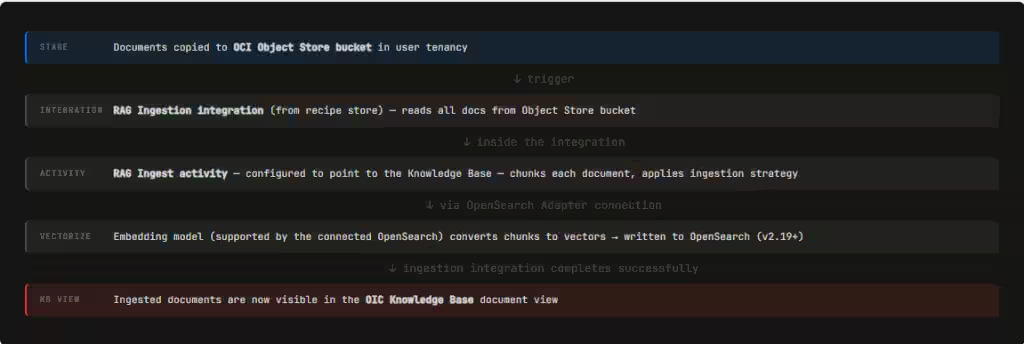

RAG in Healthcare: Grounding AI in Guidelines, Not the Open Internet

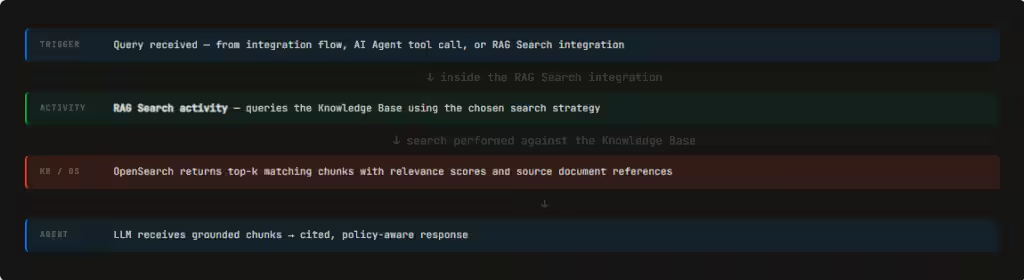

Retrieval augmented generation, or RAG, is becoming the quiet backbone of safe clinical AI. In simple terms, RAG connects language models to a vetted knowledge base so that answers are grounded in real documents instead of generic web data. In healthcare, that means linking AI agents to clinical guidelines, hospital protocols, and relevant segments of the medical record. Rather than stuffing entire documents into a prompt, hospitals ingest and index them once, then let agents retrieve only the most relevant passages at runtime. This mirrors managed RAG approaches where enterprise content is vector‑indexed so agents can pull precise, cited snippets rather than hallucinate. For clinicians, RAG in healthcare shifts the task from recalling every recommendation to validating decisions against current, locally approved sources, with the evidence surfaced inline and traceable back to its origin.
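To make the "index once, retrieve at runtime" idea concrete, here is a minimal Python sketch. It illustrates the pattern under stated assumptions and does not describe how any particular product works. The documents and section names are hypothetical placeholders, and simple word-count vectors with cosine similarity stand in for the learned embeddings and vector database a real deployment would use. Each result keeps its source and section so the snippet stays traceable.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Passage:
    source: str      # document title, kept for the citation
    section: str     # section label within that document
    text: str
    vector: Counter  # word counts standing in for an embedding (assumption)


def tokenize(text: str) -> list:
    """Lowercase and split into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents: dict) -> list:
    """Ingest once: split each document into section-level passages and vectorize them."""
    return [
        Passage(source, section, text, Counter(tokenize(text)))
        for source, sections in documents.items()
        for section, text in sections.items()
    ]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(index: list, query: str, k: int = 2) -> list:
    """At runtime: score every passage and return the top k matches with their citations."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, p.vector), p) for p in index]
    scored = [(s, p) for s, p in scored if s > 0]  # drop passages sharing no words with the query
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [(round(s, 3), p.source, p.section, p.text) for s, p in scored[:k]]


# Hypothetical knowledge base: placeholder text, not real clinical guidance.
DOCUMENTS = {
    "Retinopathy protocol (hypothetical)": {
        "Staging": "Staging criteria for diabetic retinopathy are listed here.",
        "Follow-up": "Follow-up intervals for diabetic retinopathy by stage are listed here.",
    },
    "Discharge policy (hypothetical)": {
        "Summaries": "Discharge summary template sections are described here.",
    },
}

if __name__ == "__main__":
    index = build_index(DOCUMENTS)
    for score, source, section, text in retrieve(index, "follow-up interval for diabetic retinopathy"):
        print(f"[{score}] {source}, {section}: {text}")
```

Swapping in a real embedding model would change only how the vectors are built; the overall shape (chunk, index once, score at query time, return cited passages) stays the same.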

What RAG-Powered Medical AI Agents Actually Do in Daily Practice

When RAG is paired with medical AI agents, it starts to reshape day‑to‑day work. A typical example is an ophthalmologist reviewing a patient with diabetic retinopathy: an AI scribe first drafts the visit note, then a RAG agent pulls relevant staging criteria and follow‑up recommendations from guidelines for the doctor to confirm. Similar agents can summarize a multi‑year patient history into a timeline, highlight prior imaging or lab trends, and surface passages from protocols that match the current presentation. Others assist with drafting discharge summaries or referral letters, citing specific findings from the record. In more advanced setups, agentic AI chains tasks—pulling prior notes, querying evidence databases, and preparing documentation in one flow—while still keeping the clinician in the loop. The promise is not to replace judgment, but to reduce the time spent hunting through charts and PDFs so doctors can focus on reasoning and patient communication.
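The pattern of chaining tasks while keeping the clinician in the loop can be sketched as a loop that pauses for sign-off after every step. In this hypothetical Python example, the three step functions are stubs named after the tasks described above; a real agent would call the record system and evidence databases instead. The `approve` callback stands in for the clinician: returning False halts the chain before the next step runs.

```python
from typing import Callable, Dict, List, Tuple

Context = Dict[str, object]
Step = Tuple[str, Callable[[Context], Context]]


# Hypothetical stubs: each returns a new context with its output added.
def pull_prior_notes(ctx: Context) -> Context:
    return {**ctx, "prior_notes": ["2021 visit note", "2023 visit note"]}


def query_evidence(ctx: Context) -> Context:
    return {**ctx, "evidence": ["Retinopathy protocol (hypothetical), Follow-up"]}


def draft_documentation(ctx: Context) -> Context:
    return {
        **ctx,
        "draft": f"Draft citing {len(ctx['evidence'])} source(s) "
                 f"and {len(ctx['prior_notes'])} prior note(s).",
    }


def run_chain(steps: List[Step], ctx: Context,
              approve: Callable[[str, Context], bool]) -> Tuple[Context, List[str], str]:
    """Run steps in order; the clinician must approve each result before the next step starts."""
    completed = []
    for name, step in steps:
        ctx = step(ctx)
        completed.append(name)
        if not approve(name, ctx):
            return ctx, completed, f"stopped by clinician after {name}"
    return ctx, completed, "completed"


STEPS: List[Step] = [
    ("pull_prior_notes", pull_prior_notes),
    ("query_evidence", query_evidence),
    ("draft_documentation", draft_documentation),
]

if __name__ == "__main__":
    # Usage: a reviewer who rejects the evidence step stops the chain before drafting.
    ctx, done, status = run_chain(STEPS, {"patient_id": "demo"},
                                  approve=lambda name, c: name != "query_evidence")
    print(done, status, "draft" in ctx)
```

Gating every step, rather than only the final draft, is a design choice: it costs more clicks but lets a clinician stop a chain as soon as an intermediate result looks wrong.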

Training Doctors for Skeptical, Safe Use of Clinical AI Workflows

The power of RAG and agentic systems comes with real risks: hallucinated recommendations, outdated guidance, and overreliance on seemingly fluent output. Layered doctor AI training directly targets these pitfalls. In the LLM stage, clinicians are taught to assume every draft may contain subtle clinical errors, building habits of verification and precise editing. As they move into RAG tools, they learn to ask: Which source did this answer come from? How current is it? Does it reflect our local protocol? Training emphasizes comparing AI‑surfaced passages with the full underlying document and recognizing when the system may have retrieved the wrong chunk. With agents, doctors practice interrupting and redirecting workflows, rather than letting automation quietly proceed. The goal is a culture of healthy skepticism—treating AI as a powerful but fallible colleague whose work must be checked, not an oracle.
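The questions clinicians are trained to ask can also be mirrored by automatic checks that flag a snippet before anyone relies on it. The Python sketch below is illustrative only: the `RetrievedSnippet` fields, the 365-day review window, and the "locally approved" flag are assumptions about metadata a hospital knowledge base might store. The last check tests whether the snippet appears verbatim in the full cited document, a crude way to catch a passage that does not actually come from its claimed source.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

MAX_AGE_DAYS = 365  # hypothetical review window; set by local governance


@dataclass
class RetrievedSnippet:
    text: str
    source: Optional[str]          # which document the answer came from
    last_reviewed: Optional[date]  # when that document was last signed off
    locally_approved: bool         # does it reflect our local protocol?


def provenance_warnings(snippet: RetrievedSnippet, full_document: Optional[str],
                        today: date) -> List[str]:
    """Return human-readable warnings; an empty list means no check failed."""
    warnings = []
    if not snippet.source:
        warnings.append("no source cited")
    if snippet.last_reviewed is None or (today - snippet.last_reviewed).days > MAX_AGE_DAYS:
        warnings.append("source may be outdated")
    if not snippet.locally_approved:
        warnings.append("not a locally approved protocol")
    if full_document is None or snippet.text not in full_document:
        warnings.append("snippet not found verbatim in the cited document")
    return warnings


if __name__ == "__main__":
    doc = "Section 2. Follow-up intervals by stage are listed here."
    snippet = RetrievedSnippet("Follow-up intervals by stage are listed here.",
                               "Retinopathy protocol (hypothetical)",
                               date(2020, 1, 15), locally_approved=True)
    print(provenance_warnings(snippet, doc, today=date(2024, 6, 1)))
```

An empty warning list does not mean the answer is clinically right; it only means the snippet passed these provenance checks and still needs the clinician's review.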

Governance, Culture Shift—and What Patients Should Expect Next

For hospitals, adopting retrieval augmented generation and medical AI agents is as much an organizational project as a technical one. Governance teams must decide which guidelines and policies populate the knowledge base, how often they are updated, and who signs off on changes. Continuous evaluation loops—where clinicians flag bad outputs and data teams refine indexing, search modes, and thresholds—are essential to keep systems aligned with real practice. Culturally, leadership needs to normalize AI as part of care while making clear that accountability still rests with human clinicians. For patients and non‑medical readers, AI‑assisted healthcare will likely show up first as faster, clearer documentation, more consistent adherence to guidelines, and doctors who spend less time staring at screens. Behind those improvements will be a new expectation: that every clinician knows not just medicine, but how to work safely and critically with AI embedded in their daily tools.
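One concrete piece of such an evaluation loop is tuning the retrieval-score threshold below which a system withholds an answer. The Python sketch below assumes, hypothetically, that each logged output keeps its top retrieval score and whether a clinician flagged it as bad. The feedback values are made up purely to exercise the function and are not results from any deployment. For each candidate threshold, it counts how many flagged outputs would still be shown and how many unflagged ones would be withheld, which is the trade-off a governance team would weigh.

```python
from typing import Dict, List, Tuple

Feedback = List[Tuple[float, bool]]  # (top retrieval score, flagged as bad by a clinician)


def threshold_report(feedback: Feedback, thresholds: List[float]) -> Dict[float, Dict[str, int]]:
    """For each threshold, summarize what would be shown versus withheld."""
    report = {}
    for t in thresholds:
        shown = [flagged for score, flagged in feedback if score >= t]
        report[t] = {
            "shown": len(shown),
            "flagged_but_shown": sum(shown),  # bad outputs that still reach clinicians
            "unflagged_withheld": sum(1 for score, flagged in feedback
                                      if score < t and not flagged),  # good outputs lost
        }
    return report


if __name__ == "__main__":
    # Made-up feedback log, for illustration only.
    toy_feedback = [(0.91, False), (0.85, False), (0.62, True), (0.58, False), (0.40, True)]
    for t, row in threshold_report(toy_feedback, [0.5, 0.6, 0.7]).items():
        print(t, row)
```

In this toy log, only the 0.7 threshold keeps every flagged output hidden, at the cost of withholding the unflagged 0.58 answer; a real evaluation would need far more feedback than five rows.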