From Stylish Gadget to Open AR Platform

Ray-Ban Display glasses began as a sleek way to capture photos, access Meta AI, and view messages in the lens. Until now, those experiences were tightly controlled and limited to apps from Meta and a small group of partners. Meta is now opening the display to third-party developers, effectively turning the glasses from a closed gadget into an AR developer platform. Developers can submit their own smart glasses apps and games that use the built-in display for real-time visual feedback. The shift mirrors what smartphones went through when app stores unlocked their full potential. For Ray-Ban Display owners, it means hardware they already have could soon become far more capable. For Meta, it positions the glasses as a serious contender in the emerging smart eyewear market, where software ecosystems will likely decide the long-term winners.

Two Paths for Building Smart Glasses Apps

Meta’s new tools give developers two main routes to build for Ray-Ban Display glasses. The first is the Meta Wearables Device Access Toolkit, a native SDK for iOS and Android that lets existing mobile apps extend their interfaces to the glasses. Using Swift or Kotlin, developers can render text, images, lists, buttons, and even video playback directly in the lens. The second route is web apps built with standard HTML, CSS, and JavaScript, suited to lightweight, standalone experiences such as cooking guides, transit tools, or mini dashboards. These web apps can be tested in a browser and then deployed straight to the glasses. Both paths support the Neural Band controller for gesture-based input, so subtle hand movements can select items, scroll, or confirm actions. Together, they lower the barrier to entry for third-party developers while keeping workflows familiar to mobile and web engineers.
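To make the web-app path more concrete, here is a minimal sketch of the state logic a standalone cooking guide might keep. It assumes nothing about Meta’s actual web APIs: the gesture names (`next`, `previous`, `confirm`) and the functions `createGuide`, `reduce`, and `currentLine` are hypothetical, and wiring them to real Neural Band input and to the lens display is deliberately left out. Keeping the logic pure like this is one way to test it in an ordinary browser before deploying.

```typescript
// Hypothetical gesture events; the real Neural Band input API may differ.
type Gesture = "next" | "previous" | "confirm";

interface GuideState {
  readonly steps: readonly string[];
  readonly index: number; // step currently shown in the lens
  readonly done: readonly boolean[]; // which steps the user has confirmed
}

function createGuide(steps: readonly string[]): GuideState {
  return { steps, index: 0, done: steps.map(() => false) };
}

// Pure reducer: returns a new state instead of mutating the old one.
function reduce(state: GuideState, gesture: Gesture): GuideState {
  if (state.steps.length === 0) return state; // nothing to navigate
  const last = state.steps.length - 1;
  switch (gesture) {
    case "next":
      return { ...state, index: Math.min(state.index + 1, last) };
    case "previous":
      return { ...state, index: Math.max(state.index - 1, 0) };
    case "confirm": {
      // Mark the current step complete, then auto-advance (clamped at the end).
      const done = state.done.map((d, i) => (i === state.index ? true : d));
      return { ...state, done, index: Math.min(state.index + 1, last) };
    }
    default:
      return state;
  }
}

// Single line of text a (hypothetical) display layer could render in the lens.
function currentLine(state: GuideState): string {
  const n = state.steps.length;
  if (n === 0) return "No steps";
  const mark = state.done[state.index] ? " ✓" : "";
  return `Step ${state.index + 1}/${n}: ${state.steps[state.index]}${mark}`;
}

// Usage: confirm the first step and show what the lens would display next.
let s = createGuide(["Preheat oven to 200 °C", "Chop onions", "Roast for 25 min"]);
s = reduce(s, "confirm");
console.log(currentLine(s)); // prints "Step 2/3: Chop onions"
```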

New Features: Virtual Handwriting, Live Captions, and Smarter Navigation

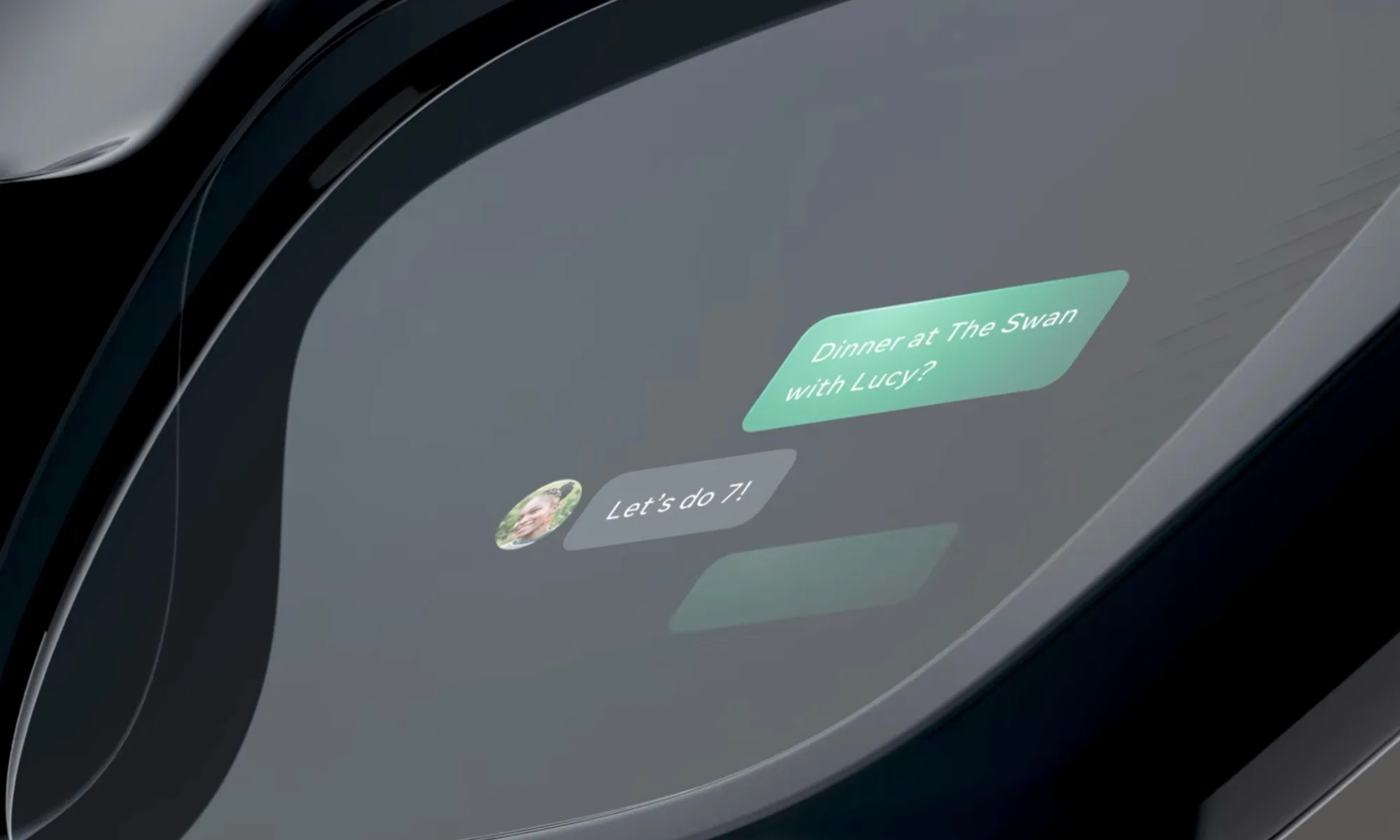

Alongside opening the platform, Meta is rolling out features that make Ray-Ban Display glasses more practical in daily life. A headline addition is virtual handwriting, powered by the Neural Band: users can ‘write’ messages by tracing letters with a finger, and the feature now works across WhatsApp, Messenger, Instagram, and native messaging on Android and iOS. Live captions are arriving for voice messages in WhatsApp, Facebook Messenger, and Instagram DMs, making audio messages easy to follow when you can’t play them aloud. Navigation has expanded too: walking directions, displayed directly in the lens, are now available across the US and in key international cities such as London, Paris, and Rome. Finally, a new display recording mode combines the on-screen overlay, the real-world view, and ambient audio into a single video, giving developers and users a way to capture and share mixed-reality experiences as the wearer saw them.

Why Openness Could Make Smart Glasses Genuinely Useful

Opening Ray-Ban Display glasses to third-party developers is less about novelty apps and more about discovering genuinely useful AR workflows. With display-enabled apps, users could check live sports scores, real-time stock tickers, shopping lists, or transit updates without pulling out a phone. Micro-utilities might surface meeting agendas, translation cues, or step-by-step repair instructions in the corner of your vision. Because the Neural Band picks up small hand gestures, these experiences can be controlled without touching a phone or the glasses, which is crucial when you’re cooking, commuting, or working on-site. As more developers experiment with overlays, real-time data, and sensor-aware interfaces, smart glasses apps could move beyond “cute demos” to become tools people rely on daily. This ecosystem approach positions Ray-Ban Display as a competitive platform in the broader smart eyewear market, where hardware design matters, but the richness of the software and services layered on top may matter even more.