A Split Stack for Real-Time Voice AI

OpenAI has introduced three specialized real-time voice models—GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper—through its Realtime API, targeting developers building live voice agents, translation tools, and streaming transcription products. Instead of relying on a single, monolithic voice model, OpenAI splits reasoning, translation, and transcription into separate capabilities. This lets teams tune each workload differently, balancing depth of intelligence against latency and cost depending on the task. The stack is framed as infrastructure for live assistants, call flows, and tool-using voice agents that must keep speaking while handling interruptions and background actions. As voice becomes an operational interface for apps and workflows rather than just a user-friendly front end, this modular design aims to reduce orchestration overhead. Developers can compose these models into voice-to-action, systems-to-voice, and voice-to-voice solutions that move beyond simple chatbot conversations into real business processes and complex reasoning tasks.

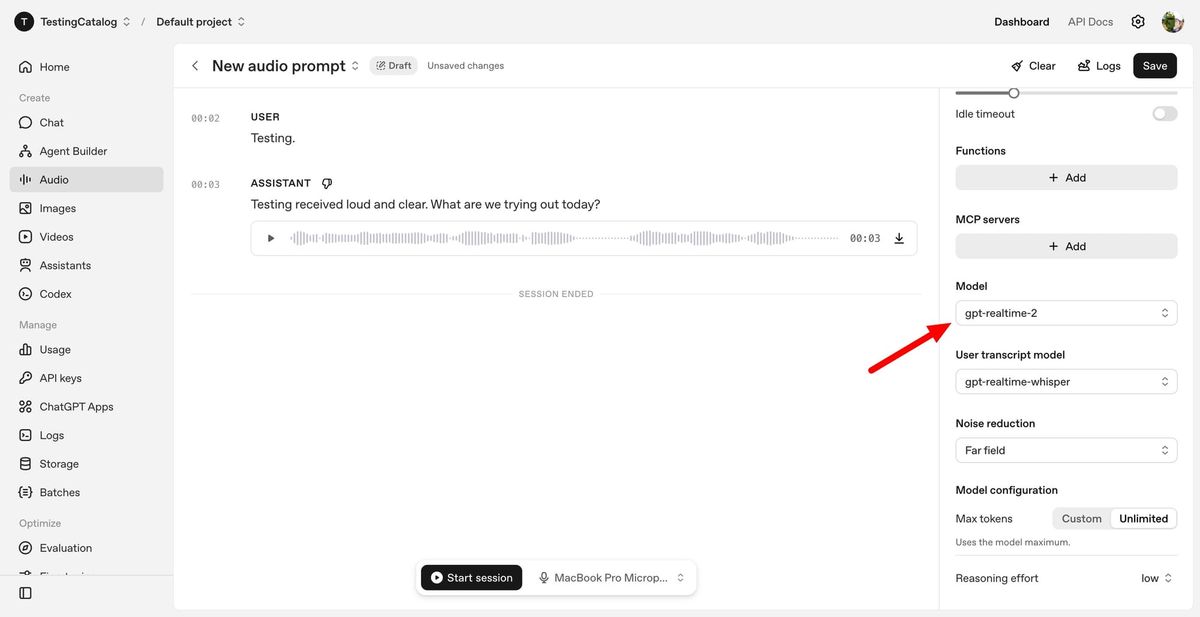

GPT-Realtime-2: GPT-5-Class Reasoning in Live Conversations

GPT-Realtime-2 is the centerpiece of OpenAI’s new lineup, bringing what the company calls GPT-5-class reasoning to spoken interactions. Designed for live conversations, it can manage interruptions, corrections, topic shifts, and multi-step tasks without collapsing into basic call-and-response behavior. The model features a 128K token context window—up from 32K in the previous generation—supporting longer, more coherent voice sessions. Developers can control “reasoning effort” from minimal to xhigh, choosing faster responses for simple flows or deeper reasoning for complex problem-solving. GPT-Realtime-2 also supports parallel tool calls, allowing the agent to say short preambles such as “let me check that” while executing background actions. Improved recovery behavior means that when tasks fail, the system can explain issues and continue the conversation instead of silently stalling. For voice app development, this turns voice agents into more reliable operators capable of orchestrating tools, workflows, and multi-turn logic in real time.

GPT-Realtime-Translate: Live Translation AI for Multilingual Voice Apps

GPT-Realtime-Translate focuses on live translation AI, enabling real-time multilingual conversations within voice applications. It accepts speech input in more than 70 languages and can produce speech output in 13 languages, designed to keep pace with speakers even when they talk quickly or change topics mid-sentence. The model is tuned to preserve meaning, context, and domain-specific terminology, making it suitable for customer support, cross-border sales, education platforms, live events, and media localization. Importantly for developers, this capability can be integrated directly via the OpenAI voice API, allowing them to add live translation to existing voice workflows without building a separate translation stack. As businesses increasingly serve global audiences, GPT-Realtime-Translate positions voice interfaces as immediate communication bridges, not just front ends that pass audio to other systems. This reduces friction in building multilingual voice agents that can listen, translate, and respond in real time across diverse use cases.

GPT-Realtime-Whisper: Low-Latency Speech Transcription for Streaming Audio

GPT-Realtime-Whisper brings streaming speech transcription to the OpenAI voice API, targeting scenarios where audio must be transcribed as people speak. The model provides low-latency speech-to-text, making it a fit for live captions, meeting notes, and voice-driven workflows where users need text outputs while the conversation is still unfolding. Unlike batch transcription pipelines that process recordings after the fact, GPT-Realtime-Whisper is built for continuous recognition over streaming audio, aligning with the broader push to treat voice as an operational layer rather than a post-processing artifact. Developers can wire it into voice apps as a dedicated transcription tier, while routing reasoning-heavy turns to GPT-Realtime-2 and translation workloads to GPT-Realtime-Translate. This separation of concerns simplifies architecture: transcription, translation, and reasoning each have their own track, yet all run within the same real-time voice infrastructure, helping teams build production-ready voice agents with responsive speech transcription at their core.

From Chatbots to Operational Voice Agents

Taken together, OpenAI’s three real-time voice models mark a shift from conversational novelties toward operational voice agents embedded in business workflows. GPT-Realtime-2 focuses on complex reasoning and tool use during live calls, GPT-Realtime-Translate enables immediate multilingual communication, and GPT-Realtime-Whisper supplies streaming speech transcription for continuous recognition. This architecture addresses longstanding pain points such as context loss during long calls, brittle interruption handling, and the need for custom orchestration logic around traditional voice bots. Developers can now design voice-to-action flows where users speak naturally while the system executes tasks in the background, systems-to-voice patterns where back-end processes are voiced proactively, and voice-to-voice experiences that combine reasoning, translation, and transcription in one seamless interaction. With these models accessible via the OpenAI voice API, voice app development can move beyond simple chatbot exchanges to robust, tool-using agents that assist with real operational work in production environments.