Why Anecdotes About AI Speed Are Not Enough

Many engineering leaders hear the same story: after adopting AI coding tools, developers say they ship features faster and feel more productive. Yet very few teams can prove that these gains are real, sustained, or visible in business outcomes. Public figures only deepen the confusion. GitHub cites 55% faster task completion in controlled trials. Gartner forecasts 25–30% productivity gains by 2028 from AI across the lifecycle, yet estimates just a 10% uplift from code-generation tools today and reports that only 34% of teams see high productivity gains. These numbers measure different scopes and time horizons, so they cannot be treated as interchangeable. At the same time, DORA research links AI adoption to higher throughput but lower delivery stability. To move beyond hype, teams need a way to test the ROI of AI coding tools directly against their own engineering metrics, not vendor benchmarks.

Start With Strong Engineering Foundations Before Measuring AI ROI

The latest DORA research frames AI as an amplifier of your existing engineering system, not a magic productivity button. Teams with clear workflows, a solid internal platform, and disciplined version control see AI accelerate already healthy practices. Struggling organisations, by contrast, find that AI magnifies chaos: more code, more handoffs, more bottlenecks. The updated DORA framework for the ROI of AI-assisted software development ties value creation to seven capabilities, including platform quality and AI-accessible internal data, which then feed into improved delivery metrics and, ultimately, financial outcomes. This means you cannot meaningfully assess the ROI of AI coding tools if your pipelines are fragile, tests are unreliable, or work intake is ad hoc. Before applying any measurement model, invest in continuous integration, automated testing, and working in small batches, so that a throughput increase does not simply translate into instability and rework.

Use DORA Metrics as the Backbone of AI Coding Tools Assessment

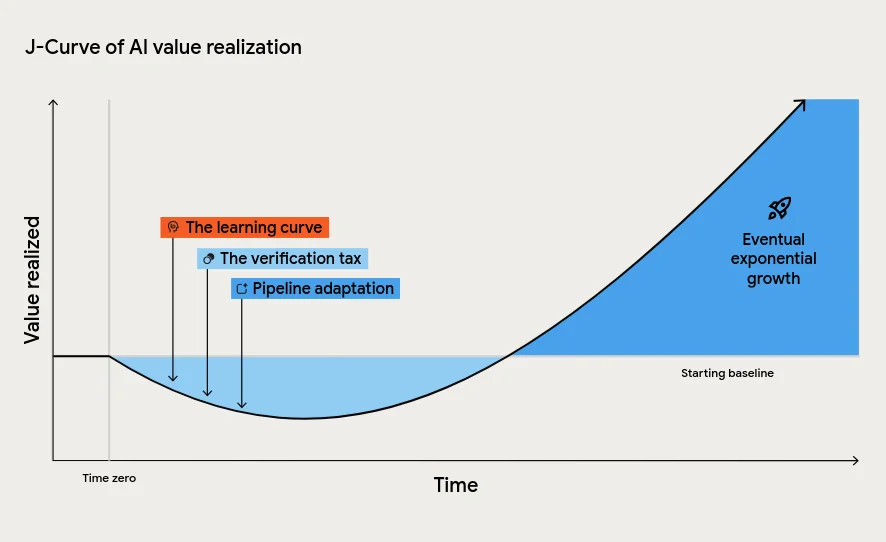

To measure AI impact systematically, anchor your analysis in the DORA framework: deployment frequency, lead time for changes, change failure rate, and time to restore service. These developer productivity metrics capture how quickly and safely value flows from idea to production. The DORA ROI model connects improvements in these metrics to non-financial outcomes such as developer and user experience, and then to cost savings and revenue effects. Crucially, the model also accounts for the J-curve of value realisation: a temporary dip in performance caused by the learning curve, the verification tax of reviewing AI-generated code, and necessary changes to downstream processes. Leaders who expect a straight-line improvement risk pulling the plug during this dip. Instead, define a baseline, anticipate short-term turbulence, and track whether your DORA metrics recover and surpass pre-AI levels over a realistic time horizon.
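As a rough illustration of what a baseline might look like, the sketch below computes the four DORA metrics from a list of deployment records. The `Deployment` record shape, the field names, and the use of medians are assumptions made for this example, not part of any DORA tooling; in practice the data would come from your own CI/CD and incident systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Summarise the four DORA metrics over one observation period."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    restore_hours = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failure in the period has been restored yet
        "median_time_to_restore_hours": median(restore_hours) if restore_hours else None,
    }


# Made-up sample data: four deployments over a two-day window.
start = datetime(2025, 1, 6, 9, 0)
sample = [
    Deployment(start, start + timedelta(hours=2)),
    Deployment(start, start + timedelta(hours=4)),
    Deployment(start, start + timedelta(hours=6), failed=True,
               restored_at=start + timedelta(hours=9)),
    Deployment(start, start + timedelta(hours=8)),
]
baseline = dora_metrics(sample, period_days=2)
print(baseline)
```

Running the same function over a pre-AI window and over successive post-adoption windows gives a series you can inspect for the expected dip and recovery, rather than relying on a single before-and-after comparison.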

Look Beyond Cycle Time: Throughput, Stability, and Commit-Level Value

Faster lead time alone does not prove sustainable AI productivity gains. The 2025 DORA research found that AI adoption tends to raise throughput while also increasing delivery instability, suggesting more changes are shipped but with higher risk. To understand the full picture, you need a richer set of engineering metrics. Commit-level analysis, such as Engineering Throughput Value (ETV), offers one approach: it scores each team’s commits consistently against a pre-AI baseline, revealing whether changes associated with AI usage actually translate into meaningful throughput improvements in your own codebase. Combined with DORA stability metrics like change failure rate and time to restore, this helps identify whether AI is clearing bottlenecks or simply pushing more risky changes through the pipeline. The goal is not to celebrate more lines of code, but to verify that AI-driven throughput gains come without unacceptable increases in incidents, downtime, or rework.
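One way to make the throughput-versus-stability check concrete is to compare a current period against the pre-AI baseline on both dimensions at once. The sketch below is not ETV and does not reproduce its scoring; the function name, the dictionary keys, and the `max_cfr_increase` tolerance are all hypothetical choices for illustration, and the tolerance in particular should be set to whatever your team considers acceptable.

```python
def compare_to_baseline(baseline: dict, current: dict,
                        max_cfr_increase: float = 0.02) -> dict:
    """Compare throughput and stability for one period against a baseline.

    Both inputs are plain dicts with two assumed keys:
      "deployment_frequency_per_day" and "change_failure_rate".
    max_cfr_increase is a hypothetical tolerance, in absolute rate points.
    """
    base_freq = baseline["deployment_frequency_per_day"]
    if base_freq <= 0:
        raise ValueError("baseline deployment frequency must be positive")

    # Relative change in throughput, absolute change in failure rate.
    throughput_change = (current["deployment_frequency_per_day"] - base_freq) / base_freq
    cfr_change = current["change_failure_rate"] - baseline["change_failure_rate"]

    if throughput_change <= 0:
        verdict = "no throughput gain"
    elif cfr_change <= max_cfr_increase:
        verdict = "throughput up, stability held"
    else:
        verdict = "throughput up, stability degraded"

    return {
        "throughput_change": throughput_change,
        "change_failure_rate_change": cfr_change,
        "verdict": verdict,
    }


# Made-up numbers: 50% more deployments, but the failure rate doubled.
pre_ai = {"deployment_frequency_per_day": 2.0, "change_failure_rate": 0.10}
post_ai = {"deployment_frequency_per_day": 3.0, "change_failure_rate": 0.20}
result = compare_to_baseline(pre_ai, post_ai)
print(result["verdict"])
```

Reporting the two changes side by side keeps a throughput gain from being read in isolation; the same pattern extends to time to restore or rework rates if you track them.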

Turn Measurements Into Decisions About AI Investment

Once foundations and metrics are in place, you can treat AI adoption as an investment decision rather than a trend to follow. The DORA ROI framework uses a standard formula (value minus investment, divided by investment), supported by a value model that traces how improvements in engineering metrics flow into business outcomes. The authors present their sample calculator as a high-uncertainty estimate meant to spark discussion, not as a precise forecast, and they highlight that falling inference costs shift the real burden of AI to governance and upskilling. Meanwhile, tools like ETV let you continuously test whether AI-linked changes outperform your pre-AI baseline at the commit level. By combining a DORA-based assessment with granular, commit-level engineering metrics, leaders can decide where AI genuinely pays off, where it increases instability, and where to adjust workflows or decommission tools that do not deliver sustained ROI.
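The ROI formula itself is simple enough to sketch in a few lines. The example below applies it to three scenarios rather than a single point estimate, in keeping with the high-uncertainty framing. The function name and every monetary figure are invented for illustration and are not taken from the DORA calculator.

```python
def roi(value: float, investment: float) -> float:
    """Standard ROI: (value - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (value - investment) / investment


# Hypothetical annual figures in one currency: licences, governance,
# and upskilling all count towards the investment.
investment = 100_000
value_scenarios = {
    "pessimistic": 80_000,
    "expected": 150_000,
    "optimistic": 250_000,
}

roi_by_scenario = {name: roi(value, investment) for name, value in value_scenarios.items()}
for name, result in roi_by_scenario.items():
    print(f"{name}: {result:+.0%}")
```

Presenting a range like this makes it harder to mistake an estimate for a forecast, and a negative pessimistic case is a useful prompt for discussing what would have to be true for the investment to pay off.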