From Strategic Investor to Orbital AI Partner

Google’s advanced talks with SpaceX over launching orbital data centers build on a long-standing strategic relationship. In 2015, Google invested approximately USD 900 million (about RM4.14 billion) in SpaceX, securing a 6.1% stake and signaling early belief in the rocket company’s potential beyond launch services. Roughly a decade later, that bet is evolving into a deeper collaboration around Project Suncatcher, Google’s initiative to network solar-powered satellites equipped with Tensor Processing Units (TPUs) into an orbital AI cloud. Google has confirmed it is negotiating a launch agreement that would use SpaceX rockets to place these experimental satellites in orbit, with two prototypes planned in partnership with Planet Labs by 2027. The talks illustrate how the Google-SpaceX partnership is shifting from simple capital alignment to a shared vision of space-based computing as a core pillar of next-generation AI infrastructure.

Space-Based Computing as a New Cloud Architecture Layer

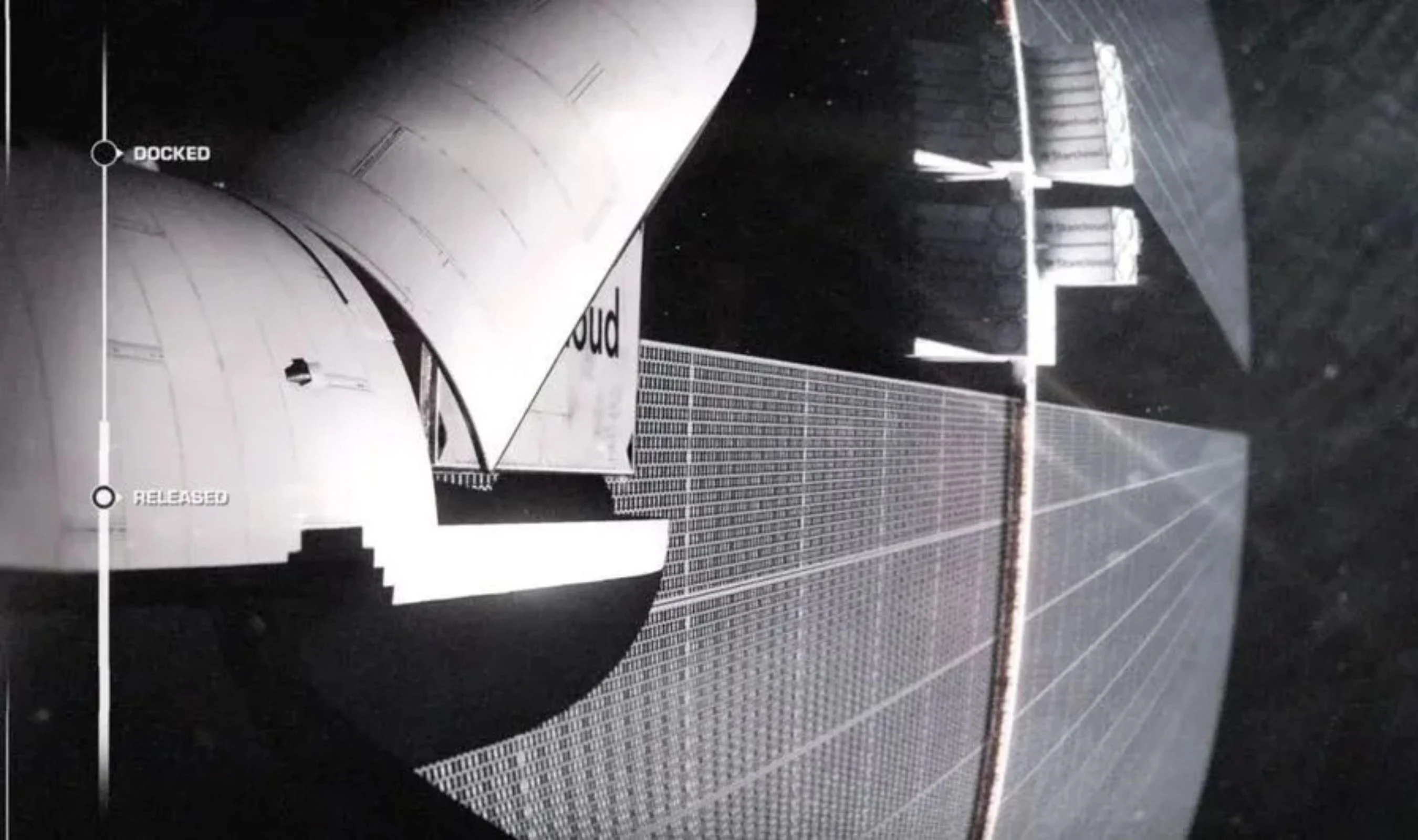

Orbital data centers move the idea of space-based computing from science fiction into a plausible extension of hyperscale cloud trends. Terrestrial data centers are increasingly constrained by energy consumption, land availability, and cooling limits, especially as AI workloads push power density higher. In orbit, continuous solar exposure and radiative cooling create a fundamentally different energy and thermal profile, while physical access risks are reduced. Google’s Project Suncatcher envisions “tiny racks of machines” in space, forming an orbital AI cloud that complements ground facilities rather than replacing them. SpaceX, meanwhile, has pitched satellites with localized AI compute as a future low-cost way to generate AI bitstreams, supported by its reusable launch ecosystem. If realized at scale, orbital data centers could become a distinct architectural layer in global computing, sitting between terrestrial hyperscale regions and edge nodes on the ground.

Latency, Redundancy, and a Reimagined Global Network Topology

Deploying compute in orbit could reshape how cloud providers think about latency, redundancy, and data distribution. Today’s cloud architecture routes data through geographically dispersed terrestrial regions, constrained by undersea cables, terrestrial fiber, and local regulatory environments. Orbital data centers make it possible to place compute closer to space-based assets and new kinds of edge nodes, so that data generated in orbit need not travel to a ground region before it is processed. For certain workloads, such as satellite imaging, global-scale analytics, or cross-region AI inference, running models directly in orbit could reduce hops and improve responsiveness. At the same time, orbital infrastructure offers a novel redundancy layer: workloads could fail over not only between terrestrial regions but also to orbital clusters that are physically and geopolitically decoupled from any single jurisdiction. This emerging topology points toward a future where computing is tied less to national boundaries and more to a multi-layered mesh spanning ground and space.
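To give the latency argument a sense of scale, the short Python sketch below compares one-way propagation delay on a ground-to-orbit link with that of a long terrestrial fiber route. Every input is an illustrative assumption rather than a figure from Google or SpaceX: the 650 km altitude, the 2,000 km fiber route, and the fiber refractive index of 1.47 are placeholders, and the calculation ignores queuing, routing, and processing delay.

```python
# Rough propagation-delay comparison; all scenario inputs are assumptions.
C_VACUUM_KM_S = 299_792.458   # speed of light in vacuum, km/s
FIBER_INDEX = 1.47            # assumed typical refractive index of optical fiber


def one_way_delay_ms(distance_km: float, refractive_index: float = 1.0) -> float:
    """Propagation delay in milliseconds over a straight path."""
    speed_km_s = C_VACUUM_KM_S / refractive_index
    return distance_km / speed_km_s * 1000.0


# Hypothetical scenario: a satellite directly overhead at 650 km altitude
# versus a 2,000 km terrestrial fiber route to a distant cloud region.
uplink_ms = one_way_delay_ms(650.0)
fiber_ms = one_way_delay_ms(2000.0, FIBER_INDEX)

print(f"ground-to-orbit, 650 km in vacuum: {uplink_ms:.2f} ms")
print(f"terrestrial fiber, 2,000 km:       {fiber_ms:.2f} ms")
```

Under these assumptions the vertical hop to orbit costs only a few milliseconds, less than the long fiber route. A real link would rarely be straight overhead, so actual figures would depend on the slant range and on how many ground or inter-satellite hops the traffic takes.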

Anchoring SpaceX’s IPO and Google’s AI Moonshots

Economically, the proposed orbital data centers could anchor SpaceX’s anticipated IPO while giving Google a marquee launch partner for its AI moonshots. Reports indicate SpaceX is targeting a valuation in the multi-trillion-dollar range and positioning orbital data centers as its next major commercial product after launch and connectivity. Having Google as an early orbital AI cloud customer strengthens that narrative, especially as SpaceX promotes reusable rockets and mass satellite deployment as key cost advantages. At the same time, Google is hedging by talking to other launch providers, preserving leverage as it explores prototype launches by 2027. Both companies acknowledge that terrestrial data centers remain cheaper today once satellite construction and launch costs are included, so the near-term focus is on experimentation rather than wholesale migration. Still, if orbital AI infrastructure proves economically viable at scale, it could create a new revenue stream for SpaceX and a differentiated cloud tier for Google.

Unresolved Challenges and the Road to Mainstream Orbital Clouds

Despite the bold vision, orbital data centers face significant technical, economic, and regulatory hurdles. Hardware must be designed or hardened to withstand radiation and extreme thermal cycles, while maintenance logistics in orbit remain complex compared with ground facilities. Bandwidth between Earth and orbit will be a limiting factor, requiring careful workload selection: high-value, compute-intensive tasks like AI training or inference may justify the overhead, but many other cloud workloads will not. Regulatory frameworks governing large constellations of compute satellites, spectrum use, and debris mitigation are still evolving. Economically, today’s cost curves still favor terrestrial data centers, even with SpaceX’s reusable launch record and ambitions to scale satellite deployments dramatically. Yet the strategic direction is clear: by exploring orbital data centers now, Google and SpaceX are positioning themselves for a future where AI workloads fluidly span local devices, terrestrial regions, and space-based infrastructure in a unified, globally distributed cloud.
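The workload-selection point can be made concrete with a simple rule of thumb: a job suits orbit only when its compute time clearly outweighs the time needed to move its data over the Earth-to-orbit link. The Python sketch below is one hypothetical way to express that test. The function names, the 10x threshold, the 10 Gbit/s link, and both example jobs are assumptions chosen for illustration, not figures from either company.

```python
def transfer_seconds(data_gb: float, link_gbps: float) -> float:
    """Seconds needed to move data_gb gigabytes over a link of link_gbps gigabits/s."""
    return data_gb * 8.0 / link_gbps


def worth_offloading(data_gb: float, compute_seconds: float,
                     link_gbps: float, min_ratio: float = 10.0) -> bool:
    """True when compute time is at least min_ratio times the transfer time."""
    return compute_seconds >= min_ratio * transfer_seconds(data_gb, link_gbps)


# Hypothetical jobs, both moving 500 GB over an assumed 10 Gbit/s link.
LINK_GBPS = 10.0
training_job = worth_offloading(data_gb=500.0, compute_seconds=36_000.0, link_gbps=LINK_GBPS)
archival_job = worth_offloading(data_gb=500.0, compute_seconds=60.0, link_gbps=LINK_GBPS)

print(f"transfer time: {transfer_seconds(500.0, LINK_GBPS):.0f} s")
print(f"ten-hour training run qualifies: {training_job}")
print(f"one-minute archival task qualifies: {archival_job}")
```

A real placement decision would also weigh launch and hardware cost, link availability during each orbital pass, and data-residency rules, none of which this sketch models.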