From Chatting to Shipping: Why Prompt Plus Tools Beats Raw Prompting

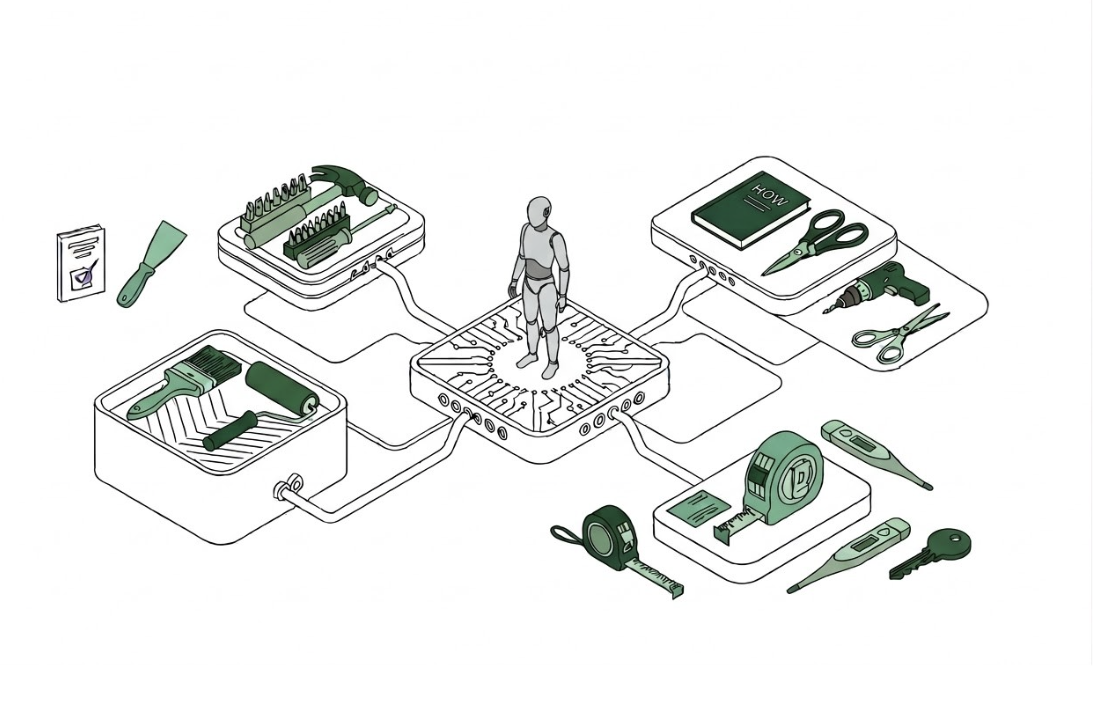

Most teams start their AI journey with a chat window and clever prompts. That is fine for demos, but real productivity comes when you connect large language models to practical tools: APIs, CRMs, ticketing systems, search, and analytics. Think of it as the “prompt plus tools” equation: the prompt sets intent, tools provide actions, and the model orchestrates them into outcomes. Production experience from ThoughtSpot’s shared Agent Platform shows that prompts and tools create the business value, while everything else (session stores, context handling, tracing, security) is infrastructure that should be standardized. The hardware underneath is moving in the same direction. Google’s new inference-focused TPU 8i chips are explicitly optimized for serving AI agents, a recognition that inference is the stage where copilots actually summarize calls, route tickets, and trigger workflow steps. Together, these trends mark a shift from isolated chatbots toward agentic AI workflows that execute multi-step tasks in real environments rather than just answering questions in a browser tab.

Agentic AI Workflows: Turning a Model into a Task-Completing Agent

An AI agent in the workplace is not just a smarter chatbot; it is a workflow-aware system wired into your stack. ThoughtSpot’s shared Agent Platform framing is a useful mental model: define the tools an agent can use, wrap them with guardrails, and reuse a common layer for memory, auth, and observability. That way, each new agent requires only a new prompt and tool configuration, not fresh infrastructure. Orkes pushes this further by acting as a durable execution layer for agentic AI workflows. Its platform coordinates tools and AI agents step by step, ensuring they follow business logic, handle failures, and keep humans in the loop when needed. This is how you escape the “pilot trap,” where experiments never reach production. Instead of building fragile, one-off bots, you build a shared agentic platform where each prompt-plus-tools combination is reusable, governable, and ready to support mission-critical workloads.
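The shared-platform idea above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not ThoughtSpot's or Orkes's actual API: `AgentConfig`, `Tool`, `SharedPlatform`, and the two stand-in tools are all hypothetical names. It shows one common layer that owns the tool registry, a simple role-based guardrail, and a trace log, so that a new agent is only a prompt plus a list of tool names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class AgentConfig:
    """Everything a new agent needs: a prompt and a tool allowlist (hypothetical shape)."""
    name: str
    system_prompt: str
    tool_names: Tuple[str, ...]
    role: str


@dataclass
class Tool:
    name: str
    func: Callable[..., object]
    allowed_roles: Tuple[str, ...]  # guardrail: which roles may invoke this tool


@dataclass
class SharedPlatform:
    """Hypothetical common layer: tool registry, permission check, trace log."""
    tools: Dict[str, Tool] = field(default_factory=dict)
    trace: List[dict] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def call(self, agent: AgentConfig, tool_name: str, **kwargs):
        allowed = (
            tool_name in agent.tool_names
            and tool_name in self.tools
            and agent.role in self.tools[tool_name].allowed_roles
        )
        # Every attempt is traced, including the ones that are denied.
        self.trace.append(
            {"agent": agent.name, "tool": tool_name, "args": kwargs, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"{agent.name} may not call {tool_name}")
        return self.tools[tool_name].func(**kwargs)


# Stand-in tools; a real deployment would wrap ticketing or payment APIs here.
def lookup_ticket(ticket_id: str) -> dict:
    return {"id": ticket_id, "status": "open"}


def refund_order(order_id: str) -> str:
    return f"refunded {order_id}"


platform = SharedPlatform()
platform.register(Tool("lookup_ticket", lookup_ticket, ("support", "sales")))
platform.register(Tool("refund_order", refund_order, ("finance",)))

# A new agent is just configuration: no new infrastructure is written for it.
triage_agent = AgentConfig(
    name="triage",
    system_prompt="Classify incoming tickets and look up their status.",
    tool_names=("lookup_ticket",),
    role="support",
)

print(platform.call(triage_agent, "lookup_ticket", ticket_id="T-1"))
```

Because permission checks and tracing live in `SharedPlatform.call`, adding a second agent means writing another `AgentConfig`, and a denied call such as `platform.call(triage_agent, "refund_order", order_id="O-1")` raises `PermissionError` while still leaving a trace entry.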

New Brains and New Chips: GPT‑5.5 Workflows and Enterprise-Scale Inference

Agentic AI workflows depend on two pillars: smarter models and cheaper, faster inference. On the model side, GPT‑5.5 is designed for messy, multi-step work: writing and debugging code, researching online, analyzing data, operating software, and moving across tools until a task is finished. It can plan, call tools, check its own work, and keep going without you shepherding every step, which suits it to engineering, research, and analytics workflows. On the infrastructure side, Google’s TPU 8i inference chips are built specifically for serving AI agents and copilots at scale. Inference is where answers are generated, tickets are routed, and automations fire, so specialized inference hardware directly affects the economics of AI agents in the workplace. Together, advanced models and inference chips make it feasible to run thousands of concurrent agentic AI workflows without sacrificing latency, cost, or reliability.

A Mini Blueprint: Designing Your First Agentic AI Workflow

To move beyond ad hoc prompting, start with a single painful process and turn it into an agent. First, pick a high-friction workflow, such as triaging support tickets, preparing weekly sales reports, or updating engineering status dashboards. Second, map the steps as a flowchart: inputs, decisions, approvals, and outputs. Third, list the tools the AI must use: ticketing APIs, BI dashboards, document stores, and email or chat systems. Fourth, define success: what “done” means, which metrics matter, and where human review stays mandatory. Finally, implement a simple agentic AI workflow: a system prompt describing the task, a small set of tools, and an orchestration layer (such as a shared agent platform or AI workflow orchestration engine) to manage state, retries, and handoffs. Start narrow, ship quickly, and expand only once the agent reliably completes the task end to end.
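The final step of the blueprint can be sketched as a small loop. This is an illustrative sketch under loud assumptions: `scripted_model` is a hard-coded stand-in for a real model call, and `classify`, `route`, `RunState`, and `run_agent` are hypothetical names rather than any vendor's API. It shows the three pieces the blueprint names, a system prompt, a small tool set, and an orchestration loop that tracks state, retries failed tool calls, and hands off to a human when it cannot finish.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

SYSTEM_PROMPT = "Triage each support ticket: classify it, then route it to the right queue."


@dataclass
class RunState:
    ticket: dict
    history: List[dict] = field(default_factory=list)
    status: str = "running"  # running | done | needs_human


# Stand-in tools; real ones would call a ticketing API.
def classify(ticket: dict) -> str:
    text = ticket["text"].lower()
    return "billing" if ("invoice" in text or "refund" in text) else "general"


def route(ticket: dict, category: str) -> str:
    return f"{ticket['id']} -> {category}-queue"


TOOLS: Dict[str, Callable[..., object]] = {"classify": classify, "route": route}


def scripted_model(system_prompt: str, state: RunState) -> Optional[dict]:
    """Stand-in for an LLM: returns the next tool call, or None when the task is done."""
    done = [step["tool"] for step in state.history]
    if "classify" not in done:
        return {"tool": "classify", "args": {"ticket": state.ticket}}
    if "route" not in done:
        category = state.history[-1]["result"]
        return {"tool": "route", "args": {"ticket": state.ticket, "category": category}}
    return None


def call_with_retries(func, args: dict, max_attempts: int = 3, delay_s: float = 0.0):
    """Retry a tool call a fixed number of times, re-raising the last error."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func(**args)
        except Exception:
            if attempt == max_attempts:
                raise
            time.sleep(delay_s)


def run_agent(ticket: dict, model=scripted_model, tools=TOOLS, max_steps: int = 5) -> RunState:
    state = RunState(ticket=ticket)
    for _ in range(max_steps):
        action = model(SYSTEM_PROMPT, state)
        if action is None:
            state.status = "done"
            return state
        try:
            result = call_with_retries(tools[action["tool"]], action["args"])
        except Exception as exc:
            # Handoff: retries are exhausted, so stop and ask for a human.
            state.history.append({"tool": action["tool"], "error": str(exc)})
            state.status = "needs_human"
            return state
        state.history.append({"tool": action["tool"], "result": result})
    state.status = "needs_human"  # step budget exhausted without finishing
    return state


final = run_agent({"id": "T-7", "text": "Please refund my invoice"})
print(final.status, final.history[-1])
```

Swapping `scripted_model` for a real model client is the only change needed to make the loop model-driven; the step budget and the `needs_human` status keep the narrow first version from running unattended when something goes wrong.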

Monitor, Iterate, Escalate: Keeping Agents Reliable and Safe

Agentic AI workflows are not fire-and-forget; they need guardrails and continuous tuning. Begin with rigorous logging: capture prompts, tool calls, outputs, and failure modes so you can see where agents struggle or hallucinate. Platforms like Orkes emphasize orchestration, controls, and visibility so developers can run advanced AI agents with confidence, including durable execution, retries, and human-in-the-loop checkpoints. Use that visibility to add constraints to your prompts, tighten tool permissions, and refine success criteria. For complex edge cases, bake in explicit escalation paths: if confidence is low, data is missing, or policy violations are detected, the agent should stop and hand back to a human. Over time, treat each failure as training data for the workflow, not just the model. The result is a stable layer of agentic AI workflows that actually ship work instead of generating one-off chat transcripts.
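The logging and escalation rules above might look like the following sketch. The names `StepResult`, `escalation_reason`, and `record_step`, the `confidence` field, and the 0.7 threshold are all assumptions made for illustration; how a confidence score is actually obtained depends on your model and tooling. The sketch records each step as a structured log entry and returns an explicit reason whenever the agent should stop and hand back to a human.

```python
import json
import logging
from dataclasses import dataclass
from typing import List, Optional

logger = logging.getLogger("agent.audit")

CONFIDENCE_FLOOR = 0.7  # assumed threshold; tune it against real review outcomes


@dataclass
class StepResult:
    tool: str
    output: Optional[dict]
    confidence: float        # assumed to be supplied by the model or a separate scorer
    policy_flags: List[str]  # e.g. names of policy checks that failed


def escalation_reason(step: StepResult, required_fields: List[str]) -> Optional[str]:
    """Return why this step must go to a human, or None if the agent may continue."""
    if step.policy_flags:
        return "policy_violation"
    if step.output is None or any(step.output.get(f) is None for f in required_fields):
        return "missing_data"
    if step.confidence < CONFIDENCE_FLOOR:
        return "low_confidence"
    return None


def record_step(prompt: str, step: StepResult, required_fields: List[str]) -> dict:
    """Log the prompt, tool call, output, and escalation decision as one JSON entry."""
    entry = {
        "prompt": prompt,
        "tool": step.tool,
        "output": step.output,
        "confidence": step.confidence,
        "policy_flags": step.policy_flags,
        "escalate": escalation_reason(step, required_fields),
    }
    logger.info(json.dumps(entry))
    return entry


entry = record_step(
    "Route ticket T-7",
    StepResult(tool="route", output={"queue": None}, confidence=0.95, policy_flags=[]),
    required_fields=["queue"],
)
print(entry["escalate"])
```

Keeping the escalation decision inside the log entry means each hand-off to a human is recorded next to the prompt and tool output that caused it, which is the raw material for tightening prompts and permissions later.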