Cerebras IPO Analysis: From Niche Experiment to Profitable AI Contender

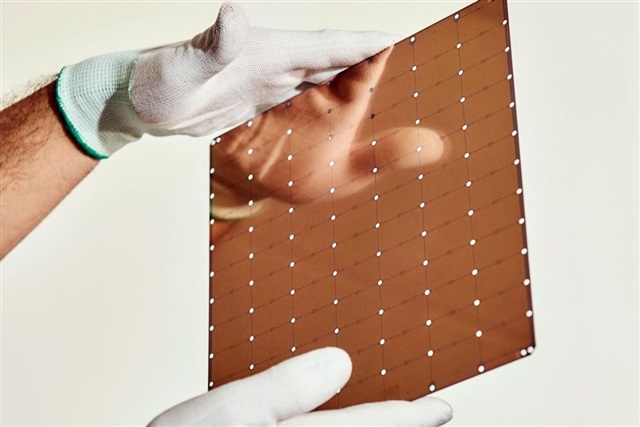

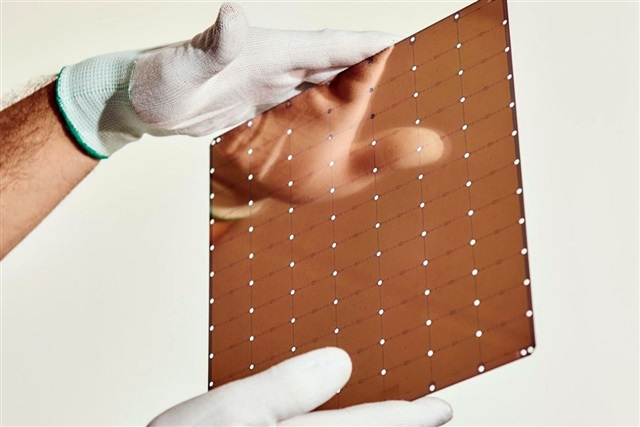

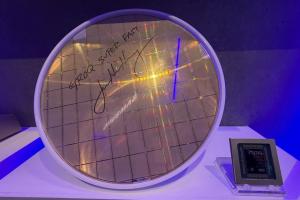

Cerebras Systems has filed for an IPO on Nasdaq, marking a turning point for a company once seen as a niche challenger to GPU giants. According to its filing, Cerebras generated US$510 million (approx. RM2.38 billion) in revenue and a net profit of US$87.9 million (approx. RM410 million) in 2025, a sharp reversal from prior losses. Its strategy hinges on a radical design: a wafer-scale engine that uses an entire silicon wafer as a single AI processor, instead of cutting the wafer into many smaller chips as is done for GPUs. This approach targets both training and inference workloads with very large on-chip memory and high memory bandwidth, reducing the need for complex multi-GPU clusters. A deep partnership with Abu Dhabi–based G42 positions Cerebras within a growing Middle Eastern AI ecosystem, illustrating how demand for AI semiconductor hardware is pushing cloud providers to diversify beyond NVIDIA-centric infrastructure.
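As a rough sanity check on the figures quoted above, the short Python sketch below works out the net profit margin and the exchange rate implied by the ringgit conversions. It uses only the rounded numbers given in this article; the variable names are illustrative, and the results are approximations rather than figures taken from the filing itself.

```python
# Rounded figures as quoted in the article, in millions.
# These are the article's numbers, not values re-checked against the filing.
revenue_usd_m = 510.0
net_profit_usd_m = 87.9
revenue_myr_m = 2380.0      # "approx. RM2.38 billion"
net_profit_myr_m = 410.0    # "approx. RM410 million"

# Net margin: share of revenue that ends up as net profit.
net_margin = net_profit_usd_m / revenue_usd_m

# Exchange rate implied by each quoted conversion (MYR per USD).
implied_rate_revenue = revenue_myr_m / revenue_usd_m
implied_rate_profit = net_profit_myr_m / net_profit_usd_m

print(f"Net margin: {net_margin:.1%}")
print(f"Implied MYR/USD (revenue): {implied_rate_revenue:.2f}")
print(f"Implied MYR/USD (net profit): {implied_rate_profit:.2f}")
```

On these rounded inputs the net margin comes to roughly 17%, and both conversions imply a rate of about RM4.66 to RM4.67 per US dollar, so the two ringgit figures are consistent with each other.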

How Wafer-Scale Engines Rewire AI Semiconductor Hardware

Traditional AI accelerators, such as GPUs, scale performance by linking thousands of chips with high-speed interconnects, which introduces latency, power losses, and software complexity. Cerebras’ wafer-scale engine flips this model by keeping compute and memory on a single, massive piece of silicon. In practice, this means very large language models can be trained with fewer physical devices and simpler networking, while inference can run closer to peak hardware efficiency. The company’s architecture is designed to excel at the sparse, irregular workloads used in modern generative AI, offering an alternative path as demand for GPU capacity repeatedly outstrips supply. If its IPO succeeds, Cerebras could validate wafer-scale processors as a mainstream option, encouraging more specialised accelerators (from optical chips to domain-specific xPUs) and giving hyperscale and regional clouds new bargaining power in the AI chip supply chain.

Kyocera’s Multilayer Ceramic Core Substrate: Quietly Reinventing AI Chip Packaging

While wafer-scale engines grab headlines, Kyocera is targeting a less visible but crucial layer of AI performance: the substrate that sits under advanced chips. The company has developed a multilayer ceramic core substrate for xPUs and switch ASICs, built from its proprietary Fine Ceramic materials. Substrates route power and signals between the chip and the circuit board; as AI processors grow larger and denser, organic-core substrates struggle with warpage and wiring limits. Kyocera’s highly rigid ceramic core reduces deformation in large packages and supports slimmer designs, improving reliability and thermals. Its multilayer structure enables high-density, three-dimensional wiring through tiny vias formed before firing, allowing finer pitches than conventional drilling in organic cores. This ceramic core substrate targets 2.5D packaging, where multiple chips sit side-by-side on an interposer, and shows how materials innovation is becoming as important as transistor scaling for next-generation AI data centres.

A Diversifying AI Chip Supply Chain: From Fabs to Data Centres

Taken together, Cerebras’ wafer-scale processors and Kyocera’s ceramic core substrate illustrate how AI hardware is diversifying beyond standard GPUs and organic substrates. As generative AI workloads surge, hyperscale and regional data centres are mixing architectures: wafer-scale engines for ultra-large models, xPUs and ASICs on advanced substrates for networking and specialised inference, and GPUs where ecosystem maturity matters most. This fragmentation is reshaping the AI chip supply chain, forcing foundries, packaging houses, substrate suppliers and cloud providers to co-design systems from the materials level upward. New demand for ceramic core substrates could shift some value from traditional organic PCB ecosystems to advanced ceramics, where Japanese and other Asian manufacturers are strong. At the same time, alternative accelerators like Cerebras’ reduce single-vendor dependency, altering procurement dynamics and creating openings for regional players that can integrate, host and optimise a broader mix of AI semiconductor hardware.

Implications for Asia and a Takeaway for Malaysia

For Asia, these trends present both opportunities and strategic choices. Japan’s Kyocera is positioning itself at the high-value materials and packaging layer, while Middle Eastern investments in Cerebras show that capital and demand are no longer concentrated only in US hyperscalers. Asian cloud providers and manufacturers that embrace wafer-scale engines, ceramic core substrates and other specialised AI hardware can reduce exposure to GPU shortages and export controls, while climbing into higher-margin parts of the AI chip supply chain. For Malaysia, a more diversified AI hardware ecosystem could translate into cheaper, more available AI services as operators are no longer locked into a single GPU roadmap. It also opens room for local data centres, integrators, and OSATs to plug into emerging packaging and testing needs. The core message: the more varied the AI hardware stack, the more resilient and affordable future AI infrastructure is likely to become.