The Productivity Hype Gap Around AI Coding Tools

Engineering leaders increasingly report shorter delivery cycles after adopting AI coding tools, yet many lack a credible way to verify whether those gains are real or sustainable. Industry numbers often get blended into a single narrative, even when they measure very different things. GitHub’s controlled trials report task-level speedups, Gartner’s projections estimate future productivity gains, and separate surveys track what teams feel today. On top of this, the 2025 DORA research links AI adoption with higher throughput but also lower delivery stability, while other studies show slower task completion for some experienced engineers when using large language models. Findings with different scopes, cohorts, and time horizons all get summarized as “AI makes developers faster.” Without a consistent engineering metrics framework, finance and engineering leaders end up budgeting against anecdotes instead of evidence, and teams risk mistaking local, short-lived benefits for genuine, organization-wide productivity improvements.

Why Official Engineering Metrics Matter More Than Opinions

To distinguish perceived productivity from actual business value, teams need official, repeatable engineering metrics that tie AI usage directly to codebase outcomes. Navigara’s Engineering Throughput Value (ETV) is one example: a per-commit metric scored against each team’s own pre-AI baseline. By comparing commits over time, ETV aims to test AI-era productivity claims on the code itself rather than on survey responses or isolated experiments. This approach is a response to growing skepticism about outdated or misapplied metrics and to the risk of “AI washing,” where organizations credit AI for improvements that cannot be traced to specific workflow changes or measurable results. When teams standardize on metrics like ETV or the DORA delivery indicators, they gain a consistent lens for evaluating whether the ROI of AI coding tools is real, whether improvements persist beyond pilot projects, and whether bottlenecks have simply moved further downstream.
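The idea of scoring work against a team’s own pre-AI baseline can be sketched in a few lines. This is only an illustration of baseline comparison in general: how ETV actually scores a commit is not described here, so the per-commit scores and the function name `relative_change_vs_baseline` are hypothetical.

```python
from statistics import mean


def relative_change_vs_baseline(baseline_scores, current_scores):
    """Relative change of the mean per-commit score versus a pre-AI baseline.

    Both arguments are lists of numeric per-commit scores. How a commit is
    scored is an assumption left to the caller; this is not the ETV formula.
    """
    if not baseline_scores or not current_scores:
        raise ValueError("both periods need at least one scored commit")
    baseline = mean(baseline_scores)
    if baseline == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    return (mean(current_scores) - baseline) / baseline


# Hypothetical scores: commits from before and after the tool rollout.
change = relative_change_vs_baseline([10, 10], [12, 12])
print(f"{change:+.0%}")
```

Comparing each team only against its own history avoids ranking teams whose codebases and commit habits differ, which is the point of using a per-team baseline.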

Inside DORA’s Framework for Measuring AI Coding Tools ROI

Google Cloud’s DORA team offers a structured engineering metrics framework for translating AI-assisted development into financial outcomes. Their model treats AI as an amplifier that works through seven capabilities, including a quality internal platform, disciplined version control, and AI-accessible internal data. Improvements in these areas should first show up in DORA delivery metrics—such as throughput and stability—then in non-financial outcomes like developer experience, and finally in cost savings and revenue growth. The ROI calculation itself uses a standard formula: value minus investment, divided by investment, with an example modeling returns for a 500-person engineering organization. The report stresses that these calculations are high-uncertainty estimates meant to drive informed discussions, not rigid forecasts. It also highlights that as AI inference costs fall dramatically, the real expense shifts toward governance: managing verification, adjusting workflows, and upskilling people so that productivity gains become measurable, repeatable, and financially meaningful.
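The ROI formula described above, value minus investment divided by investment, is simple enough to express directly. The sketch below is a minimal illustration; the function name `roi` and the dollar figures are hypothetical and are not taken from the DORA report’s 500-person example.

```python
def roi(value: float, investment: float) -> float:
    """Return ROI as a fraction: (value - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (value - investment) / investment


# Hypothetical inputs, for illustration only.
estimated_value = 1_500_000.0  # assumed annual value attributed to AI tooling
total_investment = 1_000_000.0  # assumed licences, governance, and training

print(f"ROI: {roi(estimated_value, total_investment):.0%}")
```

Because both inputs are high-uncertainty estimates, it may be more useful to run the calculation over a range of plausible values than to report a single figure.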

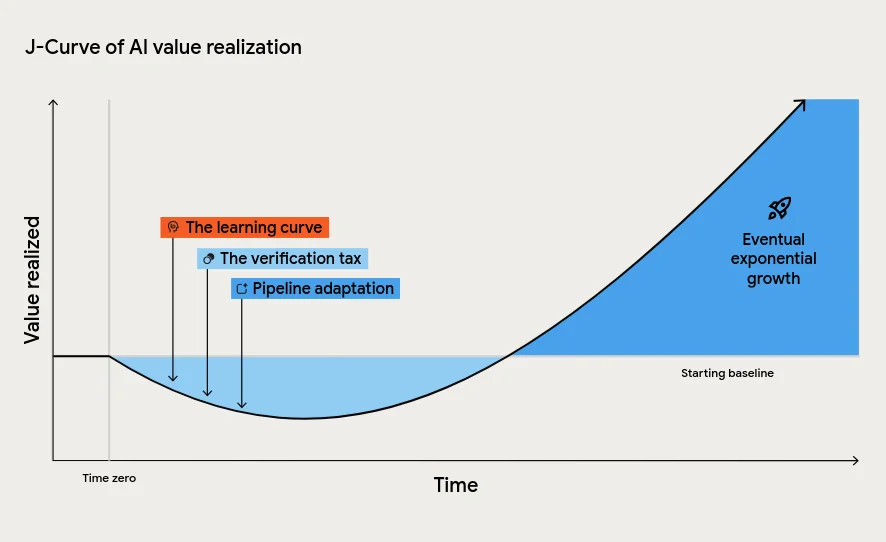

The J-Curve and the Hidden Costs of AI Implementation

DORA’s analysis warns that AI implementation success rarely looks like a smooth upward line. Instead, most organizations face a J-Curve of value realization: an initial productivity dip before sustained gains. This early downturn comes from three main factors. First, teams pay a learning-curve cost as they rewire daily workflows around AI tools. Second, there is a verification tax, where engineers must carefully review AI-generated code to maintain quality. Third, downstream systems (testing, deployment, and change approval) must adapt to handle increased code volume without collapsing. Ignoring this J-Curve tempts leaders to label AI initiatives as failures just as they are paying the “tuition cost of transformation.” The report also describes an instability tax: AI can increase individual effectiveness while raising change failure rates if pipelines and automation are weak, turning local speed boosts into organizational risk.
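One way to watch for the instability tax is to track change failure rate alongside throughput. The sketch below assumes the common definition of change failure rate as the share of deployments that caused a failure; the function name and the sample data are hypothetical.

```python
def change_failure_rate(deployment_failed):
    """Fraction of deployments that caused a failure.

    `deployment_failed` is a list of booleans, one per deployment,
    where True means the deployment led to a failure in production.
    """
    if not deployment_failed:
        raise ValueError("need at least one deployment")
    return sum(deployment_failed) / len(deployment_failed)


# Hypothetical data: more deployments after adoption, but also more failures.
before = [False, False, False, True]
after = [False, True, False, True, False, True, False, False]

print(len(before), change_failure_rate(before))
print(len(after), change_failure_rate(after))
```

In this made-up data, deployment count doubles while the failure rate also rises, which is the pattern the instability tax describes.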

Build Strong Engineering Foundations Before Chasing AI Speed

Both the Navigara work on Engineering Throughput Value and DORA’s ROI framework converge on a core message: AI alone does not transform software delivery. AI coding tools magnify whatever system they are dropped into. High-performing organizations with strong internal platforms, automated testing, continuous integration, and small-batch delivery tend to convert AI-driven throughput into real, measurable value. Struggling teams often see the opposite: localized productivity spikes followed by instability, bottlenecks, and rework. To realize genuine AI implementation success, leaders should first solidify foundational practices, define a small set of trusted engineering metrics, and establish clear baselines before introducing new tools. From there, they can track changes in throughput, stability, and downstream outcomes quarter over quarter. The goal is not to measure AI by how much code it writes, but by which bottlenecks it removes—and whether those changes persist in the metrics long after the pilot buzz fades.