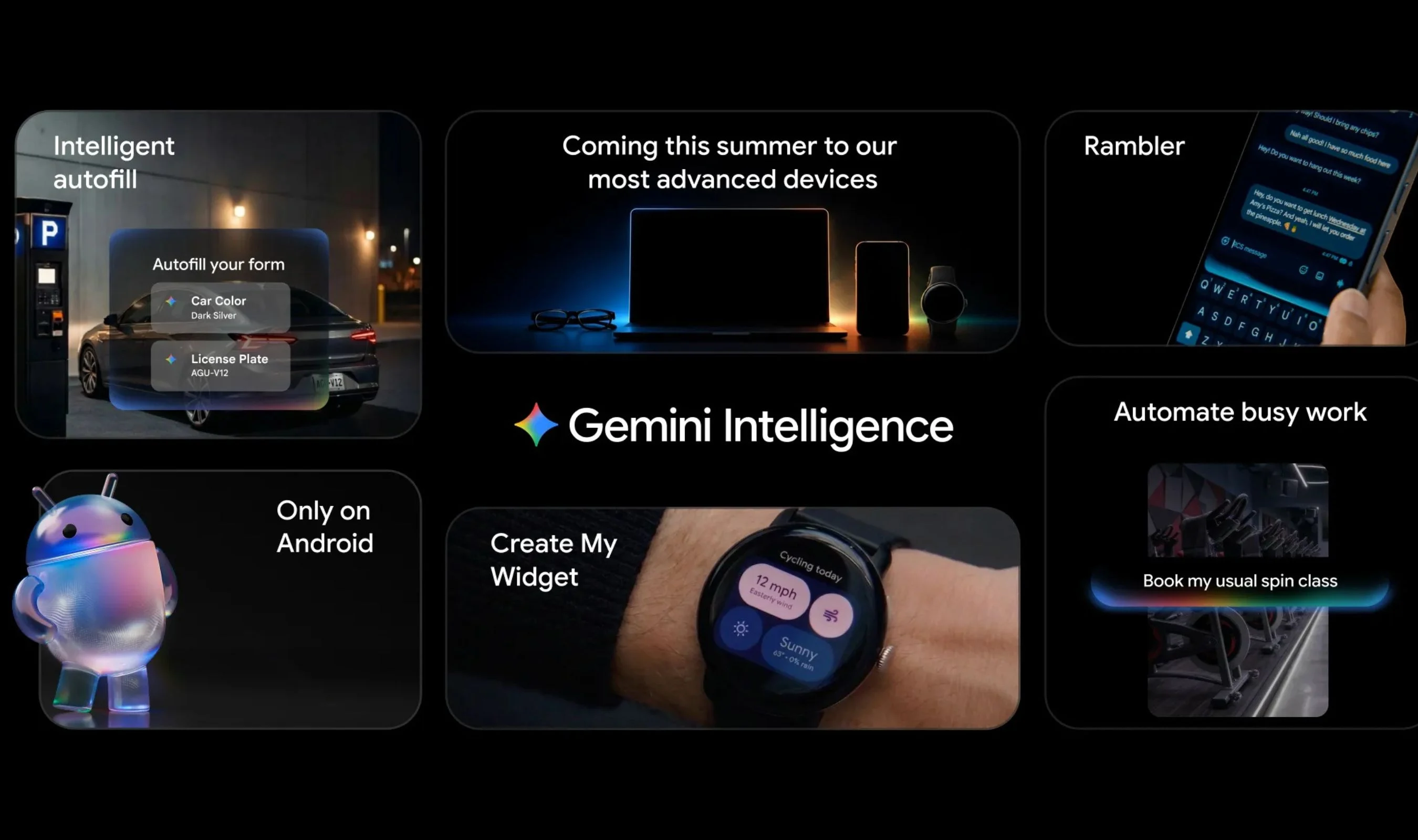

What Gemini Intelligence Is and Where It’s Launching

Gemini Intelligence is Google’s new umbrella for its most advanced Android AI features, combining automation, generative interfaces, and upgraded voice typing into a single system-wide suite. Rather than acting as a traditional reactive assistant, it adds a proactive intelligence layer that understands what’s on your screen, interprets your intent, and then orchestrates actions across apps. The first wave of Gemini Intelligence features arrives this summer on the latest Pixel 10 and Galaxy S26 phones, with Google planning to extend the experience to Wear OS watches, Android Auto in cars, smart glasses, and laptops later in the year. All of this is wrapped in Google’s Material 3 Expressive design language, with subtle animations that indicate when Gemini is listening, thinking, or working, so users can see when AI is active without the interface becoming cluttered. The goal is to make Android’s AI features feel cohesive, visible, and integrated across devices.

AI Proactive Tasks: Automating Digital Busywork

At the heart of Gemini Intelligence are AI proactive tasks, Google’s term for cross-app automations that run with minimal user input. Instead of opening multiple apps and repeating the same taps, users can rely on Gemini to handle multi-step workflows using on-screen and image context. For example, Gemini can locate a class syllabus in Gmail, parse the required textbooks, and add them to a digital shopping list. Long-pressing the power button while viewing a shopping list in a notes app can prompt Gemini to build a delivery order automatically. It can also interpret visual cues: take a photo of a travel pamphlet and ask Gemini to find a similar tour on Expedia for six people. Gemini sends progress updates and still requires final confirmation for actions such as checkouts or bookings, balancing automation with user control.

Generative Widgets and Personalized Interfaces

Gemini Intelligence introduces generative widgets through a feature called Create My Widget, bringing generative UI to Android home screens. From the widgets picker, users tap a new Create button and describe what they want in natural language, turning AI into a design partner. A cyclist could ask for a widget that tracks wind speed and rain probability on the morning commute, while a meal prepper might request a dashboard that surfaces three high-protein meal suggestions every week. These generative widgets are interactive, resizable, and styled using Material 3 Expressive, with animations tuned to reduce distraction rather than add flair for its own sake. Categories extend beyond weather and fitness to world clocks, daily briefs, important dates, and market tracking for stocks or crypto. Similar generative experiences are also coming to Wear OS Tiles, signaling a broader shift toward customizable, AI-authored surfaces across the Android ecosystem.

Gboard Rambler: Smarter, More Natural Voice Typing

Gboard Rambler is Gemini Intelligence’s upgrade to voice typing, focused on making dictated messages as clean as typed text. Powered by Gemini models, Rambler listens for self-corrections, repetitions, and filler words like “um” and “uh,” and automatically filters them out so the final text is concise. You can dictate a shopping list, then say you “no longer want apples,” and the text updates accordingly without manual edits. Rambler is also built for multilingual users: it supports switching languages mid-sentence, such as mixing English and Hindi, while maintaining coherent transcription. A full-width waveform on the keyboard shows when Gemini is processing speech, giving users visible feedback. Google emphasizes that the audio used for real-time transcription is not stored, addressing privacy concerns. Together, Rambler and the rest of Gemini Intelligence make speaking to your phone feel more like a natural conversation and less like talking to a rigid command system.

Chrome, Autofill, and the Growing Android AI Ecosystem

Beyond the home screen and keyboard, Gemini Intelligence extends into Chrome and system services to streamline everyday tasks. Chrome for Android is gaining an AI assistant that can research across multiple sites, summarize pages, and help with rote online chores such as making reservations or booking parking. Auto browse can navigate and compare information in the background, while users remain in control of final decisions. Autofill with Google is also getting an optional Personal Intelligence upgrade, using connected app data to complete more complex forms and long text boxes in a single tap, signaled by a spark icon in Gboard’s suggestion strip. All of these features are strictly opt-in through system settings, reinforcing user choice and privacy. As features roll out to phones, watches, cars, glasses, and laptops, Gemini Intelligence is positioning Android as a deeply integrated, AI-first ecosystem rather than a collection of isolated smart features.