From Default Upgrade to Budget Shock

OpenAI has made GPT-5.5 Instant the new default model for ChatGPT and for its chat-latest API endpoint. The company positions it as a more accurate and concise successor to GPT-5.3 Instant for everyday tasks such as image analysis, STEM queries, and web-search decisions. A core promise is reliability. OpenAI reports that GPT-5.5 Instant produces 52.5% fewer hallucinated claims on high-stakes prompts in domains such as medicine, law, and finance. It also reports 37.3% fewer inaccuracies on difficult conversations that users had previously flagged. Production billing data, however, paints a more complicated picture for enterprise AI budgets. OpenRouter's April 2026 analysis of real workloads found that, once users actually switched models, GPT-5.5 cost 49% to 92% more than GPT-5.4, depending on prompt length. That gap between OpenAI's efficiency messaging and observed production costs is now the central question for teams comparing model pricing.

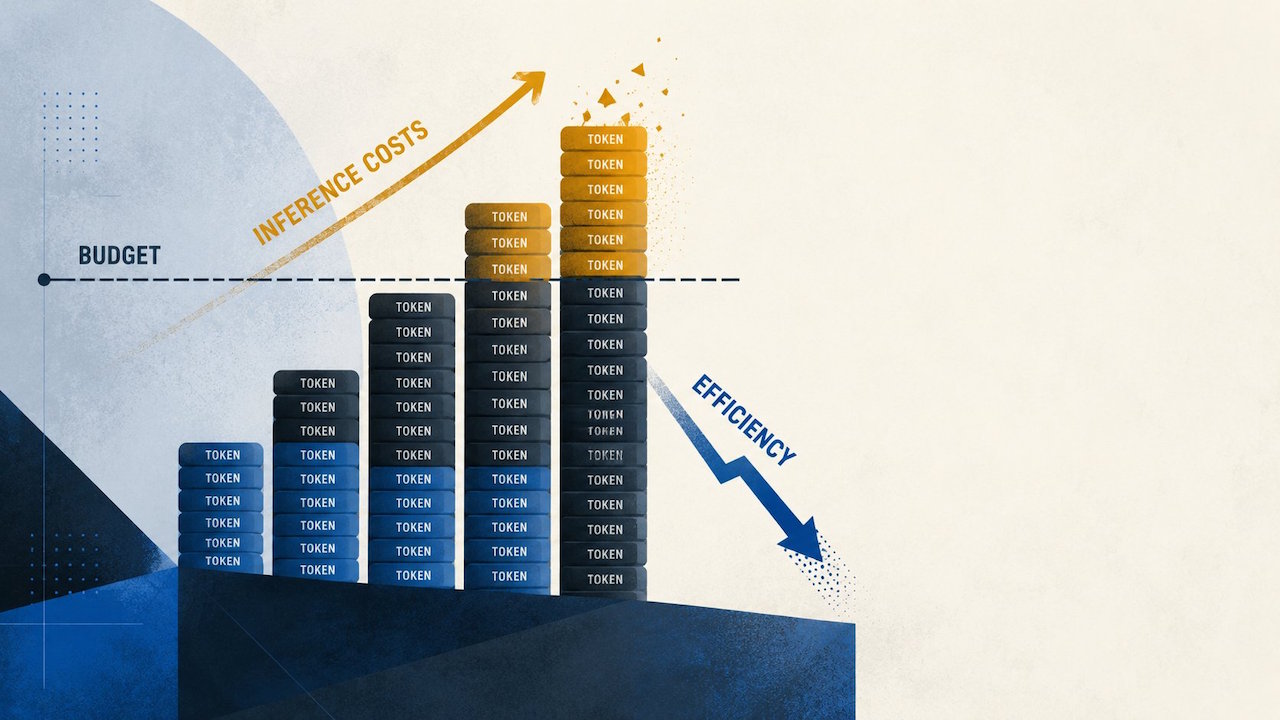

Why GPT-5.5 Instant Costs Climb in Real Workloads

OpenAI argues that GPT-5.5 is more token-efficient than GPT-5.4 and that shorter answers should offset its higher list price. OpenRouter's usage logs, however, show that real-world behavior depends heavily on prompt length and completion patterns. For prompts above 10,000 tokens, GPT-5.5 produced 19% to 34% fewer completion tokens, which points to potential savings on the largest-context tasks. The problem lies in the short and mid-length ranges, where most production assistants, copilots, and agents actually operate. Completions under 2,000 tokens grew slightly, while completions in the 2,000 to 10,000 token range grew by 52%. That output drift, combined with the higher list price, pushed the average cost per million tokens on OpenRouter from USD 4.89 (approx. RM23.00) to USD 9.37 (approx. RM44.00) for prompts under 2,000 tokens, a 92% increase. For prompts between 50,000 and 128,000 tokens, it rose from USD 0.74 (approx. RM3.50) to USD 1.10 (approx. RM5.20), a 49% increase.
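The band-level percentages follow directly from OpenRouter's reported averages. The Python sketch below reproduces that arithmetic and scales it to a monthly volume as a purely hypothetical illustration. The 100-million-token volume and the helper names are assumptions, not figures from the analysis.

```python
# Average USD cost per million tokens reported by OpenRouter, before (GPT-5.4)
# and after (GPT-5.5) switching, for two prompt-length bands.
BANDS: dict[str, tuple[float, float]] = {
    "under 2,000 tokens": (4.89, 9.37),
    "50,000 to 128,000 tokens": (0.74, 1.10),
}


def pct_increase(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


def monthly_cost(rate_per_million: float, million_tokens: float) -> float:
    """USD bill for a given volume (in millions of tokens) at a per-million-token rate."""
    return rate_per_million * million_tokens


# Hypothetical monthly volume, in millions of tokens, used only for illustration.
VOLUME_MILLIONS = 100

if __name__ == "__main__":
    for band, (old, new) in BANDS.items():
        print(
            f"Prompts {band}: +{pct_increase(old, new):.0f}% per million tokens, "
            f"${monthly_cost(old, VOLUME_MILLIONS):,.2f} -> "
            f"${monthly_cost(new, VOLUME_MILLIONS):,.2f} per {VOLUME_MILLIONS}M tokens"
        )
```

Running it prints a 92% increase for the short-prompt band and a 49% increase for the long-prompt band. These are the two ends of the range OpenRouter reported.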

Balancing Hallucination Reduction Against Higher Token Spend

For buyers, the central trade-off is clear: GPT-5.5 Instant significantly improves factual reliability but drives up effective token costs. OpenAI's internal evaluations report a 52.5% reduction in hallucinated claims on high-stakes prompts and a 37.3% drop in inaccurate answers on difficult, user-flagged conversations. These gains directly reduce risk in sensitive domains, where erroneous responses turn into compliance, safety, or reputational problems. Yet OpenRouter's metrics show that GPT-5.5 costs 49% to 92% more than GPT-5.4 across common prompt-length bands. Every extra follow-up turn, tool retry, or retrieval reformulation is therefore billed at the higher rate. Teams should treat hallucination reduction as one input to a broader cost-benefit analysis. The question is whether the lower error rate is worth paying up to nearly twice as much per million tokens for the workloads that drive most of their production spend.
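One way to frame that question is as a break-even calculation: how much must each avoided error be worth before the higher token rate pays for itself? The Python sketch below uses a workload profile that is entirely hypothetical, covering calls per task, tokens per call, and the baseline error rate. It also treats OpenAI's 52.5% figure as a per-task error reduction. This is a simplifying assumption, because OpenAI reports that figure for hallucinated claims on high-stakes prompts. The token rates are OpenRouter's averages for prompts under 2,000 tokens.

```python
from dataclasses import dataclass

# OpenRouter's average USD cost per million tokens for prompts under 2,000 tokens.
OLD_RATE = 4.89  # GPT-5.4
NEW_RATE = 9.37  # GPT-5.5

# Assumption: OpenAI's 52.5% hallucination figure applied as a per-task error reduction.
RELATIVE_REDUCTION = 0.525


@dataclass
class Workload:
    """Hypothetical workload profile; every field is an illustrative assumption."""
    calls_per_task: int         # first turn plus follow-ups, tool retries, reformulations
    tokens_per_call: int        # prompt + completion tokens billed per call
    baseline_error_rate: float  # share of tasks with a harmful error on the old model


def extra_spend_per_task(w: Workload, old_rate: float, new_rate: float) -> float:
    """Additional USD per task when the per-million-token rate rises."""
    million_tokens = w.calls_per_task * w.tokens_per_call / 1_000_000
    return million_tokens * (new_rate - old_rate)


def break_even_error_cost(w: Workload, old_rate: float, new_rate: float,
                          relative_reduction: float) -> float:
    """USD each avoided error must be worth for the upgrade to pay for itself."""
    errors_avoided_per_task = w.baseline_error_rate * relative_reduction
    return extra_spend_per_task(w, old_rate, new_rate) / errors_avoided_per_task


if __name__ == "__main__":
    profile = Workload(calls_per_task=4, tokens_per_call=1_500, baseline_error_rate=0.02)
    extra = extra_spend_per_task(profile, OLD_RATE, NEW_RATE)
    threshold = break_even_error_cost(profile, OLD_RATE, NEW_RATE, RELATIVE_REDUCTION)
    print(f"Extra spend per task: ${extra:.4f}")
    print(f"Break-even value per avoided error: ${threshold:.2f}")
```

The illustrative profile uses four calls of 1,500 tokens each and a 2% baseline error rate. With those numbers, the upgrade adds roughly 2.7 US cents per task, and an avoided error would need to be worth about USD 2.56 for the switch to break even. Extra spend scales linearly with `calls_per_task`, so workloads with many follow-ups or retries raise that bar proportionally.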

A Decision Framework for Enterprise AI Budgeting

A decision to adopt GPT-5.5 Instant should start with a traffic-aware pricing comparison, not with vendor benchmarks alone. First, segment workloads by context length and business criticality. High-stakes, long-context scenarios that depend on fewer but more accurate answers may justify the higher cost, especially where hallucinations carry significant financial or legal risk. High-volume, low-risk chat flows, by contrast, may be better left on earlier models to contain production costs. Next, analyze production traces (prompt sizes, completion lengths, retry behavior, and tool calls) and map them to the prompt-length bands where OpenRouter observed 49% to 92% increases. Finally, consider a routing strategy. Send premium tiers, long-context jobs, and flagged critical tasks to GPT-5.5 Instant, and default to GPT-5.4 or similar models elsewhere. This selective deployment lets enterprises capture GPT-5.5's reliability gains without losing control of their AI budget.
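The routing step can be sketched as a small policy function. In the Python example below, the model labels, the `Tier` values, the `critical` flag, and the 10,000-token cut-off are all illustrative assumptions. The cut-off echoes the prompt length above which OpenRouter saw GPT-5.5 emit fewer completion tokens. The labels are placeholders, not verified API model identifiers.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    STANDARD = "standard"
    PREMIUM = "premium"


# Placeholder model labels (hypothetical), not verified API identifiers.
PREMIUM_MODEL = "gpt-5.5-instant"
DEFAULT_MODEL = "gpt-5.4"

# Assumed cut-off for "long-context" traffic, in prompt tokens.
LONG_CONTEXT_THRESHOLD = 10_000


@dataclass
class Request:
    prompt_tokens: int
    tier: Tier = Tier.STANDARD
    critical: bool = False  # e.g. a flagged medical, legal, or financial task


def choose_model(req: Request) -> str:
    """Send premium, critical, or long-context traffic to the newer model."""
    if req.tier is Tier.PREMIUM or req.critical:
        return PREMIUM_MODEL
    if req.prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return PREMIUM_MODEL
    return DEFAULT_MODEL


def premium_share(requests: list[Request]) -> float:
    """Fraction of requests the policy would route to the premium model."""
    if not requests:
        return 0.0
    routed = sum(1 for r in requests if choose_model(r) == PREMIUM_MODEL)
    return routed / len(requests)


if __name__ == "__main__":
    # Hypothetical sample of logged requests.
    sample = [
        Request(prompt_tokens=800),
        Request(prompt_tokens=1_200, critical=True),
        Request(prompt_tokens=60_000),
        Request(prompt_tokens=3_000, tier=Tier.PREMIUM),
    ]
    for r in sample:
        print(r.prompt_tokens, choose_model(r))
    print(f"Premium share: {premium_share(sample):.0%}")
```

Keeping the policy in one pure function makes it easy to replay logged traces through it before changing anything in production. That replay estimates what share of traffic, and therefore of spend, would move to the pricier model.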