From Archival Thinking to Continuous AI Data Pipelines

Traditional enterprise storage architecture evolved around backup, archival, and periodic analytics. Data could be written once, stored cheaply, and accessed infrequently. AI factories break that model. Modern AI workload storage is defined by continuous, high-throughput access to massive datasets, feeding GPUs that must remain saturated to justify their power and space footprint. Instead of nightly jobs, organizations now run always-on data pipelines: ingesting raw data, transforming it, retraining models, and serving inference results in near real time. This shift forces IT teams to prioritize bandwidth, latency, and parallelism over pure capacity. AI storage systems are evaluated by how well they keep accelerators busy rather than how efficiently they store cold data. In this new landscape, storage becomes a performance-critical component of data center infrastructure, tightly coupled to compute, networking, and cooling decisions in ways legacy designs never anticipated.
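To make the "keep accelerators busy" criterion concrete, the following is a minimal sizing sketch, not a vendor formula. The function names, the per-GPU ingest rate, and the headroom factor are all illustrative assumptions; real values depend on the model, data format, and cluster.

```python
def required_read_bandwidth_gb_s(num_gpus: int,
                                 per_gpu_gb_s: float,
                                 headroom: float = 1.25) -> float:
    """Aggregate storage read bandwidth needed to feed every GPU.

    per_gpu_gb_s and headroom are assumed inputs, not measured values.
    """
    return num_gpus * per_gpu_gb_s * headroom


def io_bound_utilization(delivered_gb_s: float, required_gb_s: float) -> float:
    """Upper bound on GPU utilization when storage is the only bottleneck."""
    return min(1.0, delivered_gb_s / required_gb_s)


if __name__ == "__main__":
    # Hypothetical cluster: 64 GPUs, each consuming 2 GB/s of training data.
    needed = required_read_bandwidth_gb_s(num_gpus=64, per_gpu_gb_s=2.0)
    print(f"required: {needed} GB/s")
    print(f"utilization at 80 GB/s: {io_bound_utilization(80.0, needed):.0%}")
```

Under these assumed numbers, a system that delivers half the required bandwidth caps GPU utilization at roughly half, regardless of how much capacity it offers.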

How Enterprise AI Storage Systems Differ from Legacy Designs

Enterprise AI storage systems diverge sharply from conventional arrays in performance, latency, and scalability. Research from Network Storage Advisors shows vendors aligning their AI offerings along portfolio, storage, and workload strategies, with solutions categorized as configured, optimized, or specialized. These systems are engineered to match the parallel I/O patterns of GPU clusters and AI platforms such as NVIDIA DGX BasePOD and DGX SuperPOD, where predictable throughput and low jitter matter as much as raw IOPS. AI workload storage must scale linearly across performance, capacity, power, and space, dimensions that vendors often summarize in dashboards and reference architectures for decision-makers. Instead of a single monolithic stack, organizations increasingly deploy tiered designs that mix high-performance file and object platforms from vendors like NetApp, Dell, Everpure, IBM, Vast Data, and Weka. The result is a new class of data center infrastructure in which storage is co-designed with AI clusters, not bolted on afterward.

The Emergence of a New Middle Tier for AI Workloads

AI factories are driving a new “middle tier” of AI storage between ultra-fast but costly GPU-attached memory and traditional network-attached storage. On one side are technologies like high-bandwidth memory directly attached to GPUs; on the other, large but slower NAS or object stores. Flash-based AI storage systems now occupy the middle, delivering high throughput and low latency at far greater capacity than in-node memory. A key example is KV cache storage, which keeps the attention key and value tensors already computed for earlier tokens so that long contexts do not have to be reprocessed, improving AI factory efficiency by avoiding redundant GPU work. Because this data can be recomputed, the tier can prioritize speed and density over strict, legacy-style durability guarantees. This rebalancing changes how architects think about resilience, backup, and replication. Instead of treating all data the same, AI workload storage separates critical system-of-record data from recomputable working sets, enabling more aggressive performance tuning without compromising overall reliability.
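The trade-off described above, speed over durability for recomputable data, can be sketched as a two-level cache. This is an illustrative toy, not any vendor's design: the class name `TieredKVCache`, the entry-count capacities, and the `recompute` callback are assumptions, and plain dictionaries stand in for GPU memory and the flash tier.

```python
from collections import OrderedDict
from typing import Any, Callable, Hashable


class TieredKVCache:
    """Toy two-tier cache: a small hot tier backed by a larger flash tier.

    Entries evicted from the flash tier are simply dropped, because the
    data is assumed to be recomputable rather than a system of record.
    """

    def __init__(self, hot_capacity: int, flash_capacity: int,
                 recompute: Callable[[Hashable], Any]) -> None:
        self.hot: OrderedDict = OrderedDict()    # stand-in for GPU memory
        self.flash: OrderedDict = OrderedDict()  # stand-in for the flash tier
        self.hot_capacity = hot_capacity
        self.flash_capacity = flash_capacity
        self.recompute = recompute
        self.stats = {"hot": 0, "flash": 0, "recomputed": 0}

    def get(self, key: Hashable) -> Any:
        if key in self.hot:
            self.hot.move_to_end(key)            # mark as most recently used
            self.stats["hot"] += 1
            return self.hot[key]
        if key in self.flash:
            value = self.flash.pop(key)          # promote from flash to hot
            self.stats["flash"] += 1
        else:
            value = self.recompute(key)          # no durable copy needed
            self.stats["recomputed"] += 1
        self._put_hot(key, value)
        return value

    def _put_hot(self, key: Hashable, value: Any) -> None:
        self.hot[key] = value
        while len(self.hot) > self.hot_capacity:
            old_key, old_value = self.hot.popitem(last=False)  # evict LRU
            self._put_flash(old_key, old_value)

    def _put_flash(self, key: Hashable, value: Any) -> None:
        self.flash[key] = value
        while len(self.flash) > self.flash_capacity:
            self.flash.popitem(last=False)       # dropped, can be recomputed


if __name__ == "__main__":
    cache = TieredKVCache(hot_capacity=1, flash_capacity=1,
                          recompute=lambda k: f"kv:{k}")
    for session in ["a", "b", "a", "c", "b", "b"]:
        cache.get(session)
    print(cache.stats)
```

The point of the sketch is the eviction path: losing an entry costs only a recomputation, which is why this tier can be tuned for throughput and density rather than replication.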

Extreme Co-Design: Power, Cooling, and Storage Density

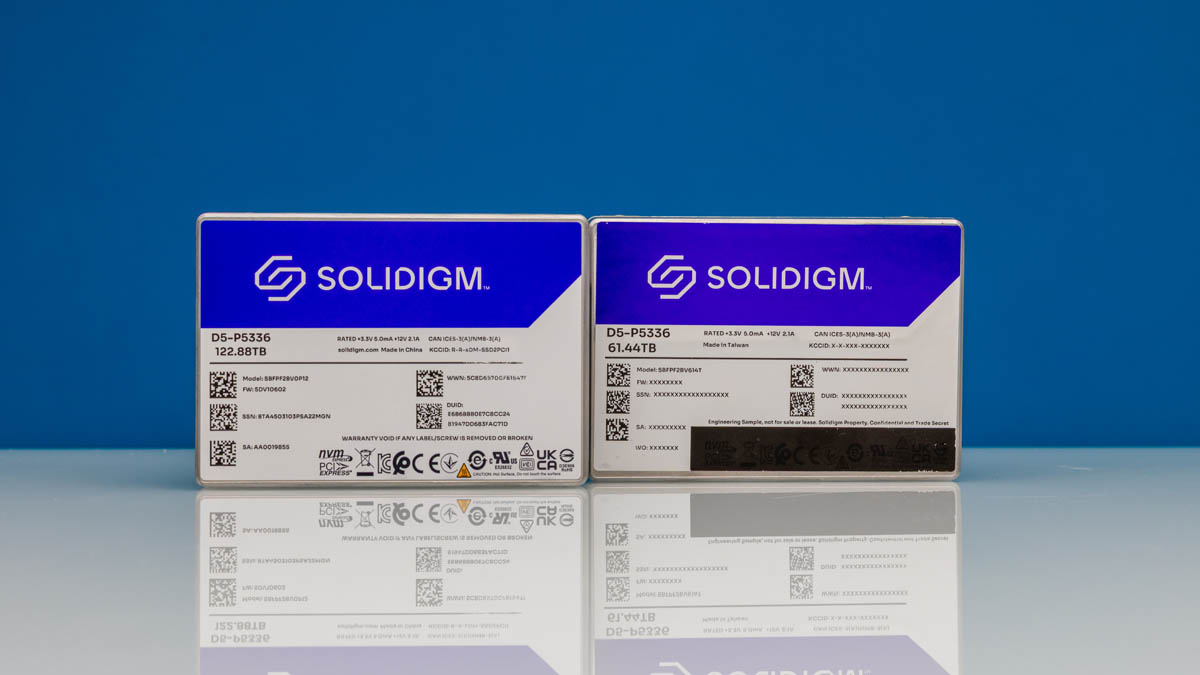

AI data centers are increasingly constrained by power budgets and physical space, pushing vendors toward extreme co-design of compute, networking, and storage. Companies like NVIDIA and Solidigm collaborate on everything from thermal management to electrical delivery, exemplified by concepts such as liquid-cooled SSDs for dense AI racks. The goal is simple: maximize the share of power and floor space devoted to productive GPUs while ensuring AI storage systems can feed them at scale. In this AI factory model, a single large facility could demand exabytes of flash to operate efficiently, turning storage into a primary driver of rack layout, cooling strategy, and power distribution. Technologies like BlueField DPUs and specialized AI storage systems integrate acceleration, networking, and I/O control to reduce CPU overhead and latency. For architects, these trends mean storage planning is no longer a separate track; it is integral to overall data center infrastructure design for AI.

Planning the Next-Generation Storage Architecture for AI Factories

Organizations entering the AI factory era must rethink storage planning across the full AI lifecycle: data ingestion, model training, fine-tuning, inference, and feedback loops. Strategic landscapes such as the 2026 Enterprise AI Storage Systems report help compare configured, optimized, and specialized solutions aligned to specific AI workloads and platforms. Practically, teams should map data flows into tiers: high-performance KV caches and flash middle tiers for hot working sets; scalable, DGX-certified file or object systems for training corpora; and cost-efficient repositories for long-term retention. Power and space budgets must be modeled alongside throughput requirements, not as separate constraints. Finally, governance, observability, and management tools become central: AI storage systems must be measurable, automatable, and adaptable as models and agentic AI workflows evolve. Those who treat storage as a strategic, co-designed pillar of AI workload infrastructure will be best positioned to scale safely and competitively.
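The tier mapping and joint budget check described above can be sketched as follows. This is a planning aid under stated assumptions, not a method taken from the report: the `DataFlow` and `TierPlan` types, the tier labels, the access-frequency thresholds, and every number are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DataFlow:
    name: str
    recomputable: bool     # True for working sets such as KV caches
    reads_per_day: float   # assumed access-frequency measure


def assign_tier(flow: DataFlow,
                hot_reads_per_day: float = 10_000.0,
                warm_reads_per_day: float = 1.0) -> str:
    """Map a data flow to one of the three tiers named in the text."""
    if flow.recomputable and flow.reads_per_day >= hot_reads_per_day:
        return "flash middle tier"
    if flow.reads_per_day >= warm_reads_per_day:
        return "file/object training tier"
    return "long-term retention"


@dataclass
class TierPlan:
    tier: str
    required_gb_s: float   # throughput the workload needs from this tier
    delivered_gb_s: float  # throughput the proposed hardware provides
    power_kw: float
    rack_units: int


def check_budgets(plans: List[TierPlan],
                  power_budget_kw: float,
                  rack_unit_budget: int) -> Dict[str, bool]:
    """Evaluate throughput, power, and space together, not separately."""
    return {
        "throughput_ok": all(p.delivered_gb_s >= p.required_gb_s for p in plans),
        "power_ok": sum(p.power_kw for p in plans) <= power_budget_kw,
        "space_ok": sum(p.rack_units for p in plans) <= rack_unit_budget,
    }


if __name__ == "__main__":
    flows = [
        DataFlow("inference KV cache", recomputable=True, reads_per_day=50_000),
        DataFlow("training corpus", recomputable=False, reads_per_day=200),
        DataFlow("compliance archive", recomputable=False, reads_per_day=0.01),
    ]
    for flow in flows:
        print(f"{flow.name}: {assign_tier(flow)}")

    plans = [
        TierPlan("flash middle tier", 100.0, 120.0, 8.0, 10),
        TierPlan("long-term retention", 5.0, 10.0, 3.0, 20),
    ]
    print(check_budgets(plans, power_budget_kw=12.0, rack_unit_budget=24))
```

In the hypothetical plan above, throughput and power fit but the design exceeds the rack-space budget, which is the kind of conflict that only surfaces when the three constraints are checked together.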