OpenRouter Data: GPT-5.4 vs GPT-5.5 in Real-World Billing

OpenRouter’s April 2026 usage-log analysis provides a rare, production-grade cost comparison between GPT-5.5 and GPT-5.4, and the gap is substantial. After organizations switched from GPT-5.4, GPT-5.5’s average cost per million tokens rose by between 49% and 92%, depending on the prompt-length band. That increase reflects real billing outcomes, not just higher list prices. Procurement teams can simulate spend from rate cards, but only live workloads reveal how prompt length, completion length, and multi-turn behavior translate into invoices. Many common enterprise workloads, such as assistants, copilots, and support bots, sit mostly in short and mid-range prompts rather than extreme long-context windows. In that zone, even modest changes in response length or retry frequency can snowball into higher monthly charges. Real-world AI model pricing is therefore a behavioral question as much as a line-item comparison, and GPT-5.5’s profile looks materially more expensive than GPT-5.4’s in typical production use.
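To see how response length and retry frequency compound, here is a minimal Python sketch that estimates monthly spend from list prices alone. It assumes a single rate card (GPT-5.4’s short-context list prices, quoted later in this article) and a hypothetical workload of one million requests per month; the `RateCard` and `Workload` names and all token counts are illustrative, not OpenRouter data.

```python
from dataclasses import dataclass


@dataclass
class RateCard:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


@dataclass
class Workload:
    requests_per_month: int
    input_tokens: int         # average prompt tokens per call (hypothetical)
    output_tokens: int        # average completion tokens per call (hypothetical)
    calls_per_request: float  # 1.0 = no retries; 1.2 = 20% extra calls from retries or follow-ups


def monthly_cost(w: Workload, rate: RateCard) -> float:
    """Estimated monthly spend in USD from list prices only (no caching or discounts)."""
    calls = w.requests_per_month * w.calls_per_request
    per_call = (w.input_tokens * rate.input_per_m
                + w.output_tokens * rate.output_per_m) / 1_000_000
    return calls * per_call


# GPT-5.4 short-context list prices as quoted in this article
gpt54 = RateCard(input_per_m=2.50, output_per_m=15.00)

# Hypothetical workload: same traffic, but answers 10% longer and 20% more calls
baseline = Workload(1_000_000, input_tokens=1_500, output_tokens=400, calls_per_request=1.0)
drifted = Workload(1_000_000, input_tokens=1_500, output_tokens=440, calls_per_request=1.2)

before = monthly_cost(baseline, gpt54)
after = monthly_cost(drifted, gpt54)
print(f"baseline ${before:,.0f}/month, drifted ${after:,.0f}/month "
      f"({(after / before - 1) * 100:+.1f}%)")
```

Under these assumptions, 10% longer answers plus 20% more calls raise the bill by about 27% (from roughly USD 9,750 to USD 12,420 per month) without any change to list prices.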

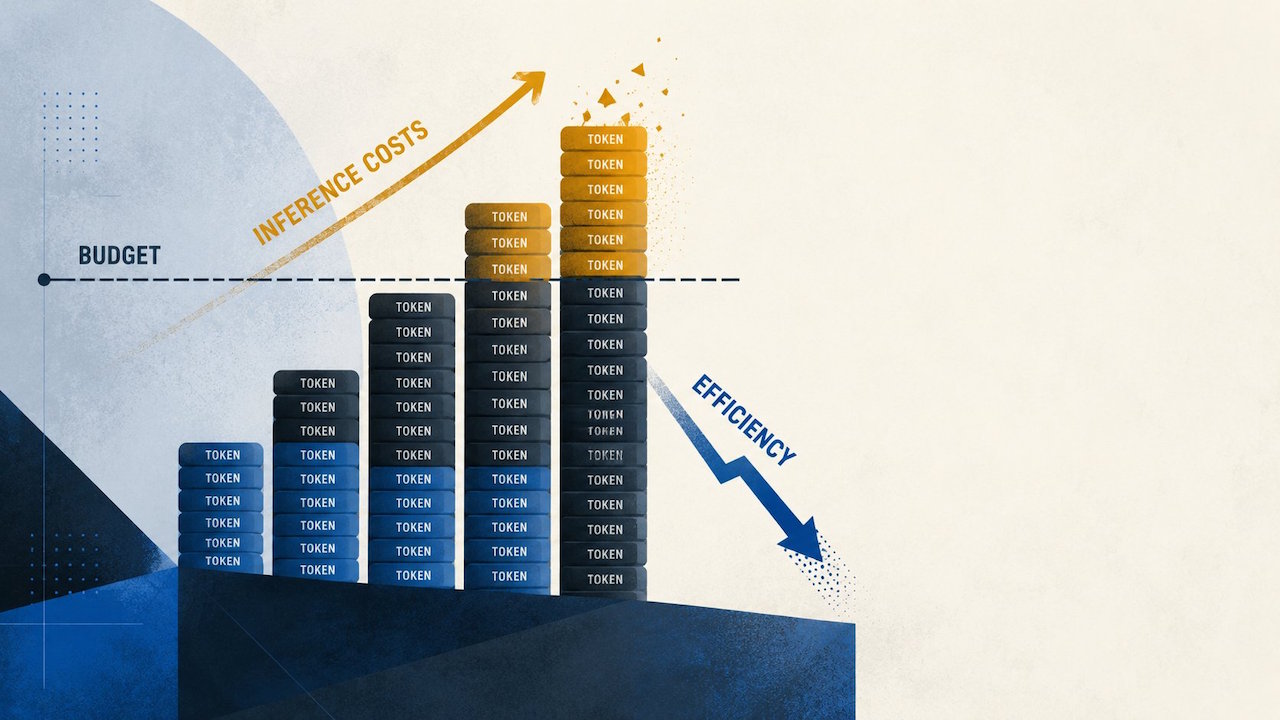

Why OpenAI’s Efficiency Narrative Doesn’t Match Production Reality

OpenAI positions GPT-5.5 as more token-efficient than GPT-5.4, with similar real-world latency and shorter responses expected to offset its higher prices. OpenRouter’s logs only partially confirm this: for prompts above 10,000 tokens, GPT-5.5 generates 19% to 34% fewer completion tokens. The picture changes, however, in the mid-range that dominates many enterprise workloads. For prompts of 2,000 to 10,000 tokens, median completion length jumps 52% with GPT-5.5, and even prompts under 2,000 tokens see a 7% increase. Combined with higher list prices, that behavior drives average cost per million tokens up sharply: from USD 4.89 (approx. RM22.5) to USD 9.37 (approx. RM43.1) for prompts under 2,000 tokens, and from USD 0.74 (approx. RM3.4) to USD 1.10 (approx. RM5.1) for prompts between 50,000 and 128,000 tokens. Those two bands mark the top (about 92%) and bottom (about 49%) of the overall increase, so even the long-context band, where completions got shorter, still costs roughly half as much again. In other words, efficiency gains at the longest contexts do not, by themselves, guarantee cheaper operation across a real workload mix.
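The two cost figures above can be checked with a few lines of Python. This minimal sketch only recomputes the percentage change from the reported averages; it does not model how OpenRouter derived them.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Average cost per million tokens in USD (GPT-5.4, GPT-5.5), as reported by OpenRouter
reported = {
    "under 2,000 tokens": (4.89, 9.37),
    "50,000-128,000 tokens": (0.74, 1.10),
}

for band, (gpt54, gpt55) in reported.items():
    print(f"{band}: {pct_change(gpt54, gpt55):+.1f}%")
# under 2,000 tokens: +91.6%
# 50,000-128,000 tokens: +48.6%
```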

The Hidden Budget Impact on Enterprise AI Expenses

The OpenRouter findings show how GPT-5.5’s completion behavior becomes a budget issue for shared AI platforms. Shorter answers on very long prompts do not offset the fact that most assistants and agents spend their time on shorter prompts with repeated calls: tool retries, retrieval reformulations, and follow-up questions. Every extra completion token is billed, so the length drift in the 2,000–10,000-token band, where median completions grew 52%, compounds enterprise AI expenses month after month. With average cost per million tokens up 49–92% depending on the band, finance teams that approved GPT-5.5 on the strength of list prices and vendor efficiency claims may face unpleasant surprises once traffic scales. Platform owners inherit those recurring costs, and routing teams must decide where GPT-5.5 belongs: reserved for long-context jobs, limited to premium user tiers, or enabled broadly. That deployment choice largely determines whether GPT-5.5 becomes a strategic upgrade or a structural cost overrun.
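The three deployment options above can be expressed as a small routing policy. This is a minimal Python sketch, not an OpenRouter or OpenAI feature: the `Policy` enum, the `choose_model` function, the 10,000-token cutoff (chosen because that is where OpenRouter observed GPT-5.5 producing shorter completions), and the `"gpt-5.4"`/`"gpt-5.5"` identifiers are all placeholders; real model names depend on the provider.

```python
from enum import Enum


class Policy(Enum):
    LONG_CONTEXT_ONLY = "long_context_only"  # GPT-5.5 only for long prompts
    PREMIUM_ONLY = "premium_only"            # GPT-5.5 only for premium user tiers
    BROAD = "broad"                          # GPT-5.5 for everything


# Hypothetical cutoff: above ~10,000 prompt tokens OpenRouter saw GPT-5.5 write shorter answers
LONG_CONTEXT_THRESHOLD = 10_000

# Placeholder identifiers; substitute your provider's actual model names
OLD_MODEL = "gpt-5.4"
NEW_MODEL = "gpt-5.5"


def choose_model(prompt_tokens: int, premium_user: bool, policy: Policy) -> str:
    """Return the model to call for one request under the given routing policy."""
    if policy is Policy.BROAD:
        return NEW_MODEL
    if policy is Policy.PREMIUM_ONLY:
        return NEW_MODEL if premium_user else OLD_MODEL
    # Policy.LONG_CONTEXT_ONLY
    return NEW_MODEL if prompt_tokens > LONG_CONTEXT_THRESHOLD else OLD_MODEL


print(choose_model(3_000, premium_user=False, policy=Policy.LONG_CONTEXT_ONLY))   # gpt-5.4
print(choose_model(60_000, premium_user=False, policy=Policy.LONG_CONTEXT_ONLY))  # gpt-5.5
```

Keeping the policy as a single switch makes it straightforward to replay the same traffic under each option and compare the resulting spend.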

Recalculating ROI: When Does Upgrading to GPT-5.5 Make Sense?

For decision-makers, the GPT-5.4 vs GPT-5.5 question is no longer theoretical. GPT-5.4’s short-context rates are USD 2.50 (approx. RM11.5) per million input tokens and USD 15 (approx. RM69.0) per million output tokens. GPT-5.5 lists at USD 5 (approx. RM23.0) per million input tokens and USD 30 (approx. RM138.0) per million output tokens, exactly double on both counts, and GPT-5.5 Pro is priced far higher. Earlier estimates suggested a net API cost increase of only around 19%; production data instead shows effective increases of 49–92% once real behavior is included. That makes targeted operational testing essential. Teams should replay representative production traces through both models, segment the results by prompt length, and compare total token usage along with error and retry rates. For some long-context, high-value tasks, GPT-5.5’s quality or shorter outputs may justify the premium. In many mid-range, high-volume workloads, however, staying on GPT-5.4, or mixing models by use case, may deliver better ROI.
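The replay-and-segment test described above might look like the following minimal Python sketch. It assumes you have already replayed the same production requests through both models and logged, per request, the original prompt length, the total billed input and output tokens, and the number of calls needed (retries included). The `ReplayResult` record, the band edges (mirroring the prompt bands in OpenRouter’s breakdown), and the list-price-only cost formula are assumptions; real invoices may differ, for example through cached-input discounts that this sketch ignores.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class RateCard:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens


@dataclass
class ReplayResult:
    prompt_tokens: int   # prompt length of the original request, used for banding
    input_tokens: int    # total input tokens billed across all calls, retries included
    output_tokens: int   # total completion tokens billed across all calls
    calls: int           # API calls needed to finish the request (1 = no retries)


# List prices quoted in this article (USD per million tokens)
GPT_54 = RateCard(input_per_m=2.50, output_per_m=15.00)
GPT_55 = RateCard(input_per_m=5.00, output_per_m=30.00)


def band_of(prompt_tokens: int) -> str:
    """Bucket a request by prompt length, mirroring the article's bands."""
    if prompt_tokens < 2_000:
        return "<2k"
    if prompt_tokens < 10_000:
        return "2k-10k"
    if prompt_tokens < 50_000:
        return "10k-50k"
    return "50k+"


def summarize(results: list[ReplayResult], rate: RateCard) -> dict:
    """Total cost, tokens, calls, and request count per prompt band for one model."""
    summary = defaultdict(lambda: {"cost": 0.0, "tokens": 0, "calls": 0, "requests": 0})
    for r in results:
        s = summary[band_of(r.prompt_tokens)]
        s["cost"] += (r.input_tokens * rate.input_per_m
                      + r.output_tokens * rate.output_per_m) / 1_000_000
        s["tokens"] += r.input_tokens + r.output_tokens
        s["calls"] += r.calls
        s["requests"] += 1
    return dict(summary)


def compare(old: dict, new: dict) -> dict:
    """Per-band cost change, cost per million tokens, and retry rate for each model."""
    report = {}
    for band in old.keys() & new.keys():
        o, n = old[band], new[band]
        report[band] = {
            "cost_change_pct": (n["cost"] - o["cost"]) / o["cost"] * 100,
            "old_cost_per_m": o["cost"] / o["tokens"] * 1_000_000,
            "new_cost_per_m": n["cost"] / n["tokens"] * 1_000_000,
            "old_retry_rate": o["calls"] / o["requests"] - 1,  # extra calls per request
            "new_retry_rate": n["calls"] / n["requests"] - 1,
        }
    return report
```

Call `summarize()` once per model on the replayed traces, for example `compare(summarize(gpt54_runs, GPT_54), summarize(gpt55_runs, GPT_55))` where `gpt54_runs` and `gpt55_runs` are your own (hypothetical) lists of `ReplayResult`, and review the bands that carry most of your traffic first.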

Model Selection Strategy: Beyond List Prices and Benchmarks

The GPT-5.5 cost comparison also sits within a broader competitive context. OpenRouter notes that a separate analysis put GPT-5.5’s base output pricing above Anthropic’s Claude Opus 4.7, with roughly similar input pricing, while Anthropic warns that its tokenizer may use more tokens for the same text, depending on content. This reinforces a central lesson: real-world AI model pricing is shaped by tokenization, response length, and workload shape, not just posted rates or benchmark scores. Enterprise buyers should treat GPT-5.5, GPT-5.4, and rival models as components of a routing strategy rather than one-size-fits-all defaults. Long-context, mission-critical flows might justify GPT-5.5; routine mid-range tasks could remain on GPT-5.4 or alternatives. Above all, organizations should institutionalize continuous cost observability, monitoring token usage, completion length, and per-feature spend, so that each new model release becomes a data-driven decision rather than a marketing-led upgrade.
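Continuous cost observability can start as a simple aggregation over usage logs. The minimal Python sketch below assumes each logged call carries a feature tag, the model used, token counts, and the billed cost; the `UsageRecord` fields and the `per_feature_report` and `completion_drift` helpers are hypothetical, not part of any provider’s SDK.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class UsageRecord:
    feature: str         # product feature that issued the call (hypothetical tag)
    model: str           # model identifier as logged
    input_tokens: int
    output_tokens: int
    cost_usd: float      # billed cost for this call


def per_feature_report(records: list[UsageRecord]) -> dict:
    """Calls, spend, tokens, and median completion length per (feature, model)."""
    groups = defaultdict(list)
    for r in records:
        groups[(r.feature, r.model)].append(r)
    return {
        key: {
            "calls": len(rs),
            "spend_usd": sum(r.cost_usd for r in rs),
            "tokens": sum(r.input_tokens + r.output_tokens for r in rs),
            "median_completion_tokens": median([r.output_tokens for r in rs]),
        }
        for key, rs in groups.items()
    }


def completion_drift(report: dict, feature: str, old_model: str, new_model: str) -> float:
    """Percentage change in median completion length after switching models."""
    old = report[(feature, old_model)]["median_completion_tokens"]
    new = report[(feature, new_model)]["median_completion_tokens"]
    return (new - old) / old * 100
```

Running `completion_drift` for each feature after a model switch surfaces the kind of length growth OpenRouter observed in the 2,000–10,000-token band before it accumulates into a larger invoice.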