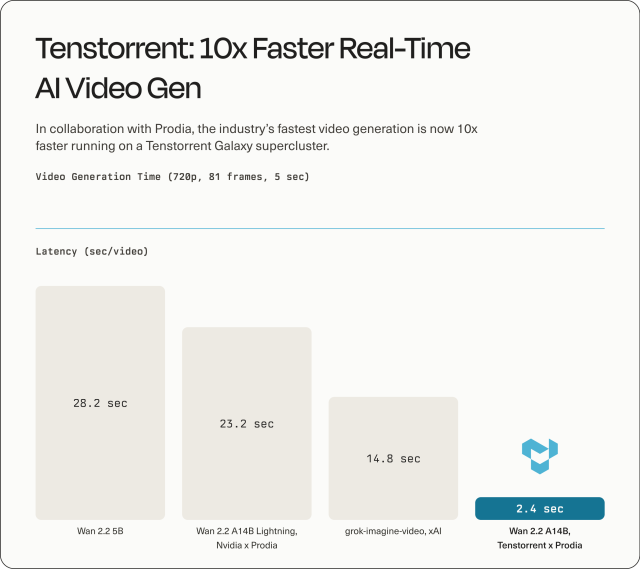

What Tenstorrent Just Demonstrated in AI Video Hardware

Tenstorrent has previewed a new large compute cluster designed to accelerate AI video generation, claiming roughly 10× speed gains over leading alternatives for the Wan2.2 video model. In a demo built with cloud AI company Prodia, an optimized Wan2.2-14B model produced a five‑second, 720p clip (81 frames, 40 steps) from a text prompt in about three seconds, with a record run of 2.4 seconds. The setup uses four Galaxy servers based on Tenstorrent’s Blackhole-generation chips, 128 chips in total, running a single software program across the entire cluster. The architecture unifies compute, memory, and networking without relying on proprietary interconnects or workload‑specific designs, which Tenstorrent argues makes it flexible enough for future video models as well as today’s diffusion-based systems. For creators and studios, the headline is simple: production-grade generative video, at respectable quality, can now be rendered faster than the footage’s own runtime.

What “Faster Than Real Time” Means for Day-to-Day Workflows

In practical terms, “faster than real time” means the tool produces a clip in less wall-clock time than the clip takes to play back. Tenstorrent and Prodia’s Wan2.2-14B demo creates a five‑second clip in as little as 2.4 seconds, roughly twice real-time speed, or about 1.7× at the more typical three seconds. For a director, game art lead, or editor, that translates into near‑instant feedback loops: tweak a prompt or style and see a new version before you’ve finished explaining the next idea. Instead of waiting many minutes for a single clip, you could cycle through multiple variations during a single meeting, approving, rejecting, or combining takes on the fly. This is particularly important for workflows built around real-time AI video, like live previz on virtual production stages or interactive content tools, where latency directly dictates how often you can iterate and how experimental your team can afford to be.
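The arithmetic behind the "faster than real time" claim can be checked with a short Python sketch. It uses only the figures reported for the demo (a five-second clip, 81 frames, 40 steps, and render times of about 3 s and 2.4 s). The function names are illustrative, and the per-step figure assumes, purely for the sake of a bound, that the whole render time is spent in the 40 denoising steps, which the demo does not state.

```python
# Figures reported for the Wan2.2-14B demo.
CLIP_SECONDS = 5.0
FRAMES = 81
STEPS = 40
TYPICAL_RENDER_S = 3.0
RECORD_RENDER_S = 2.4


def real_time_factor(clip_seconds: float, render_seconds: float) -> float:
    """Seconds of footage produced per second of wall-clock time."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return clip_seconds / render_seconds


def frames_per_wall_second(frames: int, render_seconds: float) -> float:
    """Output frames produced per second of wall-clock time."""
    if render_seconds <= 0:
        raise ValueError("render_seconds must be positive")
    return frames / render_seconds


def max_seconds_per_step(render_seconds: float, steps: int) -> float:
    """Upper bound on average time per step.

    Assumption (not from the demo): all render time is attributed to the
    denoising steps, ignoring text encoding, decoding, and other overhead.
    """
    if steps <= 0:
        raise ValueError("steps must be positive")
    return render_seconds / steps


if __name__ == "__main__":
    for label, render_s in (("typical", TYPICAL_RENDER_S), ("record", RECORD_RENDER_S)):
        rtf = real_time_factor(CLIP_SECONDS, render_s)
        fps = frames_per_wall_second(FRAMES, render_s)
        step = max_seconds_per_step(render_s, STEPS)
        print(f"{label}: {rtf:.2f}x real time, {fps:.1f} frames/s, <= {step * 1000:.0f} ms/step")
```

A real-time factor above 1.0 is what "faster than real time" means here: the record run works out to about 2.1× and the typical run to about 1.7×.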

Previz, Backgrounds, and Rapid Iteration for Creators and Game Studios

Tenstorrent’s leap in AI video rendering speed directly targets friction points in modern content pipelines. Previsualization and blocking, which traditionally relied on rough animatics or gray-boxed 3D scenes, can be replaced with quick, generated sequences: camera moves, lighting moods, and action beats drafted in seconds. For game studios already leaning on AI for ideation and asset generation, faster-than-real-time Wan2.2 video model outputs mean you can mock up environment fly-throughs or NPC vignettes as fast as designers can type prompts. Google’s games leadership has suggested that most major studios already use AI behind the scenes for tasks like filling large worlds with content; accelerating video generation extends that logic to motion, ambience, and narrative beats. Background plates for cinematics, atmospheric loops for loading screens, or pitch trailers can all be iterated rapidly, allowing teams to test more ideas without committing full animation or VFX resources up front.

Who Gets This Power First: Cloud, Studios, and Access Trade-offs

Running an optimized Wan2.2-14B model across 128 Blackhole chips is not something most creators will do on a desktop. In the near term, this class of AI video hardware is likely to live in cloud clusters and large studio machine rooms, fronted by familiar tools. Tenstorrent’s fully open-source software stack and focus on general-purpose AI computing make it attractive to frontier AI labs and video-focused enterprises that can integrate the hardware into their own pipelines. Individual editors or small teams will probably access this speed through hosted services and plugins (cloud render buttons inside game engines, NLEs, and DCC tools) rather than owning the hardware. Tenstorrent’s emphasis on non-specialized architecture is aimed at cushioning customers from model churn, but it does raise questions about energy usage and utilization: packing many chips into a cluster delivers speed, yet teams will need to balance throughput against power budgets and real-world demand.

Quality, Energy, and the Road to Consumer-Level AI Video Tools

Tenstorrent’s executives stress that their platform is designed for both today’s diffusion-based systems and emerging autoregressive or predictive video models, which may be less sequential and easier to scale. That matters for quality: higher resolutions and longer clips will demand more compute, even as research tries to cut the number of denoising steps. For now, the five‑second, 720p Wan2.2 demo illustrates a trade-off space—production-grade quality at high speed, but on large clusters. Energy use and heat will be central constraints, especially as customers push to bigger formats. On the software side, nearly every major creative stack is already weaving in AI, often quietly: game studios use AI for content generation, and engines and tools are integrating models under the hood. As clusters like Tenstorrent’s become more common in cloud backends, consumers are likely to see the benefits as “instant” video features in familiar apps, long before they touch this hardware directly.