The Productivity Hype Problem in AI-Assisted Development

Many engineering leaders hear that AI coding tools deliver faster cycles and assume those gains are both real and durable. GitHub reports 55% faster task completion with Copilot in controlled trials, while Gartner projects 25–30% productivity gains by 2028 for teams that apply AI across the full software development lifecycle, up from the 10% attributed to code-generation tools in 2024. A separate Gartner survey shows that only 34% of teams using generative AI report high productivity gains in practice. These figures are often quoted as if they describe the same outcome, yet they measure different scopes, cohorts, and time horizons: a controlled task trial, a multi-year forecast, and a survey of reported results. Without consistent metrics for AI coding tools that are tied to the actual codebase, organisations risk crediting AI for improvements driven by other factors, or missing instability that offsets apparent throughput gains. The challenge is moving from headline claims to verifiable gains in software development productivity.

What the DORA Framework Adds to the AI ROI Conversation

Google Cloud’s DORA team reframes AI as an amplifier, not a magic accelerator. Their ROI of AI-Assisted Software Development report connects engineering metrics to business outcomes through a structured value model. Value flows from foundational capabilities (such as a quality internal platform, strong version control, and AI-accessible internal data) into improved DORA delivery metrics, then into non-financial outcomes like developer and user experience, and finally into financial results such as cost savings and revenue growth. Crucially, the report emphasises that engineering ROI measurement should focus not on how much code AI writes, but on which bottlenecks it clears. This approach helps teams compare AI-era metrics against a pre-AI baseline instead of relying on anecdotal wins. By pairing DORA delivery metrics with financial reasoning, leaders gain a more defensible view of software development productivity and avoid over-indexing on isolated speed statistics.

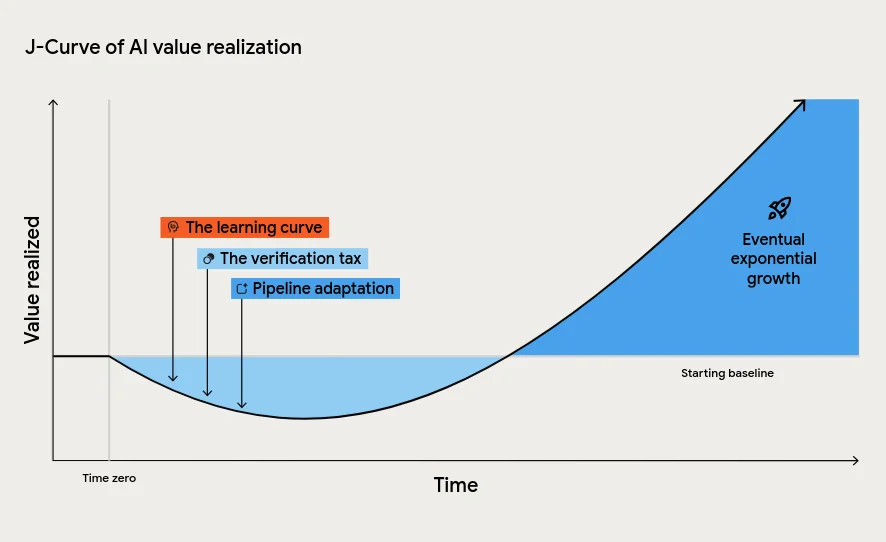

The J-Curve and the Cost of Misreading Early AI Results

DORA’s report introduces the J-Curve of value realisation, a pattern where productivity dips before rising as AI adoption matures. Early on, teams face a learning curve, a verification tax for reviewing AI-generated code, and pressure on downstream processes such as testing and change approval as code volume increases. These factors temporarily drag down software development productivity, even when long-term benefits are attainable. The report calls this phase “the tuition cost of transformation” and warns that leaders who misinterpret the dip as failure may cut funding precisely when persistence is required. At the same time, research shows AI adoption can raise throughput while lowering delivery stability, with more code moving faster than pipelines and manual gates can safely handle. Effective metrics for AI coding tools must therefore track both throughput and stability, capturing the full shape of the J-Curve rather than only short-term cycle-time improvements.
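One way to make the throughput-versus-stability point concrete is to compare each period against a pre-AI baseline on both axes at once. The following is a minimal sketch, not something taken from the DORA report: the `Period` record, the use of deployment count as a throughput proxy, and the labels returned by `classify` are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """Hypothetical summary of one reporting period for a team."""
    label: str
    deployments: int         # throughput proxy (assumption)
    failed_deployments: int  # deployments that caused a production failure


def change_failure_rate(p: Period) -> float:
    """Share of deployments in the period that failed."""
    return p.failed_deployments / p.deployments if p.deployments else 0.0


def classify(baseline: Period, current: Period) -> str:
    """Label the current period on throughput and stability together."""
    faster = current.deployments > baseline.deployments
    less_stable = change_failure_rate(current) > change_failure_rate(baseline)
    if faster and less_stable:
        return "throughput up, stability down"
    if faster:
        return "throughput up, stability held"
    if less_stable:
        return "throughput flat or down, stability down"
    return "throughput flat or down, stability held"


# Made-up numbers: more deployments, but a higher share of them fail.
pre_ai = Period("pre-AI", deployments=40, failed_deployments=4)
month_3 = Period("month 3", deployments=60, failed_deployments=9)
print(classify(pre_ai, month_3))  # throughput up, stability down
```

A dashboard that reported only the deployment count here would show a 50% improvement and hide the rise in change failure rate from 10% to 15%.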

Why Strong Engineering Foundations Matter Before AI

DORA’s findings emphasise that strong engineering foundations are prerequisites for reliable AI gains. AI magnifies the strengths of high-performing organisations and the dysfunctions of struggling ones. Without automated testing, continuous integration, and disciplined version control, teams experience what the report calls an instability tax: change failure rates and downtime can increase as AI accelerates code delivery. The report's sample model even quantifies a negative downtime impact when change failure rate rises after AI adoption. Meanwhile, other research highlights how AI can be credited with productivity gains or workforce changes that cannot be traced to specific workflow shifts or measurable outcomes. To achieve meaningful engineering ROI measurement, teams must first stabilise their delivery processes, clarify workflows, and invest in internal platforms and data accessibility. Only then can metrics for AI coding tools reveal whether AI is genuinely improving throughput, quality, and reliability rather than amplifying chaos.
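To illustrate how a rising change failure rate can turn into a cost, here is a rough back-of-the-envelope sketch. It is not DORA's sample model: the formula (deployments × failure rate × hours to restore × cost per hour) and every input value are assumptions picked only to show the arithmetic.

```python
def annual_downtime_cost(
    deploys_per_year: int,
    change_failure_rate: float,
    hours_to_restore: float,
    cost_per_hour: float,
) -> float:
    """Illustrative downtime cost: failed deployments x restore time x hourly cost."""
    failed_deploys = deploys_per_year * change_failure_rate
    return failed_deploys * hours_to_restore * cost_per_hour


# Hypothetical inputs: AI adoption raises deployment volume and failure rate,
# while restore time and hourly cost of downtime stay the same.
before = annual_downtime_cost(200, 0.10, 2.0, 5_000.0)
after = annual_downtime_cost(300, 0.15, 2.0, 5_000.0)

instability_tax = after - before
print(f"before: {before:,.0f}  after: {after:,.0f}  tax: {instability_tax:,.0f}")
```

With these made-up inputs, downtime cost more than doubles even though deployment volume rose by only half, which is the kind of offset a throughput-only view would miss.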

From Headlines to Hard Metrics: Building a Real AI ROI Scorecard

To move beyond hype, teams need a concrete AI ROI scorecard anchored in both DORA delivery metrics and code-level evidence. One emerging approach is commit-level analysis, such as Engineering Throughput Value (ETV), which scores each team’s commits against its own pre-AI baseline. Combined with DORA’s value model, this enables a like-for-like comparison of throughput, stability, and downstream effects over time. Practically, teams should track deployment frequency, lead time, change failure rate, and mean time to restore, alongside developer experience and verification overhead. They should also distinguish between local productivity spikes and end-to-end value by measuring how AI-driven changes affect testing queues, approvals, and production incidents. By grounding metrics for AI coding tools in consistent measurement and strong engineering practices, organisations can answer the core question with evidence: is AI creating sustainable software development productivity, or just generating flattering but misleading numbers?
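The four delivery metrics named above can be computed from deployment records and compared against a pre-AI window. The sketch below is one possible shape for such a scorecard, under stated assumptions: the `Deployment` record, its field names, and the metric keys are hypothetical, and it does not implement ETV or any vendor's scoring method.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean, median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record; field names are assumptions."""
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], window_days: int) -> dict[str, float]:
    """Compute the four delivery metrics for one observation window."""
    lead_times = [_hours(d.deployed_at - d.committed_at) for d in deploys]
    failures = [d for d in deploys if d.failed]
    restores = [
        _hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_week": len(deploys) / (window_days / 7),
        "median_lead_time_h": median(lead_times) if lead_times else 0.0,
        "change_failure_rate": len(failures) / len(deploys) if deploys else 0.0,
        "mean_time_to_restore_h": mean(restores) if restores else 0.0,
    }


def scorecard(baseline: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Difference of each metric from the pre-AI baseline (current - baseline)."""
    return {name: current[name] - baseline[name] for name in baseline}


# Made-up data for two 14-day windows.
t0 = datetime(2024, 1, 1)
baseline_deploys = [
    Deployment(t0, t0 + timedelta(hours=24)),
    Deployment(t0, t0 + timedelta(hours=48), failed=True,
               restored_at=t0 + timedelta(hours=50)),
]
current_deploys = [
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=12), failed=True,
               restored_at=t0 + timedelta(hours=16)),
    Deployment(t0, t0 + timedelta(hours=12), failed=True,
               restored_at=t0 + timedelta(hours=16)),
]

deltas = scorecard(dora_metrics(baseline_deploys, 14), dora_metrics(current_deploys, 14))
for name, delta in deltas.items():
    print(f"{name}: {delta:+.2f}")
```

In this toy data, deployment frequency rises and lead time falls, yet mean time to restore gets worse. Reporting the deltas side by side is what keeps a local speed gain from being read as end-to-end value. Developer experience and verification overhead would need separate inputs, such as survey results or review-time data, that this sketch does not cover.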