OpenRouter Data Exposes a Hidden GPT-5.5 Cost Gap

OpenRouter’s April 2026 usage-log analysis offers a rare, production-grade view of GPT-5.5 cost behavior. After customers migrated from GPT-5.4, effective spending on GPT-5.5 rose by 49 to 92 percent. The jump did not come from a list-price increase alone; it emerged from how real workloads interact with the model over thousands of requests. The logs show that GPT-5.5 tends to generate shorter outputs only for very large prompts, above 10,000 tokens. For the short and mid-range prompts that dominate enterprise traffic, completions often became longer, driving up per-request and monthly LLM production expenses. For procurement and engineering teams, this means lab benchmarks and vendor slides are not enough. To understand true AI deployment pricing, organizations must examine production traces, aggregate token usage, and real billing outcomes once applications are live.

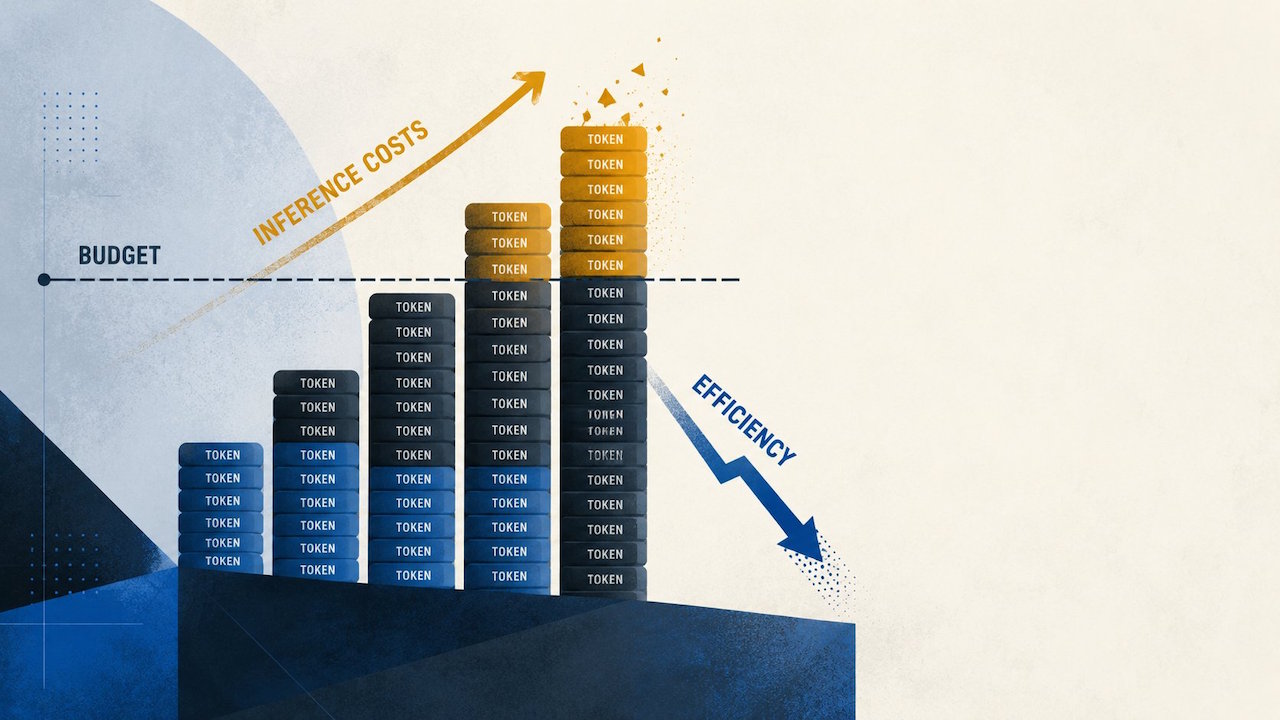

Why OpenAI Efficiency Claims Diverge from Real-World Bills

Model vendors emphasize efficiency in best-case scenarios: long prompts, carefully tuned tasks, and benchmark-style interactions. However, most enterprise applications spend far more time in everyday patterns like short chats, mid-length analyses, or multi-turn agent workflows. OpenRouter’s findings suggest that in these realistic regimes, GPT-5.5’s behavior can increase token generation enough to outweigh any per-token efficiency gains. This gap matters because buyers often extrapolate from headline claims to their own environments, assuming lower latency or better compression will translate directly into lower spend. Instead, real usage reveals a different story: a model that thinks longer, adds more detail, or encourages iterative prompting can quietly inflate costs, even when the nominal price sheet seems manageable. The result is a growing disconnect between OpenAI efficiency claims and what engineering leaders see when monthly invoices arrive for their production LLM workloads.

Per-Token vs Per-Request Thinking: The New Budgeting Mindset

The OpenRouter analysis underscores a critical budgeting lesson: list prices and per-token rates are only the starting point. Real costs emerge from the interaction between prompt patterns, completion behavior, and session length over time. A model that appears affordable per token can still produce much higher per-request and per-user costs if it habitually returns longer answers or encourages additional turns. Teams budgeting for AI deployments need to move beyond static spreadsheets and test models with realistic workloads before fully committing. That means replaying expected traffic, logging total tokens per conversation, and modeling how changes in completion length translate into monthly LLM production expenses. This approach helps identify where GPT-5.5’s 49–92 percent cost uplift might appear in your own stack, and whether its quality gains justify that premium. Without this discipline, organizations risk underestimating their LLM infrastructure budget and facing unpleasant surprises later.
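
To make the per-token versus per-request distinction concrete, here is a minimal Python sketch of the arithmetic. Everything in it is an assumption for illustration: the `ModelProfile` type, the function names, and especially the prices and token counts, which are placeholders rather than figures from OpenRouter or any vendor price sheet. Substitute averages measured from your own replayed traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical pricing plus token behavior observed in your own traces."""
    name: str
    input_price_per_mtok: float    # USD per 1M prompt tokens (placeholder)
    output_price_per_mtok: float   # USD per 1M completion tokens (placeholder)
    avg_prompt_tokens: float       # mean prompt size from replayed traffic
    avg_completion_tokens: float   # mean completion size from replayed traffic


def cost_per_request(m: ModelProfile) -> float:
    """Average USD cost of one request: prompt cost plus completion cost."""
    return (
        m.avg_prompt_tokens * m.input_price_per_mtok
        + m.avg_completion_tokens * m.output_price_per_mtok
    ) / 1_000_000


def monthly_cost(m: ModelProfile, requests_per_month: int) -> float:
    """Projected monthly spend at a fixed request volume."""
    return cost_per_request(m) * requests_per_month


def uplift_percent(old: ModelProfile, new: ModelProfile) -> float:
    """Percentage change in per-request cost when moving from old to new."""
    return (cost_per_request(new) / cost_per_request(old) - 1.0) * 100.0


# Illustrative only: identical per-token prices, but the second profile
# returns completions that are 50 percent longer on the same prompts.
baseline = ModelProfile("model-a", 1.0, 10.0, 1000, 400)
candidate = ModelProfile("model-b", 1.0, 10.0, 1000, 600)

print(f"per-request uplift: {uplift_percent(baseline, candidate):.1f}%")
print(f"monthly at 1M requests: ${monthly_cost(candidate, 1_000_000):,.2f}")
```

With these placeholder inputs the per-token price never changes, yet the per-request cost rises by about 40 percent purely because completions grew longer. That is the kind of effect a static price-sheet comparison will not show.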

Rising Cloud LLM Bills and the Turn Toward Local Models

As cloud-hosted AI services grow more capable, they are also becoming harder for providers to subsidize. Industry commentary has highlighted how vendors offered powerful models at loss-leading rates, only to clamp down later with metered billing, session limits, or feature removals when compute demand surged. This pressure is driving organizations to reassess how much of their AI stack truly needs to run in the cloud. Recent experiments discussed by practitioners show that locally hosted LLMs, especially as coding assistants, are now good enough to offload a meaningful share of everyday workloads. By running models on in-house hardware, teams can ease compute strain on external services and gain more predictable cost control, even if some high-end capabilities still require cloud APIs. As GPT-5.5 cost comparisons continue to favor alternatives in many scenarios, local LLMs are emerging as a practical counterweight to escalating production expenses.

How to Evaluate GPT-5.5 Against Alternatives for Your Stack

Choosing between GPT-5.5, prior OpenAI models, and rivals like Claude Opus 4.7 now requires a production-first mindset. Rather than relying solely on benchmarks or marketing, teams should design bake-offs grounded in their actual workloads: representative prompts, realistic conversation depth, and real-time constraints. Key metrics include tokens per request, error rates, user satisfaction, and the total cost of serving a fixed volume of business tasks. For many organizations, the right answer will be a hybrid approach. High-value, complex reasoning may justify GPT-5.5’s higher effective price, while routine coding assistance, document drafting, or internal tools can shift to cheaper cloud models or local LLMs. By instrumenting every layer, from prompt orchestration to billing dashboards, engineering and finance leaders can clearly see where GPT-5.5’s premium delivers genuine ROI, and where more economical options can safely absorb the bulk of their LLM production expenses.
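
As a rough illustration of the bake-off metrics above, the following Python sketch aggregates logged requests into per-model tokens per request, error rate, and total cost. The `TraceRecord` fields and the `summarize` helper are hypothetical names, not part of any vendor SDK, and the sketch assumes your logging layer already captures token counts and billed cost for each request. User satisfaction is omitted because it usually comes from a separate feedback channel.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TraceRecord:
    """One logged request from a bake-off run (hypothetical schema)."""
    model: str
    prompt_tokens: int
    completion_tokens: int
    cost_usd: float   # billed cost for this request, taken from your logs
    failed: bool      # True if the request errored or failed validation


def summarize(records: Iterable[TraceRecord]) -> dict[str, dict[str, float]]:
    """Aggregate per-model metrics over the same fixed set of tasks."""
    by_model: dict[str, list[TraceRecord]] = defaultdict(list)
    for record in records:
        by_model[record.model].append(record)

    summary: dict[str, dict[str, float]] = {}
    for model, rows in by_model.items():
        n = len(rows)
        total_tokens = sum(r.prompt_tokens + r.completion_tokens for r in rows)
        summary[model] = {
            "requests": n,
            "tokens_per_request": total_tokens / n,
            "error_rate": sum(1 for r in rows if r.failed) / n,
            "total_cost_usd": sum(r.cost_usd for r in rows),
        }
    return summary


# Illustrative records only; real input would come from production traces.
sample = [
    TraceRecord("model-a", 100, 50, 0.25, False),
    TraceRecord("model-a", 300, 150, 0.5, True),
    TraceRecord("model-b", 10, 10, 0.125, False),
]

for model, metrics in summarize(sample).items():
    print(model, metrics)
```

Running every candidate against the same task set keeps the total-cost column comparable across models, which is what a hybrid routing decision ultimately depends on.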