From Novel Gadget to Open App Ecosystem

Meta’s Ray-Ban Display glasses are shifting from a closed gadget into a true platform. Until now, they mostly handled Meta’s own experiences: in-lens previews of what the camera sees, basic message display, and responses from Meta AI. That’s useful but limited, especially for hardware worn on your face all day. Meta is now opening the display to third-party apps, allowing external developers to build and ship their own experiences. Instead of waiting on Meta’s feature roadmap, the glasses can become a flexible interface for whatever developers imagine, from always-on utilities to tiny, task-specific tools. This is the same pivot smartphones made when they went from locked-down devices to open app ecosystems. The hardware hasn’t radically changed overnight, but its potential has: Ray-Ban Display glasses are no longer just smart eyewear; they’re a wearable screen for an emerging app platform.

Inside Meta’s Developer Platform for Ray-Ban Display

Meta’s developer platform offers two main paths to build for Ray-Ban Display glasses. The first is the Wearables Device Access Toolkit, a native SDK for iOS and Android. Developers can extend existing mobile apps using Swift or Kotlin, pushing UI components like text, images, lists, buttons, and even video directly into the lens. That means a fitness app, for example, can surface workout stats on the glasses without being rebuilt from scratch. The second path is web apps, built with HTML, CSS, and JavaScript. These run as lightweight tools accessed through a URL, ideal for quick experiments such as transit dashboards, cooking guides, or micro-productivity utilities. Together, these options lower the barrier to building for smart glasses, encouraging both established app makers and indie developers to experiment. On paper, Ray-Ban Display becomes less a standalone gadget and more a wearable interface layer that sits on top of your existing digital life.
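To make the web-app path a little more concrete, here is a minimal TypeScript sketch of the kind of data shaping a transit dashboard might do before drawing anything. It deliberately calls no Meta API. The `Departure` type, the `formatDepartures` function, and the 3-row by 24-character budget are all assumptions made for illustration, not documented limits of the display.

```typescript
// Hypothetical sketch: nothing here calls a Meta API. It only shows the kind of
// data shaping a small web app might do before rendering to a tiny display.

interface Departure {
  line: string;        // route name, e.g. "Bus 42"
  destination: string; // headsign text
  minutes: number;     // minutes until departure (negative = already gone)
}

// Truncate text to a maximum length, marking the cut with an ellipsis.
function truncate(text: string, maxChars: number): string {
  if (text.length <= maxChars) return text;
  if (maxChars <= 1) return text.slice(0, maxChars);
  return text.slice(0, maxChars - 1) + "…";
}

// Drop past departures, sort by soonest, keep the first few rows,
// and fit each row into the assumed character budget.
function formatDepartures(
  departures: Departure[],
  maxLines = 3,  // assumed row budget, not a documented limit
  maxChars = 24, // assumed column budget, not a documented limit
): string[] {
  return [...departures]
    .filter((d) => d.minutes >= 0)
    .sort((a, b) => a.minutes - b.minutes)
    .slice(0, maxLines)
    .map((d) => {
      const eta = d.minutes === 0 ? "now" : `${d.minutes} min`;
      // Reserve room for the ETA plus one separating space.
      const label = truncate(`${d.line} ${d.destination}`, maxChars - eta.length - 1);
      return `${label} ${eta}`;
    });
}
```

A real app would pass these rows to whatever rendering layer the platform provides. Keeping the formatting in a pure function like this means it can be tested in a browser or in Node before any glasses are involved.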

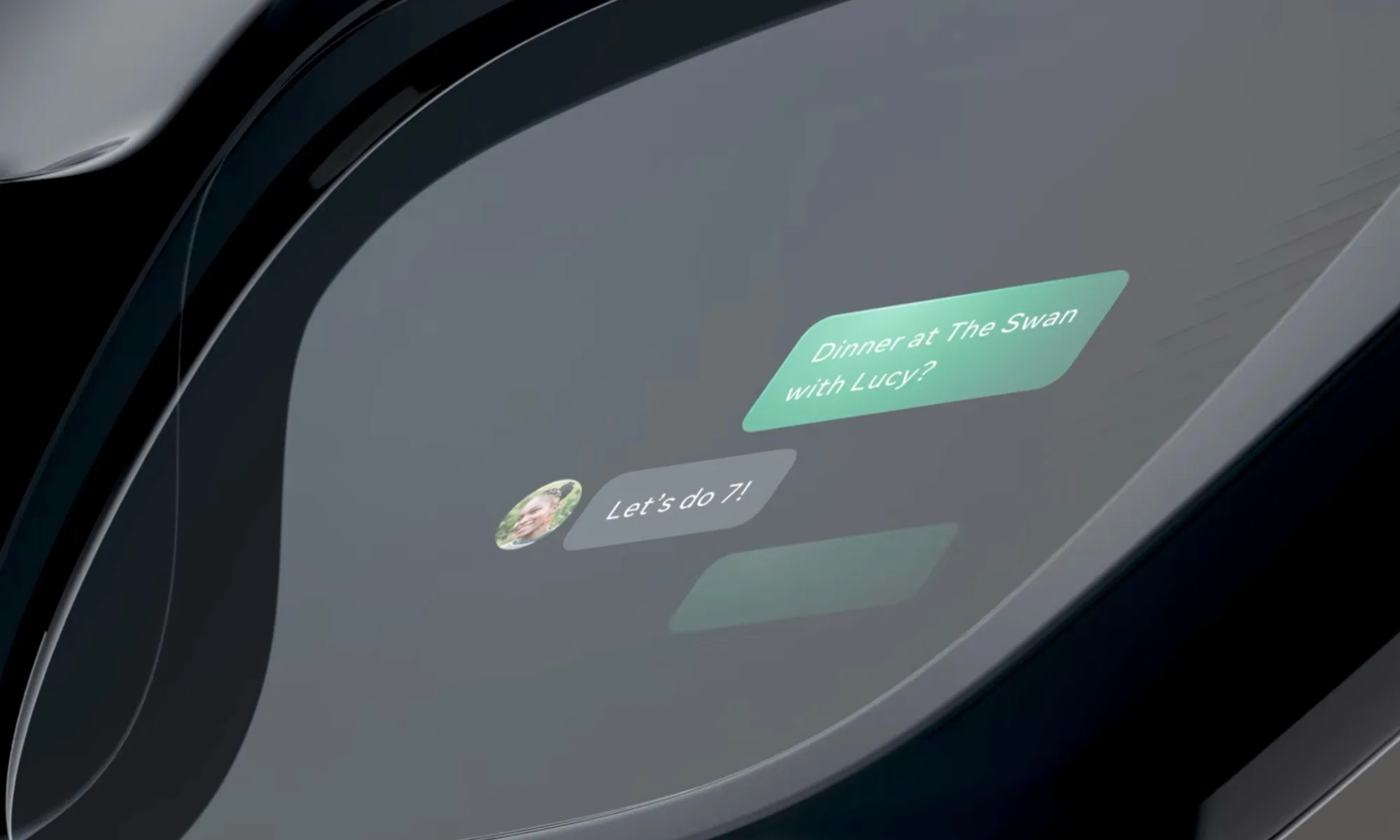

New Native Features: Handwriting, Captions and Smarter Navigation

Alongside opening the platform, Meta is expanding its own features to make the glasses more useful out of the box. Neural handwriting, powered by the included Neural Band, lets you compose messages with mid-air hand gestures, and it now works across WhatsApp, Messenger, Instagram, and native Android and iOS messaging. Live captions are rolling out for voice messages in WhatsApp, Facebook Messenger, and Instagram DMs, turning the glasses into a lightweight accessibility and context tool. Display recording can capture a composite of what the camera sees, what the display shows, and ambient audio, which is useful for walkthroughs or tutorials. Navigation has also grown more ambitious, with walking directions now available across the US and in major cities like London, Paris, and Rome. These upgrades don’t depend on third-party apps, but they create a richer baseline that developers can hook into, making their own experiences more compelling and context-aware.

What Third-Party Apps Could Actually Change

The real transformation will come from what developers build on top of Meta’s foundation. Always-on overlays could show live sports scores, stock tickers, or breaking news as you move through your day. Productivity tools might sync calendars, task lists, and reminders into subtle prompts that appear only when relevant, like nudging you with a shopping list as you enter a supermarket. Travel apps could blend walking navigation with transit updates and translation snippets, while educational experiences might walk you through recipes, DIY repairs, or language drills step by step in your field of view. Because apps can use the Neural Band for gesture input, interactions can stay subtle and discreet. Over time, this could mirror the smartphone shift: a few breakout smart glasses apps redefining the device from a novelty into something people feel underdressed without.

The Road Ahead for Meta’s Smart Glasses Platform

Meta’s push doesn’t stop with third-party apps. Its Muse Spark AI assistant is slated to reach Ray-Ban Meta and Oakley Meta glasses in the coming weeks in select markets, with Ray-Ban Display support expected later. Muse Spark enables natural, interruptible conversations, quick topic shifts, and multilingual interactions, while Live AI features bring camera-based queries and shopping integrations through Meta’s broader ecosystem. Combined with over two million first-generation units reportedly sold and the ongoing evolution of Meta’s underlying AI models, Ray-Ban Display glasses are being positioned as a key access point into Meta’s wider wearables and AI strategy. The platform is still early, and there are no public third-party apps at launch, but the ingredients are there. If developers embrace the Meta developer platform, smart glasses apps could finally give everyday users a reason to keep a display on their face.