The Myth of Unverified AI Speedups

Engineering leaders widely report shorter development cycles after adopting AI coding tools, yet few can prove those gains are real or durable. Industry numbers are all over the map: controlled trials show significant task speedups, forecasts project larger long‑term improvements, and surveys reveal that only a minority of teams experience high productivity gains in practice. These figures describe different scopes, time horizons, and cohorts, but they are often treated as interchangeable truths. Inside organisations, the situation is similar. Teams attribute faster delivery, fewer bugs, or reduced overtime to AI without tying those outcomes to specific workflow changes or measurable indicators in the codebase. Finance and strategy teams are then asked to fund AI initiatives on the basis of anecdotes and vendor benchmarks. To move beyond this, organisations need disciplined AI productivity metrics that connect what developers do every day to business outcomes.

What the DORA Framework Actually Measures

Google Cloud’s DORA framework gives engineering teams a shared language for measuring AI productivity and engineering ROI. Instead of fixating on how much code AI writes, DORA focuses on the flow of value: how work moves from idea to running software. The four core DORA delivery metrics (deployment frequency, lead time for changes, change failure rate, and time to restore service) remain central, but the latest research extends them into an AI context. DORA’s model links seven technical capabilities (including internal platforms, disciplined version control, and making internal data accessible to AI) into a value chain. Improvements in these capabilities show up first as better development cycle metrics, then as non‑financial outcomes such as improved developer experience, and finally as financial benefits such as cost savings or revenue impact. This structured approach turns the ROI of AI coding tools from a vague promise into something that can be measured across the organisation.
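To make the four delivery metrics concrete, here is a minimal Python sketch that computes them from a list of deployment records. The `Deployment` record shape, the `dora_metrics` and `hours` helpers, and the metric key names are hypothetical illustrations, not part of any DORA tooling. The sketch also assumes that recovery time is measured from the deploy itself rather than from when the failure was detected, and it uses medians for the two time-based metrics.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    commit_time: datetime                      # first commit of the change
    deploy_time: datetime                      # when it reached production
    failed: bool = False                       # did it degrade service?
    restored_time: Optional[datetime] = None   # when service recovered, if it failed


def hours(delta: timedelta) -> float:
    """Convert a timedelta to fractional hours."""
    return delta / timedelta(hours=1)


def dora_metrics(deploys: list[Deployment], window_days: float) -> dict[str, float]:
    """Compute the four DORA delivery metrics for one reporting window."""
    if not deploys or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    failures = [d for d in deploys if d.failed]
    # Assumption: recovery is timed from the deploy, not from failure detection.
    restores = [hours(d.restored_time - d.deploy_time)
                for d in failures if d.restored_time is not None]
    return {
        # Deployment frequency: deployments per day in the window.
        "deployment_frequency_per_day": len(deploys) / window_days,
        # Lead time for changes: median commit-to-production time.
        "lead_time_hours": median(hours(d.deploy_time - d.commit_time) for d in deploys),
        # Change failure rate: share of deployments that caused a failure.
        "change_failure_rate": len(failures) / len(deploys),
        # Time to restore service: median recovery time (NaN if nothing failed).
        "time_to_restore_hours": median(restores) if restores else float("nan"),
    }


t0 = datetime(2024, 1, 1, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               restored_time=t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=6)),
]
print(dora_metrics(sample, window_days=2))
# {'deployment_frequency_per_day': 2.0, 'lead_time_hours': 7.0,
#  'change_failure_rate': 0.25, 'time_to_restore_hours': 2.0}
```

Medians are used because a handful of very slow changes can dominate a mean; teams that prefer percentiles could swap in `statistics.quantiles`.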

Why Strong Engineering Foundations Matter More Than Tools

DORA’s latest report characterises AI as an amplifier, not a silver bullet. High‑performing teams with clear workflows, quality internal platforms, and well‑aligned structures see AI magnify their strengths: smoother pipelines, faster experiments, and more reliable releases. Struggling teams, by contrast, see AI magnify their weaknesses. The research finds that AI adoption is associated with higher throughput but also with greater delivery instability when pipelines, testing, and approvals are not ready for a larger volume of code. This “instability tax” shows up as more failed changes and service disruptions, offsetting gains in individual productivity. The message is clear: without robust engineering foundations such as automated testing, continuous integration, and small batch work, AI simply accelerates the chaos. Organisations hoping to improve the ROI of AI coding tools must therefore invest first in the system that surrounds the tools. AI should be judged not by the lines of code it generates, but by the bottlenecks it helps remove across the entire software lifecycle.
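A short worked example shows how the instability tax can offset a throughput gain. The sketch assumes, purely for illustration, that weekly deployments rise from 20 to 30 after AI adoption while the change failure rate rises from 10% to 25%, and that each failed change costs 6 engineer-hours to recover. These figures are hypothetical, not DORA findings, and `weekly_outcome` is an illustrative helper.

```python
from typing import NamedTuple


class WeeklyOutcome(NamedTuple):
    successful: float      # changes that shipped without incident
    failed: float          # changes that caused a production failure
    recovery_hours: float  # engineer-hours spent restoring service


def weekly_outcome(deploys: float, change_failure_rate: float,
                   hours_per_failure: float) -> WeeklyOutcome:
    """Split a week's deployments into successes, failures and recovery cost."""
    failed = deploys * change_failure_rate
    return WeeklyOutcome(deploys - failed, failed, failed * hours_per_failure)


# Hypothetical before/after figures, chosen only to illustrate the arithmetic.
baseline = weekly_outcome(deploys=20, change_failure_rate=0.10, hours_per_failure=6.0)
ai_era = weekly_outcome(deploys=30, change_failure_rate=0.25, hours_per_failure=6.0)
print(baseline)  # WeeklyOutcome(successful=18.0, failed=2.0, recovery_hours=12.0)
print(ai_era)    # WeeklyOutcome(successful=22.5, failed=7.5, recovery_hours=45.0)
```

In this hypothetical, successful changes rise by a quarter while failed changes almost quadruple, so recovery work grows far faster than useful output.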

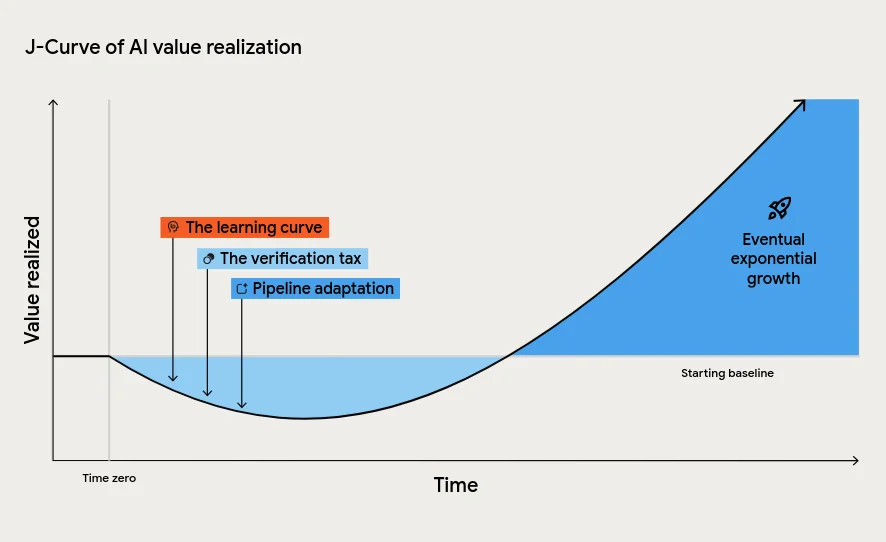

Navigating the J‑Curve and Building a Metrics Stack

DORA introduces the J‑Curve of value realisation to explain why AI often feels disappointing early on. Teams typically hit a productivity dip as developers learn new workflows, reviewers pay a verification tax on AI‑generated code, and downstream processes adapt to higher change volume. Leaders who misread this period as failure risk cancelling AI programmes just before the compounding benefits appear. To avoid this, organisations need a layered metrics stack. At the code level, commit‑centric measures such as Engineering Throughput Value (ETV) test whether claimed AI gains actually show up in the repository relative to a pre‑AI baseline. At the delivery level, DORA metrics capture improvements or regressions in deployment frequency and stability. At the business level, ROI calculations link these changes to cost and value. Together, these layers let teams distinguish real, sustained AI productivity gains from optimism and buzzword‑driven reporting.
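Comparing each period against a pre‑AI baseline, rather than judging AI on the first period after adoption, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the metric names, the `improvement_vs_baseline` and `simple_roi` helpers, and every figure are hypothetical, and ROI here is the classic (value - cost) / cost ratio, not a DORA-specified formula. ETV is not modelled because its definition is not given here.

```python
# Direction of improvement for each metric (True means higher is better).
HIGHER_IS_BETTER = {
    "deployment_frequency_per_day": True,
    "lead_time_hours": False,
    "change_failure_rate": False,
    "time_to_restore_hours": False,
}


def improvement_vs_baseline(baseline: dict[str, float],
                            current: dict[str, float]) -> dict[str, float]:
    """Signed relative change per metric: positive is better, negative is a regression."""
    result = {}
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        if baseline[name] == 0:
            raise ValueError(f"baseline for {name} must be non-zero")
        delta = (current[name] - baseline[name]) / baseline[name]
        result[name] = delta if higher_is_better else -delta
    return result


def simple_roi(annual_value: float, annual_cost: float) -> float:
    """Classic ROI: net gain divided by cost (0.5 means a 50% return)."""
    return (annual_value - annual_cost) / annual_cost


# Hypothetical figures: a pre-AI baseline, an early dip, and a later quarter.
baseline = {"deployment_frequency_per_day": 2.0, "lead_time_hours": 40.0,
            "change_failure_rate": 0.20, "time_to_restore_hours": 4.0}
quarter_1 = {"deployment_frequency_per_day": 2.0, "lead_time_hours": 48.0,
             "change_failure_rate": 0.30, "time_to_restore_hours": 5.0}
quarter_3 = {"deployment_frequency_per_day": 3.0, "lead_time_hours": 30.0,
             "change_failure_rate": 0.15, "time_to_restore_hours": 3.0}

for label, period in [("quarter 1", quarter_1), ("quarter 3", quarter_3)]:
    changes = improvement_vs_baseline(baseline, period)
    print(label, {name: round(value, 3) for name, value in changes.items()})

print(simple_roi(annual_value=150_000, annual_cost=100_000))  # 0.5
```

With these made-up numbers, quarter 1 shows no gain or a regression on every metric, the dip the J‑Curve predicts, while quarter 3 improves on all four. Judging the programme on quarter 1 alone would lead to the wrong call.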