A New Stack for Real-Time Voice Applications

OpenAI’s latest real-time voice models mark a turning point for voice API development. Instead of a single, monolithic assistant, the company now offers three specialized models through the Realtime API: GPT-Realtime-2 for live reasoning, GPT-Realtime-Translate for multilingual conversations, and GPT-Realtime-Whisper for streaming transcription. This split design lets developers tune each part of a voice workload separately, deciding where to prioritize depth of reasoning, latency, or cost. The models are built to stay responsive while users are talking, handle interruptions, and coordinate tool calls without losing conversational context. For teams building call flows, live assistants, or AI-powered contact centers, this modular approach promises less orchestration glue code and more robust, human-like interactions. Together, the three models aim to bring advanced language understanding and live translation into production-ready voice agents.

GPT-Realtime-2: GPT-5-Class Reasoning for Spoken Interactions

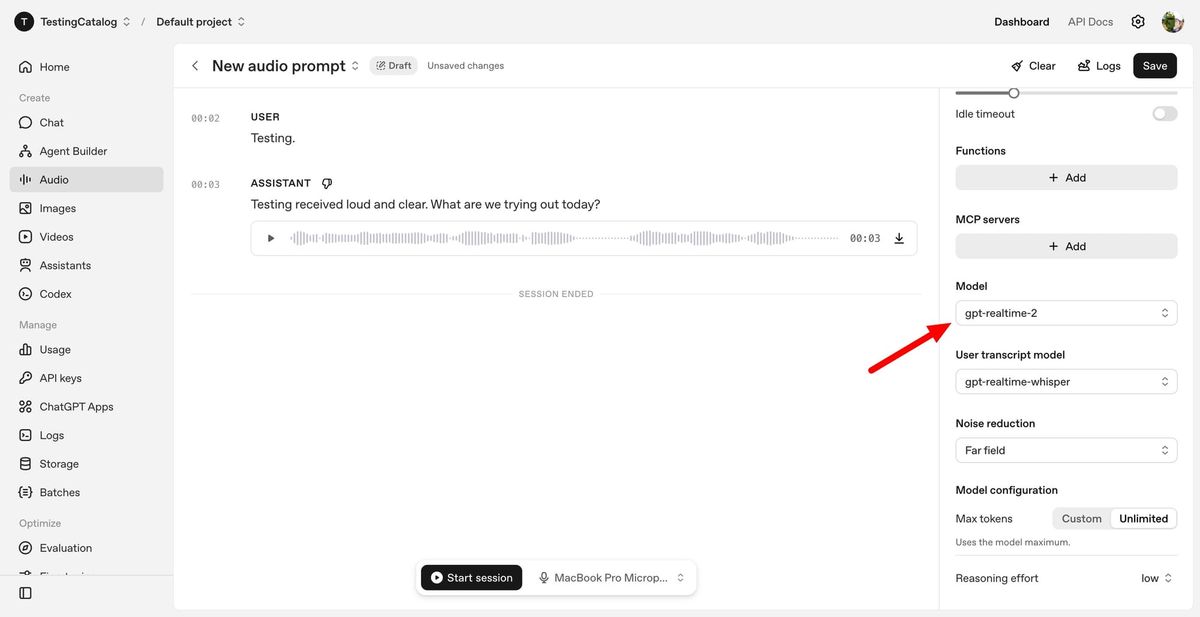

GPT-Realtime-2 is the flagship reasoning model in OpenAI’s real-time voice lineup. It brings GPT-5-class reasoning to spoken conversations, allowing voice agents to handle complex tasks instead of sticking to basic question–answer patterns. The model can manage longer call flows with a 128K context window, up from 32K in the previous generation, helping it maintain state across interruptions and tool calls. Developers can set reasoning effort from minimal to xhigh, balancing latency against depth for different turns in a conversation. GPT-Realtime-2 also introduces more natural behaviors, such as short spoken preambles like “let me check that,” clearer verbal cues when calling tools, and better recovery when tasks fail. Benchmarks cited by OpenAI show notable gains over GPT-Realtime-1.5 in audio intelligence, instruction following, and live conversation control, making it a strong foundation for sophisticated voice agent development.
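To make the latency-versus-depth trade-off concrete, here is a minimal sketch of how an application might pick a reasoning effort for each turn. It is an illustration under stated assumptions, not OpenAI's API: the article names only "minimal" and "xhigh" as the endpoints, so the intermediate level names, the `Turn` fields, and the heuristic in `pick_effort` are all hypothetical.

```python
from dataclasses import dataclass

# The article names only "minimal" and "xhigh"; the levels in between
# are assumed here purely for illustration.
EFFORT_LEVELS = ["minimal", "low", "medium", "high", "xhigh"]


@dataclass
class Turn:
    """One user turn, described by hypothetical application-side signals."""
    text: str
    needs_tools: bool = False       # assumed flag: the turn will trigger tool calls
    latency_budget_ms: int = 1000   # assumed per-turn latency target


def pick_effort(turn: Turn) -> str:
    """Choose a reasoning effort for one turn (illustrative heuristic only)."""
    # A tight latency budget always gets the cheapest setting.
    if turn.latency_budget_ms < 500:
        return "minimal"

    score = 1  # index into EFFORT_LEVELS, starting at "low"
    if turn.needs_tools:
        score += 1  # tool coordination tends to need more deliberation
    if len(turn.text.split()) > 30:
        score += 1  # long requests usually carry more constraints
    if turn.latency_budget_ms >= 3000:
        score += 1  # the caller can tolerate a slower, deeper answer
    return EFFORT_LEVELS[min(score, len(EFFORT_LEVELS) - 1)]


if __name__ == "__main__":
    print(pick_effort(Turn("What is my balance?")))
    print(pick_effort(Turn("Cancel it", latency_budget_ms=300)))
```

The point of the sketch is that effort is chosen per turn rather than per session, so a quick confirmation and a multi-step booking change in the same call can receive different settings.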

GPT-Realtime-Translate: Live Multilingual Conversations at Scale

GPT-Realtime-Translate focuses on live speech translation. It can accept speech input in more than 70 languages and produce spoken or transcribed output in 13 languages, keeping pace with the speaker in real time. The model is tuned for the realities of multilingual communication: regional accents, shifting context, and domain-specific vocabulary. Developers can use it to build tools for customer support, cross-border sales, education, events, creator platforms, and media localization, all within a single voice API stack. Because translation is handled by a dedicated model, teams don’t have to bolt translation logic onto a general-purpose assistant; they can route translation-heavy segments to GPT-Realtime-Translate while reserving GPT-Realtime-2 for reasoning-intensive turns. This separation helps maintain low latency and clarity in complex multilingual workflows, so multilingual voice interactions need less manual orchestration.
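The routing idea described above can be sketched as a small dispatch function. This is a hedged illustration only: the model names come from the article, but the exact identifiers the API accepts are not confirmed here, and the `Segment` type, its fields, and the `route` function are hypothetical names introduced for the example.

```python
from dataclasses import dataclass

# Names as given in the article; the identifiers an API call would
# actually accept are an assumption.
TRANSLATE_MODEL = "GPT-Realtime-Translate"
REASONING_MODEL = "GPT-Realtime-2"


@dataclass
class Segment:
    """A slice of a conversation, described by hypothetical routing signals."""
    speaker_lang: str              # e.g. "es"
    listener_lang: str             # e.g. "en"
    needs_reasoning: bool = False  # assumed flag: tools or multi-step logic required


def route(segment: Segment) -> list[str]:
    """Return the ordered list of models a segment should pass through."""
    stages: list[str] = []
    # Cross-language segments go through the dedicated translation model first.
    if segment.speaker_lang != segment.listener_lang:
        stages.append(TRANSLATE_MODEL)
    # Reasoning-heavy turns, and same-language turns that need no
    # translation at all, go to the reasoning model.
    if segment.needs_reasoning or not stages:
        stages.append(REASONING_MODEL)
    return stages


if __name__ == "__main__":
    print(route(Segment("es", "en")))
    print(route(Segment("es", "en", needs_reasoning=True)))
```

A pure translation segment never touches the reasoning model in this sketch, which is where the latency benefit of the split would come from.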

GPT-Realtime-Whisper: Low-Latency Streaming Transcription

GPT-Realtime-Whisper fills the transcription lane as a low-latency streaming speech-to-text model. It is designed to transcribe audio as people speak, providing continuous text output that other systems can consume instantly. This makes it ideal for meeting assistants, note-taking tools, compliance logging, and any workflow where accurate, real-time transcripts are essential. Crucially, transcription is decoupled from the reasoning tier: developers no longer need to run a heavy, reasoning-focused model just to capture speech reliably. Instead, GPT-Realtime-Whisper can handle raw speech input, while GPT-Realtime-2 or GPT-Realtime-Translate process the resulting text when deeper understanding or translation is required. This architecture can simplify debugging and monitoring: if transcription remains accurate while reasoning slows or fails, teams can quickly isolate issues. For enterprises, this separation unlocks more predictable performance and cost profiles across large-scale, always-on voice systems.
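One way to picture the debugging benefit is to wrap each stage in its own timing and error record, so a failure in the reasoning tier is visibly separate from a healthy transcription tier. The sketch below assumes nothing about the real API: `fake_transcribe` and `fake_reason` are stand-in functions, and `timed_stage` and `run_pipeline` are hypothetical helpers written for this example.

```python
import time
from typing import Any, Callable


def timed_stage(name: str, fn: Callable[[Any], Any], payload: Any, metrics: dict) -> Any:
    """Run one stage, recording its duration and whether it succeeded."""
    start = time.perf_counter()
    try:
        result = fn(payload)
    except Exception as exc:
        metrics[name] = {
            "ok": False,
            "seconds": time.perf_counter() - start,
            "error": repr(exc),
        }
        return None
    metrics[name] = {"ok": True, "seconds": time.perf_counter() - start}
    return result


def fake_transcribe(audio: bytes) -> str:
    """Stand-in for a streaming transcription call (not a real API)."""
    return audio.decode("utf-8")


def fake_reason(text: str) -> str:
    """Stand-in for a reasoning call that fails, to show fault isolation."""
    raise TimeoutError("reasoning tier timed out")


def run_pipeline(audio: bytes, transcribe: Callable, reason: Callable) -> dict:
    """Transcribe first, then reason over the text, with per-stage metrics."""
    metrics: dict = {}
    transcript = timed_stage("transcription", transcribe, audio, metrics)
    reply = None
    # The reasoning stage runs only if a transcript was produced.
    if transcript is not None:
        reply = timed_stage("reasoning", reason, transcript, metrics)
    return {"transcript": transcript, "reply": reply, "metrics": metrics}


if __name__ == "__main__":
    out = run_pipeline(b"please reschedule my flight", fake_transcribe, fake_reason)
    print(out["transcript"])
    print(out["metrics"]["reasoning"]["ok"])
```

Because the transcript survives even when the reasoning stage fails, a compliance log or meeting note can still be written while the failing tier is investigated.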

What Developers Can Build with the New Real-Time Voice Stack

Together, GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper expand what’s possible in voice agent development. Developers can now compose voice experiences that listen, think, translate, and act in real time. A single application might use GPT-Realtime-Whisper to capture speech, GPT-Realtime-Translate to bridge languages, and GPT-Realtime-2 to coordinate tools, manage context, and deliver nuanced responses. This flexibility targets enterprise workflows such as customer service, travel booking, technical support, and media localization, where immediate response times and multilingual support are non-negotiable. By shifting more orchestration into the model layer, OpenAI aims to reduce the brittle session resets and state compression many teams previously relied on. The result is a voice infrastructure that can sustain long, tool-rich conversations while staying responsive and context-aware, opening the door to new categories of real-time voice products and services.