Apple’s New Dilemma: Agentic AI Apps Inside a Walled Garden

Apple is wrestling with how to welcome AI agents into the App Store without tearing up the rulebook that has governed its marketplace for over a decade. Agentic AI apps can generate code, spin up mini‑apps on demand, and automate complex workflows, which is exactly the sort of dynamically changing software Apple’s existing guidelines were designed to block. Historically, App Store review has focused on static binaries that can be fully inspected before release. Guidelines for approving AI agents must instead account for systems that evolve after download, potentially bypassing review safeguards and even producing malware. Apple reportedly views these agents as a major growth area, but also as a threat: if AI tools can build or replace traditional apps on-device, they could erode the company’s tightly controlled distribution model and its commissions. The result is an internal balancing act between innovation and preserving App Store control.

Safety, Revenue, and the Limits of On‑Device AI Coding

At the heart of Apple’s approach to approving AI agents is a security concern that predates the current AI boom: no app should act as an unregulated runtime environment. Agentic AI apps that support “vibe coding,” where users describe features in natural language and let AI generate and run the code, come close to this line. Apple fears such tools could be used to produce unreviewed apps or even malware on iPhone and iPad, escaping traditional App Store checks. There is also a business risk: if agentic AI apps can generate specialized tools on the fly, users may skip purchasing standalone apps entirely, undercutting Apple’s existing revenue structure. Reports indicate engineers are exploring a new security and privacy framework that limits how deeply AI agents can interact with system resources, ruling out highly intrusive tools while still allowing safer, sandboxed agentic AI apps to reach the store.

Developer Trust Woes: Siri, App Intents, and Future Commissions

Apple’s parallel effort to revamp Siri via App Intents reveals a deeper trust problem with developers. The new Siri is designed to trigger actions directly inside third‑party apps, making it a natural control surface for agentic AI apps and services. Yet major developers have been reluctant to embrace the integration, not because of technical limits, but due to commercial uncertainty. Apple has told partners it will not charge commissions for early Siri integrations, while explicitly leaving open the possibility of future fees once the ecosystem matures. That ambiguity makes developers wary of ceding a primary customer interaction channel to Apple, fearing another chokepoint layered on top of App Store distribution. Without clear long‑term terms, many see deep Siri integration—and by extension tighter links to agentic AI apps—as a risk rather than an opportunity, complicating Apple’s broader AI strategy.

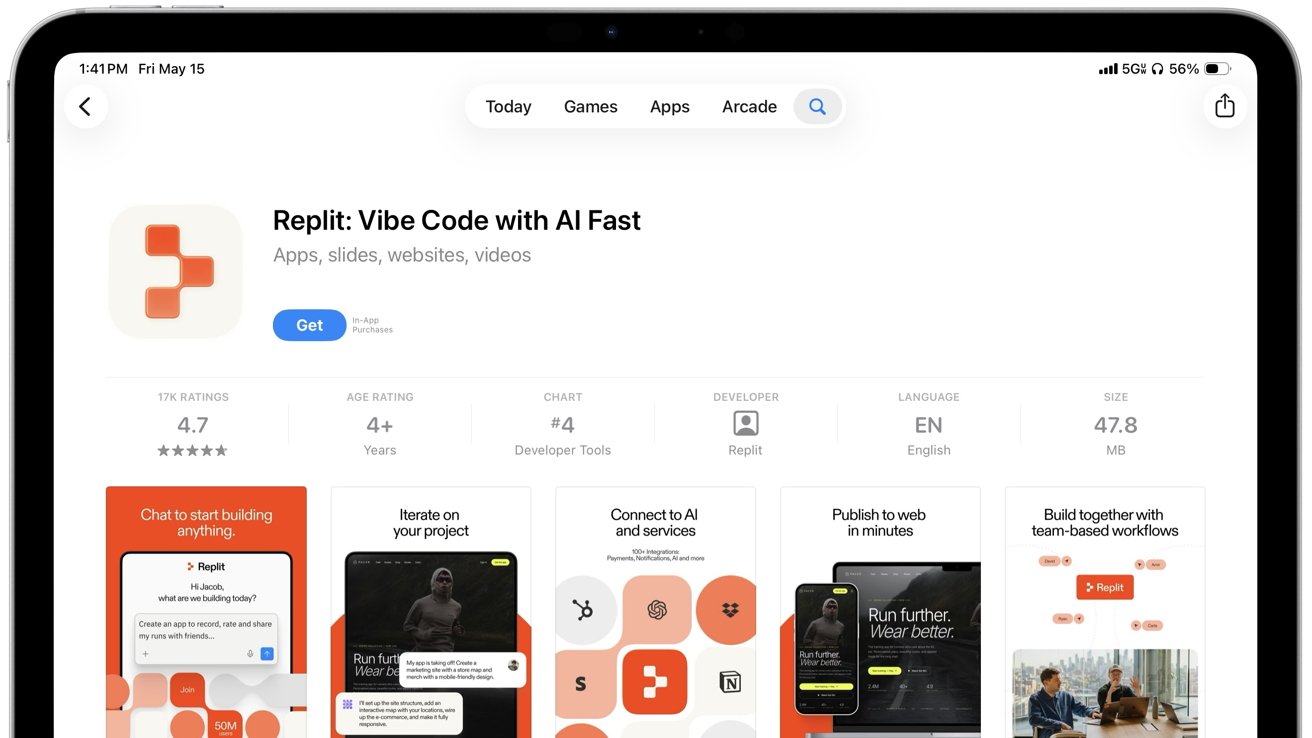

Replit’s Breakthrough and the Quiet Softening of App Store Rules

Replit’s recent iPhone update offers a rare public glimpse into how Apple’s stance on AI coding tools may be shifting. After months of stalled updates and a reported dispute over how AI‑generated apps were previewed on iOS, Replit and Apple “worked things out,” allowing Replit Agent 4 and new features such as parallel agents and expanded project views to ship. The core tension was that Replit’s mobile workflow resembles a full development environment: users describe an app in natural language, have AI generate it, and then preview its behavior directly on-device. That workflow tests Apple’s long‑standing ban on apps that change functionality after review. That a compromise was reached, though what changed has not been made public, suggests Apple is experimenting with nuanced boundaries rather than blanket bans, especially for agentic AI apps that stop short of becoming fully independent runtimes on iPhone.

WWDC: The Moment Apple Must Clarify Its Agentic AI Strategy

With WWDC approaching, Apple is under pressure to articulate a coherent plan for agentic AI apps, Siri’s future, and App Store policy. Internally, engineers are reportedly building a security system that lets AI agents operate within strict privacy constraints, avoiding “runaway agent” scenarios in which software gains broad, unintended control over user data or accounts. Externally, developers want to know whether building AI agents for the App Store or integrating deeply with Siri will be rewarded or later taxed through new commissions. Clarity around App Intents, runtime limits, and what counts as acceptable on-device code generation will heavily influence whether cutting‑edge AI tools prioritize Apple’s platforms. WWDC is expected to spotlight AI across the stack, and any announcements about how AI agents are approved could redefine how users discover, trust, and pay for agentic AI apps in the App Store ecosystem.