RISC-V AI Board Targets Local LLM Inference

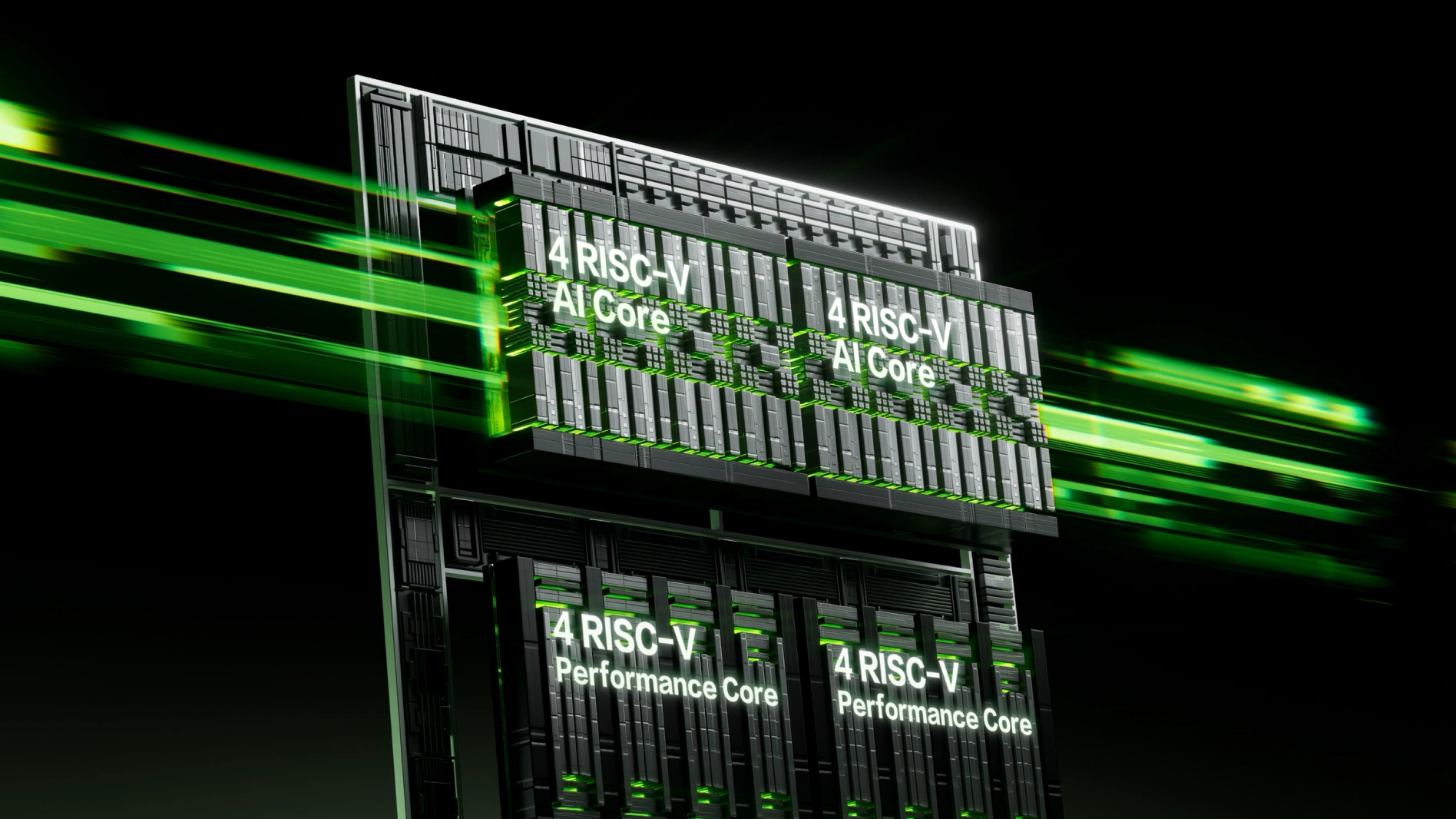

Sipeed’s new K3 series of single-board computers aims squarely at local LLM inference, positioning itself as a compact yet capable alternative to x86- and ARM-based AI platforms. Built around SPACEMIT’s Key Stone K3 AI CPU, the board uses a RISC-V architecture that combines 8 X100 high-performance cores with 8 A100 AI cores, delivering up to 130,000 DMIPS of general-purpose compute. This design lets developers deploy large language models without relying on cloud infrastructure, keeping data on-device and avoiding network round trips. Sipeed highlights that the K3 can run Qwen3.5 35B, a 35-billion-parameter Qwen model, at up to 15 tokens per second, bringing serious generative AI within reach of edge computing projects. By pairing an open instruction set with a purpose-built AI CPU and NPU, the K3 series illustrates how RISC-V AI boards are maturing into practical platforms for on-device intelligence.

60 TOPS NPU and 32GB LPDDR5 for On-Device LLMs

At the heart of the K3 series is a 60 TOPS NPU that accelerates AI workloads across multiple data types, including BF16, FP16, FP8, INT8, and INT4. This flexibility matters for quantized large language models, where lower-precision formats sharply reduce memory and compute requirements while largely preserving accuracy. Paired with up to 32GB of LPDDR5 memory running at 6400MT/s with 51GB/s of bandwidth, the board can, according to Sipeed, handle models of up to 30 billion parameters natively. Sipeed reports more than 10 tokens per second in general use, and up to 15 tokens per second with the Qwen3.5 35B model. Because the memory is unified, the CPU and NPU work from the same copy of the model weights instead of shuttling them between separate memories, which reduces bottlenecks. This combination of a high-throughput NPU and a large memory pool makes the K3 series a strong candidate for developers who want to experiment with large local LLMs without a traditional desktop GPU.
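To see why memory capacity and bandwidth dominate on-device LLM inference, here is a minimal Python sketch that estimates the weight footprint at each precision and a bandwidth-bound ceiling on decode speed. It assumes a 64-bit memory bus (consistent with the quoted 51GB/s at 6400MT/s) and a dense model in which every weight is read once per generated token; the function names are illustrative and not part of any Sipeed SDK.

```python
# Back-of-the-envelope sizing for LLM inference on a 6400 MT/s LPDDR5 board.
# ASSUMPTION: a 64-bit memory bus (consistent with the quoted ~51 GB/s).
# Figures cover model weights only; KV cache, activations, and the OS are ignored.

BYTES_PER_PARAM = {"BF16": 2.0, "FP16": 2.0, "FP8": 1.0, "INT8": 1.0, "INT4": 0.5}


def peak_bandwidth_gb_s(mega_transfers_per_s: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return mega_transfers_per_s * 1e6 * (bus_width_bits / 8) / 1e9


def weight_footprint_gb(params_billion: float, dtype: str) -> float:
    """Size of the weights alone at the given precision, in GB."""
    return params_billion * BYTES_PER_PARAM[dtype]


def decode_ceiling_tok_s(gb_read_per_token: float, bandwidth_gb_s: float) -> float:
    """Upper bound on generated tokens/s if each token must stream
    `gb_read_per_token` of weights from memory (memory-bound decoding)."""
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    bw = peak_bandwidth_gb_s(6400, 64)
    print(f"Peak bandwidth: {bw:.1f} GB/s")
    for dtype in ("BF16", "INT8", "INT4"):
        size = weight_footprint_gb(30, dtype)
        verdict = "fits" if size <= 32 else "does not fit"
        ceiling = decode_ceiling_tok_s(size, bw)
        print(f"30B @ {dtype}: {size:.0f} GB of weights ({verdict} in 32 GB), "
              f"dense-model ceiling ~{ceiling:.1f} tok/s")
```

Under these assumptions, a dense 30B model needs about 60GB at BF16, 30GB at INT8, and 15GB at INT4, so only 8-bit or 4-bit weights fit in 32GB (INT8 with very little headroom), and the INT4 bandwidth ceiling for a dense model is roughly 3.4 tokens per second. Reaching the double-digit rates Sipeed cites would imply reading far fewer bytes per token, for example with a model that activates only a subset of its parameters per token (pass the active-parameter size as `gb_read_per_token` in that case); the source does not say which technique is involved.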

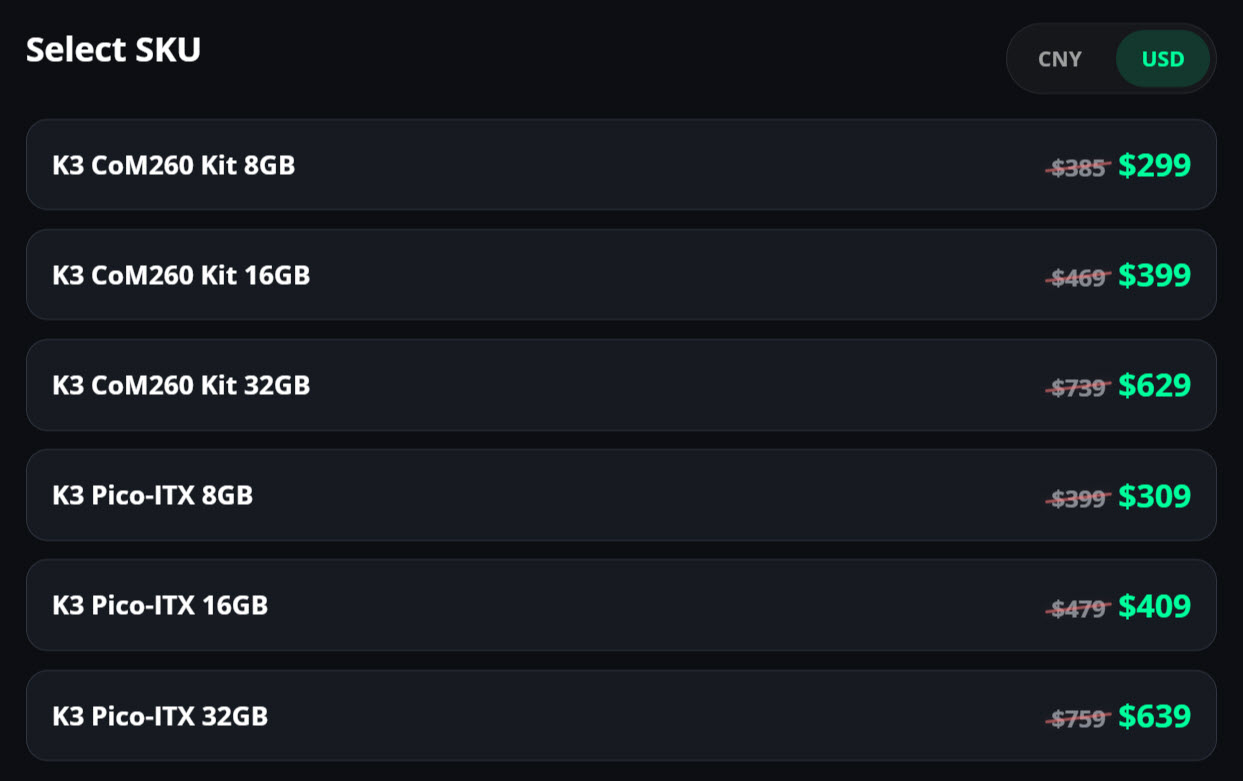

Pricing Lowers the Barrier to Local AI

Beyond performance, the K3 series leans heavily on affordability to attract developers and edge computing projects. Sipeed lists the 8GB configurations of the K3 CoM260 and K3 Pico-ITX at USD 299 (approx. RM1,380) and USD 309 (approx. RM1,420) respectively, with 32GB configurations at USD 629 (approx. RM2,900) and USD 639 (approx. RM2,950). That places the entry-level K3 about USD 50 above NVIDIA’s Jetson Orin Nano 8GB, cited at USD 247.99 (approx. RM1,150), while the higher-memory K3 configurations can run much larger LLMs. For developers and startups, these prices make it feasible to prototype or deploy local generative AI workloads without investing in full-sized x86 systems or discrete GPUs. Quadrupling memory from 8GB to 32GB costs USD 330, roughly doubling the price, so the larger configurations offer much cheaper memory per gigabyte, and that capacity is exactly what larger Qwen models and other demanding AI workloads need to run entirely on-device.
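The price comparison above comes down to simple arithmetic. A minimal Python sketch using only the list prices quoted in this article (which may change) computes the cost per gigabyte of memory for each tier and the marginal cost of each extra gigabyte when upgrading; the dictionary layout and helper names are illustrative.

```python
# Listed starting prices in USD as quoted in the article (subject to change).
PRICES_USD = {
    ("K3 CoM260", 8): 299.00,
    ("K3 Pico-ITX", 8): 309.00,
    ("K3 CoM260", 32): 629.00,
    ("K3 Pico-ITX", 32): 639.00,
}
JETSON_ORIN_NANO_8GB_USD = 247.99


def usd_per_gb(board: str, mem_gb: int) -> float:
    """Board price divided by its memory capacity."""
    return PRICES_USD[(board, mem_gb)] / mem_gb


def upgrade_cost_per_gb(board: str, low_gb: int, high_gb: int) -> float:
    """Extra dollars paid per extra GB when stepping up a memory tier."""
    delta_usd = PRICES_USD[(board, high_gb)] - PRICES_USD[(board, low_gb)]
    return delta_usd / (high_gb - low_gb)


if __name__ == "__main__":
    for (board, mem), price in sorted(PRICES_USD.items()):
        print(f"{board:<12} {mem:>2} GB  USD {price:7.2f}  "
              f"USD {usd_per_gb(board, mem):6.2f}/GB")
    for board in ("K3 CoM260", "K3 Pico-ITX"):
        print(f"{board}: 8 GB -> 32 GB costs "
              f"USD {upgrade_cost_per_gb(board, 8, 32):.2f} per extra GB")
    premium = PRICES_USD[("K3 CoM260", 8)] - JETSON_ORIN_NANO_8GB_USD
    print(f"Entry K3 CoM260 vs Jetson Orin Nano 8GB: USD {premium:.2f} more")
```

On these list prices, memory costs about USD 37 to 39 per GB in the 8GB tiers but under USD 20 per GB in the 32GB tiers, and each additional gigabyte in the upgrade costs USD 13.75 on either board.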

Pico-ITX Form Factor for Edge and Embedded AI

The Sipeed K3 Pico-ITX variant measures just 100mm by 86mm, bringing serious AI capabilities to a compact board tailored for embedded and edge AI computing. Despite its size, it offers a rich set of interfaces, including onboard 10 Gigabit Ethernet and Gigabit LAN for high-throughput networking, plus dual USB Type-C ports supporting USB-PD and DisplayPort Alt Mode. Unified 16GB or 32GB LPDDR5 memory is soldered on-board, simplifying integration into small enclosures. Sipeed also offers a K3 CoM260 module at 69.6mm by 45mm, compatible with Jetson Orin carrier boards to ease migration from ARM to RISC-V. Operating system support includes an Ubuntu 26.04-based environment and ROS, alongside Docker and RISC-V KVM virtualization, catering to robotics and industrial applications. This combination of compact design, strong I/O, and software support underscores how the Sipeed K3 series is engineered for real-world edge deployments rather than lab-only experimentation.
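Because the CoM260 is designed to fit Jetson Orin carrier boards, the same deployment scripts may end up running on both aarch64 Jetson modules and riscv64 K3 modules. A minimal Python sketch, assuming a Linux host, shows how such a script might choose the matching Docker image platform and check whether KVM is exposed. It relies on `platform.machine()` returning the kernel’s machine name (`riscv64` or `aarch64`) and on the standard `/dev/kvm` device node; the mapping table and helper names are hypothetical.

```python
import os
import platform

# Hypothetical mapping from `uname -m` machine names to Docker/OCI platform strings.
DOCKER_PLATFORMS = {
    "riscv64": "linux/riscv64",  # RISC-V boards such as the K3
    "aarch64": "linux/arm64",    # ARM boards such as Jetson Orin
    "x86_64": "linux/amd64",
}


def docker_platform(machine: str) -> str:
    """Return the Docker platform string for a machine name, or raise ValueError."""
    try:
        return DOCKER_PLATFORMS[machine]
    except KeyError:
        raise ValueError(f"unsupported architecture: {machine}") from None


def kvm_available(dev_path: str = "/dev/kvm") -> bool:
    """True if the KVM device node exists and this user can read and write it."""
    return os.path.exists(dev_path) and os.access(dev_path, os.R_OK | os.W_OK)


if __name__ == "__main__":
    machine = platform.machine()
    print("machine:", machine)
    print("docker platform:", DOCKER_PLATFORMS.get(machine, "unknown"))
    print("KVM available:", kvm_available())
```

Images pulled or built this way must actually exist for `linux/riscv64`; an arm64 image built for a Jetson will not run natively on the K3.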

RISC-V as an Open Alternative to ARM and x86

By basing the K3 series on RISC-V, Sipeed is pushing an open instruction set into a space traditionally dominated by ARM and x86. The Key Stone K3 AI CPU is described as roughly equivalent in general performance to an ARM Cortex-A76-class CPU at 2.4GHz, narrowing the gap between emerging RISC-V designs and established architectures. Compatibility with Jetson Orin Nano carrier boards further eases the transition, letting developers reuse cases, power supplies, and accessories while swapping out only the compute module. For AI practitioners, this lowers the cost of exploring RISC-V, although software still has to be built for the riscv64 architecture rather than copied over from ARM or x86 systems. The K3 series therefore serves not only as a RISC-V AI board for local LLM inference, but also as a practical testbed for evaluating whether open hardware can support production-grade AI workloads. As more frameworks and toolchains add RISC-V support, platforms like the K3 could accelerate adoption of open, vendor-neutral computing in AI deployments.