Why Perceived AI Speed Gains Aren’t Enough

Many engineering leaders now claim that AI coding tools have shortened development cycles, but most lack a rigorous strategy for measuring developer productivity. Industry numbers are all over the place: controlled trials show faster task completion and forecasts project sizable future gains, yet surveys report that only a minority of teams see high productivity improvements. These figures often describe different scopes, cohorts, and time horizons, which makes them a shaky basis for assessing AI ROI. At the same time, research has linked AI adoption to higher throughput but lower delivery stability, warning that moving more code faster can destabilize releases. Without grounded engineering metrics, AI success quickly becomes a matter of opinion. Teams need to move beyond anecdotes like “it feels faster” and tie AI usage to traceable outcomes in the codebase and delivery pipeline. Otherwise they risk misattributing both wins and failures to the tools.

Using DORA Metrics to Connect AI to Business Value

Google Cloud’s DORA metrics framework offers a structured way to translate AI-driven improvements in engineering metrics into software development ROI. The latest DORA report positions AI as an amplifier: it boosts strong systems and magnifies the problems of weak ones. Its value model starts with capabilities such as a quality internal platform, mature version control, and AI-accessible internal data. Improvements in these areas flow into better DORA delivery metrics: deployment frequency, lead time for changes, change failure rate, and time to restore service. These in turn affect non-financial outcomes such as developer experience and user satisfaction, which finally roll up into financial outcomes such as cost savings and revenue growth. Crucially, the authors emphasize that ROI calculations should be treated as high-uncertainty estimates meant to guide conversation, not as exact science. The real cost center has shifted from infrastructure to governance: verifying AI output, revising workflows, and upskilling staff to sustain AI-assisted development.
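To make the four delivery metrics concrete, here is a minimal Python sketch that computes simplified versions of them from a list of deployment records. The `Deployment` record, its fields, and the `dora_metrics` helper are hypothetical and not part of any DORA tooling. The sketch assumes each deployment corresponds to one change and uses medians for the time-based metrics; real pipelines usually track lead time per commit and identify failures through incident data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    commit_time: datetime                     # first commit of the change
    deploy_time: datetime                     # when it reached production
    failed: bool = False                      # did it cause a production failure?
    restored_time: Optional[datetime] = None  # when service recovered, if it failed


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], window_days: float) -> dict[str, Optional[float]]:
    """Simplified versions of the four DORA delivery metrics over one window."""
    if not deploys or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    failures = [d for d in deploys if d.failed]
    restored = [d for d in failures if d.restored_time is not None]
    return {
        # Throughput: how often changes reach production.
        "deployment_frequency_per_day": len(deploys) / window_days,
        # Throughput: median commit-to-production time in hours.
        "lead_time_hours": median(_hours(d.deploy_time - d.commit_time) for d in deploys),
        # Stability: share of deployments that caused a failure.
        "change_failure_rate": len(failures) / len(deploys),
        # Stability: median hours from failed deploy to recovery (None if nothing recovered).
        "time_to_restore_hours": (
            median(_hours(d.restored_time - d.deploy_time) for d in restored)
            if restored else None
        ),
    }


# Usage: four deployments over two days; one failed and was fixed two hours later.
t0 = datetime(2024, 1, 1)
deploys = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=10), failed=True, restored_time=t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=6)),
    Deployment(t0, t0 + timedelta(hours=8)),
]
print(dora_metrics(deploys, window_days=2))
# → frequency 2.0/day, lead time 7.0 h, change failure rate 0.25, restore 2.0 h
```

Keeping throughput (frequency, lead time) and stability (failure rate, restore time) side by side in one result is the point: an AI rollout that improves the first pair while degrading the second is exactly the trade-off the DORA research warns about.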

Recognizing the J-Curve and Avoiding False Signals

AI-assisted development often follows a J-curve of value realization: productivity may dip before long-term gains appear. This early decline has three main causes. First, teams climb a learning curve while they rework habits and workflows around new tools. Second, a verification tax emerges, as engineers spend time reviewing AI-generated code to ensure safety and correctness. Third, downstream processes—testing, change approvals, deployment pipelines—must adjust to handle increased code volume. If leaders interpret this temporary slump as failure, they risk cancelling initiatives just before benefits materialize. The latest DORA analysis even accounts for an instability tax, where change failure rates can initially rise after AI adoption. Measuring both throughput and stability over time helps distinguish a short-term dip from a genuine regression, and prevents teams from overreacting to early noise while still taking delivery risks seriously.
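One way to put "measure both throughput and stability over time" into practice is to compare each post-adoption quarter with the team's own pre-AI baseline, and to call a decline a regression only after a grace period has passed. The sketch below uses hypothetical names (`Quarter`, `classify_quarters`), a two-quarter grace window, and a 5% tolerance band. These thresholds are illustrative assumptions, not DORA recommendations.

```python
from dataclasses import dataclass


@dataclass
class Quarter:
    label: str
    throughput: float           # e.g. deployments or merged changes in the quarter
    change_failure_rate: float  # share of deployments causing a failure (0 to 1)


def classify_quarters(baseline: Quarter, post_ai: list[Quarter],
                      grace_quarters: int = 2, tolerance: float = 0.05) -> list[tuple[str, str]]:
    """Label each post-adoption quarter against the team's own pre-AI baseline.

    grace_quarters and tolerance are illustrative assumptions, not DORA guidance.
    """
    labels = []
    for i, q in enumerate(post_ai):
        slower = q.throughput < baseline.throughput * (1 - tolerance)
        less_stable = q.change_failure_rate > baseline.change_failure_rate * (1 + tolerance)
        if not (slower or less_stable):
            status = "on track"
        elif i < grace_quarters:
            # Early decline on either dimension: consistent with the J-curve dip.
            status = "expected dip"
        elif slower and less_stable:
            status = "regression"
        elif less_stable:
            # Throughput held up, but the instability tax has not faded.
            status = "stability regression"
        else:
            status = "throughput regression"
        labels.append((q.label, status))
    return labels


baseline = Quarter("2023-Q4", throughput=100, change_failure_rate=0.10)
history = [
    Quarter("2024-Q1", 85, 0.14),
    Quarter("2024-Q2", 97, 0.12),
    Quarter("2024-Q3", 120, 0.09),
    Quarter("2024-Q4", 125, 0.15),
]
for label, status in classify_quarters(baseline, history):
    print(label, status)
```

In this sample, the first two quarters are labelled as the expected dip, the third is back on track, and the fourth is flagged because the change failure rate rose again after the grace window closed. Using the quarter index to define "early" keeps the sketch simple; a team could instead close the grace window once metrics first return to baseline.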

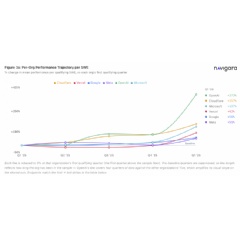

Beyond DORA: Measuring Commits with Engineering Throughput Value

While DORA focuses on delivery outcomes, new metrics like Engineering Throughput Value (ETV) look inside the codebase itself. Introduced by Navigara, ETV is a per-commit metric designed to test AI-era productivity claims against real commit history. Instead of benchmarking teams against generic industry numbers, ETV compares each team’s post-AI performance to its own pre-AI baseline, quarter by quarter. This approach aims to close the widening measurement gap where AI is credited for productivity gains that cannot be traced to specific workflow changes or measurable outcomes. By scoring commits consistently, teams can see whether AI tools are increasing meaningful throughput or just generating more noise and rework. Combining commit-level analysis with delivery metrics helps organizations separate genuine engineering improvements from temporary efficiency illusions and avoid AI washing—where tools are blamed or praised without evidence rooted in their actual impact on the codebase.
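The own-baseline comparison can be sketched as follows. Navigara's actual per-commit ETV scoring is not described here, so the sketch simply takes a score per commit as input. The `quarterly_vs_baseline` helper, the `"YYYY-Qn"` label format, and the sample scores are hypothetical; they only illustrate comparing each post-AI quarter with the team's own pre-AI average rather than with industry benchmarks.

```python
from collections import defaultdict
from statistics import mean


def quarterly_vs_baseline(commit_scores: list[tuple[str, float]],
                          baseline_quarters: set[str]) -> dict[str, float]:
    """Ratio of each later quarter's summed commit score to the team's own
    average pre-AI quarter (1.0 = no change). Per-commit scores are taken as given."""
    totals: dict[str, float] = defaultdict(float)
    for quarter, score in commit_scores:
        totals[quarter] += score
    missing = set(baseline_quarters) - set(totals)
    if missing:
        raise ValueError(f"no commits for baseline quarters: {sorted(missing)}")
    baseline = mean(totals[q] for q in baseline_quarters)
    if baseline <= 0:
        raise ValueError("baseline total must be positive")
    # Labels like "2024-Q1" sort chronologically as plain strings.
    return {q: totals[q] / baseline for q in sorted(totals) if q not in baseline_quarters}


# Hypothetical (quarter, per-commit score) pairs; 2023 is the pre-AI baseline.
scores = [
    ("2023-Q3", 3.0), ("2023-Q3", 1.0),
    ("2023-Q4", 6.0),
    ("2024-Q1", 2.5), ("2024-Q1", 3.0),
    ("2024-Q2", 7.5),
]
print(quarterly_vs_baseline(scores, {"2023-Q3", "2023-Q4"}))
```

Because the comparison is against the team's own history, a quarter that produces many more commits but no higher total score shows up as flat, which is the "more noise, not more value" case the metric is meant to expose.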

Building the Foundations for Sustainable AI ROI

Both the DORA research and the ETV approach emphasize that strong engineering foundations and organizational systems are prerequisites for realizing AI ROI. AI will not fix broken processes; it tends to amplify existing patterns. Teams with robust internal platforms, clear workflows, automated testing, and continuous integration are better positioned to convert AI-assisted speed into stable, high-quality delivery. Teams lacking these basics may see localized efficiency gains that vanish in downstream chaos: more incidents, more rework, and new bottlenecks. To avoid wasted investment, organizations should first solidify core practices, then layer AI tools on top, using DORA metrics and commit-level analysis to track impact. Developer productivity measurement that covers both throughput and stability helps ensure that AI tooling delivers sustained business value rather than short-lived bursts of speed. It also gives finance and engineering leaders a shared, evidence-based language for evaluating AI-assisted software development.
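Because the DORA authors frame ROI as a high-uncertainty estimate, one way to give finance and engineering a shared number is to report a range built from pessimistic and optimistic assumptions, with governance costs counted explicitly. This is a minimal sketch: the `Scenario` fields, the classic (benefit - cost) / cost formula, and every input value are illustrative assumptions, not figures from DORA or Navigara.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """Per-developer, per-month assumptions for one end of the estimate."""
    hours_saved: float      # net of time spent verifying AI output
    cost_per_hour: float    # fully loaded engineering cost
    tooling_cost: float     # AI tool licences
    governance_cost: float  # workflow changes, upskilling, process governance


def roi(s: Scenario) -> float:
    """Classic ROI: (benefit - cost) / cost."""
    benefit = s.hours_saved * s.cost_per_hour
    cost = s.tooling_cost + s.governance_cost
    return (benefit - cost) / cost


def roi_range(pessimistic: Scenario, optimistic: Scenario) -> tuple[float, float]:
    """Report ROI as a (low, high) range instead of a single point estimate."""
    return roi(pessimistic), roi(optimistic)


# Placeholder inputs, not benchmarks: only the hours saved differ between scenarios.
low, high = roi_range(
    Scenario(hours_saved=1.5, cost_per_hour=100, tooling_cost=40, governance_cost=160),
    Scenario(hours_saved=10, cost_per_hour=100, tooling_cost=40, governance_cost=160),
)
print(f"ROI between {low:.0%} and {high:.0%}")  # → ROI between -25% and 400%
```

A range that spans negative to strongly positive is a prompt to gather better evidence, for example from the delivery and commit-level metrics above, rather than a verdict on the tools.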