Seven Hidden Gemini Live Models Surface Inside the Google App

A concealed model selector discovered in Google App version 17.18.22 has exposed seven previously unknown Gemini Live models. The menu, locked behind a server-side flag, lists eight entries, the existing Default plus seven new ones: A2A_Rev25_RC2, A2A_Rev25_RC2_Thinking, A2A_Rev23_P13n, A2A_Nitrogen_Rev23, A2A_Capybara, A2A_Capybara_Exp, and A2A_Native_Input. The A2A prefix most likely stands for “Audio-to-Audio,” Google’s term for models that process speech and audio directly rather than routing everything through text. Two entries, A2A_Rev25_RC2 and A2A_Rev25_RC2_Thinking, appeared overnight on May 8. Their RC2 suffix, which conventionally denotes a second release candidate, implies they are close to production readiness and primed for public demos. Because the model list is fetched from Google’s servers, the company can silently add or remove options without updating the app, giving it a flexible way to test, stage, or showcase different Gemini Live experiences as it ramps up to Google I/O 2026 and beyond.
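
To illustrate how a server-driven, flag-gated selector of this kind might work, here is a minimal Python sketch. The model names are taken from the list above. Everything else is an assumption made for illustration, including the JSON payload shape, the `selector_enabled` field, and both function names, since the Google App's real response format has not been published.

```python
import json

# Hypothetical server payload. Only the model names come from the report;
# the field names and structure are invented for this sketch.
SERVER_RESPONSE = json.dumps({
    "selector_enabled": True,
    "models": [
        "Default",
        "A2A_Rev25_RC2",
        "A2A_Rev25_RC2_Thinking",
        "A2A_Rev23_P13n",
        "A2A_Nitrogen_Rev23",
        "A2A_Capybara",
        "A2A_Capybara_Exp",
        "A2A_Native_Input",
    ],
})


def visible_models(raw_response: str) -> list[str]:
    """Return the selector entries, or only Default when the flag is off."""
    payload = json.loads(raw_response)
    if not payload.get("selector_enabled", False):
        return ["Default"]
    return list(payload.get("models", ["Default"]))


def hidden_models(models: list[str]) -> list[str]:
    """Drop the Default entry, leaving only the previously unknown models."""
    return [name for name in models if name != "Default"]


if __name__ == "__main__":
    for name in hidden_models(visible_models(SERVER_RESPONSE)):
        print(name)
```

Because the list lives in the response rather than in the app binary, changing the payload is enough to add, remove, or hide entries, which matches the behavior described above.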

Inside the Thinking Variant and Personalization-Focused Models

Among the hidden Gemini Live models, two stand out: the A2A_Rev25_RC2_Thinking variant and the A2A_Rev23_P13n personalization model. In testing, all models produced clearly different responses, but the thinking variant is designed specifically for more complex reasoning tasks, signaling Google’s push toward deeper, more deliberate reasoning in real-time voice conversations. The personalization-focused P13n model behaves differently from the default Gemini Live system: when asked for the current date and time, it first clarifies the user’s time zone instead of making assumptions. It also remembers personal details shared earlier and weaves them naturally into later replies, a capability the existing default model declines to use. Together, these variants show Google experimenting with both reasoning depth and individualized context, hinting at future Gemini Live experiences that can think more carefully while tailoring interactions to each user’s preferences and history.

Capybara, Nitrogen and a Multi-Model Gemini Live Future

Codenamed models such as A2A_Capybara, A2A_Capybara_Exp and A2A_Nitrogen_Rev23 underline how aggressively Google is diversifying Gemini Live. In tests, the Capybara model even identified itself as “Gemini 3.1 Pro,” rather than the Flash Live model that typically powers Gemini Live chats today. Some models could access live location data to deliver real-time weather updates, while others could not, suggesting that Google is trialling different capability bundles inside its voice stack. A2A_Native_Input suggests further experimentation around how audio is captured and processed. A multi-model setup of this kind would allow Google to route conversations through specialized systems (for example, one tuned for speed, another for reasoning, another for personalization) without changing the user-facing app. For developers and advanced users, that points toward a future where Gemini Live behaves less like a single assistant and more like a flexible routing layer across multiple, task-optimized models.
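
The routing-layer idea can be sketched in a few lines of Python. This is purely illustrative and not a description of Google's implementation: the model names come from the leaked selector, but the `Request` fields, the `pick_model` function, and the mapping of tasks to models are all assumptions.

```python
from dataclasses import dataclass

# Model names are from the hidden selector. Which model serves which task
# is a guess made for this sketch, not something the report confirms.
ROUTES = {
    "reasoning": "A2A_Rev25_RC2_Thinking",
    "personalization": "A2A_Rev23_P13n",
}
FALLBACK = "Default"  # fast, general-purpose path


@dataclass
class Request:
    """Hypothetical description of one conversational turn."""
    text: str
    needs_reasoning: bool = False
    uses_personal_context: bool = False


def pick_model(request: Request) -> str:
    """Choose a model for the turn; reasoning takes priority over personalization."""
    if request.needs_reasoning:
        return ROUTES["reasoning"]
    if request.uses_personal_context:
        return ROUTES["personalization"]
    return FALLBACK


if __name__ == "__main__":
    print(pick_model(Request("Walk me through this proof", needs_reasoning=True)))
    print(pick_model(Request("What did I say my dog's name was?", uses_personal_context=True)))
    print(pick_model(Request("Tell me a joke")))
```

The point of the design is that the caller only ever talks to `pick_model`, so the set of models behind it can change without any change to the user-facing side.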

Gemini Omni and the Push into Video Reasoning

Parallel to the hidden Gemini Live models, early testers on Reddit report access to Gemini Omni, described in-product as Google’s new video model and apparently built on the Veo foundation. Omni already handles sophisticated reasoning prompts in video, such as generating a professor writing a trigonometric proof on a chalkboard with striking realism. Yet it also reveals the familiar fragility of generative video: in a complex dinner scene, spaghetti appears out of nowhere on empty plates, and a notorious “Will Smith eating spaghetti” request is blocked by safety guardrails. The computational demands are heavy; one user hit 86% of their daily usage allowance after just two video generations on the Google AI Pro plan, or roughly 43% per clip if the two cost about the same. Omni underscores why Google is adding explicit usage limits to Gemini services and highlights how resource-intensive, reasoning-capable video generation will influence both pricing models and access policies for developers.

What This Means for Developers, Users and Google’s AI Roadmap

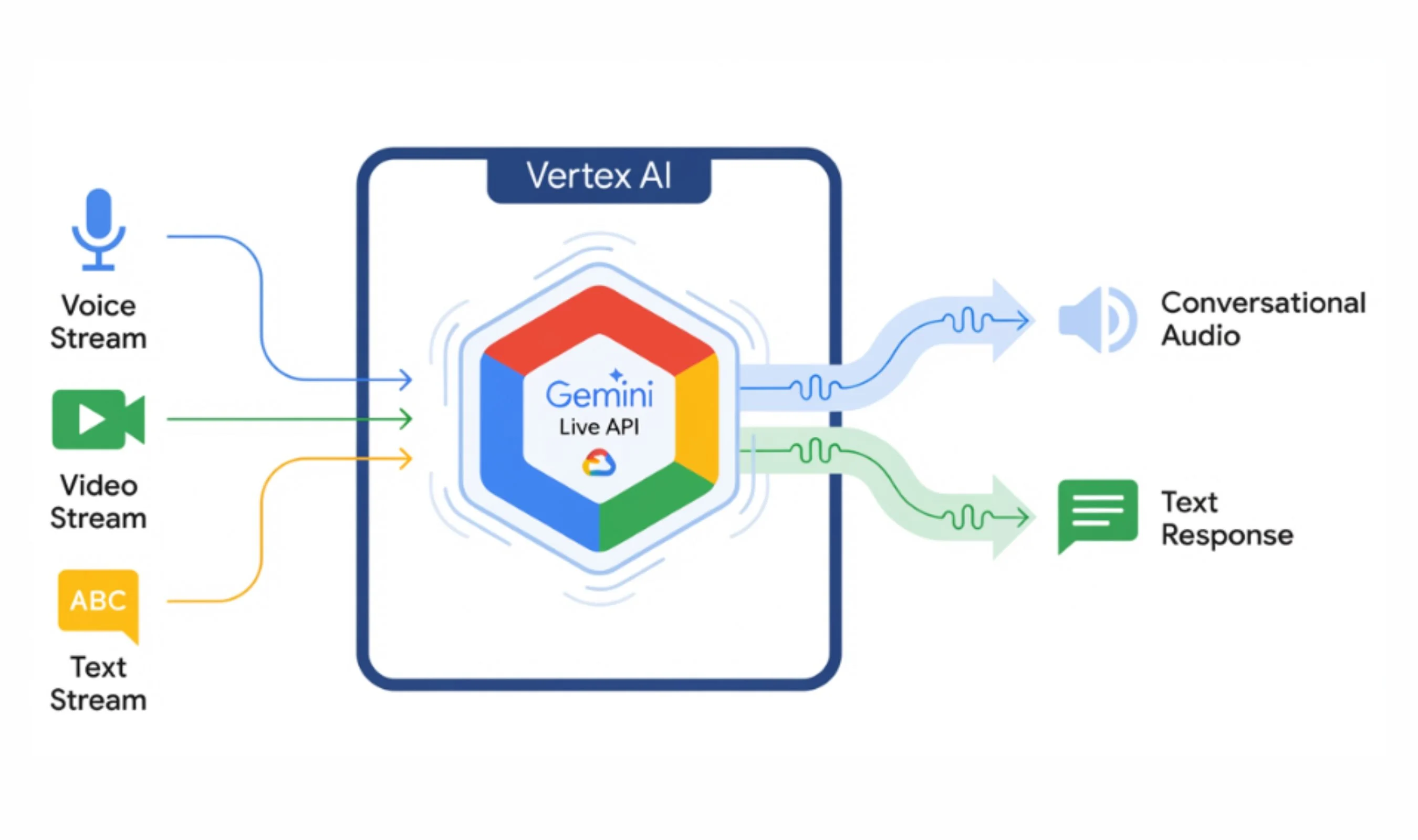

Taken together, the hidden Gemini Live roster, the thinking variant, Gemini Omni and the newly announced Gemini Intelligence paint a coherent roadmap. Google is building a layered, multimodal ecosystem where audio-to-audio models, video generation and task automation can interoperate in real time. For developers, this suggests future access to a menu of Gemini Live models exposed via APIs, allowing them to choose between fast, default responses, deeper reasoning options or personalization-heavy variants depending on their use case. Users can expect more adaptive assistants that remember context, handle live data such as weather, and eventually orchestrate actions across apps as Chrome’s auto-browse capability rolls out. The RC2 labels and server-driven model switching suggest Google’s infrastructure is already wired for live demos at Google I/O 2026, and for a gradual transition from monolithic assistant experiences to dynamic, model-routed real-time AI.