Firefox’s AI-Assisted Bug Surge: Result or Just Correlation?

Mozilla’s April security numbers for Firefox are eye-catching: 423 security bugs fixed, up from 76 in March and far above last year’s monthly average of 21.5. The organization credits Anthropic’s Mythos Preview model with identifying 271 of those issues in Firefox 150, and engineers report that AI-generated security reports have evolved from noisy “slop” into something far more actionable. They argue that AI analysis now complements fuzzing by surfacing elusive sandbox escapes and even stress-testing earlier hardening measures such as defenses against prototype pollution. Yet the data is more suggestive than conclusive. Mozilla has disclosed a curated sample of bugs, including a 20-year-old heap use-after-free reachable with no user interaction, but has not shown whether other, cheaper models or non-AI tools would have missed the same flaws. For AI bug detection, the headline gains are clear, but the counterfactual remains unresolved.
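
To put the headline figures in proportion, the short sketch below works through the arithmetic. It uses only the numbers quoted above; the variable names are illustrative, and it assumes, as the wording suggests, that the 271 Mythos-credited issues are a subset of the 423 April fixes.

```python
# Figures quoted in the article; variable names are illustrative only.
april_fixed = 423             # security bugs fixed in April
march_fixed = 76              # security bugs fixed in March
prior_year_monthly_avg = 21.5
mythos_credited = 271         # ASSUMPTION: counted within the 423 April fixes

vs_march = april_fixed / march_fixed
vs_average = april_fixed / prior_year_monthly_avg
mythos_share = mythos_credited / april_fixed

print(f"April vs March: {vs_march:.1f}x")                          # 5.6x
print(f"April vs last year's monthly average: {vs_average:.1f}x")  # 19.7x
print(f"Share credited to Mythos: {mythos_share:.0%}")             # 64%
```

These ratios describe the size of the jump, not its cause, which is the open question the rest of the article turns on.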

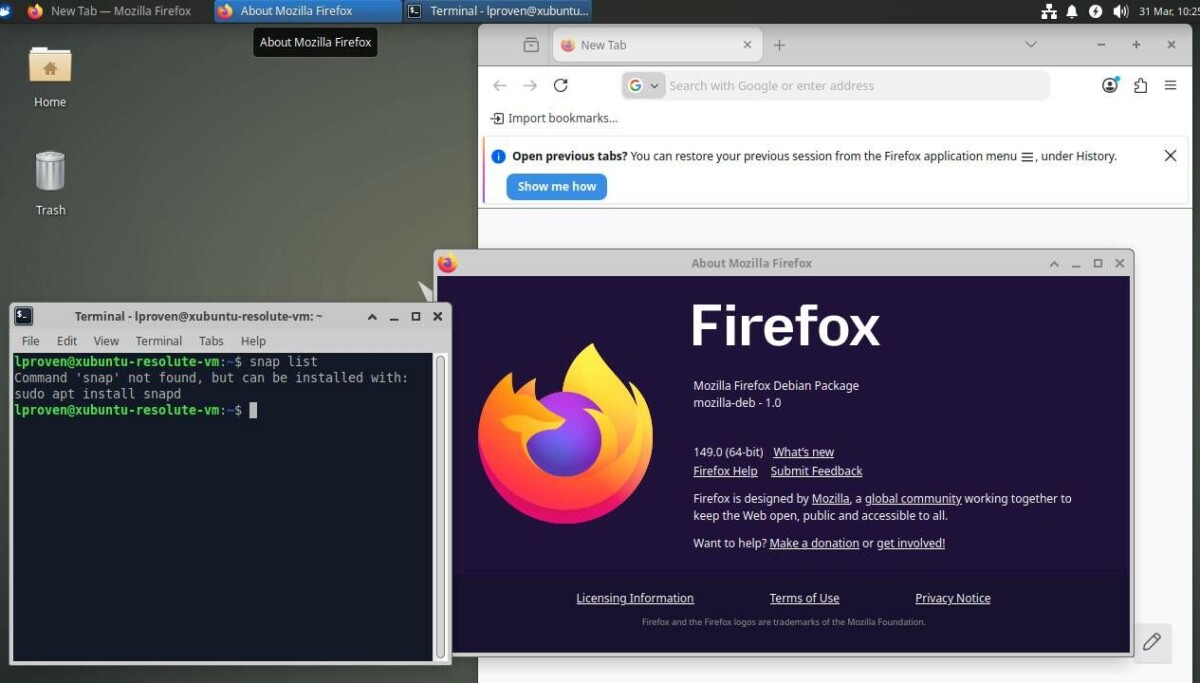

Mythos vs Opus and the Rising Role of AI Harnesses

Behind Mozilla’s results lies a quieter story: the importance of agentic harnesses, the middleware that orchestrates how models explore code, generate hypotheses, and report vulnerabilities. Firefox engineers emphasize that better steering has been as important as better models, with Mythos and its less-hyped sibling Opus 4.6 both feeding into the process. External researchers echo this, showing strong bug-hunting performance from off-the-shelf models like Opus 4.6, which is said to cost roughly one-fifth as much as Mythos. Security consultant Davi Ottenheimer argues that Mozilla’s framing confuses observation with measurement: stating “Mythos found 271 bugs” does not prove other models or harnesses could not have done the same. His own tests, wiring Anthropic’s smaller models into a custom harness with an auditing skill, surfaced eight findings in two minutes, two of which overlapped Mythos results. The emerging lesson is that AI security tools succeed or fail as full systems, not as standalone models.
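
The difference between observation and measurement can be made concrete by comparing what two harnesses find on the same code. The sketch below is a minimal illustration, not Ottenheimer’s method: the function name and the finding identifiers are hypothetical placeholders, and only the counts (eight findings, two overlapping) come from the text above.

```python
def overlap_report(baseline, candidate):
    """Compare two sets of finding identifiers from different harnesses."""
    shared = baseline & candidate
    return {
        "shared": len(shared),
        "candidate_only": len(candidate - baseline),
        "baseline_only": len(baseline - candidate),
        # Fraction of the candidate's findings that the baseline also reported.
        "candidate_overlap_rate": len(shared) / len(candidate) if candidate else 0.0,
    }


# Placeholder identifiers, NOT real bug IDs: eight candidate findings,
# two of which also appear in the (truncated, illustrative) baseline set.
candidate_findings = {f"finding-{i}" for i in range(8)}
baseline_findings = {"finding-0", "finding-1", "baseline-only-a", "baseline-only-b"}

report = overlap_report(baseline_findings, candidate_findings)
print(report)
```

A real comparison would also need both tools to run against the same revision of the code and a shared rule for deciding when two reports describe the same bug; without those controls, the overlap rate is itself only an observation.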

Browser Vulnerabilities, AI, and the User in the Loop

The Firefox case shows how AI bug detection can broaden coverage of browser vulnerabilities. But another recent incident involving Anthropic’s Claude Code shows how quickly such gains can be undermined when the user experience and the security assumptions behind it diverge. Security firm Adversa AI demonstrated a one-click remote code execution scenario using Model Context Protocol (MCP) servers in multiple AI coding CLIs. By hiding malicious configuration in two JSON files within a cloned repository, an attacker can ensure that the moment a developer presses Enter on a generic “Yes, I trust this folder” dialog, an unsandboxed Node.js process launches with full user privileges. Anthropic’s view is that this trust decision pushes the problem outside its threat model; researchers counter that the prompt provides no meaningful MCP-specific warning. The result is a gap between technically documented risk and what a reasonable user thinks they are consenting to.

One-Click Vulnerabilities Expose Limits of AI Security Tools

Adversa AI notes that the one-click issue in Claude Code is the third CVE in six months from the same root cause: project-scoped settings used as an injection vector. Each patch addressed a specific flaw, but the underlying class of misconfiguration remains. Earlier versions of the tool presented an explicit warning that .mcp.json could execute code and offered a safer option to proceed with MCP disabled; that language disappeared in v2.1, replaced by a softer, default-to-trust dialog. For interactive users, this weakens informed consent. For non-interactive environments like CI/CD pipelines, the situation is even starker: the tool may be invoked via SDK with no prompt at all, creating a zero-click pathway for compromise. These problems are not caused by AI models hallucinating exploits. They stem from surrounding architecture and UX choices that normalize risky defaults, which is why AI security tools must be judged on their entire lifecycle design.
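
One way to restore some of the lost visibility is to inspect a cloned repository before trusting it. The sketch below is a hedged illustration rather than a vetted defense: the function name is hypothetical, and it assumes that .mcp.json declares servers under a top-level "mcpServers" object with "command" and "args" fields. It does not cover the second JSON file mentioned earlier, which the article does not name.

```python
import json
from pathlib import Path


def list_mcp_commands(repo_root):
    """Return (config path, server name, command, args) for every MCP server
    declared in a .mcp.json file anywhere under repo_root."""
    declared = []
    for config_path in sorted(Path(repo_root).rglob(".mcp.json")):
        try:
            config = json.loads(config_path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            # Flag unreadable or malformed files instead of skipping them silently.
            declared.append((str(config_path), "<unparseable>", "", []))
            continue
        # ASSUMPTION: servers are listed under a top-level "mcpServers" object.
        servers = config.get("mcpServers", {}) if isinstance(config, dict) else {}
        if not isinstance(servers, dict):
            continue
        for name, spec in servers.items():
            if not isinstance(spec, dict):
                continue
            raw_args = spec.get("args", [])
            args = [str(a) for a in raw_args] if isinstance(raw_args, list) else []
            declared.append((str(config_path), name, str(spec.get("command", "")), args))
    return declared
```

Calling `list_mcp_commands("path/to/clone")` and printing each tuple shows which commands a repository would ask the tool to launch. In a CI/CD job, where no prompt appears, a non-empty result could be treated as a reason to fail the build until someone has reviewed it.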

Does Better Security Come from Smarter Models or Smarter Systems?

Taken together, Firefox’s bug-fixing surge and the Claude Code one-click vulnerability suggest that security outcomes depend less on raw AI model capability and more on how those models are embedded into tools, workflows, and user decisions. On one side, carefully harnessed models can surface decades-old browser vulnerabilities that traditional fuzzers might miss and stress-test earlier hardening measures. On the other, the same ecosystem can expose developers to remote code execution through a single ambiguous prompt or silent configuration file. For organizations evaluating AI security tools, the key questions are shifting: not just “how powerful is the model?” but “how transparent is the harness?”, “how are permissions scoped and surfaced?”, and “what happens at the human decision points?” Advanced models clearly can accelerate bug discovery, yet both the decisive gains in Firefox security and the failures in AI development tooling appear to hinge on system architecture and human-centered design.