Production Data Shows GPT-5.5 Pricing Spike Over GPT-5.4

OpenRouter’s April 2026 usage analysis has turned GPT-5.5 pricing from a theoretical concern into a concrete budgeting issue. Across live workloads where users migrated from GPT-5.4, effective costs rose between 49 and 92 percent, depending on the prompt-length band. For enterprise buyers, the crucial point is that production AI expenses are shaped more by how models behave under real traffic than by catalog tariffs alone. OpenAI frames GPT-5.5 as more token efficient than GPT-5.4, with matching per-token latency. That claim has to be weighed against list rates that are double those of its predecessor: GPT-5.5 is priced at USD 5 (approx. RM23) per million input tokens and USD 30 (approx. RM138) per million output tokens, compared with GPT-5.4’s USD 2.50 (approx. RM11.50) and USD 15 (approx. RM69) respectively. OpenRouter’s findings show that, once workloads settle, GPT-5.5 often produces significantly higher monthly bills than initial forecasts suggested.
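
To make the list-rate gap concrete, the sketch below estimates how short a GPT-5.5 completion would have to be for a single request to cost the same as it did on GPT-5.4. It uses only the list rates quoted above. The token counts are hypothetical, and the calculation assumes no caching or volume discounts, so it will not reproduce the effective costs OpenRouter measured.

```python
from typing import Optional

# List rates in USD per million tokens, as quoted above.
GPT_54 = {"input": 2.50, "output": 15.00}
GPT_55 = {"input": 5.00, "output": 30.00}


def request_cost(rates: dict, input_tokens: int, output_tokens: int) -> float:
    """List-price cost of one request in USD (no caching or discounts assumed)."""
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000


def break_even_output_tokens(input_tokens: int, old_output_tokens: int) -> Optional[float]:
    """Completion length at which GPT-5.5 costs the same as GPT-5.4 for one request.

    Returns None when the GPT-5.5 input charge alone already exceeds the
    whole GPT-5.4 request cost, so no completion length can break even.
    """
    old_cost = request_cost(GPT_54, input_tokens, old_output_tokens)
    new_input_cost = request_cost(GPT_55, input_tokens, 0)
    remaining = old_cost - new_input_cost
    if remaining < 0:
        return None
    return remaining * 1_000_000 / GPT_55["output"]


# Hypothetical short request: 1,500 prompt tokens, 400 completion tokens on GPT-5.4.
short_case = break_even_output_tokens(1_500, 400)
# Hypothetical long request: 20,000 prompt tokens, 500 completion tokens on GPT-5.4.
long_case = break_even_output_tokens(20_000, 500)
print(short_case, long_case)
```

Under these assumptions, the 400-token answer would have to shrink to roughly 75 tokens to break even, and the long-prompt request cannot break even at all, because input tokens are also billed at double the rate.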

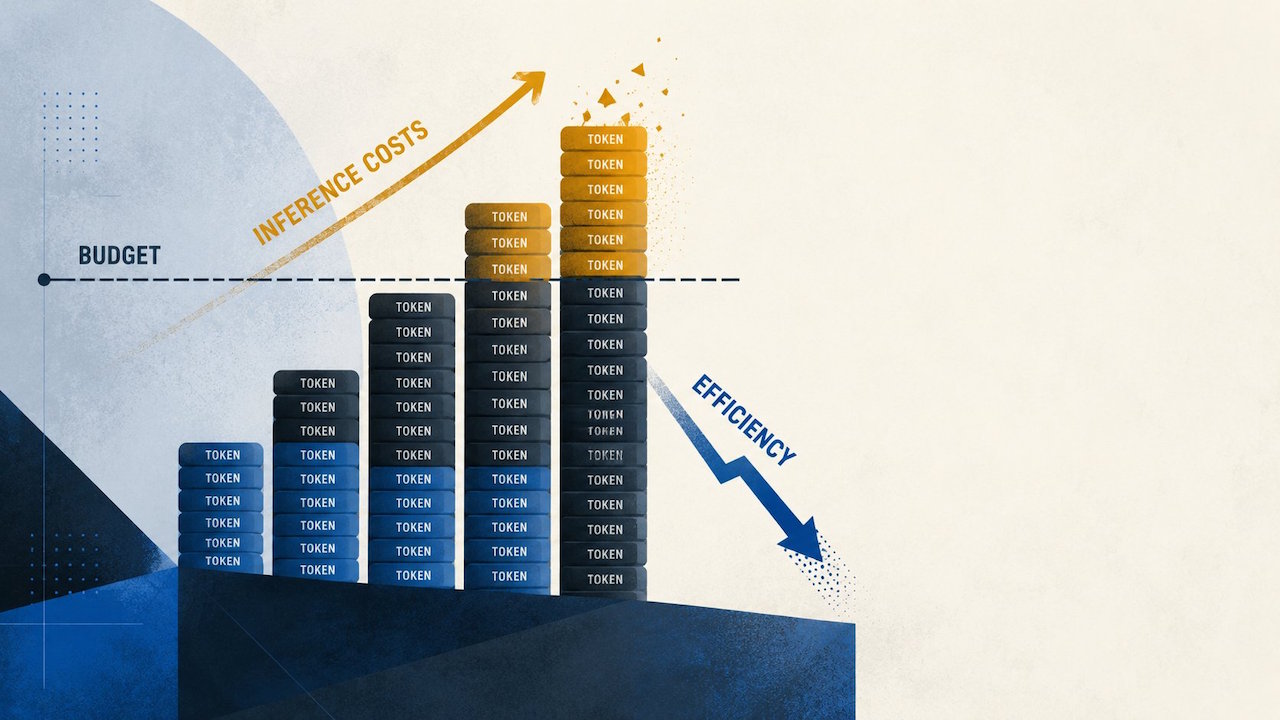

Why Shorter Answers Don’t Automatically Lower Production AI Expenses

On the surface, GPT-5.5 appears more efficient for very long prompts. For inputs above 10,000 tokens, OpenRouter reports that GPT-5.5 produced 19 to 34 percent fewer completion tokens than GPT-5.4. However, in the 2,000 to 10,000 token band, completions were 52 percent longer, and even prompts under 2,000 tokens saw a 7 percent increase in median completion length. This asymmetry is exactly where a comparison against GPT-5.4 can mislead if it relies solely on benchmark runs. The cost impact is clear in OpenRouter’s billing data, expressed as effective cost per million tokens. For prompts under 2,000 tokens, the average rose from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43), an increase of about 92 percent. In the 50,000 to 128,000 token band, it rose from USD 0.74 (approx. RM3.40) to USD 1.10 (approx. RM5), an increase of about 49 percent. Shorter answers at the extreme high end do not offset the higher GPT-5.5 pricing when most user traffic lives in short and mid-range interactions.
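
A back-of-the-envelope way to see why shorter completions do not automatically mean lower bills is to multiply the change in completion length by the change in the output rate. The sketch below does this for the bands reported above. It is a simplification: it looks at output spend only, uses list rates rather than effective billed rates, and treats the reported length changes (including the median figure) as if they were averages.

```python
# Output list rates in USD per million tokens, as quoted earlier in the article.
OUTPUT_RATE_54 = 15.00
OUTPUT_RATE_55 = 30.00

# Reported change in GPT-5.5 completion length relative to GPT-5.4, by prompt band.
# Negative values mean shorter completions.
length_change = {
    "under 2,000 tokens": 0.07,
    "2,000 to 10,000 tokens": 0.52,
    "above 10,000 tokens (34% fewer)": -0.34,
    "above 10,000 tokens (19% fewer)": -0.19,
}


def output_spend_multiplier(change: float) -> float:
    """Ratio of GPT-5.5 to GPT-5.4 output spend for the same set of prompts."""
    return (OUTPUT_RATE_55 / OUTPUT_RATE_54) * (1 + change)


multipliers = {band: output_spend_multiplier(c) for band, c in length_change.items()}
for band, multiplier in multipliers.items():
    print(f"{band}: {multiplier:.2f}x")
```

Even in the most favorable case, 34 percent fewer completion tokens, output spend still comes out at about 1.32 times the GPT-5.4 level under these assumptions.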

How GPT-5.5 Completion Behavior Inflates Enterprise AI Model Costs

Many production systems, including retrieval assistants, coding copilots, workflow agents, and customer-support bots, rarely hit the maximum context windows that vendors emphasize in marketing. Instead, they cycle through short and mid-length prompts, tool calls, and iterative retries. In these zones, GPT-5.5’s tendency to generate longer completions becomes a recurring budget penalty: each follow-up answer, tool retry, or retrieval reformulation adds tokens that are billed at GPT-5.5’s higher rates. OpenRouter’s cost curve shows effective cost per million tokens for 2,000 to 10,000 token prompts rising from USD 2.25 (approx. RM10.40) to USD 3.81 (approx. RM17.50), or about 69 percent, and for 25,000 to 50,000 token prompts from USD 1.02 (approx. RM4.70) to USD 1.65 (approx. RM7.60), or about 62 percent. The implication is that OpenAI’s efficiency claims may hold only in niche long-context scenarios. For most enterprise traffic mixes, GPT-5.5 can become a structurally more expensive choice, even if raw model quality appears higher in controlled benchmarks.
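
One way to translate the per-band figures into a budget estimate is to weight them by a traffic mix. The sketch below does this with the four bands quoted in this article. The traffic shares are invented for illustration, the 10,000 to 25,000 token band is left out because no figure is quoted for it, and the calculation assumes each band's share of billed tokens stays the same after migration, which may not hold when completion lengths change.

```python
# Effective cost in USD per million tokens (GPT-5.4, GPT-5.5), as reported in the article.
EFFECTIVE_COST = {
    "under 2,000": (4.89, 9.37),
    "2,000 to 10,000": (2.25, 3.81),
    "25,000 to 50,000": (1.02, 1.65),
    "50,000 to 128,000": (0.74, 1.10),
}

# Hypothetical share of billed tokens per band; replace with your own traffic data.
TRAFFIC_SHARE = {
    "under 2,000": 0.50,
    "2,000 to 10,000": 0.30,
    "25,000 to 50,000": 0.15,
    "50,000 to 128,000": 0.05,
}


def blended_cost(model_index: int) -> float:
    """Traffic-weighted cost per million tokens (0 = GPT-5.4, 1 = GPT-5.5)."""
    total_share = sum(TRAFFIC_SHARE.values())
    weighted = sum(
        TRAFFIC_SHARE[band] * EFFECTIVE_COST[band][model_index] for band in TRAFFIC_SHARE
    )
    return weighted / total_share


old_blended = blended_cost(0)
new_blended = blended_cost(1)
increase_pct = (new_blended - old_blended) / old_blended * 100
print(f"GPT-5.4: ${old_blended:.2f}  GPT-5.5: ${new_blended:.2f}  increase: {increase_pct:.1f}%")
```

With this invented mix, which leans heavily on short prompts, the blended cost per million tokens comes out about 85 percent higher on GPT-5.5. A mix dominated by long prompts would land closer to the lower end of the reported range.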

Recalculating ROI: When to Upgrade from GPT-5.4 to GPT-5.5

For platform teams, the key lesson is that ROI cannot be decided on list prices or benchmark efficiency alone. Finance may approve GPT-5.5 based on headline rates, but engineers and product owners inherit the ongoing token bill as traffic scales. Given the 49 to 92 percent cost increase seen in OpenRouter’s data, buyers need to segment workloads carefully rather than treating GPT-5.5 as a drop-in replacement for GPT-5.4. A pragmatic strategy is to route to GPT-5.5 only those workloads where its long-context efficiency really matters, or premium user tiers where higher production AI expenses are justified by differentiated value. For everything else, GPT-5.4 or rival models such as Claude Opus 4.7, which reportedly undercuts GPT-5.5 on output pricing in some comparisons, may deliver a better cost-to-performance ratio. Ultimately, organizations should run narrowly scoped tests on their own traffic before committing to broad GPT-5.5 adoption.
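
The routing strategy described above can be sketched as a small selection function. This is a minimal illustration, not a production router: the model labels, the `Request` type, and the `choose_model` helper are hypothetical, and the 10,000-token threshold simply mirrors the band where OpenRouter reported shorter GPT-5.5 completions.

```python
from dataclasses import dataclass

# Hypothetical model labels; substitute the identifiers your provider uses.
PREMIUM_MODEL = "gpt-5.5"
DEFAULT_MODEL = "gpt-5.4"

# Prompt-token threshold above which shorter GPT-5.5 completions were reported.
LONG_CONTEXT_THRESHOLD = 10_000


@dataclass
class Request:
    prompt_tokens: int
    premium_tier: bool = False


def choose_model(request: Request) -> str:
    """Send long-context or premium-tier requests to GPT-5.5, everything else to GPT-5.4."""
    if request.premium_tier or request.prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return PREMIUM_MODEL
    return DEFAULT_MODEL


print(choose_model(Request(prompt_tokens=1_200)))
print(choose_model(Request(prompt_tokens=40_000)))
print(choose_model(Request(prompt_tokens=800, premium_tier=True)))
```

Keeping the threshold and tier rule in one place makes it straightforward to adjust them after testing on real traffic, since the right cut-off depends on each organization's own cost and quality measurements.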