AI Coding Tools Decouple Output From Understanding

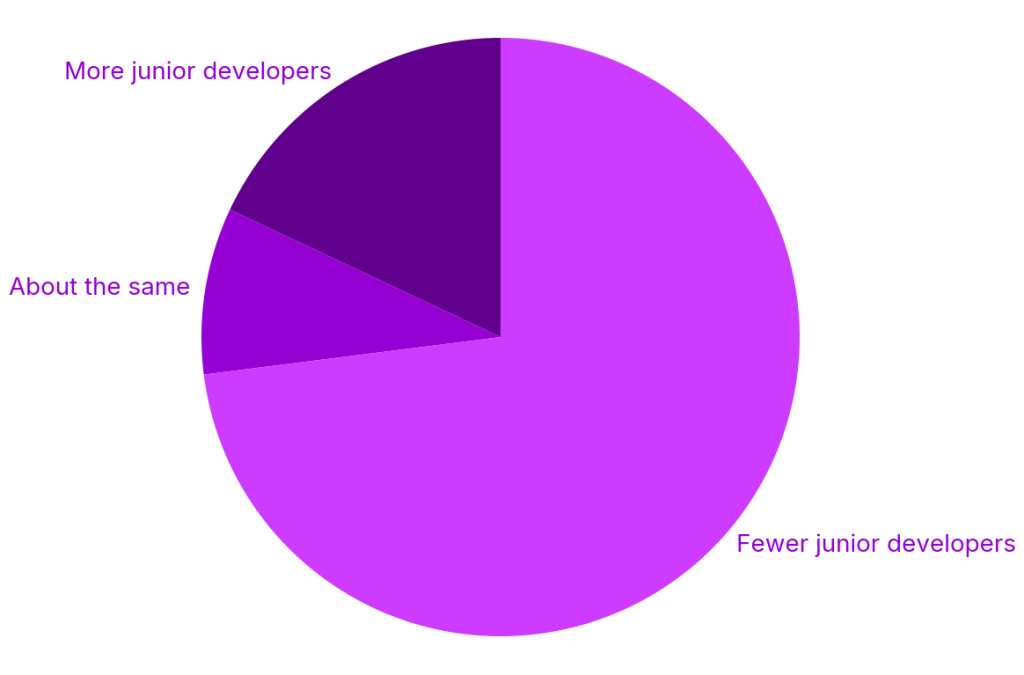

AI coding tools have transformed how software gets written, but not how it gets understood. Industry data shows juniors completing tasks up to 55% faster with AI assistance, while 73% of organizations report reducing junior hiring. As tools like Claude Code spread rapidly through teams, the “seniors with AI” model, in which experienced engineers working with AI replace entire entry-level cohorts, is becoming an emerging default. Yet this productivity hides a structural problem: AI accelerates code generation but not comprehension. Senior developers can draw on years of architectural context to validate AI-generated code. Juniors often cannot explain why their code works or fails, because they did not truly write it. This decoupling becomes dangerous when subtle issues, such as timing bugs that appear only under rare conditions, slip through tests and reviews. The code looks careful, passes checks, and ships quickly, but the person responsible for it lacks the mental model needed to debug or maintain it.
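To make the “passes checks, fails in production” pattern concrete, here is a minimal Python sketch of a classic check-then-act race. The `Account` class, its `withdraw_unsafe` and `withdraw_safe` methods, the `preempt` hook, and the `race` helper are all hypothetical, written only for illustration. A sequential unit test of the unsafe method passes, yet two threads can both pass the balance check before either one writes. The sketch passes a `threading.Barrier` as the `preempt` hook to force that unlucky interleaving deterministically; in real code it would occur only occasionally, depending on how the scheduler happens to switch threads.

```python
import threading
from typing import Callable


class Account:
    """Hypothetical account type used only to illustrate a check-then-act race."""

    def __init__(self, balance: int) -> None:
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int, preempt: Callable[[], object] = lambda: None) -> bool:
        # Check-then-act with no synchronization. `preempt` marks the point where
        # the scheduler could switch threads; in production this point is invisible.
        if self.balance >= amount:
            preempt()
            self.balance -= amount
            return True
        return False

    def withdraw_safe(self, amount: int) -> bool:
        # Holding the lock makes the check and the update a single atomic step.
        with self._lock:
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def race(withdraw: Callable[[], bool], threads: int = 2) -> list[bool]:
    """Run `withdraw` on several threads at once and collect each thread's result."""
    results: list[bool] = [False] * threads

    def worker(i: int) -> None:
        results[i] = withdraw()

    workers = [threading.Thread(target=worker, args=(i,)) for i in range(threads)]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results


if __name__ == "__main__":
    # A sequential unit test passes: the second withdrawal is correctly refused.
    acct = Account(100)
    assert acct.withdraw_unsafe(100) and not acct.withdraw_unsafe(100)

    # Force the unlucky interleaving: both threads pass the check before either writes,
    # so both withdrawals are approved against a balance that covers only one.
    acct = Account(100)
    gate = threading.Barrier(2)
    print(race(lambda: acct.withdraw_unsafe(100, preempt=gate.wait)), acct.balance)

    # The locked version allows exactly one withdrawal under the same concurrent load.
    acct = Account(100)
    print(race(lambda: acct.withdraw_safe(100)), acct.balance)
```

The fix is small (hold one lock across both the check and the update), but seeing that the two steps must be atomic is exactly the reasoning that a passing test suite never forces anyone to do.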

The New Code Review Challenge: Fast, Clean, and Misunderstood

Engineering leaders are increasingly meeting a new archetype in code review: the “expert beginner” who ships clean, passing code but cannot explain its behavior. Unlike the ego-driven stagnation described in earlier critiques using this term, today's version is often conscientious and highly productive, just deeply dependent on AI coding tools. The gap surfaces when subtle bugs emerge: juniors struggle to reason about concurrency, data races, or edge cases that the AI papered over. Their readiness to adopt AI is a strength but also a liability, because they lack the experience to critically evaluate machine-generated output. Leaders report more review conversations in which developers can describe in natural language what the code does, yet cannot walk through how or why it does it. Reviewers must therefore spend more time probing understanding rather than just scanning for style and correctness, stretching already limited senior bandwidth.

Developers Fear Cognitive Dependence and Skill Erosion

Beyond organizational metrics, many developers are uneasy about what heavy AI use is doing to their own skills. On developer forums and in anonymous interviews, practitioners describe relying on AI coding tools as “brain-rotting”: a sense that their problem-solving muscles are atrophying. Some say they now reach for AI even on problems they once solved instinctively, and worry that their baseline competence is slipping. One UX-focused engineer described being directed to use AI agents for broad, sweeping changes across a large codebase, alongside “hundreds of other programmers” doing the same. The result, they argue, is a growing tangle of tech debt that may be impossible to unwind if models become harder to use or more restricted. Many also report that AI often makes work slower, not faster, because they must painstakingly inspect, run, and patch its output. The hidden cost is cognitive: less deliberate practice, less architectural thinking, and an uneasy dependence on a tool they do not fully control.

AI Debugging Limitations Exposed in Long-Running and Complex Tasks

Recent research highlights how AI agents struggle when tasks move from quick snippets to extended workflows. Microsoft researchers evaluated large language models on DELEGATE-52, a benchmark that simulates multistep professional work across 52 domains, including programming. Even top-tier models introduced substantial errors in long-running document edits, losing on average a quarter of the content over 20 delegated interactions; averaged across all models evaluated, degradation was around half. Programming fared better than many other domains, yet the message is clear: current AI is brittle when asked to manage complex, stateful work autonomously. Catastrophic corruption in some scenarios underscores why engineering leaders hesitate to hand refactors or broad architectural changes to agents. In practice, developers must closely supervise AI-driven transformations, catching subtle regressions, missing content, and semantic drift. These limitations mean humans still carry ultimate accountability for correctness, but if their own skills erode, the safety net frays exactly when it is most needed.
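As a rough illustration of what supervising a delegated edit can involve, the Python sketch below uses the standard library's `difflib` to measure how much of an original document survives an edit and to list the lines that were dropped or rewritten. The function names `retained_fraction`, `dropped_lines`, and `review_edit`, and the 0.95 threshold, are hypothetical choices, not part of DELEGATE-52 or any existing tool. A line-level diff like this catches missing content, but it cannot detect semantic drift in lines that were kept or reworded; that still needs a human reader.

```python
import difflib


def retained_fraction(original: str, edited: str) -> float:
    """Fraction of the original's lines that still appear, in order, in the edited text."""
    orig_lines = original.splitlines()
    if not orig_lines:
        return 1.0
    matcher = difflib.SequenceMatcher(a=orig_lines, b=edited.splitlines(), autojunk=False)
    # get_matching_blocks() ends with a zero-size sentinel, so summing sizes is safe.
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return kept / len(orig_lines)


def dropped_lines(original: str, edited: str) -> list[str]:
    """Original lines that the edit deleted or replaced."""
    orig_lines = original.splitlines()
    matcher = difflib.SequenceMatcher(a=orig_lines, b=edited.splitlines(), autojunk=False)
    dropped: list[str] = []
    for tag, i1, i2, _j1, _j2 in matcher.get_opcodes():
        if tag in ("delete", "replace"):
            dropped.extend(orig_lines[i1:i2])
    return dropped


def review_edit(original: str, edited: str, min_retained: float = 0.95) -> bool:
    """Hypothetical gate: return False (flag for human review) if too much content vanished."""
    fraction = retained_fraction(original, edited)
    if fraction < min_retained:
        print(f"Only {fraction:.0%} of original lines retained; dropped: {dropped_lines(original, edited)}")
        return False
    return True


if __name__ == "__main__":
    before = "def load():\n    validate()\n    parse()\n    return data"
    after = "def load():\n    parse()\n    return data"
    # The edit silently removed the validation step, so the gate flags it.
    print(review_edit(before, after))
```

A check like this is cheap enough to run after every delegated step, which matters because the losses described above accumulate over many interactions rather than appearing all at once.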

How Engineering Leaders Are Rebalancing AI and Hands-On Learning

With no industry consensus on best practices, engineering leaders are improvising guardrails to balance AI efficiency with real skill development. Some teams are redefining code review around explanation: contributors must articulate the reasoning behind key decisions, regardless of whether AI wrote the first draft. Others require developers to turn off AI for certain tasks, such as critical-path modules, tricky concurrency, or initial prototypes, so that problem-solving instincts stay sharp. Leaders are also rethinking the talent pipeline. The “seniors with AI” model may deliver short-term output, but it risks a gap in mid-level expertise later if fewer juniors learn the craft deeply. At the same time, seniors who avoid AI altogether may lose touch with the patterns emerging in AI-shaped codebases. Forward-looking managers encourage engineers at every level to use AI consciously, as a collaborator rather than a crutch. That means pairing AI suggestions with deliberate practice, post-mortems focused on understanding, and structured opportunities to debug without automated help.