AI Cloud Infrastructure Enters Its Independence Phase

AI cloud infrastructure is shifting from single-provider reliance to a patchwork of partnerships and custom builds. As models grow more capable and usage spikes, companies are discovering that traditional hyperscalers alone cannot guarantee the right mix of capacity, control, and compliance. This is driving a new wave of multi-cloud strategies and experiments with alternative cloud providers, from terrestrial supercomputers to orbital compute concepts. At the heart of this evolution is a desire for compute sovereignty: the ability to decide where and how AI workloads run without being locked into one vendor’s roadmap or pricing. AI firms now treat infrastructure choices as product and governance decisions, not just backend engineering. Anthropic and DeepL exemplify this transition, taking different paths to reduce vendor lock-in risk while still tapping the scale and convenience of established platforms.

Anthropic, Colossus, and the Rise of Orbital Compute Ambitions

Anthropic’s partnership with SpaceX’s Colossus 1 supercomputer highlights how AI providers are reaching beyond classic clouds to secure capacity. The company is taking the full capacity of Colossus 1, a facility with more than 220,000 Nvidia GPUs that adds over 300 megawatts of new compute within a month, to relieve the bottlenecks that left Claude developers facing tight rate limits. Anthropic immediately doubled five-hour rate limits on Claude Code for paid tiers and raised API limits for Claude Opus, directly tying infrastructure expansion to user experience. The agreement also includes access to Colossus 1 for Claude Pro and Claude Max subscribers and an expressed interest in developing multiple gigawatts of orbital AI compute capacity with SpaceX. While timelines remain tentative, the move signals a willingness to diversify away from exclusive dependence on traditional hyperscalers and to treat alternative cloud providers as strategic pillars, not backup options.

DeepL’s AWS Pivot and the Politics of AI Data Residency

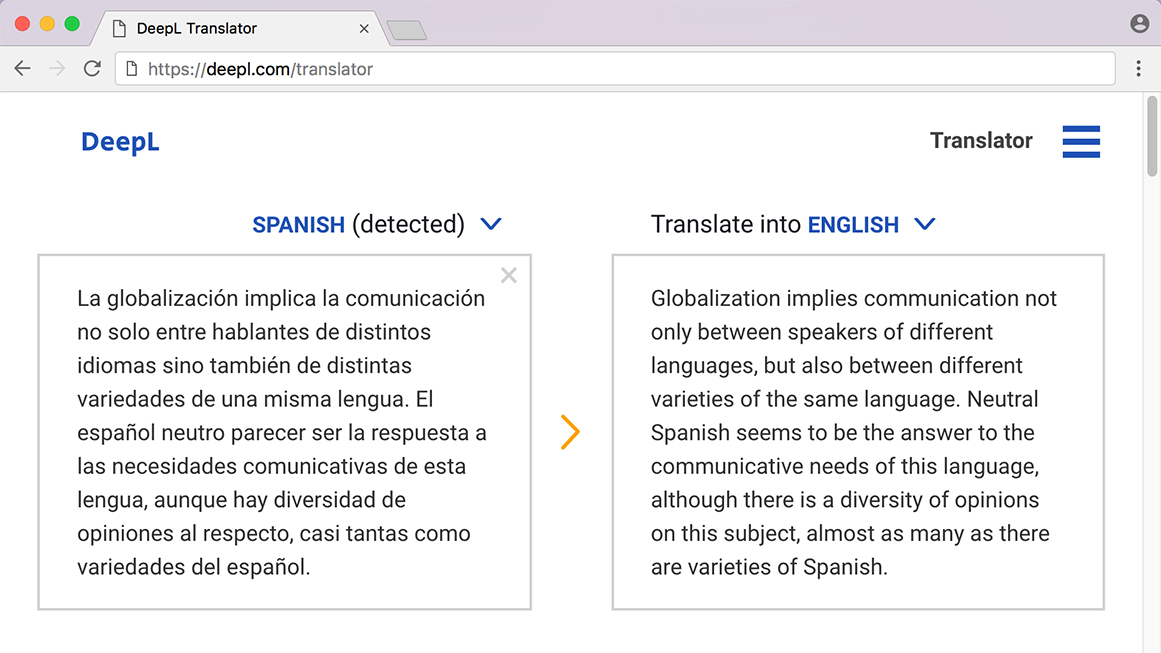

DeepL’s decision to add AWS as a sub-processor marked a significant shift in its infrastructure posture and ignited debate over AI data residency. By moving away from a Europe-only default processing setup and expanding through AWS, DeepL aims to cut latency and support high-availability translation workloads across more regions. Existing AWS customers gain the convenience of deploying DeepL’s Language AI through familiar procurement, billing, and identity systems, which speeds rollout for large enterprises. Yet this convenience comes with perception challenges. Industry voices warn that relying on a major U.S. hyperscaler could erode confidence in local AI translation champions and deepen concerns over compute sovereignty and vendor lock-in. DeepL stresses that paid customer text remains protected and is not used for model training without consent. Buyers must now weigh infrastructure dependence, regulatory expectations around AI data residency, and product quality when choosing their translation stack.

Balancing Vendor Lock-In Risks with Performance and Compliance

Both Anthropic and DeepL illustrate how AI companies are juggling performance demands with structural independence. Anthropic spreads its workloads across Amazon, Google/Broadcom, and now SpaceX’s Colossus, using multi-cloud and alternative compute strategies to keep pace with surging Claude usage while retaining negotiation leverage. This reduces single-vendor exposure and opens paths to specialized hardware and experimental orbital capacity. DeepL’s AWS expansion follows a different route but faces similar trade-offs. The move improves latency and procurement flexibility for global enterprises but raises questions about how much foreign infrastructure dependence buyers will tolerate. In both cases, cloud choices are no longer invisible infrastructure decisions: they shape service limits, legal risk, and strategic autonomy. As competition intensifies and regulations tighten, AI leaders are likely to deepen multi-cloud deployments, negotiate stricter data-control terms, and explore new compute options that promise more predictable pricing, location control, and governance.