From "Did You Use AI?" to "How Did You Use It?"

The question about AI in academic writing is shifting from a simple yes or no to a closer look at process. Until recently, AI checker tools scanned finished essays, trying to label a text as human-written or machine-generated and sometimes estimating an overall “AI usage percentage.” These approaches had major drawbacks: they could be unreliable, sometimes flagging genuine student writing as machine-generated, and they revealed nothing about how a student actually worked with AI while drafting and revising. Educators, meanwhile, care about learning, originality and revision, not just whether a chatbot was opened. This has led to a new generation of education AI assistants that look beyond the final file. Instead of punishing any AI involvement, they examine quality, structure, clarity and the evolution of ideas across drafts, helping teachers see whether a student is thoughtfully shaping their work or simply pasting in unedited AI output.

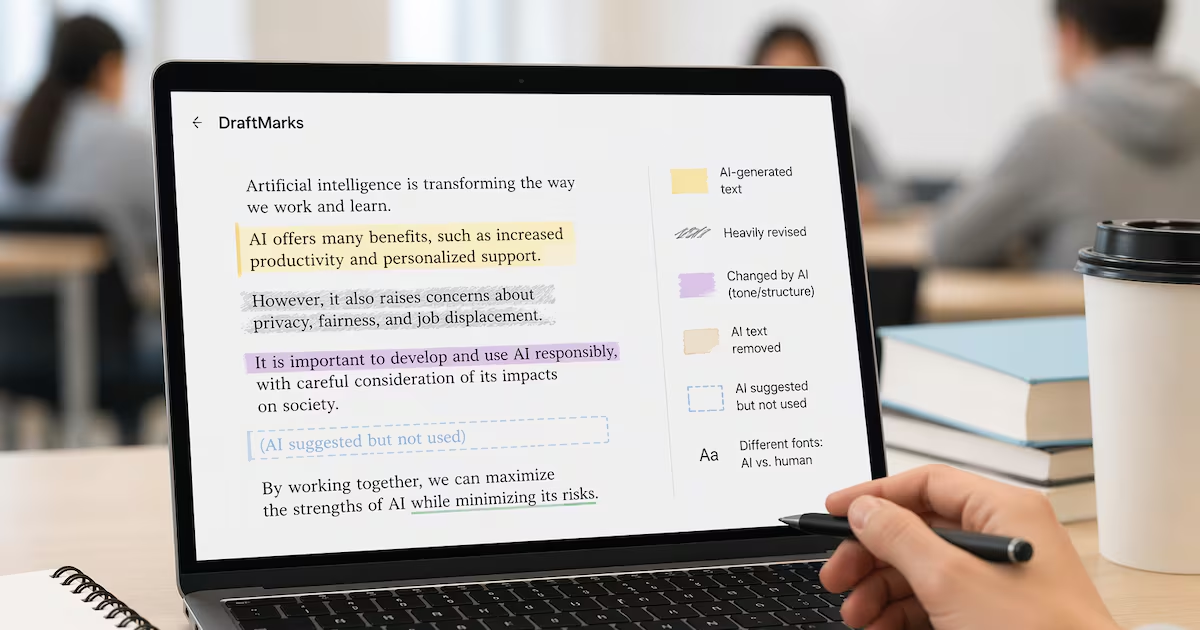

How DraftMarks Tracks AI and Human Edits in Student Writing

DraftMarks is a clear example of this shift. Developed by a research team from Stanford University and the Georgia Institute of Technology, DraftMarks tracks the entire writing process instead of only the finished assignment. It records draft history and interactions with AI, then overlays visual cues directly onto the document. Sentences first generated by AI are marked with “masking tape,” while heavily revised passages show “eraser dust” to signal substantial human editing. If an AI-generated sentence is later deleted, it leaves behind “adhesive residue.” Even AI suggestions that a student read but rejected appear as “ghost text.” Together, these cues let teachers see not just where AI was used, but how students evaluated, edited or discarded its suggestions. In other words, DraftMarks tracks AI edits while foregrounding human judgment and revision decisions, rather than treating any AI use as automatically suspect.
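The article does not say how DraftMarks decides which cue to draw, so the following is only a hypothetical sketch of the idea: given a per-sentence provenance record, map it to the four cues described above. Everything here is an assumption made for illustration, not DraftMarks code: the `SentenceRecord` fields, the `marks_for` function, the use of Python's `difflib` similarity ratio as a stand-in for "how much a sentence was revised," and the 0.5 cutoff for a "heavy" edit.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import List, Optional

# Hypothetical cutoff for "heavily revised"; an assumption, not a DraftMarks value.
HEAVY_EDIT_THRESHOLD = 0.5


@dataclass
class SentenceRecord:
    """Hypothetical provenance record for one sentence in a draft history."""
    first_text: str              # text when the sentence first appeared (or was suggested)
    current_text: Optional[str]  # None if the sentence is absent from the final draft
    from_ai: bool                # True if the first version came from an AI suggestion
    accepted: bool = True        # False if an AI suggestion was shown but never inserted


def edit_ratio(before: str, after: str) -> float:
    """Fraction of the text that changed: 0.0 means identical, 1.0 means nothing shared."""
    return 1.0 - SequenceMatcher(None, before, after).ratio()


def marks_for(rec: SentenceRecord) -> List[str]:
    """Map a provenance record to the visual cues named in the article (sketch only)."""
    # A suggestion the student saw but rejected never entered the draft.
    if rec.from_ai and not rec.accepted:
        return ["ghost text"]
    # An AI sentence that was inserted and later deleted leaves residue;
    # a deleted human sentence gets no cue in this sketch.
    if rec.current_text is None:
        return ["adhesive residue"] if rec.from_ai else []
    marks = []
    if rec.from_ai:
        marks.append("masking tape")
    if edit_ratio(rec.first_text, rec.current_text) >= HEAVY_EDIT_THRESHOLD:
        marks.append("eraser dust")
    return marks


if __name__ == "__main__":
    history = [
        SentenceRecord("AI wrote this sentence.", "AI wrote this sentence.", from_ai=True),
        SentenceRecord("AI wrote this too.", None, from_ai=True),
        SentenceRecord("A rejected AI idea.", None, from_ai=True, accepted=False),
        SentenceRecord("My first try.", "A fully reworked claim with new evidence.", from_ai=False),
    ]
    for rec in history:
        print(rec.current_text or rec.first_text, "->", marks_for(rec))
```

Returning a list rather than a single label lets one sentence carry two cues in this sketch, for example an AI-drafted sentence that the student later rewrote substantially, which reflects the article's point that origin and revision are both worth showing.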

AI Checker Tools Are Becoming Writing Coaches, Not Just Police

Modern AI checker tools increasingly act as coaches that support content quality and originality, rather than as mere detectors of misconduct. They review language use, syntax, flow, consistency and overall structure, helping writers notice repetition, awkward phrasing or unclear sections that are easy to overlook. Instead of replacing human effort, these tools encourage more intentional editing: students can see where a paragraph feels stiff, a sentence is too dense, or a transition does not quite work, then decide what to keep, rewrite or clarify. This mirrors how readers judge writing in the real world, favoring content that is crisp, well-structured and genuinely fresh. In classrooms, it means AI in academic writing is slowly moving from a secret shortcut to a transparent, supervised collaborator that can surface weaknesses, suggest improvements and give students a clearer view of their own style and thinking.

Surveillance, Privacy and the Future of Document Workflows

Tracking every keystroke and AI interaction raises serious ethical and privacy questions. Tools that log detailed edit histories and AI usage patterns produce a rich record of how individuals think, struggle and learn. In education, that record can help teachers assess revision habits and originality, but it can also feel like surveillance if students are not clearly told what is tracked and why. Similar capabilities could appear in workplace document systems, where AI checker tools and DraftMarks-style timelines might influence performance reviews, compliance audits or content approval workflows. Managers might, for example, examine which sections were AI-generated, how thoroughly employees edited them, and whether sensitive information was handled carefully. This makes transparency critical: institutions need clear policies on data retention, access and acceptable AI use, so that tracking AI edits supports trust and learning rather than fear and micromanagement.

Using Education AI Assistants Responsibly as a Student

For students, the rise of process-aware tools changes what responsible AI use looks like. First, know your course policies on AI in academic writing and follow them closely; tools like DraftMarks can show exactly how you used AI, so secrecy is both risky and unnecessary. Use AI assistants for brainstorming, outlining, or feedback on clarity and structure rather than to generate entire answers. When you accept an AI suggestion, rework it in your own words and add your own analysis and examples. Treat AI checker tools as an extra set of eyes: run drafts through them to catch unclear sentences, weak structure or repetitive phrasing, then apply what you learn to future assignments. Finally, keep your own copies of your drafts and be mindful of what personal information you enter into any system. The goal is to improve your writing and thinking, not to outsource them.