From software AI to physical AI: when intelligence grows a body

Most Malaysians first met “robot dogs” through viral clips of metal canines dancing or patrolling malls. Behind the memes, a deeper shift is happening in factories worldwide: the rise of physical AI robots. Unlike pure software AI, which lives in the cloud and manipulates data, physical or embodied AI is intelligence housed in a physical machine that can perceive, reason and act in the real world. Think of three layers working together: perception (cameras, LIDAR and other sensors), reasoning (AI models deciding what to do) and action (motors, robotic legs or arms carrying it out). Traditional factory automation robots follow fixed scripts and struggle when something changes, like a misaligned box. Physical AI allows robots to adapt on the fly, learning from new situations instead of waiting for engineers to reprogram every step. That same adaptability is what will separate tomorrow’s industrial robot dogs from today’s viral gadgets.
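For readers who like to see the idea in code, the three layers can be sketched as a tiny sense-decide-act loop. This is a minimal illustration only, not how any particular robot is built. The names `Observation`, `perceive`, `decide`, `act` and `control_step`, and the 2 cm tolerance, are all assumptions made for the example. A simple threshold rule stands in for the AI model that a real system would use in the reasoning layer.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """What the perception layer reports (hypothetical field for this sketch)."""
    box_offset_cm: float  # how far the box sits from its expected position


def perceive(sensor_reading: float) -> Observation:
    # Perception: turn a raw sensor value into a structured observation.
    return Observation(box_offset_cm=sensor_reading)


def decide(obs: Observation, tolerance_cm: float = 2.0) -> str:
    # Reasoning: choose an action from what was observed.
    # A threshold rule stands in here for a learned model (assumption).
    if abs(obs.box_offset_cm) <= tolerance_cm:
        return "pick"
    return "realign_then_pick"


def act(command: str) -> str:
    # Action: a real robot would drive motors here; this sketch only reports the command.
    return f"executing: {command}"


def control_step(sensor_reading: float) -> str:
    # One pass through the three layers: perceive, then decide, then act.
    return act(decide(perceive(sensor_reading)))
```

A fixed-script robot would always run the "pick" branch. In this sketch, a misaligned box (say, `control_step(7.0)`) leads to a realignment first, which is the kind of adjustment the paragraph above describes.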

Factory pioneers: how embodied intelligence is leaving the production line

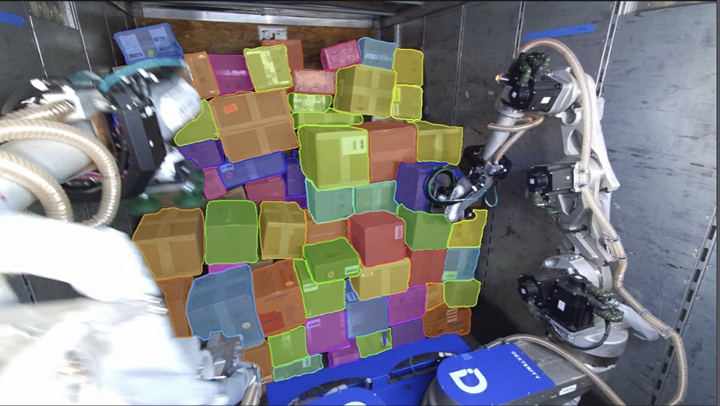

Chinese manufacturers are already weaving embodied intelligence into everyday automation. Hikrobot, for example, has built a full-stack capability that combines “eyes, feet and hands” by integrating machine vision, articulated robots and mobile robots into one platform. Its machine vision products have shipped over 10 million units, and more than 180,000 mobile robots are working on factory and warehouse floors, supported by industrial software used over 600,000 times by customers worldwide. The company’s concept of “embodied intelligent manufacturing” aims to flip the traditional model from “humans adapting to machines” toward “machines adapting to the environment” by using flexible, multi-tasking robots and scene-based applications that can be replicated quickly. At the same time, firms like NEURA Robotics are using cloud tools and high-fidelity simulation to close the data gap in robotics, so fleets of robots can share experience instead of learning everything the hard way. These advances in embodied intelligence are exactly what mobile platforms like quadruped robots will inherit next.

Robot Ops and the rise of physical AI platforms

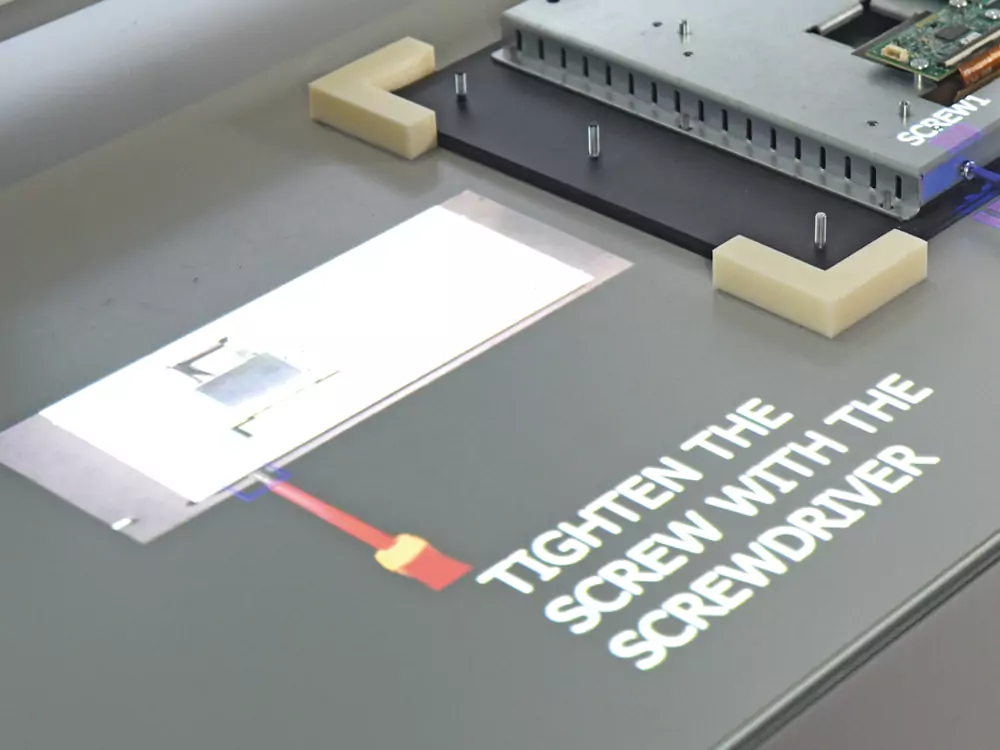

In computing, mass adoption only took off once platforms like Windows and Android standardised how software and hardware work together. Something similar is now happening in physical AI. At Hannover Messe, Zoomlion unveiled Robot Ops, an embodied intelligence operating system built around the idea of “Data, Software, and Agents.” Rather than treating each robot as a one-off project, Robot Ops offers four modules—basic tools, imitation learning, reinforcement learning and task orchestration—to manage the full lifecycle from data collection and model training to simulation, deployment and maintenance. The aim is to cut technical barriers, make it easier to move solutions between scenarios, and provide strong lifecycle management so robots can keep improving after installation. Crucially, Robot Ops is designed to support everything from humanoids and industrial arms to construction machinery and autonomous driving. In the future, industrial robot dogs are likely to run on similar physical AI platforms, gaining app-like capabilities instead of being locked into single-purpose patrol routines.

From viral robot dogs to real workhorses in Malaysian industry

Once these physical AI platforms mature, quadruped robots will gain sharper perception, better manipulation and smarter decision-making. Instead of just walking a fixed patrol route, an industrial robot dog could navigate cluttered warehouses, recognise damaged pallets, read labels and coordinate with human workers or other factory automation robots. In ports, such robots could inspect containers and hard-to-reach areas, feeding real-time data into logistics systems. On large university or industrial campuses, the same platform could be repurposed for security sweeps in the morning and infrastructure inspection at night, checking pipes, cables or rooftop equipment. Malaysia’s push into Industry 4.0 and smart factories makes these use cases particularly relevant, as embodied intelligence promises flexibility for high-mix, medium-volume production. As companies scale physical AI in manufacturing, component volumes should rise and costs fall, making next-generation industrial robot dogs more affordable and capable for local operators, from Penang’s electronics hubs to Johor’s logistics corridors.

Risks, regulation and skills: what Malaysia must prepare for

Physical AI is powerful but far from plug-and-play. Experts note that getting robots to handle unstructured environments, such as uneven floors, mixed boxes or crowded loading bays, is as challenging as autonomous driving. Systems must combine reliable perception, motion planning, force control, anomaly detection and safety checks, all at production speed. That raises questions for Malaysia around regulation, standards and workforce impact. Clear rules will be needed on where physical AI robots can operate, how they interact with humans and who is accountable when something fails. At the same time, the country will need technicians and engineers who understand AI robotics, from configuring sensors and maintaining quadruped platforms to integrating them with warehouse management or security systems. Early deployments are unlikely to replace workers overnight and will probably handle dangerous, dirty or dull tasks first. Even so, policymakers, universities and industry will have to cooperate to ensure skills and safety keep pace with the march of embodied intelligence.