Frontier AI Models Have Become a Security Control Point

Frontier AI models are no longer just R&D tools; they are becoming security control points that determine who finds vulnerabilities first. Systems like Claude Mythos can scan large, complex codebases and infrastructure stacks at a depth and speed that human teams cannot match. That capability makes access itself a form of security leverage: whoever controls access to the model effectively controls a powerful vulnerability discovery engine. Securing frontier AI is therefore about more than model integrity or prompt filtering. It is also about access control, because the programs that govern Claude Mythos and similar models decide which organizations can apply these capabilities to harden their systems. The result is a growing disparity between defenders with advanced AI assistance and those relying solely on traditional tools, manual reviews, and limited automation.

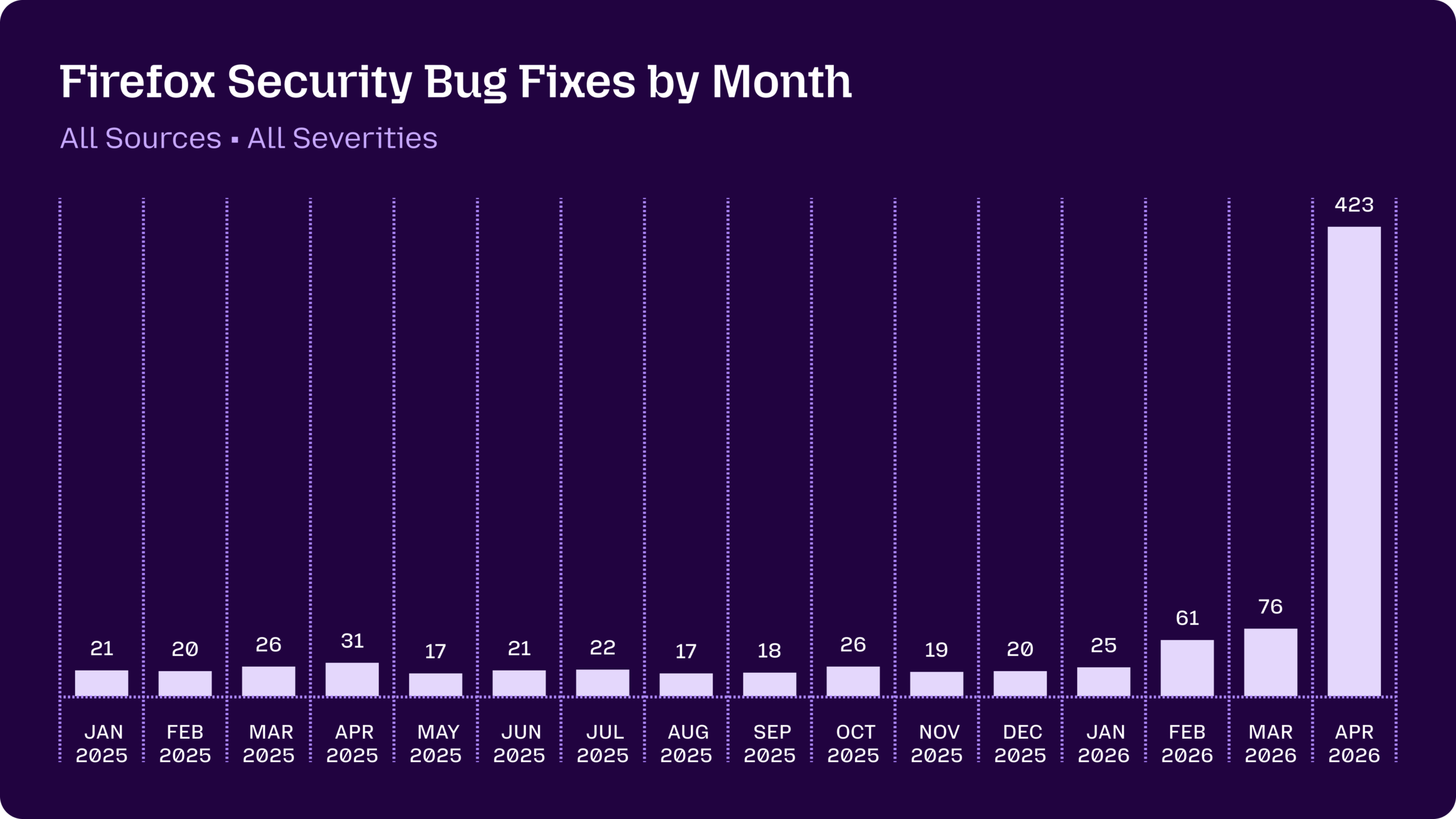

Mozilla’s Claude Mythos Deployment Shows the Scale of the Gap

Mozilla’s recent experience with Claude Mythos Preview illustrates how concentrated access can reshape security outcomes. For most of a year, the number of Firefox security fixes ranged from the teens to the mid-twenties each month. After Anthropic deployed Claude Opus 4.6 to scan the browser, the model uncovered 22 vulnerabilities in a two-week window, including 14 high-severity issues. Then, once Mozilla gained access to Claude Mythos through Project Glasswing, the number of Firefox security fixes spiked to 423 in April, with Mythos alone surfacing 271 issues. Only a handful of these warranted standalone CVEs; the rest were defense-in-depth improvements and fixes in dormant code paths that human teams would not realistically prioritize. This is AI vulnerability discovery at industrial scale. It shows how organizations with access to frontier AI models can compress years of backlog into weeks, leaving others far behind in basic hardening.

When AI Turns ‘Low Severity’ Issues into High-Impact Chains

The real power of frontier AI models lies not just in finding more bugs, but in chaining seemingly minor weaknesses into major attacks. Consider a pharma-focused scenario: an AI system scans a company’s external attack surface, flags a medium-severity flaw in a vendor portal, and then identifies a misconfigured lab integration that provides the needed foothold. Each issue alone scores in the midrange on traditional severity scales and might sit low in a patch queue. Together, they create a direct path to sensitive research data. Early testing of Mythos-tier systems and similar cyber-focused models suggests that their real leap is in rapidly assembling multi-step exploit paths at a scale no human red team can match. This changes how security leaders must think about risk: a backlog of ignored “fives and sixes” can now be weaponized by AI agents faster than defenders can triage it, especially in complex R&D environments.
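To make the chaining idea concrete, the following is a minimal Python sketch, not a description of how Mythos or any real scanner works. It treats each finding as an edge between two attacker positions and searches for loop-free paths from the internet to a sensitive asset. The `Finding` type, the `find_chains` helper, the finding names, the scores, and the ordering of the two pharma findings are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    name: str
    score: float   # CVSS-style base score, 0.0 to 10.0 (illustrative values)
    source: str    # position an attacker needs before exploiting the issue
    target: str    # position the attacker holds afterwards


def find_chains(findings, start, goal):
    """Return every loop-free sequence of findings leading from start to goal."""
    by_source = {}
    for f in findings:
        by_source.setdefault(f.source, []).append(f)

    chains = []

    def walk(position, path, visited):
        if position == goal:
            chains.append(list(path))
            return
        for f in by_source.get(position, []):
            if f.target in visited:
                continue  # skip steps that would revisit a position
            path.append(f)
            walk(f.target, path, visited | {f.target})
            path.pop()

    walk(start, [], {start})
    return chains


# Hypothetical backlog: no single entry would be rated "high" on its own.
findings = [
    Finding("vendor-portal-flaw", 5.4, "internet", "vendor-portal"),
    Finding("lab-integration-misconfig", 6.1, "vendor-portal", "research-data"),
    Finding("stale-tls-config", 3.7, "internet", "marketing-site"),
]

chains = find_chains(findings, "internet", "research-data")
for chain in chains:
    names = " -> ".join(f.name for f in chain)
    peak = max(f.score for f in chain)
    print(f"{names} (highest single score: {peak})")
```

With this sample data the search finds one chain that reaches `research-data`, even though its highest individual score is 6.1. Ranking a backlog by reachable paths rather than by per-issue score is one way a triage process could surface this kind of exposure; the graph model here is deliberately simplified and ignores exploit preconditions, likelihood, and compensating controls.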

The Human Attack Surface and AI-Driven Social Engineering

The disparity does not stop at code and cloud infrastructure. Frontier AI models also expand the human attack surface by industrializing high-quality social engineering. Weaponized large language model agents can generate convincing emails, phone scripts, text messages, and complete social profiles at scale, without the telltale urgency, spelling errors, or clumsy pretexts many people are trained to spot. Future phishing and pretexting campaigns may be slower, more strategic, and more personalized, unfolding over weeks or months. Non-experts armed with powerful models can already produce complete, working exploits; the same capabilities can be applied to crafting psychological exploits that target executives, researchers, and administrators. Traditional security programs focused on systems hardening now need a parallel discipline of human risk management, one that recognizes that AI can systematically probe both technical and human weaknesses and chain them into sophisticated, blended attacks.

Access Concentration: Who Gets Hardened First—and Who Doesn’t

Programs like Anthropic’s Project Glasswing and OpenAI’s Trusted Access for Cyber are explicitly designed to limit the most capable models to vetted defenders. The partner list itself reads like a map of which layers of the technology stack will be hardened earliest: hyperscale cloud providers, operating system maintainers, chipmakers, networking and firewall vendors, endpoint security firms, browser developers, and key infrastructure projects. Their foundational systems form a large share of the world’s shared attack surface. But this access strategy has a second-order effect. Organizations outside these access programs, especially those with complex R&D pipelines, regulated data, or extensive vendor ecosystems, remain comparatively exposed. Meanwhile, other model developers are racing to match these capabilities without imposing the same strict access controls, which means sophisticated tools may reach both defenders and attackers. In this transition period, security leaders need explicit strategies for closing the AI gap: partnering for access, automating backlog reduction, and designing defenses that assume attackers already have Mythos-class capabilities.